Deploying Kubernetes on GCP with kubeadm

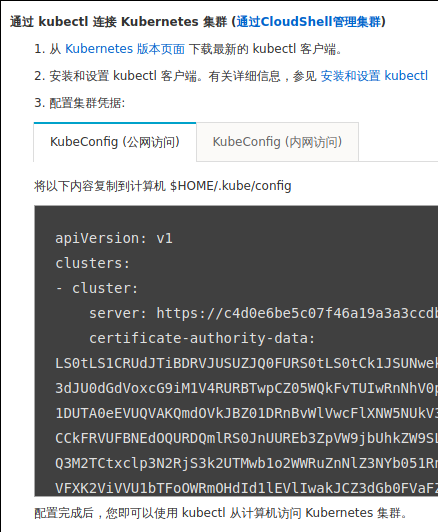

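0. Introduction

I have been preparing for the CKA exam recently, so I needed a Kubernetes cluster to practice on. GCP gives newly registered users a $300 trial credit, so building a practice cluster there with kubeadm seemed both convenient and cheap.

The whole process turned out to be fairly approachable. kubeadm makes the installation nearly foolproof, so most of the difficulty lies in getting past network restrictions and getting used to the GCP commands. The steps are recorded in detail below.

1. Preparation

Everything below assumes that you already have a working proxy for getting past network restrictions (for policy reasons, please search for the details yourself) and that you have already registered a GCP account. Link: GCP

1.1 Installing and configuring gcloud

First install the GCP command-line client, gcloud, on your local machine. Reference: gcloud

For well-known reasons, gcloud only works properly here when it goes through a proxy. The following commands configure a SOCKS5 proxy:

    # gcloud config set proxy/type PROXY_TYPE
    $ gcloud config set proxy/type socks5
    # gcloud config set proxy/address PROXY_IP_ADDRESS
    $ gcloud config set proxy/address 127.0.0.1
    # gcloud config set proxy/port PROXY_PORT
    $ gcloud config set proxy/port 1080

If this is your first time using GCP, you need to initialize it first. Initialization asks several interactive questions, and the default answers are fine. Because the proxy was already configured above, the proxy-related prompts can be skipped.

Note: when choosing a region, us-west2 is recommended. In most GCP regions a trial user can currently create at most four VM instances, and only a few regions, us-west2 among them, allow six. A proper Kubernetes setup needs three masters and three workers, so four instances are not quite enough. If you only want to try things out, two nodes (one master, one worker) are sufficient.

    $ gcloud init
    Welcome! This command will take you through the configuration of gcloud.
    Settings from your current configuration [profile-name] are:
    core:
      disable_usage_reporting: 'True'
    Pick configuration to use:
     [1] Re-initialize this configuration [profile-name] with new settings
     [2] Create a new configuration
     [3] Switch to and re-initialize existing configuration: [default]
    Please enter your numeric choice: 3
    Your current configuration has been set to: [default]
    You can skip diagnostics next time by using the following flag:
      gcloud init --skip-diagnostics
    Network diagnostic detects and fixes local network connection issues.
    Checking network connection...done.
    ERROR: Reachability Check failed.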
    Cannot reach https://www.google.com (ServerNotFoundError)
    Cannot reach https://accounts.google.com (ServerNotFoundError)
    Cannot reach https://cloudresourcemanager.googleapis.com/v1beta1/projects (ServerNotFoundError)
    Cannot reach https://www.googleapis.com/auth/cloud-platform (ServerNotFoundError)
    Cannot reach https://dl.google.com/dl/cloudsdk/channels/rapid/components-2.json (ServerNotFoundError)
    Network connection problems may be due to proxy or firewall settings.
    Current effective Cloud SDK network proxy settings:
      type = socks5
      host = PROXY_IP_ADDRESS
      port = 1080
      username = None
      password = None
    What would you like to do?
     [1] Change Cloud SDK network proxy properties
     [2] Clear all gcloud proxy properties
     [3] Exit
    Please enter your numeric choice: 1
    Select the proxy type:
     [1] HTTP
     [2] HTTP_NO_TUNNEL
     [3] SOCKS4
     [4] SOCKS5
    Please enter your numeric choice: 4
    Enter the proxy host address: 127.0.0.1
    Enter the proxy port: 1080
    Is your proxy authenticated (y/N)? N
    Cloud SDK proxy properties set.
    Rechecking network connection...done.
    Reachability Check now passes.
    Network diagnostic (1/1 checks) passed.
    You must log in to continue. Would you like to log in (Y/n)? y
    Your browser has been opened to visit:
      https://accounts.google.com/o/oauth2/auth?redirect_uri=......
    Created new window in existing browser session.
    Updates are available for some Cloud SDK components. To install them,
    please run:
      $ gcloud components update
    You are logged in as: [<gmail account>].
    Pick cloud project to use:
     [1] <project-id>
     [2] Create a new project
    Please enter numeric choice or text value (must exactly match list item): 1
    Your current project has been set to: [<project-id>].
    Your project default Compute Engine zone has been set to [us-west2-b].
    You can change it by running [gcloud config set compute/zone NAME].
    Your project default Compute Engine region has been set to [us-west2].
    You can change it by running [gcloud config set compute/region NAME].
    Created a default .boto configuration file at [/home/<username>/.boto].
    See this file and [https://cloud.google.com/storage/docs/gsutil/commands/config] for more information about configuring Google Cloud Storage.
    Your Google Cloud SDK is configured and ready to use!
    * Commands that require authentication will use <gmail account> by default
    * Commands will reference project `<project-id>` by default
    * Compute Engine commands will use region `us-west2` by default
    * Compute Engine commands will use zone `us-west2-b` by default
    Run `gcloud help config` to learn how to change individual settings
    This gcloud configuration is called [default]. You can create additional configurations if you work with multiple accounts and/or projects.
    Run `gcloud topic configurations` to learn more.
    Some things to try next:
    * Run `gcloud --help` to see the Cloud Platform services you can interact with. And run `gcloud help COMMAND` to get help on any gcloud command.
    * Run `gcloud topic -h` to learn about advanced features of the SDK like arg files and output formatting

1.2 Creating GCP resources

Next, create the GCP resources that Kubernetes needs.

The first step is to create a network and a subnet.

    $ gcloud compute networks create cka --subnet-mode custom
    $ gcloud compute networks subnets create kubernetes \
        --network cka \
        --range 10.240.0.0/24

Next, create firewall rules that control which ports are open. There are two rules, one external and one internal. The external rule only needs to allow SSH, ping, and access to the Kubernetes API server:

    $ gcloud compute firewall-rules create cka-external \
        --allow tcp:22,tcp:6443,icmp \
        --network cka \
        --source-ranges 0.0.0.0/0

The internal rule only has to let the GCP VM address range and the pod address range used later reach each other. Because calico will be used as the network plugin, allowing TCP, UDP, and ICMP is not enough: BGP is needed as well. GCP firewall rules have no BGP option, so this rule allows all protocols.

    $ gcloud compute firewall-rules create cka-internal \
        --network cka \
        --allow=all \
        --source-ranges 192.168.0.0/16,10.240.0.0/16

Finally, create the GCP VM instances.

    $ gcloud compute instances create controller-1 \
        --async \
        --boot-disk-size 200GB \
        --can-ip-forward \
        --image-family ubuntu-1804-lts \
        --image-project ubuntu-os-cloud \
        --machine-type n1-standard-1 \
        --private-network-ip 10.240.0.11 \
        --scopes compute-rw,storage-ro,service-management,service-control,logging-write,monitoring \
        --subnet kubernetes \
        --tags cka,controller
    $ gcloud compute instances create worker-1 \
        --async \
        --boot-disk-size 200GB \
        --can-ip-forward \
        --image-family ubuntu-1804-lts \
        --image-project ubuntu-os-cloud \
        --machine-type n1-standard-1 \
        --private-network-ip 10.240.0.21 \
        --scopes compute-rw,storage-ro,service-management,service-control,logging-write,monitoring \
        --subnet kubernetes \
        --tags cka,worker

2. Master node configuration

Log in to controller-1 with gcloud. On first use, gcloud generates an SSH key pair:

    $ gcloud compute ssh controller-1
    WARNING: The public SSH key file for gcloud does not exist.
    WARNING: The private SSH key file for gcloud does not exist.
    WARNING: You do not have an SSH key for gcloud.
    WARNING: SSH keygen will be executed to generate a key.
    Generating public/private rsa key pair.
    Enter passphrase (empty for no passphrase):
    Enter same passphrase again:
    Your identification has been saved in /home/<username>/.ssh/google_compute_engine.
    Your public key has been saved in /home/<username>/.ssh/google_compute_engine.pub.
    The key fingerprint is:
    SHA256:jpaZtzz42t7FjB1JV06GeVHhXVi12LF/a+lfl7TK2pw <username>@<username>
    The key's randomart image is:
    +---[RSA 2048]----+
    | O&|
    | B=B|
    | ...*o|
    | . o .|
    | S o .o|
    | * = .. *|
    | *.o . = *o|
    | ..+.o .+ = o|
    | .+*....E .o|
    +----[SHA256]-----+
    Updating project ssh metadata...Updated [https://www.googleapis.com/compute/v1/projects/<project-id>].
    Updating project ssh metadata...done.
    Waiting for SSH key to propagate.
    Warning: Permanently added 'compute.2329485573714771968' (ECDSA) to the list of known hosts.
    Welcome to Ubuntu 18.04.1 LTS (GNU/Linux 4.15.0-1025-gcp x86_64)
     * Documentation: https://help.ubuntu.com
     * Management: https://landscape.canonical.com
     * Support: https://ubuntu.com/advantage
    System information as of Wed Dec 5 03:05:31 UTC 2018
    System load: 0.0          Processes: 87
    Usage of /: 1.2% of 96.75GB   Users logged in: 0
    Memory usage: 5%          IP address for ens4: 10.240.0.11
    Swap usage: 0%
    Get cloud support with Ubuntu Advantage Cloud Guest:
      http://www.ubuntu.com/business/services/cloud
    0 packages can be updated.
    0 updates are security updates.

Once the key exists, you can also log in with plain ssh, using the private key that gcloud generated (not the .pub file) and the instance's external IP:

    $ ssh -l <user-name> -i ~/.ssh/google_compute_engine 35.236.126.174

Install kubeadm, docker, kubelet, and kubectl. Start with docker and the Kubernetes apt source:

    $ sudo apt update
    $ sudo apt upgrade -y
    $ sudo apt-get install -y docker.io
    $ sudo vim /etc/apt/sources.list.d/kubernetes.list

Add this line to the file:

    deb http://apt.kubernetes.io/ kubernetes-xenial main

Then import the repository key and install the packages:

    $ curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | sudo apt-key add -
    OK
    $ sudo apt update
    $ sudo apt-get install -y \
        kubeadm=1.12.2-00 kubelet=1.12.2-00 kubectl=1.12.2-00

Initialize the cluster with kubeadm:

    $ sudo kubeadm init --pod-network-cidr 192.168.0.0/16

The output of kubeadm init includes instructions for copying /etc/kubernetes/admin.conf to $HOME/.kube/config. Follow them before running the kubectl commands below.

Configure the calico network plugin:

    $ wget https://tinyurl.com/yb4xturm \
        -O rbac-kdd.yaml
    $ wget https://tinyurl.com/y8lvqc9g \
        -O calico.yaml
    $ kubectl apply -f rbac-kdd.yaml
    $ kubectl apply -f calico.yaml

Configure bash completion for kubectl:

    $ source <(kubectl completion bash)
    $ echo "source <(kubectl completion bash)" >> ~/.bashrc

3. Worker node configuration

I was a bit lazy here: the worker node gets exactly the same packages as the master node. Feel free to leave out the ones you do not need.

    $ sudo apt-get update && sudo apt-get upgrade -y
    $ sudo apt-get install -y docker.io
    $ sudo vim /etc/apt/sources.list.d/kubernetes.list

Add this line to the file:

    deb http://apt.kubernetes.io/ kubernetes-xenial main

Then import the repository key and install the packages:

    $ curl -s \
        https://packages.cloud.google.com/apt/doc/apt-key.gpg \
        | sudo apt-key add -
    $ sudo apt-get update
    $ sudo apt-get install -y \
        kubeadm=1.12.2-00 kubelet=1.12.2-00 kubectl=1.12.2-00

What if you can no longer find the join command that kubeadm init printed, or the bootstrap token has expired? The fix is to list or create a token and recompute the CA certificate hash on the master node:

    $ sudo kubeadm token list
    TOKEN                     TTL   EXPIRES                USAGES                   DESCRIPTION
    27eee4.6e66ff60318da929   23h   2017-11-03T13:27:33Z   authentication,signing   The default bootstrap token generated by 'kubeadm init'....
    $ sudo kubeadm token create
    27eee4.6e66ff60318da929
    $ openssl x509 -pubkey \
        -in /etc/kubernetes/pki/ca.crt | openssl rsa \
        -pubin -outform der 2>/dev/null | openssl dgst \
        -sha256 -hex | sed 's/^.* //'
    6d541678b05652e1fa5d43908e75e67376e994c3483d6683f2a18673e5d2a1b0

Finally, run kubeadm join on the worker node, pointing it at the master's API server (10.240.0.11:6443 in this setup):

    $ sudo kubeadm join \
        --token 27eee4.6e66ff60318da929 \
        10.240.0.11:6443 \
        --discovery-token-ca-cert-hash \
        sha256:6d541678b05652e1fa5d43908e75e67376e994c3483d6683f2a18673e5d2a1b0

4. References

- GCP Cloud SDK installation guide
- Configuring Cloud SDK for use behind a proxy/firewall
- Kubernetes the hard way
- Linux Academy: Certified Kubernetes Administrator (CKA)
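Appendix: the token recovery steps in section 3 can be wrapped in a small script. The following is only a sketch under stated assumptions, not part of kubeadm: the function names `ca_cert_hash` and `build_join_command` are hypothetical, the default certificate path is the one used in section 3, and the token check assumes kubeadm's documented bootstrap token format of six characters, a dot, and sixteen characters from `[a-z0-9]`.

```shell
#!/bin/sh
# Hypothetical helpers for rebuilding a kubeadm join command.
# They only wrap the manual steps from section 3.

# Print the SHA-256 hash of the public key in a CA certificate,
# using the same openssl pipeline as in section 3.
# $1: certificate path (defaults to the kubeadm CA on the master node)
ca_cert_hash() {
  openssl x509 -pubkey -in "${1:-/etc/kubernetes/pki/ca.crt}" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Print a join command assembled from its three parts.
# $1: bootstrap token, $2: API server host:port, $3: hex CA hash
# Returns 1 if the token does not look like a bootstrap token.
build_join_command() {
  if ! printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'; then
    echo "invalid bootstrap token: $1" >&2
    return 1
  fi
  printf 'sudo kubeadm join --token %s %s --discovery-token-ca-cert-hash sha256:%s\n' \
    "$1" "$2" "$3"
}
```

On the master node, something like `build_join_command "$(sudo kubeadm token create)" 10.240.0.11:6443 "$(sudo sh -c '. ./join.sh; ca_cert_hash')"` would print the command to run on the worker, assuming the functions are saved in a file named `join.sh` (a made-up name). kubeadm can also print a ready-made join command itself with `kubeadm token create --print-join-command`, which is usually the simpler route.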