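Filebeat-1.3.1 Installation and Setup (Illustrated Walkthrough) (for a multi-node ELK cluster, installing on one node is enough) (using Console Output as the example)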

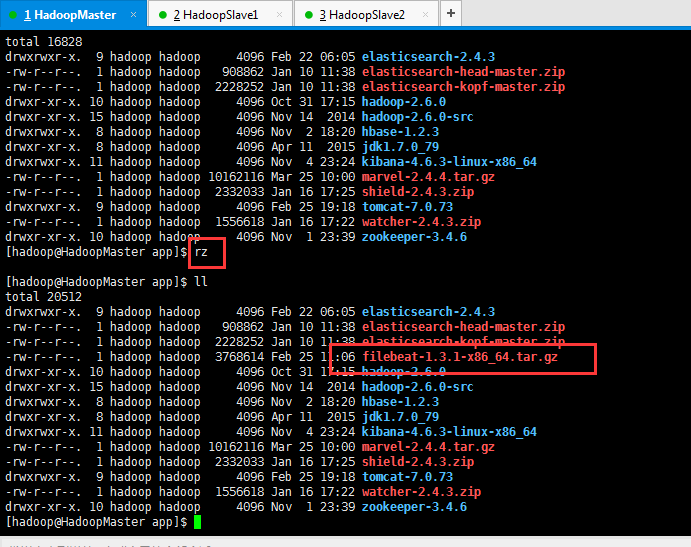

Building on this, with ELK (Elasticsearch, Logstash, and Kibana) already installed, we now go on to install Filebeat.

Filebeat is a lightweight log collection tool written in Go.

The machines in my cluster are: HadoopMaster (192.168.80.10), HadoopSlave1 (192.168.80.11), and HadoopSlave2 (192.168.80.12).

Step 1. Upload the filebeat-1.3.1-x86_64.tar.gz archive

    total 16828
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 22 06:05 elasticsearch-2.4.3
    -rw-r--r--.  1 hadoop hadoop   908862 Jan 10 11:38 elasticsearch-head-master.zip
    -rw-r--r--.  1 hadoop hadoop  2228252 Jan 10 11:38 elasticsearch-kopf-master.zip
    drwxr-xr-x. 10 hadoop hadoop     4096 Oct 31 17:15 hadoop-2.6.0
    drwxr-xr-x. 15 hadoop hadoop     4096 Nov 14  2014 hadoop-2.6.0-src
    drwxrwxr-x.  8 hadoop hadoop     4096 Nov  2 18:20 hbase-1.2.3
    drwxr-xr-x.  8 hadoop hadoop     4096 Apr 11  2015 jdk1.7.0_79
    drwxrwxr-x. 11 hadoop hadoop     4096 Nov  4 23:24 kibana-4.6.3-linux-x86_64
    -rw-r--r--.  1 hadoop hadoop 10162116 Mar 25 10:00 marvel-2.4.4.tar.gz
    -rw-r--r--.  1 hadoop hadoop  2332033 Jan 16 17:25 shield-2.4.3.zip
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 25 19:18 tomcat-7.0.73
    -rw-r--r--.  1 hadoop hadoop  1556618 Jan 16 17:22 watcher-2.4.3.zip
    drwxr-xr-x. 10 hadoop hadoop     4096 Nov  1 23:39 zookeeper-3.4.6
    [hadoop@HadoopMaster app]$ rz

    [hadoop@HadoopMaster app]$ ll
    total 20512
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 22 06:05 elasticsearch-2.4.3
    -rw-r--r--.  1 hadoop hadoop   908862 Jan 10 11:38 elasticsearch-head-master.zip
    -rw-r--r--.  1 hadoop hadoop  2228252 Jan 10 11:38 elasticsearch-kopf-master.zip
    -rw-r--r--.  1 hadoop hadoop  3768614 Feb 25 11:06 filebeat-1.3.1-x86_64.tar.gz
    drwxr-xr-x. 10 hadoop hadoop     4096 Oct 31 17:15 hadoop-2.6.0
    drwxr-xr-x. 15 hadoop hadoop     4096 Nov 14  2014 hadoop-2.6.0-src
    drwxrwxr-x.  8 hadoop hadoop     4096 Nov  2 18:20 hbase-1.2.3
    drwxr-xr-x.  8 hadoop hadoop     4096 Apr 11  2015 jdk1.7.0_79
    drwxrwxr-x. 11 hadoop hadoop     4096 Nov  4 23:24 kibana-4.6.3-linux-x86_64
    -rw-r--r--.  1 hadoop hadoop 10162116 Mar 25 10:00 marvel-2.4.4.tar.gz
    -rw-r--r--.  1 hadoop hadoop  2332033 Jan 16 17:25 shield-2.4.3.zip
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 25 19:18 tomcat-7.0.73
    -rw-r--r--.  1 hadoop hadoop  1556618 Jan 16 17:22 watcher-2.4.3.zip
    drwxr-xr-x. 10 hadoop hadoop     4096 Nov  1 23:39 zookeeper-3.4.6
    [hadoop@HadoopMaster app]$

Step 2. Extract the filebeat-1.3.1-x86_64.tar.gz archive

    [hadoop@HadoopMaster app]$ tar -zxvf filebeat-1.3.1-x86_64.tar.gz
    filebeat-1.3.1-x86_64/
    filebeat-1.3.1-x86_64/filebeat
    filebeat-1.3.1-x86_64/filebeat.template.json
    filebeat-1.3.1-x86_64/filebeat.yml
    [hadoop@HadoopMaster app]$ ll
    total 20516
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 22 06:05 elasticsearch-2.4.3
    -rw-r--r--.  1 hadoop hadoop   908862 Jan 10 11:38 elasticsearch-head-master.zip
    -rw-r--r--.  1 hadoop hadoop  2228252 Jan 10 11:38 elasticsearch-kopf-master.zip
    drwxr-xr-x.  2 hadoop hadoop     4096 Sep 15  2016 filebeat-1.3.1-x86_64
    -rw-r--r--.  1 hadoop hadoop  3768614 Feb 25 11:06 filebeat-1.3.1-x86_64.tar.gz
    drwxr-xr-x. 10 hadoop hadoop     4096 Oct 31 17:15 hadoop-2.6.0
    drwxr-xr-x. 15 hadoop hadoop     4096 Nov 14  2014 hadoop-2.6.0-src
    drwxrwxr-x.  8 hadoop hadoop     4096 Nov  2 18:20 hbase-1.2.3
    drwxr-xr-x.  8 hadoop hadoop     4096 Apr 11  2015 jdk1.7.0_79
    drwxrwxr-x. 11 hadoop hadoop     4096 Nov  4 23:24 kibana-4.6.3-linux-x86_64
    -rw-r--r--.  1 hadoop hadoop 10162116 Mar 25 10:00 marvel-2.4.4.tar.gz
    -rw-r--r--.  1 hadoop hadoop  2332033 Jan 16 17:25 shield-2.4.3.zip
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 25 19:18 tomcat-7.0.73
    -rw-r--r--.  1 hadoop hadoop  1556618 Jan 16 17:22 watcher-2.4.3.zip
    drwxr-xr-x. 10 hadoop hadoop     4096 Nov  1 23:39 zookeeper-3.4.6
    [hadoop@HadoopMaster app]$

Step 3. Delete the filebeat-1.3.1-x86_64.tar.gz archive and make sure the extracted directory is owned by the hadoop user (in the listing below it already is).

    [hadoop@HadoopMaster app]$ rm filebeat-1.3.1-x86_64.tar.gz
    [hadoop@HadoopMaster app]$ ll
    total 16832
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 22 06:05 elasticsearch-2.4.3
    -rw-r--r--.  1 hadoop hadoop   908862 Jan 10 11:38 elasticsearch-head-master.zip
    -rw-r--r--.  1 hadoop hadoop  2228252 Jan 10 11:38 elasticsearch-kopf-master.zip
    drwxr-xr-x.  2 hadoop hadoop     4096 Sep 15  2016 filebeat-1.3.1-x86_64
    drwxr-xr-x. 10 hadoop hadoop     4096 Oct 31 17:15 hadoop-2.6.0
    drwxr-xr-x. 15 hadoop hadoop     4096 Nov 14  2014 hadoop-2.6.0-src
    drwxrwxr-x.  8 hadoop hadoop     4096 Nov  2 18:20 hbase-1.2.3
    drwxr-xr-x.  8 hadoop hadoop     4096 Apr 11  2015 jdk1.7.0_79
    drwxrwxr-x. 11 hadoop hadoop     4096 Nov  4 23:24 kibana-4.6.3-linux-x86_64
    -rw-r--r--.  1 hadoop hadoop 10162116 Mar 25 10:00 marvel-2.4.4.tar.gz
    -rw-r--r--.  1 hadoop hadoop  2332033 Jan 16 17:25 shield-2.4.3.zip
    drwxrwxr-x.  9 hadoop hadoop     4096 Feb 25 19:18 tomcat-7.0.73
    -rw-r--r--.  1 hadoop hadoop  1556618 Jan 16 17:22 watcher-2.4.3.zip
    drwxr-xr-x. 10 hadoop hadoop     4096 Nov  1 23:39 zookeeper-3.4.6
    [hadoop@HadoopMaster app]$

Step 4. Get to know the Filebeat directory layout

Here Filebeat is installed only on HadoopMaster, as an example.

    [hadoop@HadoopMaster app]$ cd filebeat-1.3.1-x86_64/
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ ll
    total 11116
    -rwxr-xr-x. 1 hadoop hadoop 11354200 Sep 15  2016 filebeat
    -rw-r--r--. 1 hadoop hadoop      814 Sep 15  2016 filebeat.template.json
    -rw-r--r--. 1 hadoop hadoop    17212 Sep 15  2016 filebeat.yml
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$

Step 5. Edit the filebeat.yml configuration file

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ vim filebeat.yml

The default path is /var/log/*.log, which means Filebeat watches every file ending in .log directly inside /var/log.

If the path is instead configured with a subdirectory wildcard, for example /var/log/*/*.log, Filebeat only looks for files ending in ".log" in the subdirectories of /var/log, and does not pick up the ".log" files in /var/log itself.

Note: if the logs you collect need no parsing at all, Filebeat can ship them straight into Elasticsearch.

In my setup, I use the following architecture:

Architecture design 3: filebeat (1.3) (3 machines) --> redis --> logstash (parse) --> es cluster --> kibana --> nginx (optional)

(For learning purposes, this is the route I am taking for now.)

So I comment out the default output (Filebeat sending what it collects to Elasticsearch). You can restore it later. As the output further down shows, the watched path is set to /home/hadoop/app.log and the console output is enabled.

Start Filebeat

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ ./filebeat -c filebeat.yml

With the configuration file edited, I open a second terminal window on HadoopMaster (since that is where Filebeat is installed).

    [hadoop@HadoopMaster ~]$ pwd
    /home/hadoop
    [hadoop@HadoopMaster ~]$ ll
    total 40
    drwxrwxr-x. 11 hadoop hadoop 4096 Mar 26 18:49 app
    drwxrwxr-x.  7 hadoop hadoop 4096 Mar 25 06:34 data
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Desktop
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Documents
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Downloads
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Music
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Pictures
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Public
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Templates
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Videos
    [hadoop@HadoopMaster ~]$ touch app.log
    [hadoop@HadoopMaster ~]$ echo My name is zhouls >> app.log
    [hadoop@HadoopMaster ~]$ more app.log
    My name is zhouls
    [hadoop@HadoopMaster ~]$

Immediately, the first window (where Filebeat is running) prints the event:

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ ll
    total 11116
    -rwxr-xr-x. 1 hadoop hadoop 11354200 Sep 15  2016 filebeat
    -rw-r--r--. 1 hadoop hadoop      814 Sep 15  2016 filebeat.template.json
    -rw-r--r--. 1 hadoop hadoop    17218 Mar 26 19:58 filebeat.yml
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ ./filebeat -c filebeat.yml
    {"@timestamp":"2017-03-26T11:59:26.392Z","beat":{"hostname":"HadoopMaster","name":"HadoopMaster"},"count":1,"fields":null,"input_type":"log","message":"My name is zhouls","offset":0,"source":"/home/hadoop/app.log","type":"log"}

Filebeat then keeps waiting for the next piece of data to collect. Its role is similar to that of Flume in the Hadoop ecosystem, and it is simple to use.

To study this in more depth, see http://www.cnblogs.com/zlslch/category/894300.html

Filebeat's help command

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ ./filebeat -h
    Usage of ./filebeat:
      -N    Disable actual publishing for testing
      -c string
            Configuration file (default "/home/hadoop/app/filebeat-1.3.1-x86_64/filebeat.yml")
      -configtest
            Test configuration and exit.
      -cpuprofile string
            Write cpu profile to file
      -d string
            Enable certain debug selectors
      -e    Log to stderr and disable syslog/file output
      -httpprof string
            Start pprof http server
      -memprofile string
            Write memory profile to this file
      -v    Log at INFO level
      -version
            Print version and exit
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$

Running Filebeat in the background

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ nohup ./filebeat -e -c filebeat.yml >/dev/null 2>&1 &
    [1] 2697
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$

To make later debugging and troubleshooting easier, I recommend enabling logging (optional)

Edit the Logging section of the filebeat.yml configuration file.

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ vim filebeat.yml

The logging section:

    logging:

      # Send all logging output to syslog. On Windows default is false, otherwise
      # default is true.
      to_syslog: false

      # Write all logging output to files. Beats automatically rotate files if rotateeverybytes
      # limit is reached.
      to_files: true

      # To enable logging to files, to_files option has to be set to true
      files:
        # The directory where the log files will written to.
        path: /home/hadoop/mybeat    # directory where the logs are saved

        # The name of the files where the logs are written to.
        name: mybeat                 # log file name

        # Configure log file size limit. If limit is reached, log file will be
        # automatically rotated
        rotateeverybytes: 10485760 # = 10MB; once the log file reaches 10 MB it rolls over to a new file

        # Number of rotated log files to keep. Oldest files will be deleted first.
        keepfiles: 7                 # keep the 7 most recent rotated files (a count, not a number of days)

      # Enable debug output for selected components. To enable all selectors use ["*"]
      # Other available selectors are beat, publish, service
      # Multiple selectors can be chained.
      #selectors: [ ]

      # Sets log level. The default log level is error.
      # Available log levels are: critical, error, warning, info, debug
      level: debug                   # use debug while troubleshooting; switch to info or error for normal operation

Then start Filebeat again and look at its log.

    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ pwd
    /home/hadoop/app/filebeat-1.3.1-x86_64
    [hadoop@HadoopMaster filebeat-1.3.1-x86_64]$ ./filebeat -c filebeat.yml

In the other window, the mybeat directory appears under /home/hadoop once Filebeat has started:

    [hadoop@HadoopMaster ~]$ pwd
    /home/hadoop
    [hadoop@HadoopMaster ~]$ ll
    total 44
    drwxrwxr-x. 11 hadoop hadoop 4096 Mar 26 18:49 app
    -rw-rw-r--.  1 hadoop hadoop   18 Mar 26 19:59 app.log
    drwxrwxr-x.  7 hadoop hadoop 4096 Mar 25 06:34 data
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Desktop
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Documents
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Downloads
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Music
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Pictures
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Public
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Templates
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Videos
    [hadoop@HadoopMaster ~]$ ll
    total 48
    drwxrwxr-x. 11 hadoop hadoop 4096 Mar 26 18:49 app
    -rw-rw-r--.  1 hadoop hadoop   18 Mar 26 19:59 app.log
    drwxrwxr-x.  7 hadoop hadoop 4096 Mar 25 06:34 data
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Desktop
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Documents
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Downloads
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Music
    drwxr-xr-x.  2 hadoop hadoop 4096 Mar 26 20:35 mybeat
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Pictures
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Public
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Templates
    drwxr-xr-x.  2 hadoop hadoop 4096 Oct 31 17:19 Videos
    [hadoop@HadoopMaster ~]$

    [hadoop@HadoopMaster mybeat]$ pwd
    /home/hadoop/mybeat
    [hadoop@HadoopMaster mybeat]$ ll
    total 12
    -rw-rw-r--. 1 hadoop hadoop 9497 Mar 26 20:37 mybeat
    [hadoop@HadoopMaster mybeat]$ more mybeat
    2017-03-26T20:35:42+08:00 DBG  Disable stderr logging
    2017-03-26T20:35:42+08:00 DBG  Initializing output plugins
    2017-03-26T20:35:42+08:00 INFO GeoIP disabled: No paths were set under output.geoip.paths
    2017-03-26T20:35:42+08:00 INFO Activated console as output plugin.
    2017-03-26T20:35:42+08:00 DBG  Create output worker
    2017-03-26T20:35:42+08:00 DBG  No output is defined to store the topology. The server fields might not be filled.
    2017-03-26T20:35:42+08:00 INFO Publisher name: HadoopMaster
    2017-03-26T20:35:42+08:00 INFO Flush Interval set to: 1s
    2017-03-26T20:35:42+08:00 INFO Max Bulk Size set to: 2048
    2017-03-26T20:35:42+08:00 DBG  create bulk processing worker (interval=1s, bulk size=2048)
    2017-03-26T20:35:42+08:00 INFO Init Beat: filebeat; Version: 1.3.1
    2017-03-26T20:35:42+08:00 INFO filebeat sucessfully setup. Start running.
    2017-03-26T20:35:42+08:00 INFO Registry file set to: /home/hadoop/app/filebeat-1.3.1-x86_64/.filebeat
    2017-03-26T20:35:42+08:00 INFO Loading registrar data from /home/hadoop/app/filebeat-1.3.1-x86_64/.filebeat
    2017-03-26T20:35:42+08:00 DBG  Set idleTimeoutDuration to 5s
    2017-03-26T20:35:42+08:00 DBG  File Configs: [/home/hadoop/app.log]
    2017-03-26T20:35:42+08:00 INFO Set ignore_older duration to 0s
    2017-03-26T20:35:42+08:00 INFO Set close_older duration to 1h0m0s
    2017-03-26T20:35:42+08:00 INFO Set scan_frequency duration to 10s
    2017-03-26T20:35:42+08:00 INFO Input type set to: log
    2017-03-26T20:35:42+08:00 INFO Set backoff duration to 1s
    2017-03-26T20:35:42+08:00 INFO Set max_backoff duration to 10s
    2017-03-26T20:35:42+08:00 INFO force_close_file is disabled
    2017-03-26T20:35:42+08:00 DBG  Waiting for 1 prospectors to initialise
    2017-03-26T20:35:42+08:00 INFO Starting prospector of type: log
    2017-03-26T20:35:42+08:00 DBG  exclude_files: []
    2017-03-26T20:35:42+08:00 DBG  scan path /home/hadoop/app.log

Going further (recommended next steps, closer to production)
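
As a small hands-on exercise, the path-pattern rule from the filebeat.yml step (a `*.log` pattern matches files directly in the directory, while a `*/*.log` pattern only matches files one level down) can be tried out without touching /var/log. The sketch below is only an illustration: Filebeat performs its own glob expansion, and the assumption here is that plain POSIX shell globbing behaves the same way for these two simple patterns. The temporary directory and the file names (`top.log`, `nginx/access.log`, `nginx/notes.txt`) are hypothetical.

```shell
#!/bin/sh
# Build a throwaway tree that stands in for /var/log with one subdirectory.
# All names below are made up for the demonstration.
root=$(mktemp -d)
mkdir -p "$root/nginx"
touch "$root/top.log" "$root/nginx/access.log" "$root/nginx/notes.txt"

# Same shape as /var/log/*.log: only .log files directly in the directory.
top_level=$(cd "$root" && echo *.log)

# Same shape as /var/log/*/*.log: only .log files exactly one level down.
one_level_down=$(cd "$root" && echo */*.log)

echo "*.log   -> $top_level"
echo "*/*.log -> $one_level_down"

# Clean up the temporary tree.
rm -rf "$root"
```

The first pattern lists only `top.log` and the second only `nginx/access.log`, so covering both levels would need both patterns in the paths list.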
This article is reposted from the cnblogs blog 大数据躺过的坑. Original link: http://www.cnblogs.com/zlslch/p/6622052.html. If you wish to repost it, please contact the original author.