Hadoop手把手逐级搭建(3) Hadoop高可用(HA)

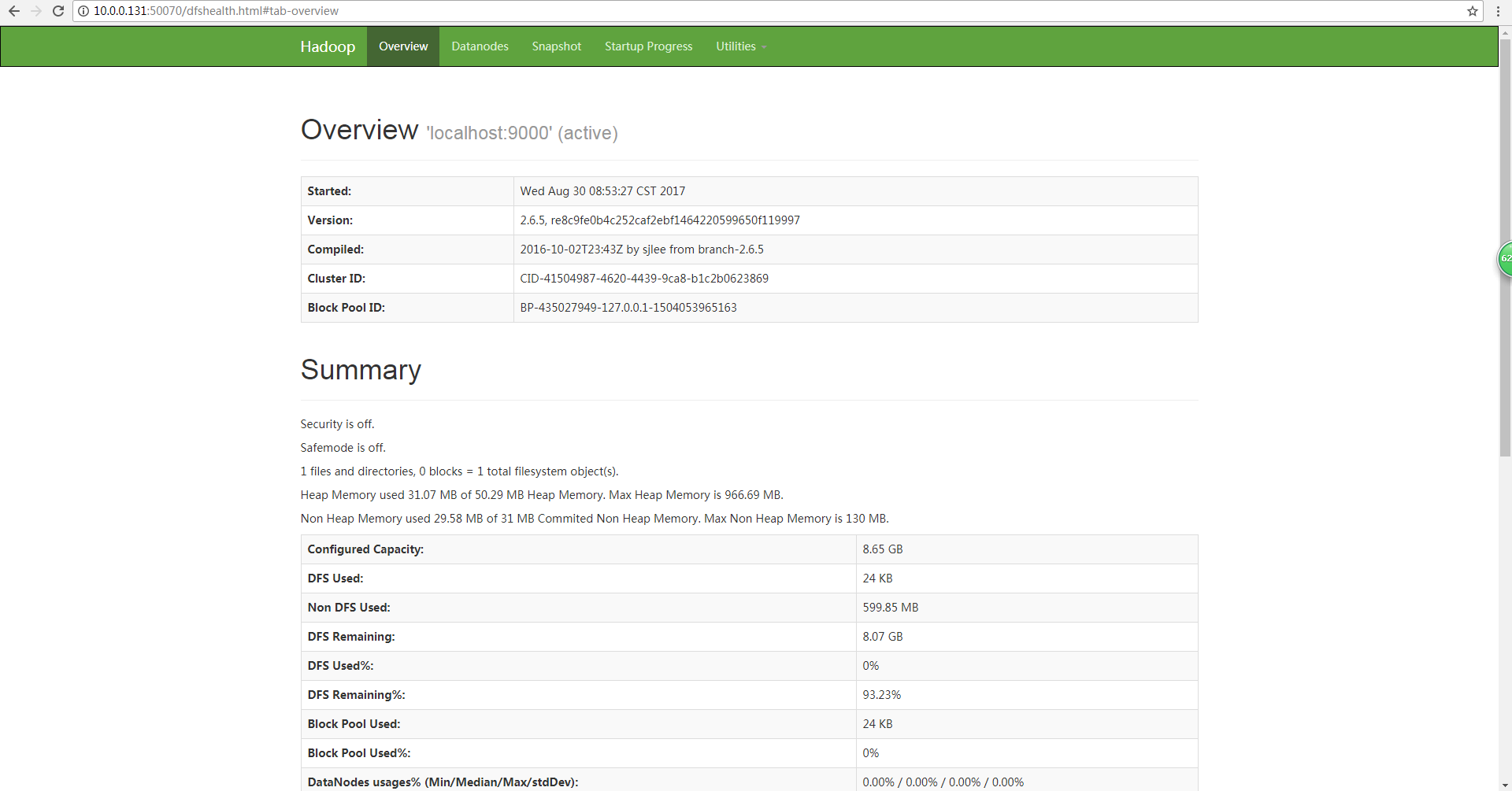

前置步骤: 1). 第一阶段:Hadoop单机伪分布(single) 2). 第二阶段:Hadoop完全分布式(full) 第三阶段: Hadoop高可用(HA) 0. 步骤概述 1). 为完全分布式保存hadoop配置 2). 为hadoop2配置hadoop1的ssh免密 3). 在hadoop2上配置zookeeper 4). 在hadoop1上修改hadoop配置文件为HA高可用模式 5). 第一次启动HA 6). 常规启动HA 7). 在完全分布式集群上测试wordcount程序 1. 为完全分布式保存hadoop配置 1.1 进入$HADOOP_HOME/etc/目录 [root@hadoop1 ~]# cd /opt/test/hadoop-2.6.5/etc 1.2 备份hadoop完全分布式配置,命名为hadoop-full,供以后使用 [root@hadoop1 etc]# cp -r hadoop/ hadoop-full 1.3 查看$HADOOP_HOME/etc/目录,备份成功 [root@hadoop1 etc]# ls hadoop hadoop-full # hadoop-full保留了已有配置,接下来高可用的配置继续在hadoop文件夹内修改 2. 为hadoop2配置hadoop1的ssh免密 2.1 在hadoop2上生成密匙 [root@hadoop2 ~]# ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa 2.2 在hadoop2上配置对自身免密 [root@hadoop2 ~]# cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys 2.3 在hadoop2上查看authorized_keys密匙 [root@hadoop2 ~]# cat ~/.ssh/authorized_keys ssh-dss ***** root@hadoop1 ssh-dss ***** root@hadoop2 # hadoop2上的authorized_keys现在有两个,一个来自hadoop1,一个是自身的 2.4 在hadoop2上将公匙拷贝给hadoop1 2.4.1 方式一:直接用ssh-copy-id –i命令从hadoop2上拷贝到hadoop1 [root@hadoop2 ~]# ssh-copy-id -i ~/.ssh/id_dsa.pub hadoop1 2.4.2.1 方式二(1):首先在hadoop2上操作,用scp命令将公匙复制到hadoop1 [root@hadoop2 ~]# scp ~/.ssh/id_dsa.pub hadoop1:~/.ssh/hadoop2.pub 2.4.2.2 方式二(2):接着在hadoop1上使用cat命令使hadoop2公匙生效 [root@hadoop2 ~]# cat hadoop2.pub >> authorized_keys 2.5 在hadoop2上测试ssh到hadoop1是否成功免密 [root@hadoop2 ~]# ssh hadoop1 [root@hadoop1 ~]# #成功进入hadoop1,没有提示输入密码,表示免密成功 3. 在hadoop2上配置zookeeper 3.1 进入/opt/test/目录 [root@hadoop2 ~]# cd /opt/test [root@hadoop2 test] 3.2 通过xftp将zookeeper-3.4.6.tar.gz上传到hadoop2的/opt/test/目录 3.3 解压缩文件 [root@hadoop2 test]# tar -zxvf zookeeper-3.4.6.tar.gz 3.4 为hadoop2,hadoop3,hadoop4设置zookeeper环境变量 3.4.1 在hadoop2上编辑/etc/profile,增加zookeeper环境变量配置 [root@hadoop2 ~]# vim /etc/profile export ZOOKEEPER_PREFIX=/opt/test/zookeeper-3.4.6 export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_PREFIX/bin:$HADOOP_PREFIX/sbin:$ZOOKEEPER_PREFIX/bin 3.4.2 分发hadoop2上的/etc/profile到hadoop3,hadoop4 [root@hadoop2 ~]# scp /etc/profile hadoop2:/etc/ [root@hadoop2 ~]# scp /etc/profile hadoop2:/etc/ 3.4.3 在hadoop2,hadoop3,hadoop4上使/etc/profile生效 [root@hadoop2 ~]# source /etc/profile [root@hadoop3 ~]# source /etc/profile [root@hadoop4 ~]# source /etc/profile 3.5 编辑zoo.cfg文件 3.5.0 进入/opt/etc/zookeeper-3.4.6/conf目录 [root@hadoop2 ~]# cd /opt/test/zookeeper-3.4.6/conf [root@hadoop2 conf] 3.5.1将zoo_sample.cfg复制为zoo.cfg文件 [root@hadoop2 conf]# cp zoo_sample.cfg zoo.cfg 3.5.2 在zoo.cfg中添加如下内容 [root@hadoop2 conf]# vim zoo.cfg # 配置zookeeper数据存放目录 dataDir=/var/test/zk/ # 设置zookeeper位置信息 server.1=hadoop2:2888:3888 server.2=hadoop3:2888:3888 server.3=hadoop4:2888:3888 3.6 设置zookeeper节点对应的ID 3.6.1 在hadoop2上进入/var/test/ [root@hadoop2 ~]# cd /var/test/ 3.6.2 在test目录下创建zk目录 [root@hadoop2 test]# mkdir zk 3.6.3 进入/var/test/zk目录 [root@hadoop2 test]# cd /var/test/zk 3.6.4 在/var/test/zk目录下生成myid文件 [root@hadoop2 zk]# echo 1 > myid 3.6.5 分别在hadoop3和hadoop4重复3.6.1~3.6.4的操作,其中3.6.4步骤中hadoop3的myid内容为2,hadoop4的myid内容为3 3.6.6 查看hadoop2,hadoop3,hadoop4的myid文件 [root@hadoop2 zk]# cat myid 1 [root@hadoop3 zk]# cat myid 2 [root@hadoop4 zk]# cat myid 3 3.7 将zookeeper-3.4.6目录分发到其他节点上 3.7.1 在hadoop2上进入/opt/test/目录 [root@hadoop2 zk]# cd /opt/test/ 3.7.2 分发zookeeper-3.4.6目录到hadoop3,hadoop4 [root@hadoop2 test]# scp -r zookeeper-3.4.6 hadoop3:`pwd` [root@hadoop2 test]# scp -r zookeeper-3.4.6 hadoop4:`pwd` 3.8 验证zookeeper是否安装成功 3.8.1 在hadoop2,hadoop3,hadoop4上分别启动zookeeper [root@hadoop2 test]# zkServer.sh start [root@hadoop3 test]# zkServer.sh start [root@hadoop4 test]# zkServer.sh start 3.8.2 查看zookeeper状态 # 如果成功,可以看到2个follower,1个leader,leader由选举产生 [root@hadoop2 test]# zkServer.sh status JMX enabled by default Using config: /opt/test/zookeeper-3.4.6/bin/../conf/zoo.cfg Mode: follower [root@hadoop3 test]# zkServer.sh status JMX enabled by default Using config: /opt/test/zookeeper-3.4.6/bin/../conf/zoo.cfg Mode: follower [root@hadoop4 test]# zkServer.sh status JMX enabled by default Using config: /opt/test/zookeeper-3.4.6/bin/../conf/zoo.cfg Mode: leader 3.9 使用zookeeper客户端 3.9.1 进入zookeeper客户端 [root@hadoop2 test]# zkCli.sh [zk: localhost:2181(CONNECTED) 0] 显示上述信息表示进入成功 3.9.2 退出zookeeper客户端 [zk: localhost:2181(CONNECTED) 0] quit Quitting... 2017-11-30 20:43:17,953 [myid:] - INFO [main:ZooKeeper@684] - Session: ** closed 2017-11-30 20:43:17,953 [myid:] – INFO [main-EventThread:ClientCnxn$EventThread@512] - EventThread shut down 3.9.3 停止zookeeper服务 [root@hadoop4 test]# zkServer.sh stop JMX enabled by default Using config: /opt/test/zookeeper-3.4.6/bin/../conf/zoo.cfg Stopping zookeeper ... STOPPED 4. 在hadoop1上修改hadoop配置文件为HA高可用模式 4.1 进入$HADOOP_HOME/etc/hadoop目录 [root@hadoop1 ~]# cd /opt/test/hadoop-2.6.5/etc/hadoop/ 4.2 修改hdfs-site.xml文件 [root@hadoop1 hadoop]# vim hdfs-site.xml 4.2.1删除secondary的配置信息 <property> <name>dfs.namenode.secondary.http-address</name> <value>hadoop2:50090</value> </property> 4.2.2 将原有hdfs-site.xml配置替换为如下内容 <configuration> <property> <name>dfs.replication</name> <value>3</value> </property> <!--定义nameservices逻辑名称--> <property> <name>dfs.nameservices</name> <value>mycluster</value> </property> <!--映射nameservices逻辑名称到namenode逻辑名称--> <property> <name>dfs.ha.namenodes.mycluster</name> <value>nn1,nn2</value> </property> <!--映射namenode逻辑名称到真实主机名称(RPC)--> <property> <name>dfs.namenode.rpc-address.mycluster.nn1</name> <value>hadoop1:8020</value> </property> <!--映射namenode逻辑名称到真实主机名称(RPC)--> <property> <name>dfs.namenode.rpc-address.mycluster.nn2</name> <value>hadoop2:8020</value> </property> <!--映射namenode逻辑名称到真实主机名称(HTTP)--> <property> <name>dfs.namenode.http-address.mycluster.nn1</name> <value>hadoop1:50070</value> </property> <!--映射namenode逻辑名称到真实主机名称(HTTP)--> <property> <name>dfs.namenode.http-address.mycluster.nn2</name> <value>hadoop2:50070</value> </property> <!--配置journalnode集群位置信息及目录--> <property> <name>dfs.namenode.shared.edits.dir</name> <value>qjournal://hadoop1:8485;hadoop2:8485;hadoop3:8485/mycluster</value> </property> <property> <name>dfs.journalnode.edits.dir</name> <value>/var/test/hadoop/ha/jn</value> </property> <!--配置故障切换实现类--> <property> <name>dfs.client.failover.proxy.provider.mycluster</name> <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value> </property> <!--指定切换方式为SSH免密钥方式--> <property> <name>dfs.ha.fencing.methods</name> <value>sshfence</value> </property> <property> <name>dfs.ha.fencing.ssh.private-key-files</name> <value>/root/.ssh/id_dsa</value> </property> <!--设置自动切换--> <property> <name>dfs.ha.automatic-failover.enabled.mycluster</name> <value>true</value> </property> </configuration> 4.3 修改core-site.xml文件,将原有配置替换如下 [root@hadoop1 hadoop]# vim core-site.xml <configuration> <!--设置fs.defaultFS为nameservices的逻辑主机名--> <property> <name>fs.defaultFS</name> <value>hdfs://mycluster</value> </property> <!--设置zookeeper数据存放目录--> <property> <name>hadoop.tmp.dir</name> <value>/var/test/hadoop/ha</value> </property> <!--设置zookeeper位置信息--> <property> <name>ha.zookeeper.quorum.mycluster</name> <value>hadoop2:2181,hadoop3:2181,hadoop4:2181</value> </property> </configuration> 4.4 将修改后的hdfs-site.xml和core-site.xml分发到其他节点 [root@hadoop1 hadoop]# scp hdfs-site.xml core-site.xml hadoop2:`pwd` [root@hadoop1 hadoop]# scp hdfs-site.xml core-site.xml hadoop3:`pwd` [root@hadoop1 hadoop]# scp hdfs-site.xml core-site.xml hadoop4:`pwd` 5. 第一次启动HA 5.1 启动zookeeper 5.1.1 在hadoop2,hadoop3,hadoop4上分别启动zookeeper [root@hadoop2 ~]# zkServer.sh start [root@hadoop3 ~]# zkServer.sh start [root@hadoop4 ~]# zkServer.sh start 5.1.2 hadoop2,hadoop3,hadoop4进程显示如下 [root@hadoop2 ~]# jps **** Jps **** QuorumPeerMain [root@hadoop3 ~]# jps **** Jps **** QuorumPeerMain [root@hadoop4 ~]# jps **** Jps **** QuorumPeerMain 5.2 启动journalnode 5.2.1 在hadoop1,hadoop2,hadoop3上启动journalnode [root@hadoop1 ~]# hadoop-daemon.sh start journalnode [root@hadoop2 ~]# hadoop-daemon.sh start journalnode [root@hadoop3 ~]# hadoop-daemon.sh start journalnode 5.2.2 hadoop1,hadoop2,hadoop3进程显示如下 [root@hadoop1 ~]# jps **** Jps **** JournalNode [root@hadoop2 ~]# jps **** Jps **** QuorumPeerMain **** JournalNode [root@hadoop3 ~]# jps **** Jps **** QuorumPeerMain **** JournalNode 5.3 在hadoop1上格式化namenode [root@hadoop1 ~]# hdfs namenode -format 17/11/30 21:16:35 INFO namenode.NameNode: STARTUP_MSG: /************************************************************ …… SHUTDOWN_MSG: Shutting down NameNode at hadoop1/192.168.111.211 ************************************************************/ 5.4 在hadoop1上启动namenode 5.4.1 格式化完成后在hadoop1上启动namenode [root@hadoop1 ~]# hadoop-daemon.sh start namenode starting namenode, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-namenode-hadoop1.out 5.4.2 hadoop1进程显示如下 [root@hadoop1 ~]# jps **** Jps **** JournalNode **** NameNode 5.5 在hadoop2,即另一台namenode上同步hadoop1的CID等信息 [root@hadoop2 ~]# hdfs namenode -bootstrapStandby 17/11/30 21:20:27 INFO namenode.NameNode: STARTUP_MSG: /************************************************************ SHUTDOWN_MSG: Shutting down NameNode at hadoop2/192.168.111.212 ************************************************************/ 5.6 在hadoop1上启动其他服务 [root@hadoop1 ~]# start-dfs.sh 17/11/30 21:21:17 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable Starting namenodes on [hadoop1 hadoop2] hadoop1: namenode running as process 1555. Stop it first. hadoop2: starting namenode, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-namenode-hadoop2.out hadoop2: starting datanode, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-datanode-hadoop2.out hadoop3: starting datanode, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-datanode-hadoop3.out hadoop4: starting datanode, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-datanode-hadoop4.out Starting journal nodes [hadoop1 hadoop2 hadoop3] hadoop1: journalnode running as process 1397. Stop it first. hadoop3: journalnode running as process 1437. Stop it first. hadoop2: journalnode running as process 1435. Stop it first. 17/11/30 21:21:31 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable Starting ZK Failover Controllers on NN hosts [hadoop1 hadoop2] hadoop1: starting zkfc, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-zkfc-hadoop1.out hadoop2: starting zkfc, logging to /opt/test/hadoop-2.6.5/logs/hadoop-root-zkfc-hadoop2.out 5.7 在hadoop1上格式化zookeeper [root@hadoop1 ~]# hdfs zkfc -formatZK …… 17/11/30 21:23:10 INFO ha.ActiveStandbyElector: Successfully created /hadoop-ha/mycluster in ZK. 17/11/30 21:23:10 INFO ha.ActiveStandbyElector: Session connected. 17/11/30 21:23:10 INFO zookeeper.ClientCnxn: EventThread shut down 17/11/30 21:23:10 INFO zookeeper.ZooKeeper: Session: 0x2600d0c1b960000 closed 5.8 在hadoop2,hadoop3,hadoop4上使用zkCli.sh查看格式化结果 5.8.1 进入zookeeper客户端 [root@hadoop2 ~]# zkCli.sh [zk: localhost:2181(CONNECTED) 0] 5.8.2 使用ls /命令查看根目录 [zk: localhost:2181(CONNECTED) 0] ls / [hadoop-ha, zookeeper] #每个根目录都生成了hadoop-ha目录 *格式化namenode后出现datanode无法启动的情况,查看BUGFIX1 6. 常规启动HA 6.1 启动zookeeper 6.1.1 在hadoop2,hadoop3,hadoop4上分别启动zookeeper [root@hadoop2 ~]# zkServer.sh start [root@hadoop3 ~]# zkServer.sh start [root@hadoop4 ~]# zkServer.sh start 6.1.2 hadoop2,hadoop3,hadoop4进程显示如下 [root@hadoop2 ~]# jps **** Jps **** QuorumPeerMain [root@hadoop3 ~]# jps **** Jps **** QuorumPeerMain [root@hadoop4 ~]# jps **** Jps **** QuorumPeerMain 6.2 启动hdfs集群 6.2.1 在hadoop1上启动整个集群start-dfs.sh [root@hadoop1 ~]# start-dfs.sh 6.2.2 hadoop会启动如下进程: hadoop1, hadoop2: namenode hadoop2, hadoop3, hadoop4: datanode hadoop1, hadoop2, hadoop3: journalnode hadoop1, hadoop2: ZKFC 6.2.3 启动完成后各节点进程显示如下: [root@hadoop1 ~]# jps 2559 JournalNode 2724 DFSZKFailoverController 2790 Jps 2366 NameNode [root@hadoop2 ~]# jps 2099 JournalNode 2217 DFSZKFailoverController 2265 Jps 1754 QuorumPeerMain 2014 DataNode 1945 NameNode [root@hadoop3 ~]# jps 1583 QuorumPeerMain 1714 DataNode 1799 JournalNode 1859 Jps [root@hadoop4 ~]# jps 1685 Jps 1510 QuorumPeerMain 1613 DataNode 6.3 启动yarn 6.3.1 在hadoop1上启动yarn [root@hadoop1 ~]# start-yarn.sh 6.3.2 启动完成后各集群进程如下 [root@hadoop1 ~]# jps 2559 JournalNode 2935 ResourceManager 2724 DFSZKFailoverController 3350 Jps 2366 NameNode [root@hadoop2 ~]# jps 2099 JournalNode 2217 DFSZKFailoverController 1754 QuorumPeerMain 2381 NodeManager 2014 DataNode 2587 Jps 1945 NameNode [root@hadoop3 ~]# jps 1583 QuorumPeerMain 1714 DataNode 2628 Jps 1901 NodeManager 1799 JournalNode [root@hadoop4 ~]# jps 1728 NodeManager 1510 QuorumPeerMain 1613 DataNode 1891 Jps 7. 在完全分布式集群上测试wordcount程序 7.1 从hadoop1进入$HADOOP_HOME/share/hadoop/mapreduce/目录 [root@hadoop1 ~]# cd /opt/test/hadoop-2.6.5/share/hadoop/mapreduce/ 7.2上传test.txt文件到根目录 7.2.1 默认上传 [root@hadoop1 mapreduce]# hadoop fs -put test.txt / 7.2.2 也可以指定blocksize [root@hadoop1 mapreduce]# hdfs dfs -D dfs.blocksize=1048576 -put test.txt / 7.3 运行wordcount测试程序,输出到/output [root@hadoop1 mapreduce]# hadoop jar hadoop-mapreduce-examples-2.6.5.jar wordcount /test.txt /output #运行时会首先看到如下信息 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032 7.4 查看mapreduce运行结果 [root@hadoop1 mapreduce]# hadoop dfs -text /output/part-* hello 100003 world 200002 “hello 100000 后续步骤: 4). 第四阶段:Hadoop高可用+联邦+视图文件系统(HA+Federation+ViewFs)