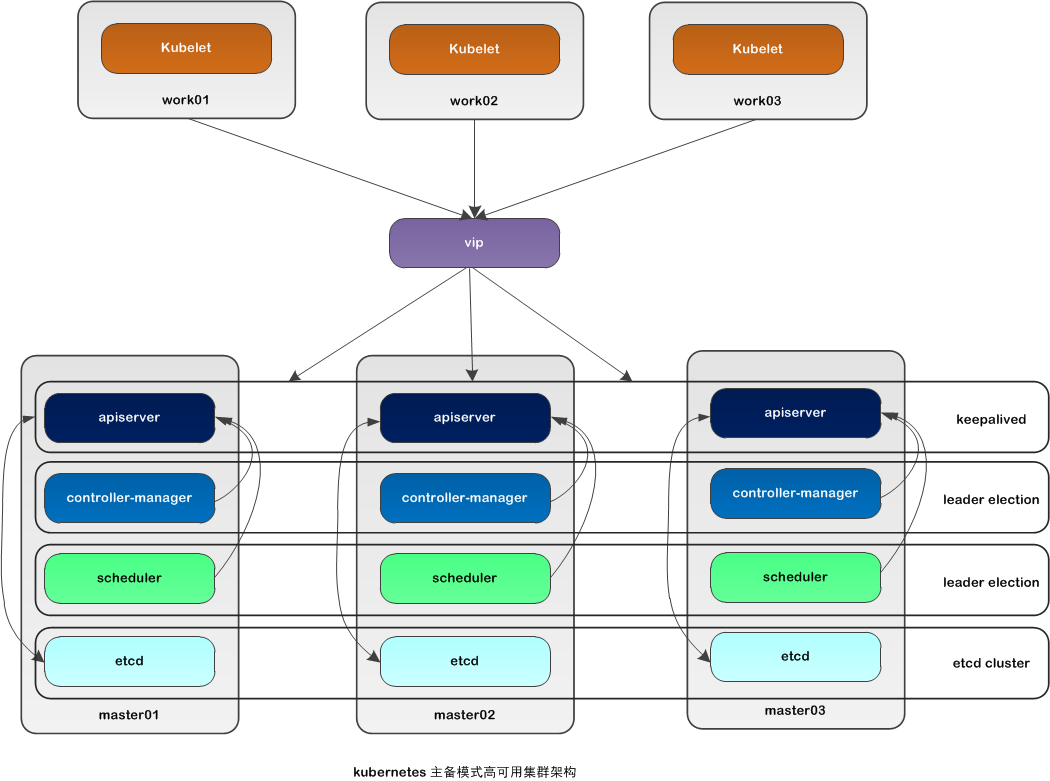

Kubernetes High-Availability Configuration

Tags (space-separated): kubernetes series

1: High-availability Kubernetes with kubeadm

2: System initialization

2.1 Hostnames

192.168.100.11 node01.flyfish

192.168.100.12 node02.flyfish

192.168.100.13 node03.flyfish

192.168.100.14 node04.flyfish

192.168.100.15 node05.flyfish

192.168.100.16 node06.flyfish

192.168.100.17 node07.flyfish

----

node01.flyfish, node02.flyfish, and node03.flyfish are the master (control plane) nodes.

node04.flyfish, node05.flyfish, and node06.flyfish are the worker nodes.

node07.flyfish is the test node.

The keepalived cluster VIP is 192.168.100.100.

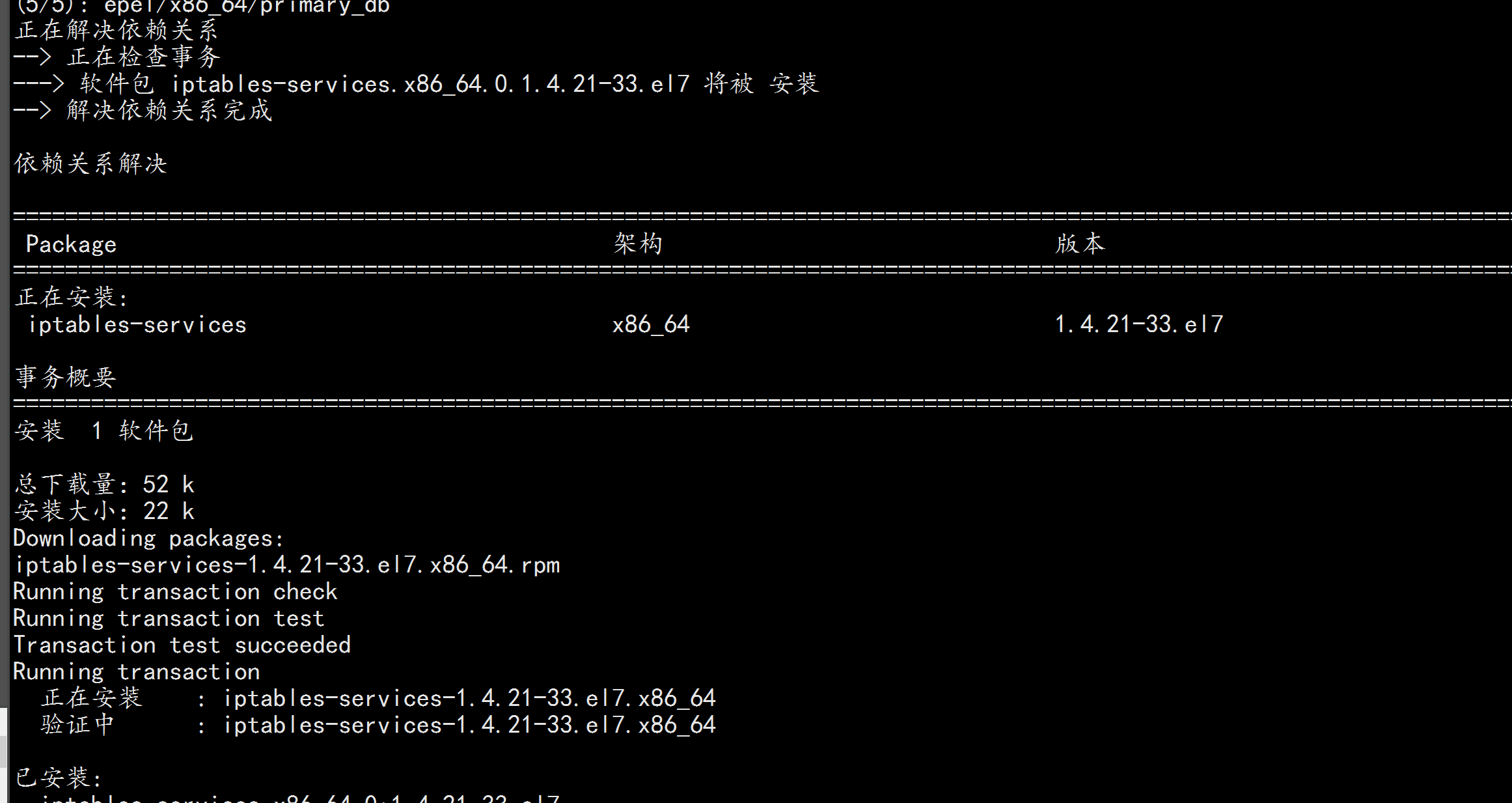
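The host table above is usually also written to /etc/hosts on every node so that the names resolve without DNS. The source does not show that step, so the following is only a sketch: the helper name `gen_hosts` is hypothetical, while the addresses and hostnames are the ones listed above.

```shell
#!/usr/bin/env bash
# gen_hosts: print one "IP hostname" line per node (hypothetical helper).
# Assumes the numbering above: node01..node07 map to 192.168.100.11..17.
gen_hosts() {
  local i
  for i in 1 2 3 4 5 6 7; do
    printf '192.168.100.%d node%02d.flyfish\n' "$((10 + i))" "$i"
  done
}

# Preview the entries; append them with: gen_hosts >> /etc/hosts
gen_hosts
```

The hostname of each machine can be set with `hostnamectl set-hostname node01.flyfish`, adjusting the name per node.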

2.2 Disable firewalld, flush iptables rules, and disable SELinux

Run on every node:

systemctl stop firewalld && systemctl disable firewalld && yum -y install iptables-services && systemctl start iptables && systemctl enable iptables && iptables -F && service iptables save

Disable SELinux and swap:

swapoff -a && sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab

setenforce 0 && sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config

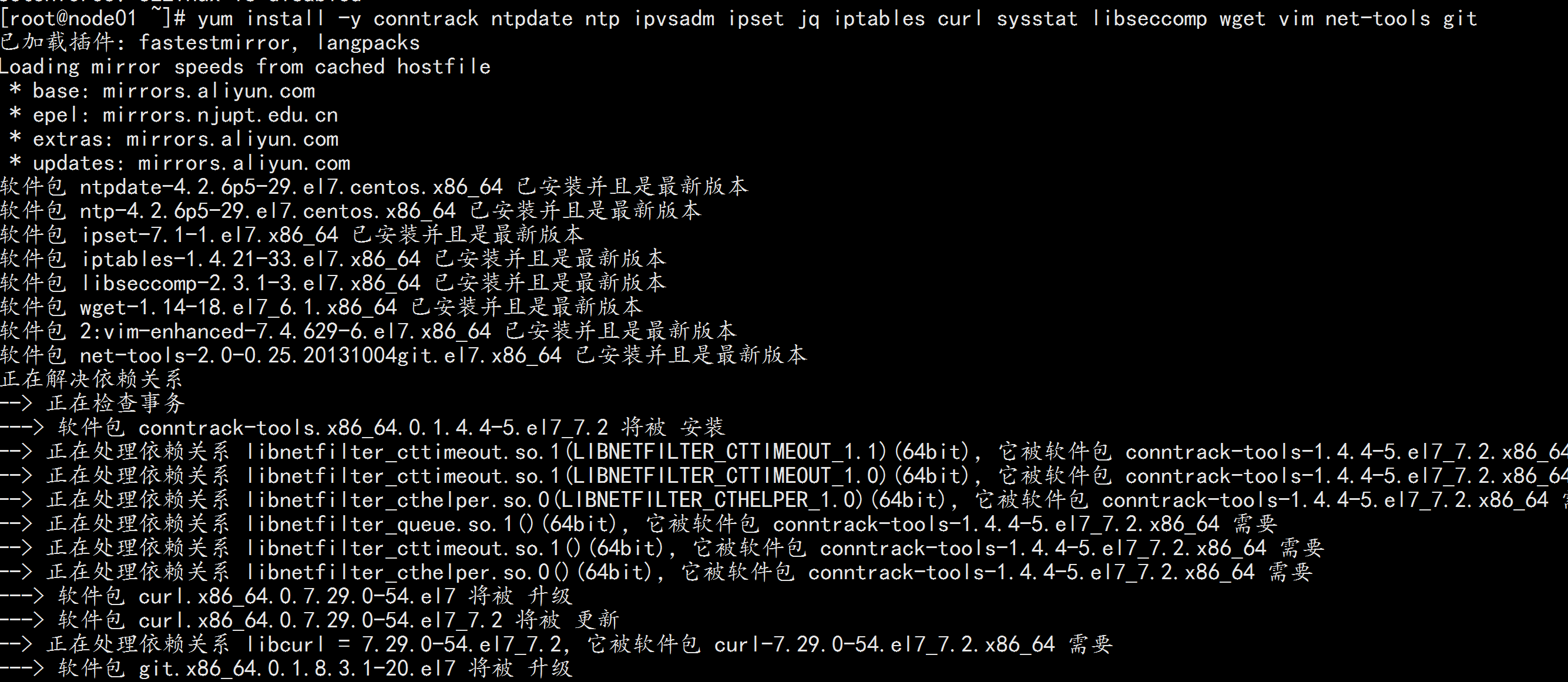
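Swap has to stay off because kubelet, with its default settings, refuses to start while swap is active. A minimal sketch for checking the result of the step above follows; `swap_is_off` is a hypothetical helper that reads a table in the /proc/swaps format (one header line, then one line per active device).

```shell
#!/usr/bin/env bash
# swap_is_off: succeed when the given swaps table lists no active swap device.
# Defaults to /proc/swaps; a file argument makes the check easy to try on samples.
swap_is_off() {
  local swaps_file="${1:-/proc/swaps}"
  local devices
  # Count the non-empty lines after the header line.
  devices=$(tail -n +2 "$swaps_file" | grep -c . || true)
  [ "$devices" -eq 0 ]
}

if [ -r /proc/swaps ]; then
  if swap_is_off; then echo "swap: off"; else echo "swap: still active"; fi
fi
# The SELinux mode can be read with: getenforce
```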

2.3 Install dependencies

Run on every node:

yum install -y conntrack ntpdate ntp ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git

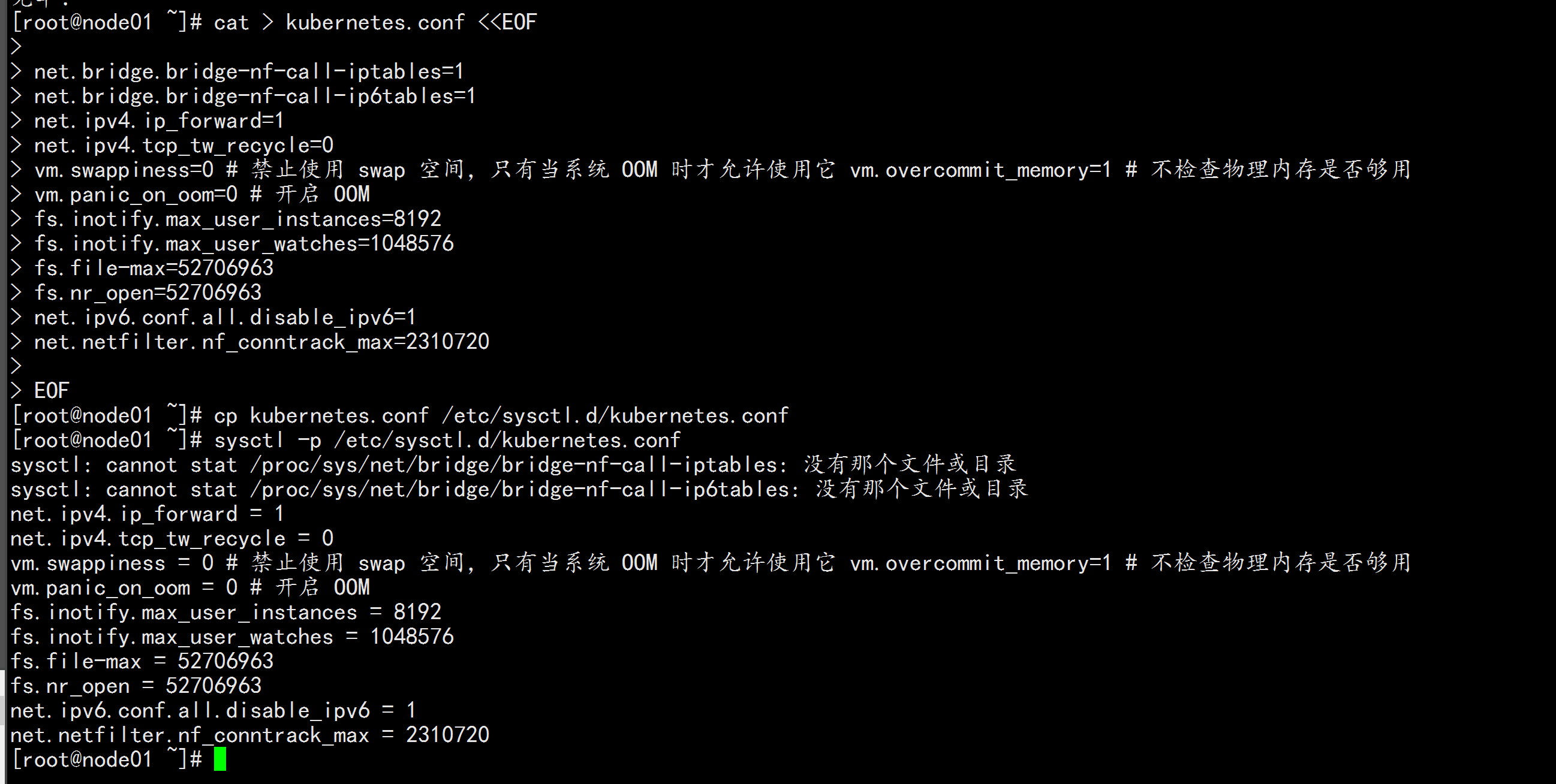

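2.4 Tune kernel parameters for Kubernetes

Run on every node. Load br_netfilter first, because the net.bridge.* keys exist only while that module is loaded. Comments are placed on their own lines, since sysctl.conf documents only whole-line comments and trailing text can end up as part of the value.

modprobe br_netfilter

cat > kubernetes.conf <<EOF

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

net.ipv4.ip_forward=1

net.ipv4.tcp_tw_recycle=0

# Avoid swap; use it only when the system would otherwise run out of memory

vm.swappiness=0

# Do not check whether enough physical memory is available

vm.overcommit_memory=1

# Do not panic on OOM; let the OOM killer run

vm.panic_on_oom=0

fs.inotify.max_user_instances=8192

fs.inotify.max_user_watches=1048576

fs.file-max=52706963

fs.nr_open=52706963

net.ipv6.conf.all.disable_ipv6=1

net.netfilter.nf_conntrack_max=2310720

EOF

cp kubernetes.conf /etc/sysctl.d/kubernetes.conf

sysctl -p /etc/sysctl.d/kubernetes.conf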

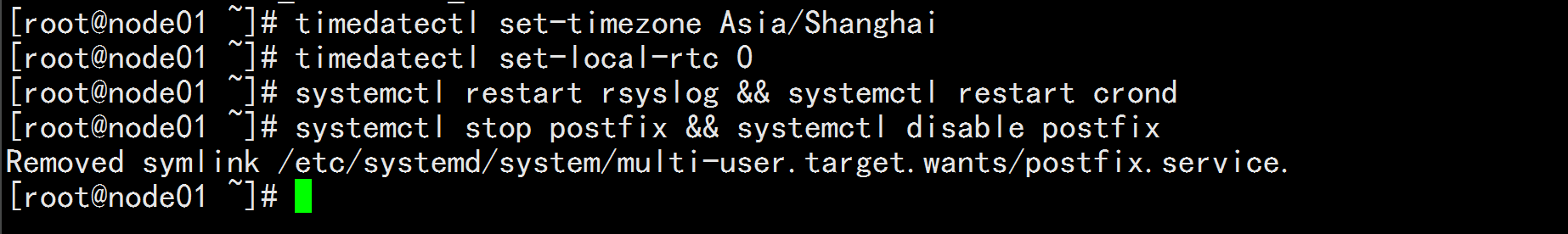
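After `sysctl -p`, it can help to confirm that every key in the file really took effect, since a key that fails to apply only produces a message that is easy to miss. The sketch below is one way to do that; `sysctl_pairs` and `check_sysctl` are hypothetical helper names, and only `sysctl -n` is an existing command.

```shell
#!/usr/bin/env bash
# sysctl_pairs: print "key=value" for every setting in a sysctl file,
# skipping blank lines and whole-line comments, and trimming spaces.
sysctl_pairs() {
  sed -e 's/^[[:space:]]*//' -e 's/[[:space:]]*$//' "$1" |
    grep -v -e '^$' -e '^[#;]' |
    sed -e 's/[[:space:]]*=[[:space:]]*/=/'
}

# check_sysctl: compare each configured value with the live kernel value
# and report every key that differs or does not exist.
check_sysctl() {
  local key want have
  sysctl_pairs "$1" | while IFS='=' read -r key want; do
    have=$(sysctl -n "$key" 2>/dev/null)
    [ "$have" = "$want" ] || echo "MISMATCH $key: want=$want have=${have:-unset}"
  done
}

# Usage on a node: check_sysctl /etc/sysctl.d/kubernetes.conf
```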

2.5 Set the time zone

# Set the system time zone to Asia/Shanghai

timedatectl set-timezone Asia/Shanghai

# Keep the hardware clock (RTC) in UTC

timedatectl set-local-rtc 0

# Restart the services that depend on the system time

systemctl restart rsyslog && systemctl restart crond

Stop services the system does not need:

systemctl stop postfix && systemctl disable postfix

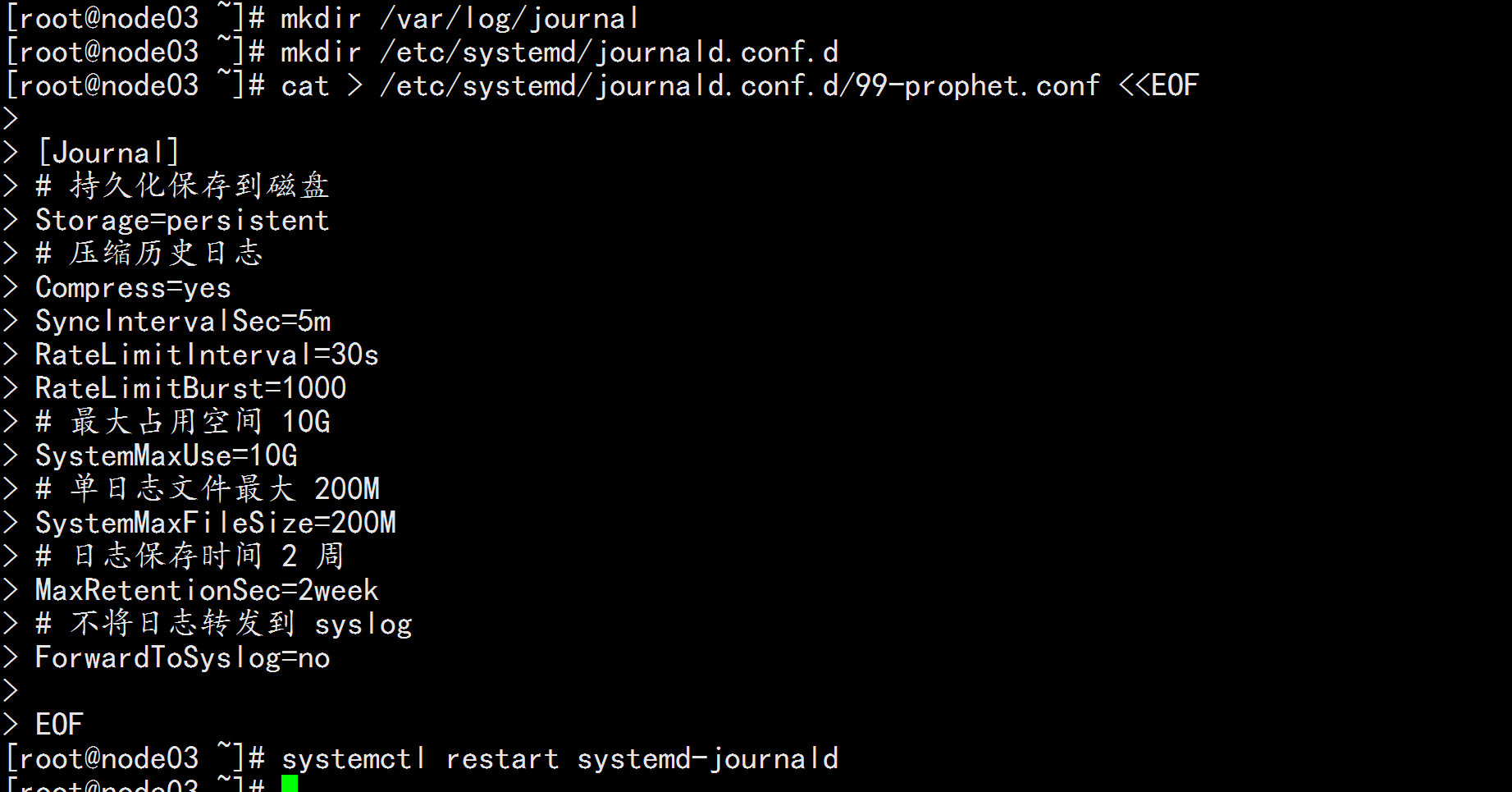

2.6 Configure rsyslogd and systemd-journald

Run on every node:

mkdir -p /var/log/journal # directory for persistent journal storage

mkdir -p /etc/systemd/journald.conf.d

cat > /etc/systemd/journald.conf.d/99-prophet.conf <<EOF

[Journal]

# Persist logs to disk

Storage=persistent

# Compress archived logs

Compress=yes

SyncIntervalSec=5m

RateLimitInterval=30s

RateLimitBurst=1000

# Use at most 10G of disk space

SystemMaxUse=10G

# Limit a single journal file to 200M

SystemMaxFileSize=200M

# Keep logs for 2 weeks

MaxRetentionSec=2week

# Do not forward logs to syslog

ForwardToSyslog=no

EOF

systemctl restart systemd-journald

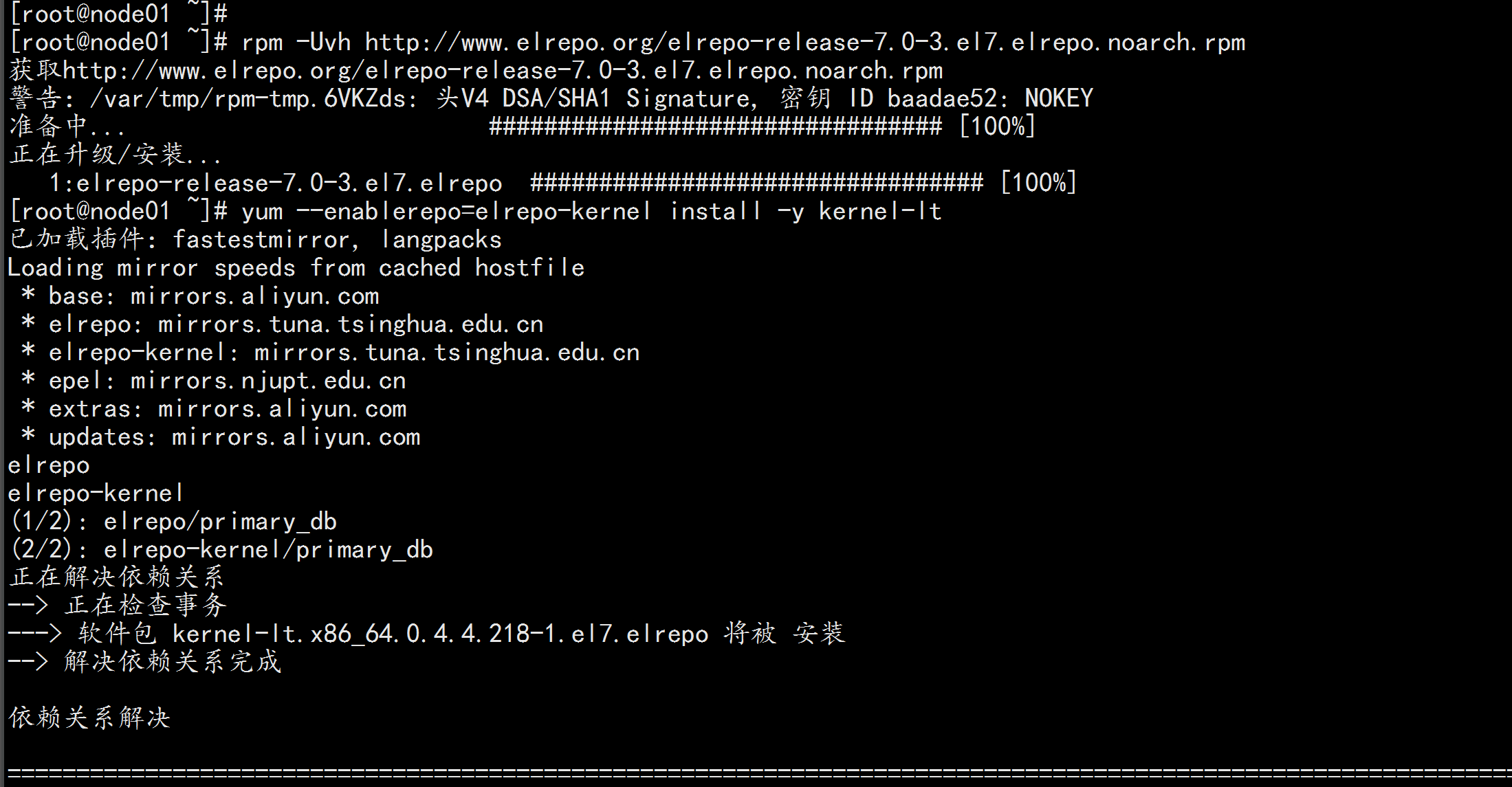

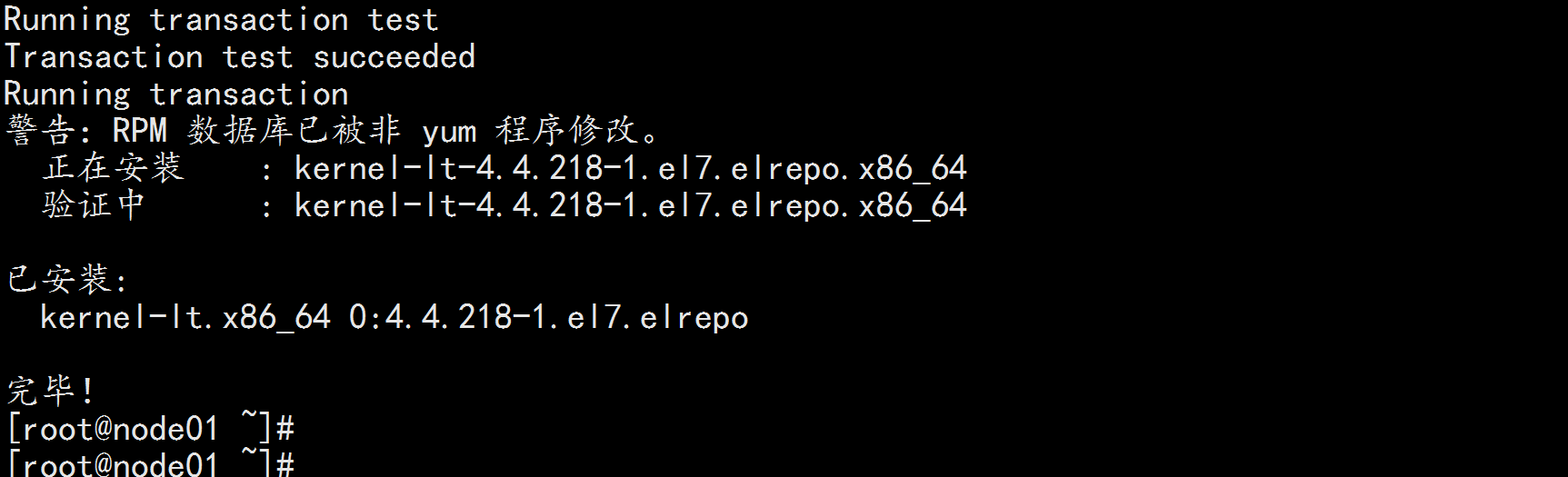

2.7 Upgrade the kernel to 4.4

The 3.10.x kernel that ships with CentOS 7.x has bugs that make Docker and Kubernetes unstable, so install the 4.4 long-term kernel (kernel-lt) from ELRepo:

rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm

# After installation, check that the menuentry for the new kernel in /boot/grub2/grub.cfg contains an initrd16 line; if it does not, install again.

yum --enablerepo=elrepo-kernel install -y kernel-lt

# Boot from the new kernel by default (the title must match the kernel-lt version that was actually installed)

grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)"

reboot

# After the reboot, install the kernel headers and development files

yum --enablerepo=elrepo-kernel install kernel-lt-devel-$(uname -r) kernel-lt-headers-$(uname -r)

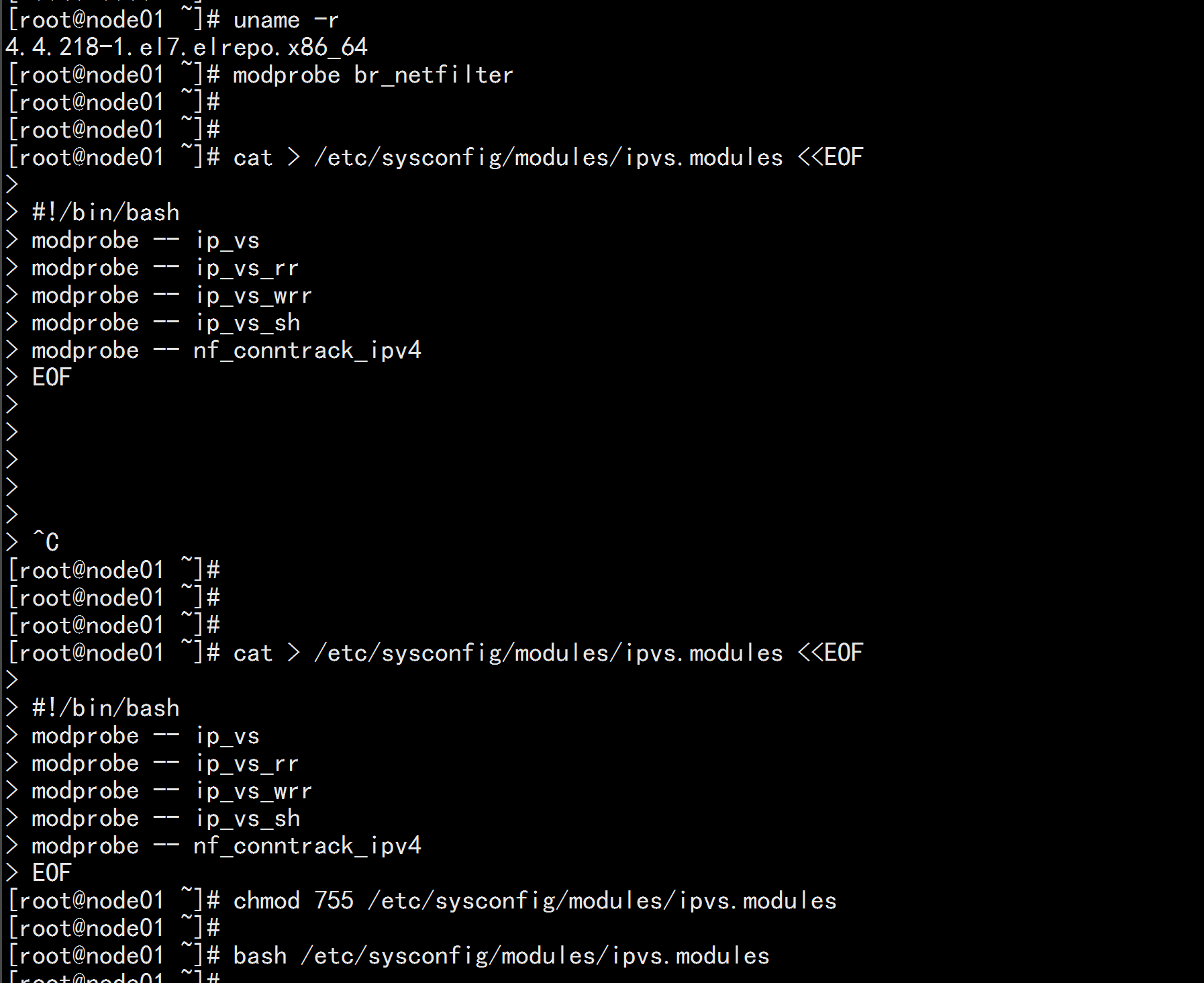
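The `grub2-set-default` call above hard-codes the menu title, which only works when the installed kernel-lt build is exactly 4.4.182-1. A sketch for reading the titles from the GRUB configuration instead is shown below; `list_menuentries` and `entry_for_kernel` are hypothetical helpers built on plain awk.

```shell
#!/usr/bin/env bash
# list_menuentries: print "index : title" for each menuentry line in a grub.cfg.
list_menuentries() {
  awk -F"'" '$1 == "menuentry " { print n++ " : " $2 }' "${1:-/etc/grub2.cfg}"
}

# entry_for_kernel: print the title of the first entry that contains the pattern.
# Arguments: grub.cfg path, literal text to look for in the title.
entry_for_kernel() {
  awk -F"'" -v pat="$2" '$1 == "menuentry " && index($2, pat) { print $2; exit }' "$1"
}

# Usage on a node:
#   list_menuentries
#   grub2-set-default "$(entry_for_kernel /etc/grub2.cfg '(4.4.')"
```

After the reboot, `uname -r` shows whether the 4.4 kernel is the one running.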

2.8 Prerequisites for running kube-proxy in IPVS mode

modprobe br_netfilter

cat > /etc/sysconfig/modules/ipvs.modules <<EOF

#!/bin/bash

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack_ipv4

EOF

chmod 755 /etc/sysconfig/modules/ipvs.modules

bash /etc/sysconfig/modules/ipvs.modules

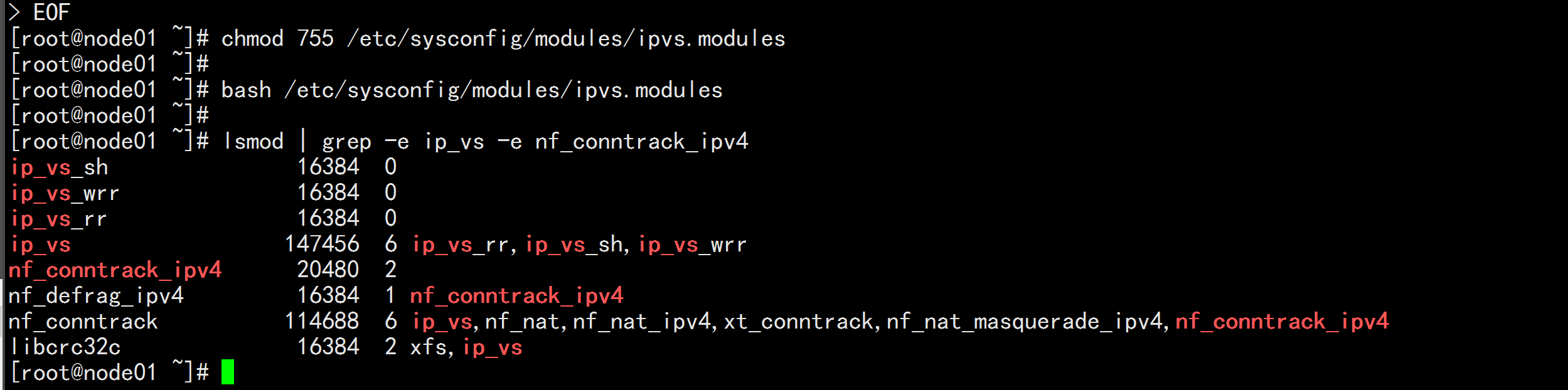

lsmod | grep -e ip_vs -e nf_conntrack_ipv4


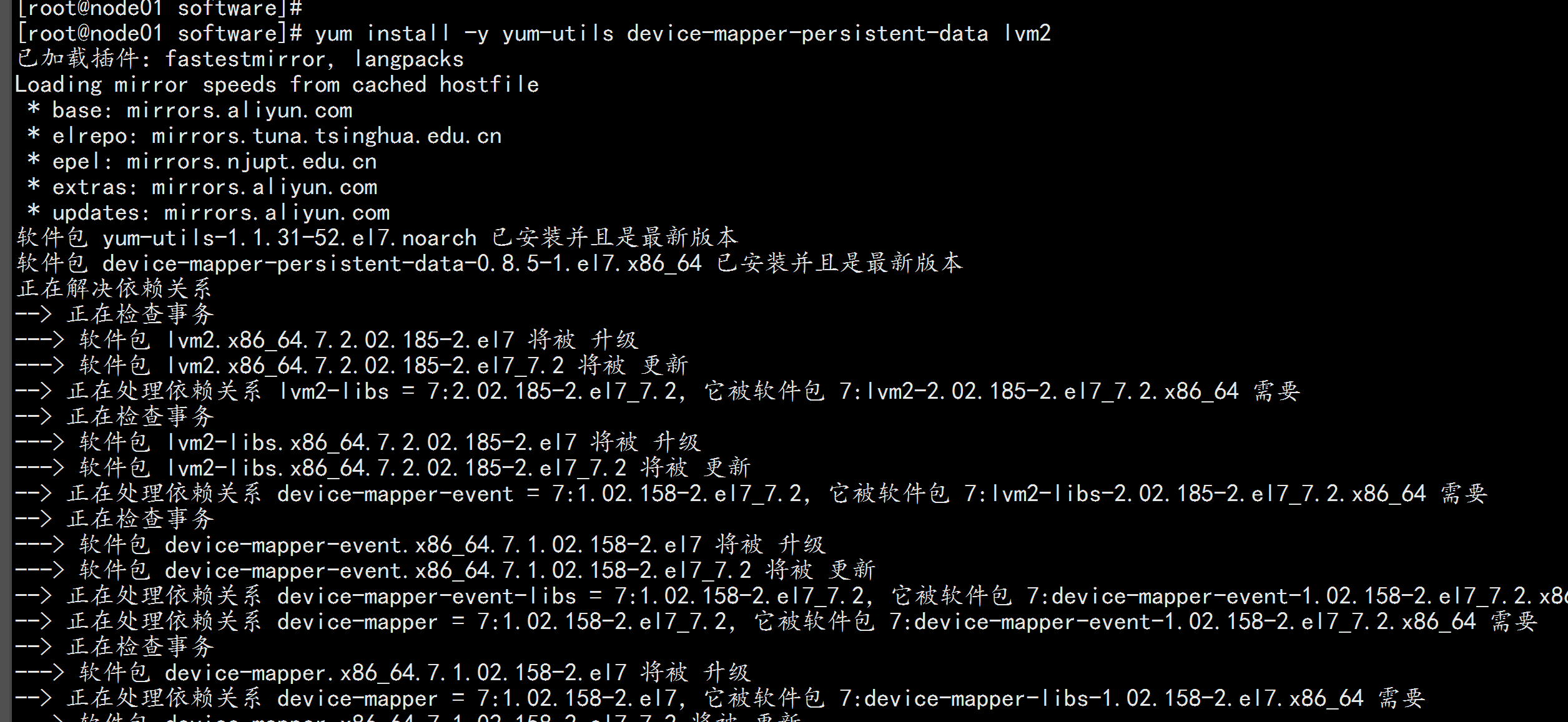

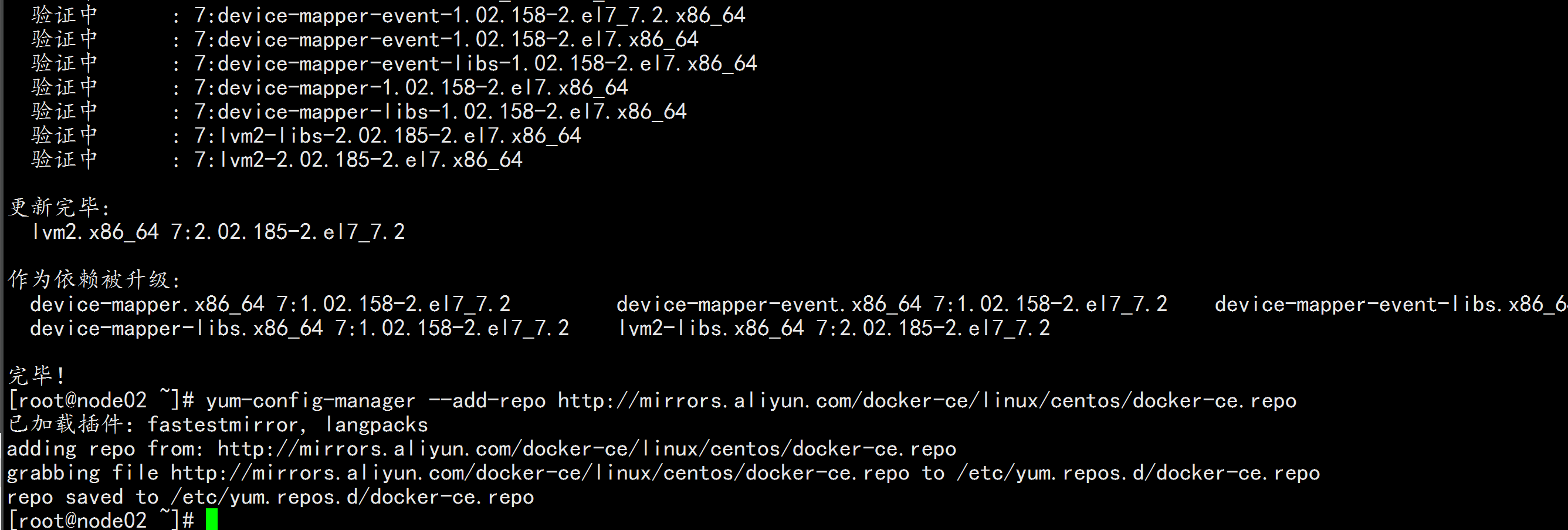

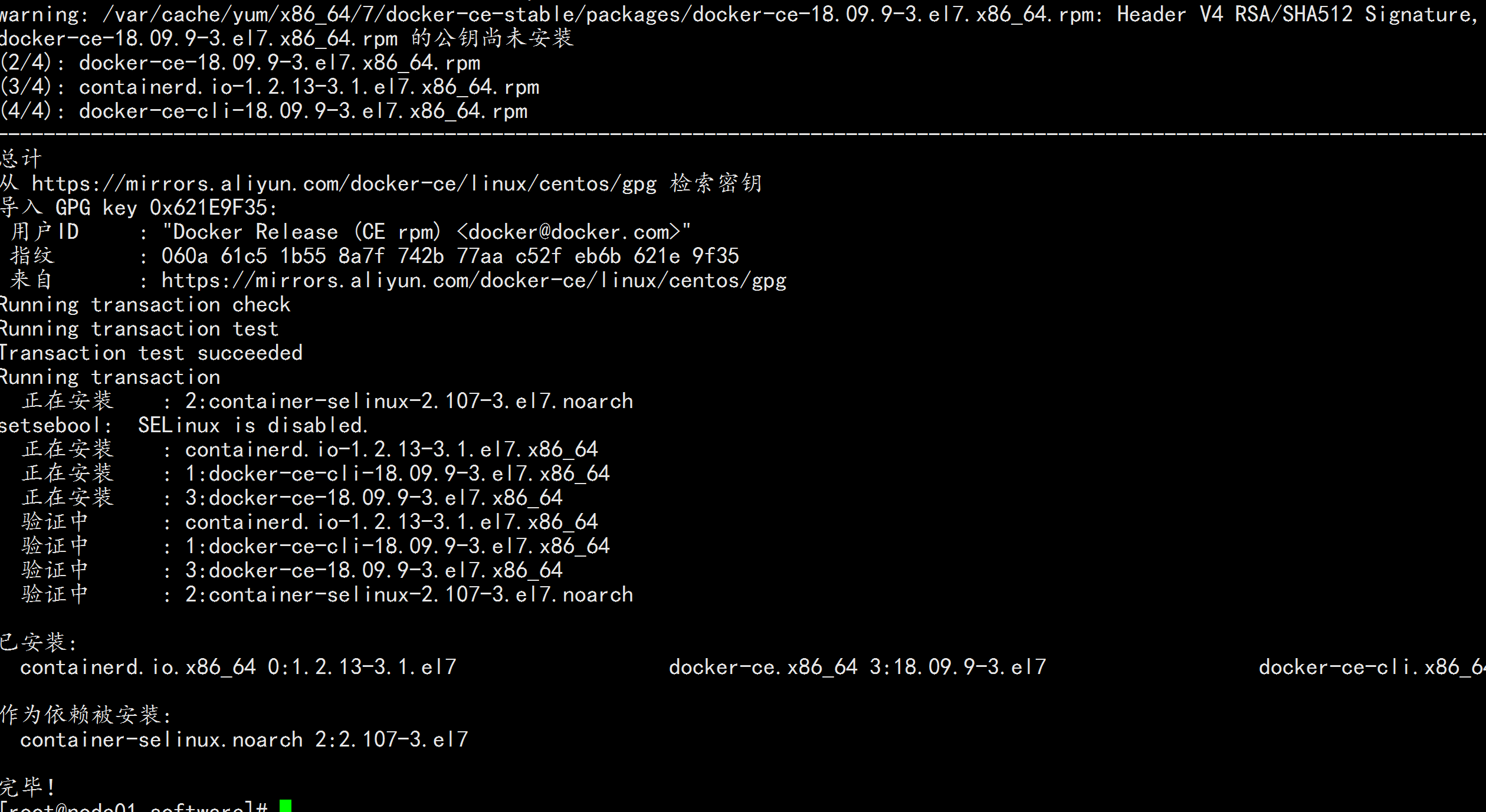
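The `lsmod | grep` check above shows what is loaded, but a missing module is easy to overlook in its output. The sketch below lists only the modules that are still missing; `missing_modules` is a hypothetical helper, and the module names are the ones loaded in this section.

```shell
#!/usr/bin/env bash
# missing_modules: read `lsmod` output on stdin and print every required
# module that is not loaded. The first input line is the lsmod header.
missing_modules() {
  local loaded m
  loaded=$(awk 'NR > 1 { print $1 }')
  for m in ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4 br_netfilter; do
    printf '%s\n' "$loaded" | grep -qx "$m" || echo "$m"
  done
}

# Usage on a node (no output means everything is loaded):
#   lsmod | missing_modules
```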

3: Install Docker

3.1 Install Docker

Run on every node:

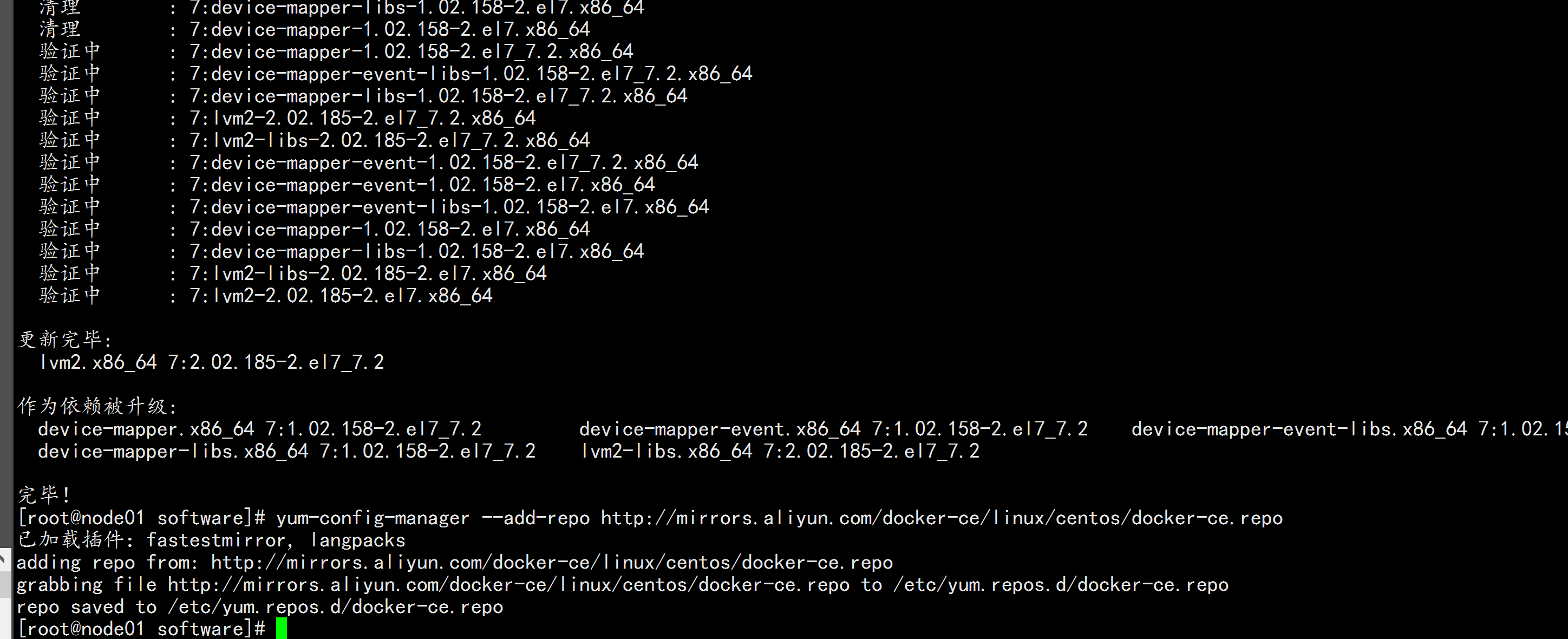

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum update -y && yum install docker-ce-18.09.9 docker-ce-cli-18.09.9 containerd.io -y

重启机器: reboot

查看内核版本: uname -r

在加载: grub2-set-default "CentOS Linux (4.4.182-1.el7.elrepo.x86_64) 7 (Core)" && reboot

如果还不行

就改 文件 : vim /etc/grub2.cfg 注释掉 3.10 的 内核

保证 内核的版本 为 4.4

service docker start

chkconfig docker on

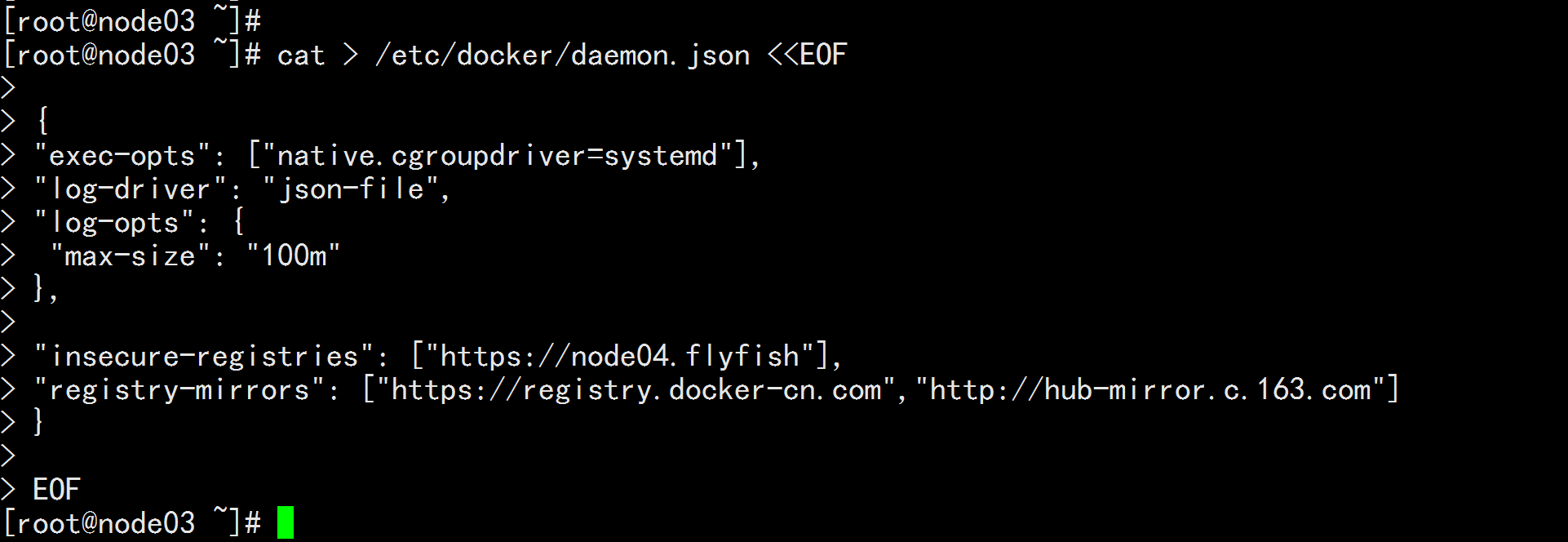

## 创建 /etc/docker 目录

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"insecure-registries": ["https://node04.flyfish"],

"registry-mirrors": ["https://registry.docker-cn.com","http://hub-mirror.c.163.com"]

}

EOF

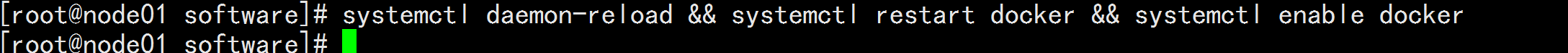

mkdir -p /etc/systemd/system/docker.service.d

# Restart the Docker service

systemctl daemon-reload && systemctl restart docker && systemctl enable docker


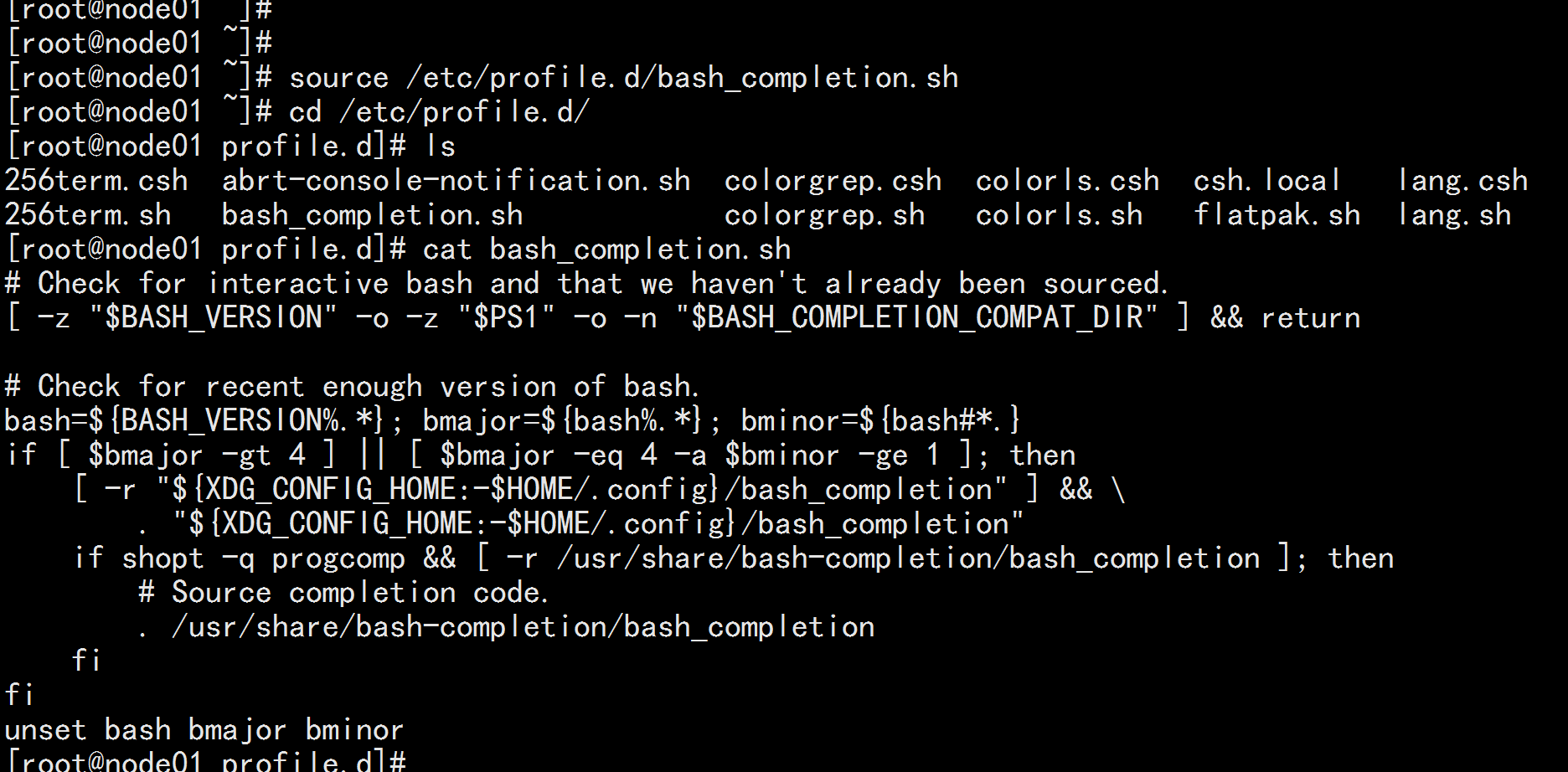

Install bash command completion:

yum -y install bash-completion

source /etc/profile.d/bash_completion.sh

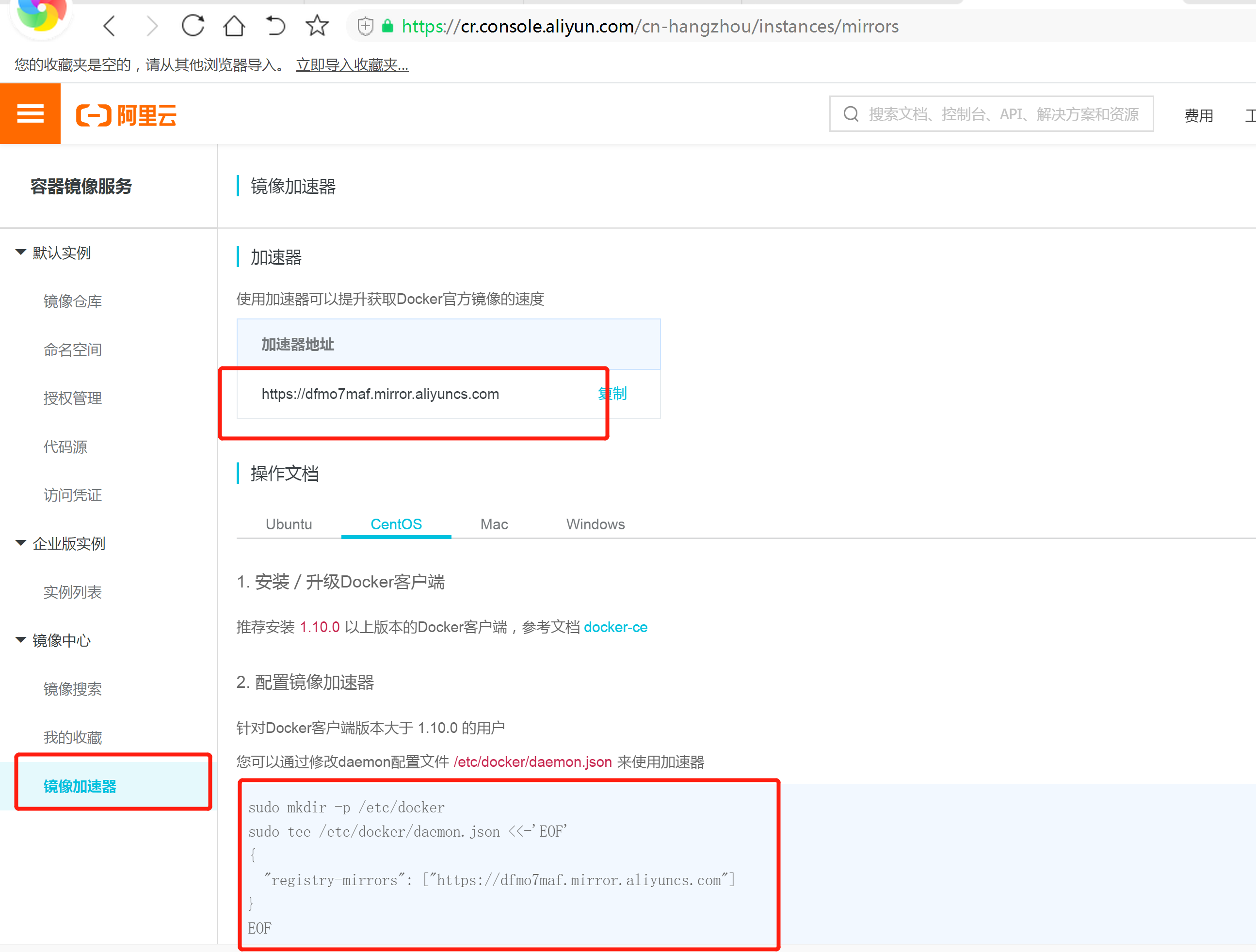

镜像加速

由于Docker Hub的服务器在国外,下载镜像会比较慢,可以配置镜像加速器。主要的加速器有:Docker官方提供的中国registry mirror、阿里云加速器、DaoCloud 加速器,本文以阿里加速器配置为例。

登陆阿里云容器模块:

登陆地址为:https://cr.console.aliyun.com ,未注册的可以先注册阿里云账户

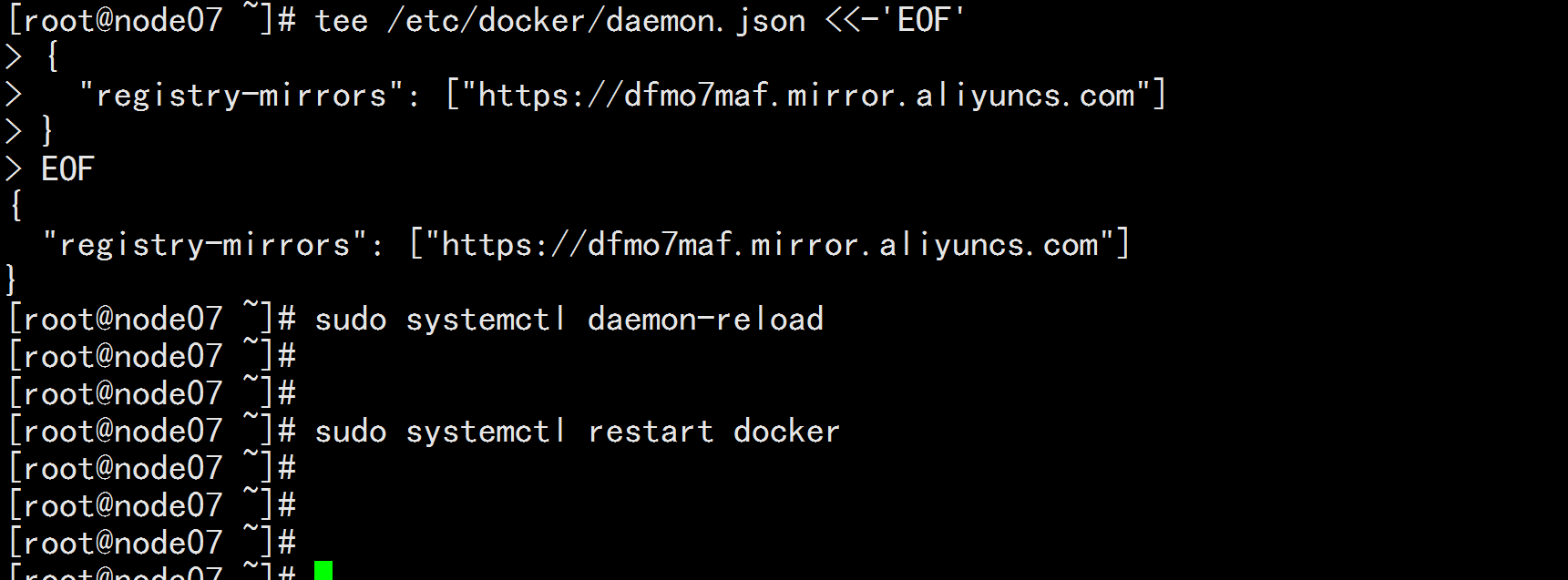

mkdir /etc/docker

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://dfmo7maf.mirror.aliyuncs.com"]

}

EOF


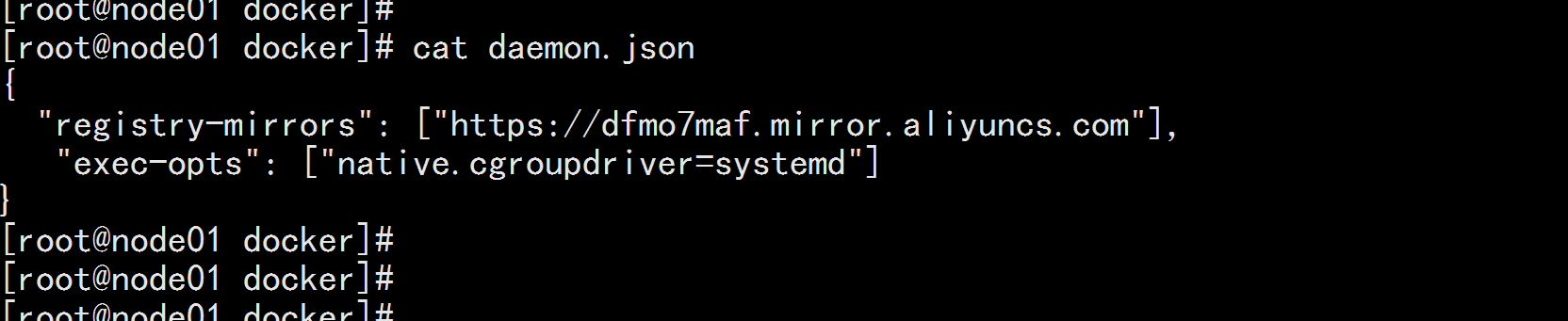

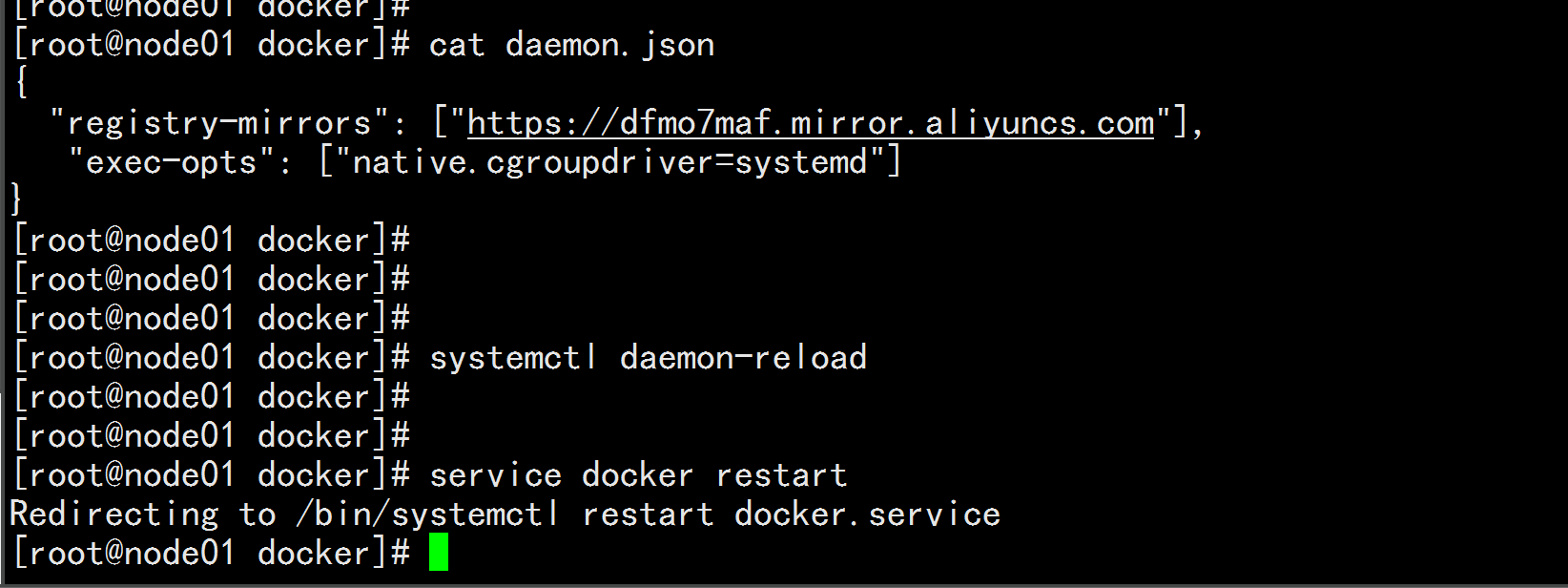

Cgroup Driver:

Edit daemon.json and add "exec-opts": ["native.cgroupdriver=systemd"]:

cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://dfmo7maf.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

Changing the cgroup driver removes this kubeadm preflight warning:

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

Reload and restart Docker:

systemctl daemon-reload

systemctl restart docker

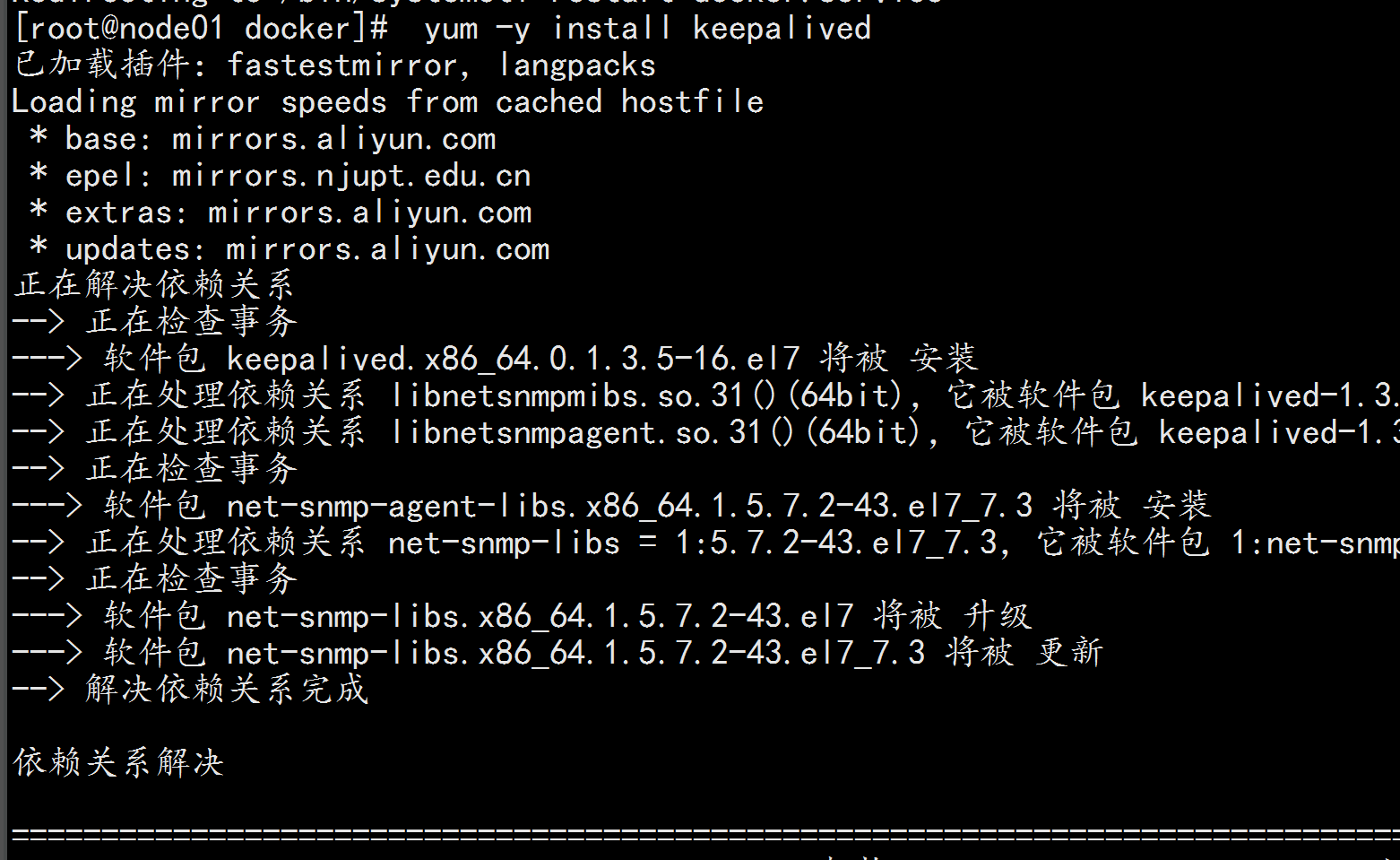

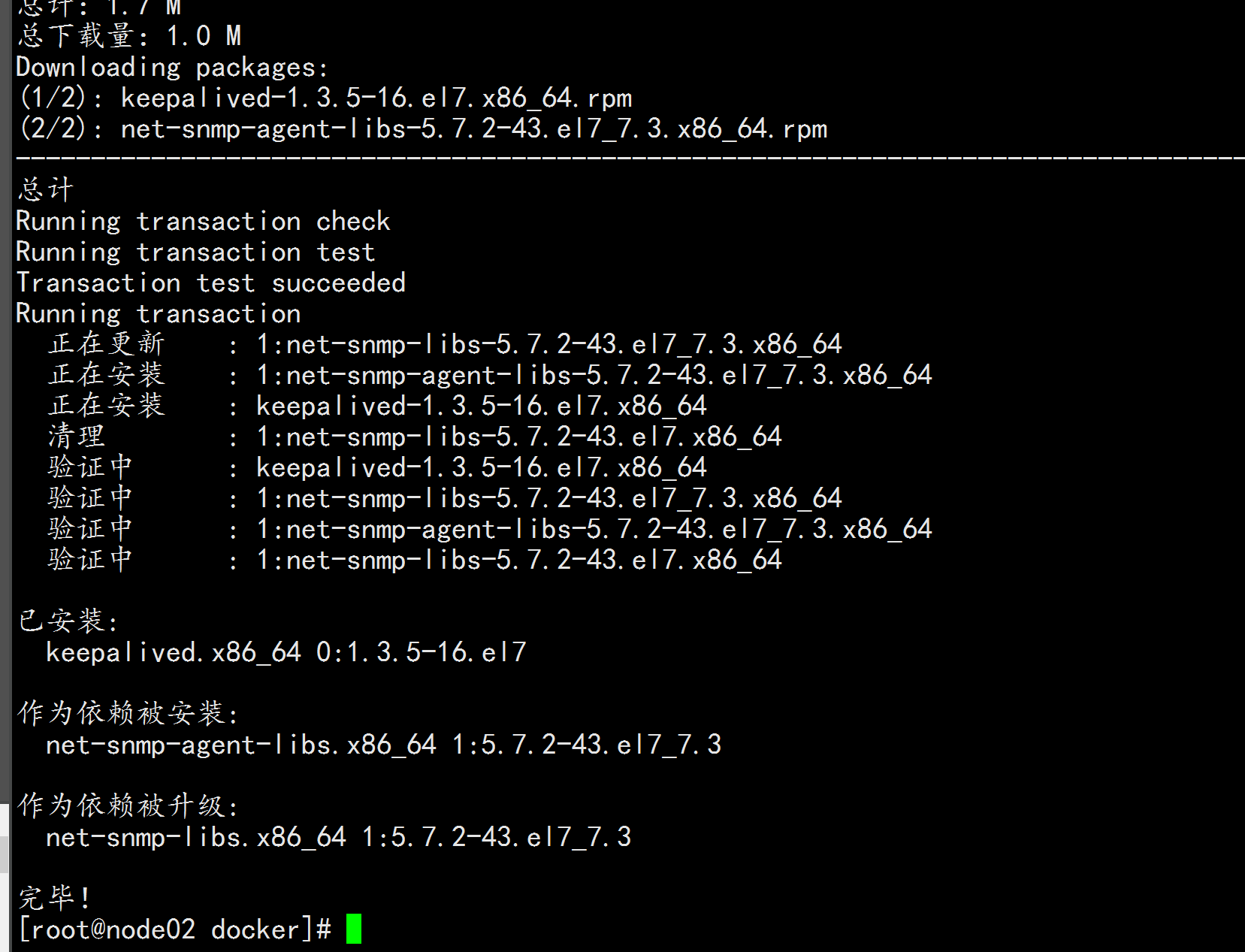

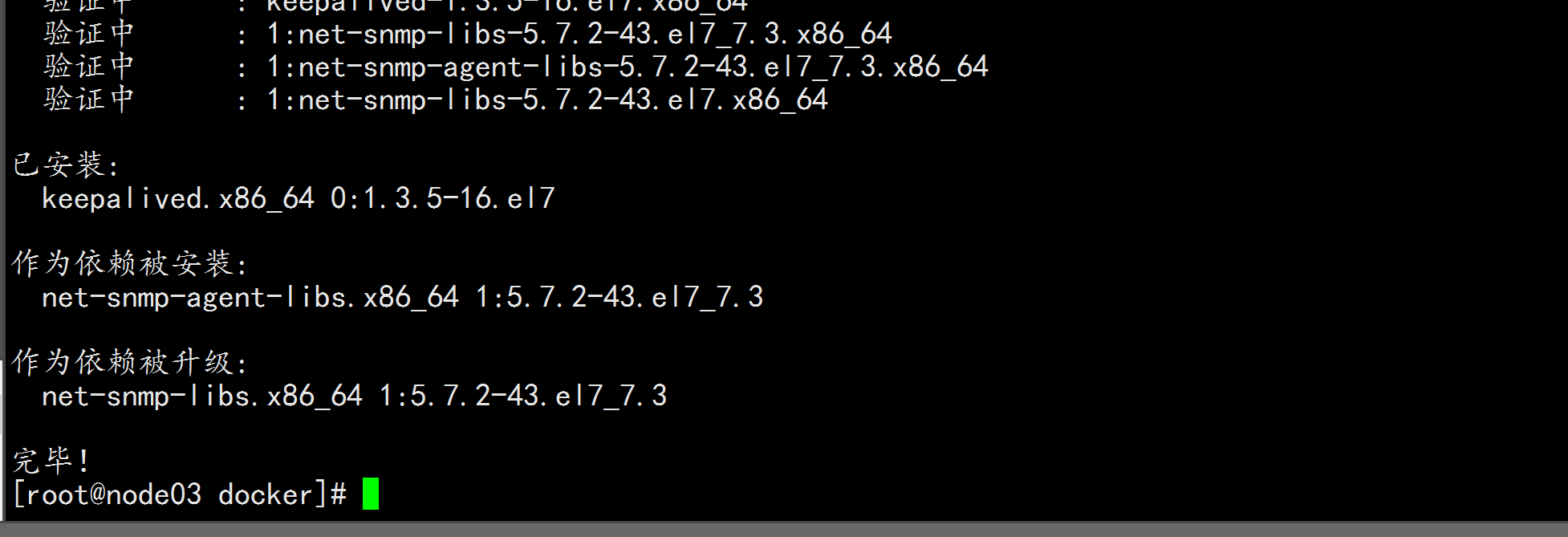
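daemon.json is written several times in this section, and each write replaces the previous file, so it is easy to end up without the cgroup driver or the log options. The sketch below writes one merged file in a single step. `write_daemon_json` is a hypothetical helper; the keys are the ones used above, and the insecure-registries entry from the first version is left out here, so add it back if it is needed.

```shell
#!/usr/bin/env bash
# write_daemon_json: write one daemon.json holding the cgroup driver,
# the log options, and the registry mirror.
# Arguments: target file, mirror URL.
write_daemon_json() {
  local target="$1" mirror="$2"
  mkdir -p "$(dirname "$target")"
  cat > "$target" <<EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": { "max-size": "100m" },
  "registry-mirrors": ["$mirror"]
}
EOF
}

# Usage on a node (validate with jq, restart, then read back the driver):
#   write_daemon_json /etc/docker/daemon.json https://dfmo7maf.mirror.aliyuncs.com
#   jq . /etc/docker/daemon.json && systemctl restart docker
#   docker info --format '{{.CgroupDriver}}'
```

Validating the file before the restart matters because Docker does not start when daemon.json is not valid JSON.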

4: Install keepalived

Run this section on all control plane nodes.

Install keepalived:

yum install -y keepalived

keepalived configuration

Configuration on node01.flyfish:

cat /etc/keepalived/keepalived.conf

---

! Configuration File for keepalived

global_defs {

router_id node01.flyfish

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 50

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.100.100

}

}

---

Configuration on node02.flyfish:

cat /etc/keepalived/keepalived.conf

---

! Configuration File for keepalived

global_defs {

router_id node02.flyfish

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 50

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.100.100

}

}

---

Configuration on node03.flyfish:

cat /etc/keepalived/keepalived.conf

---

! Configuration File for keepalived

global_defs {

router_id node03.flyfish

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 50

priority 90

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.100.100

}

}

---

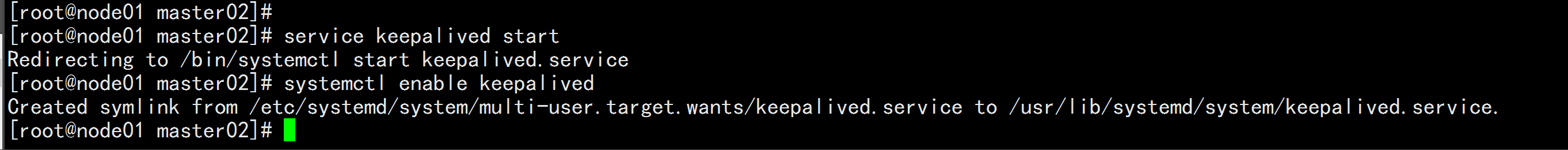
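The three configurations above differ only in router_id, state, and priority. Assuming that stays true, they could be generated from one template, as in this sketch; `render_keepalived` is a hypothetical helper, and the interface, virtual_router_id, password, and VIP are copied from the files above.

```shell
#!/usr/bin/env bash
# render_keepalived: print a keepalived.conf for one control plane node.
# Arguments: router_id, state (MASTER or BACKUP), priority.
render_keepalived() {
  local router_id="$1" state="$2" priority="$3"
  cat <<EOF
! Configuration File for keepalived
global_defs {
    router_id ${router_id}
}
vrrp_instance VI_1 {
    state ${state}
    interface ens33
    virtual_router_id 50
    priority ${priority}
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 1111
    }
    virtual_ipaddress {
        192.168.100.100
    }
}
EOF
}

# Preview the node01 file; on node02 one would run, for example:
#   render_keepalived node02.flyfish BACKUP 90 > /etc/keepalived/keepalived.conf
render_keepalived node01.flyfish MASTER 100
```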

所有control plane启动keepalived服务并设置开机启动

service keepalived start

systemctl enable keepalived

![image_1e5f5qva9i6uefa1skn1n9thr1cb.png-54.9kB]()

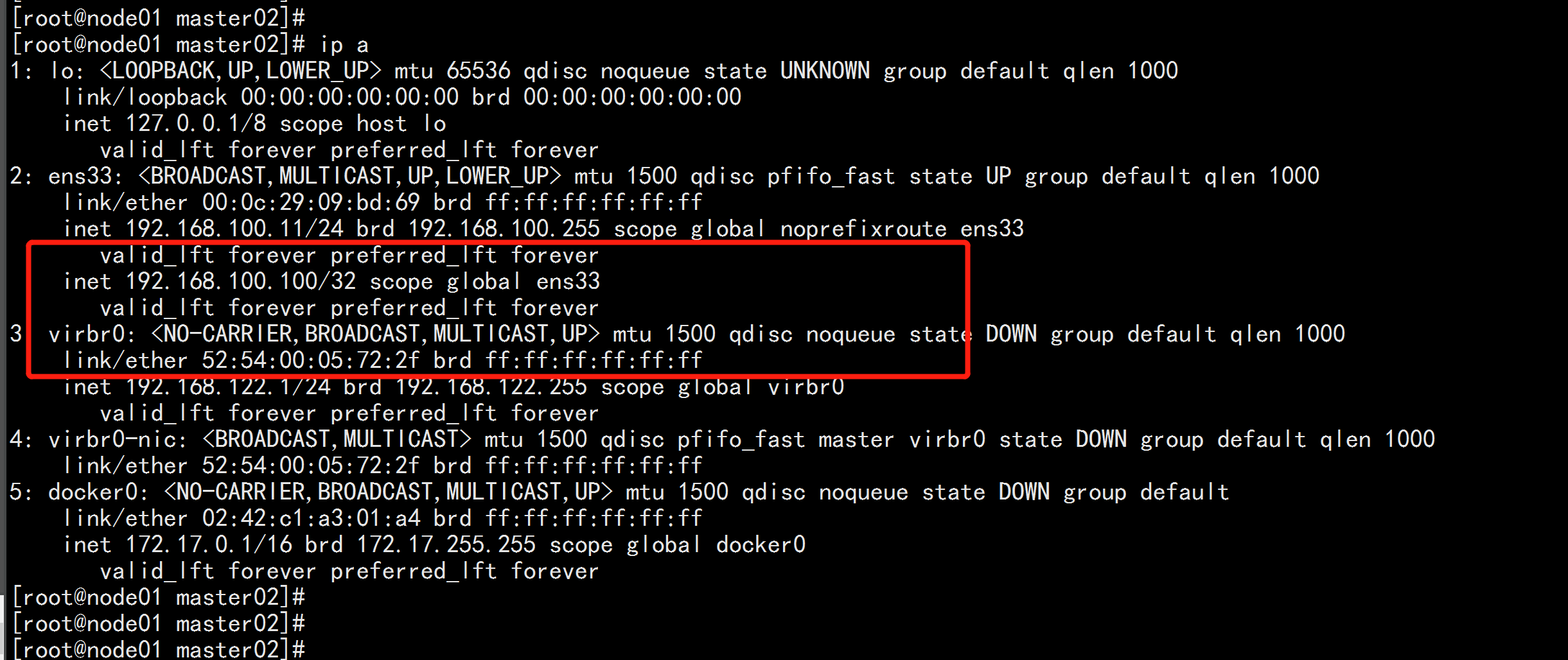

vip在node01.flyfish上

![image_1e5f5s1if17p21t0j112u7fo1l7qco.png-213.9kB]()
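Which node currently holds the VIP can be read from the interface addresses. The sketch below wraps that check; `has_vip` is a hypothetical helper that parses the one-line-per-address output of `ip -o -4 addr show`.

```shell
#!/usr/bin/env bash
# has_vip: read `ip -o -4 addr show` output on stdin and succeed
# when the given address is configured on some interface.
# In that output the fourth field is the address in CIDR form.
has_vip() {
  local vip="$1"
  awk -v vip="$vip" '{ split($4, a, "/"); if (a[1] == vip) found = 1 } END { exit !found }'
}

# Usage on a control plane node:
#   ip -o -4 addr show dev ens33 | has_vip 192.168.100.100 && echo "VIP is here"
```

A simple failover test, under the configuration above, is to stop keepalived on node01.flyfish and run the same check on node02.flyfish and node03.flyfish.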

5: Install Kubernetes

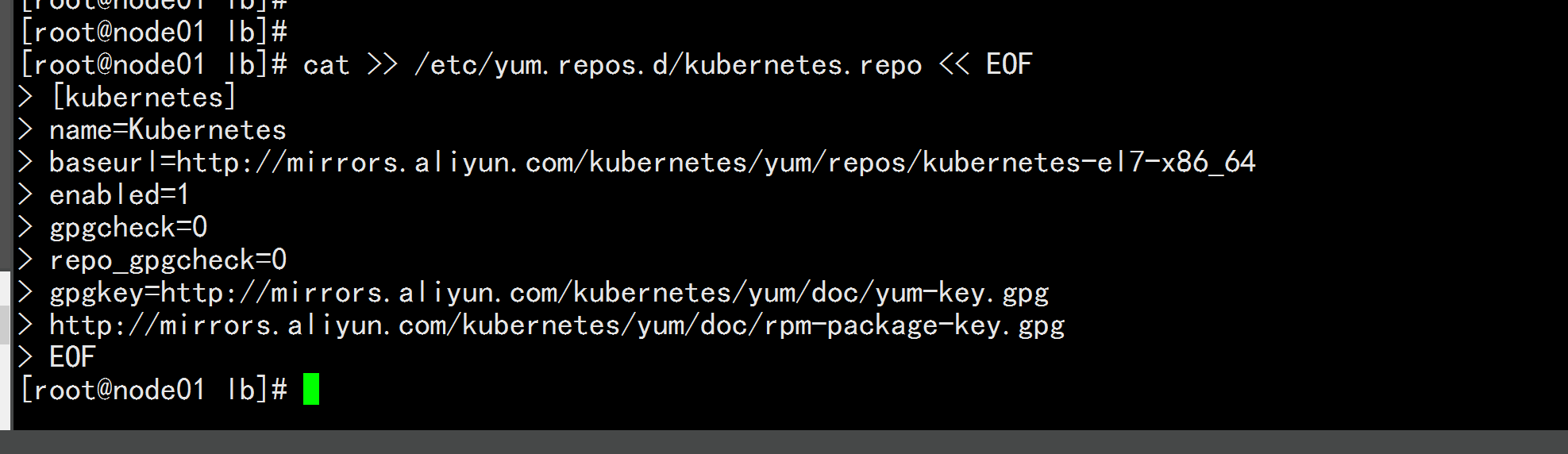

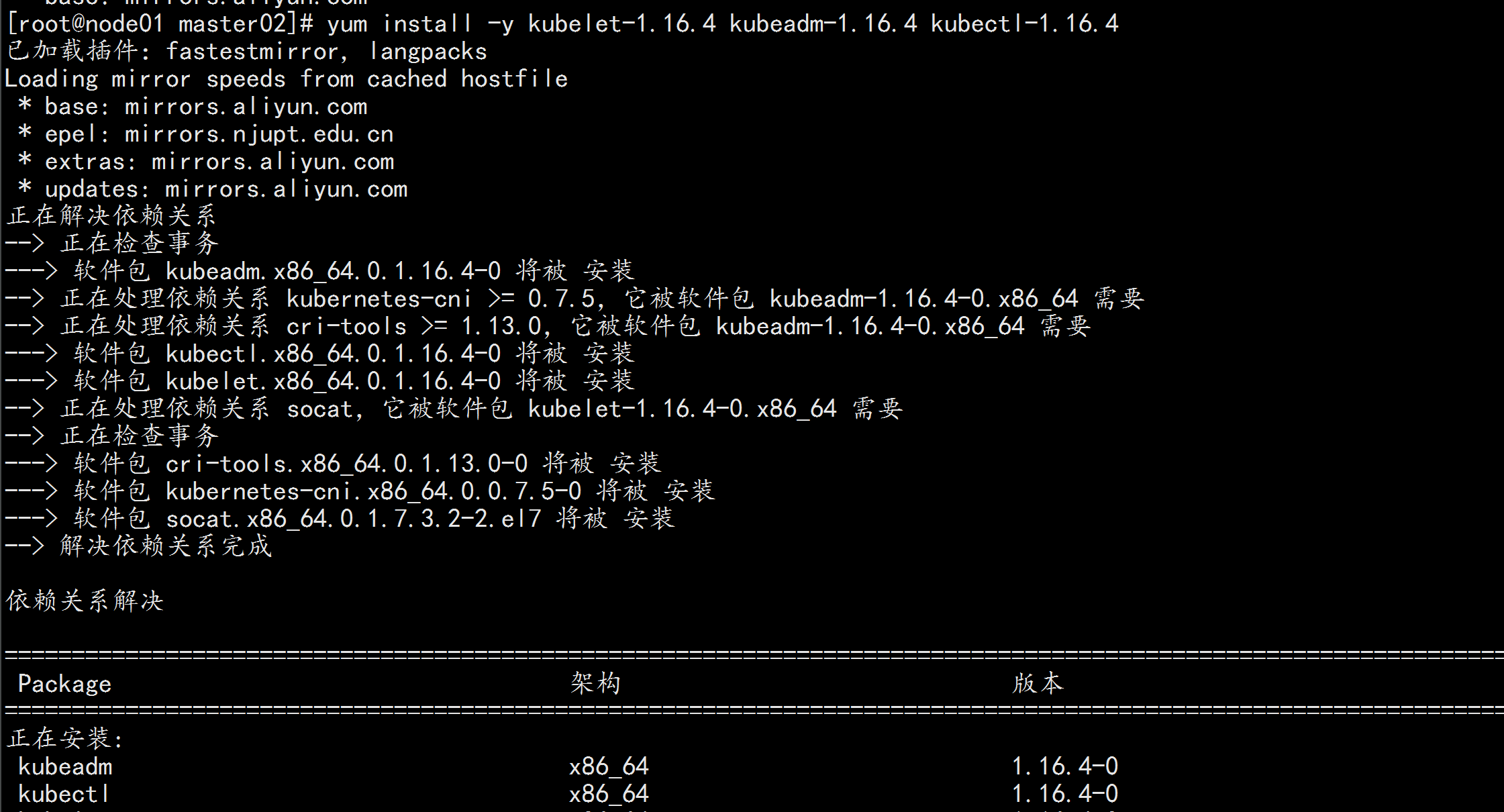

5.1 Install kubeadm (control plane and worker nodes)

Run this section on all control plane and worker nodes.

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

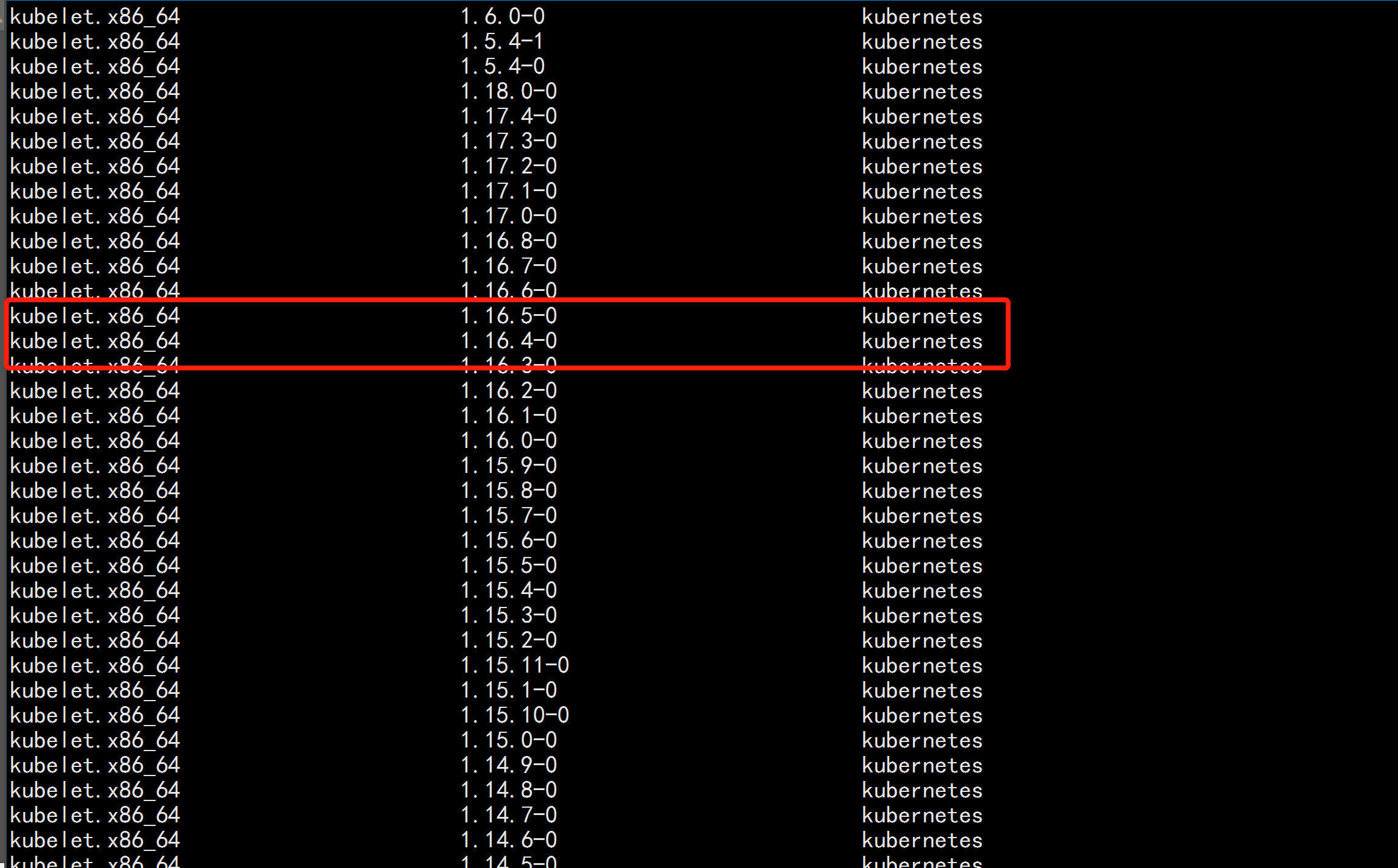

yum list kubelet --showduplicates | sort -r

This article installs kubelet 1.16.4, which supports Docker 1.13.1, 17.03, 17.06, 17.09, 18.06, and 18.09.

yum -y install kubeadm-1.16.4 kubectl-1.16.4 kubelet-1.16.4

---

kubelet runs on every node in the cluster and starts Pods and containers.

kubeadm is the command-line tool that initializes and bootstraps the cluster.

kubectl is the command-line client for talking to the cluster: it deploys and manages applications, inspects resources, and creates, deletes, and updates components.

---

Enable and start kubelet:

systemctl enable kubelet && systemctl start kubelet

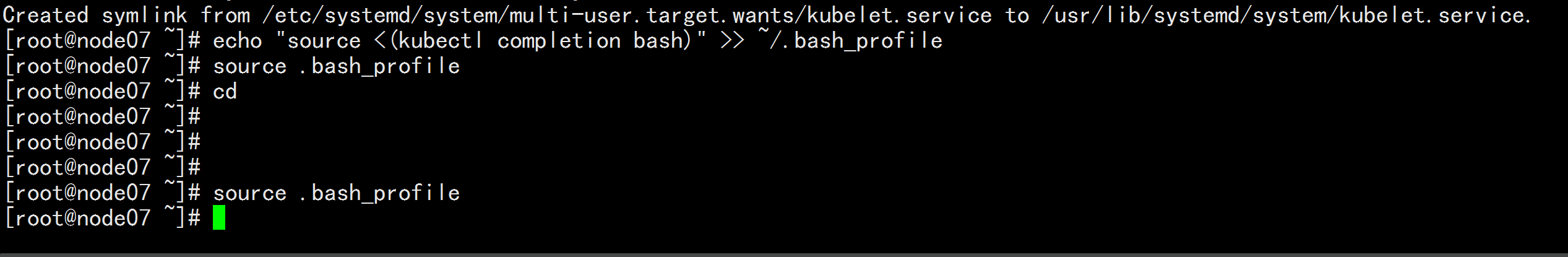

kubectl command completion

echo "source <(kubectl completion bash)" >> ~/.bash_profile

source ~/.bash_profile

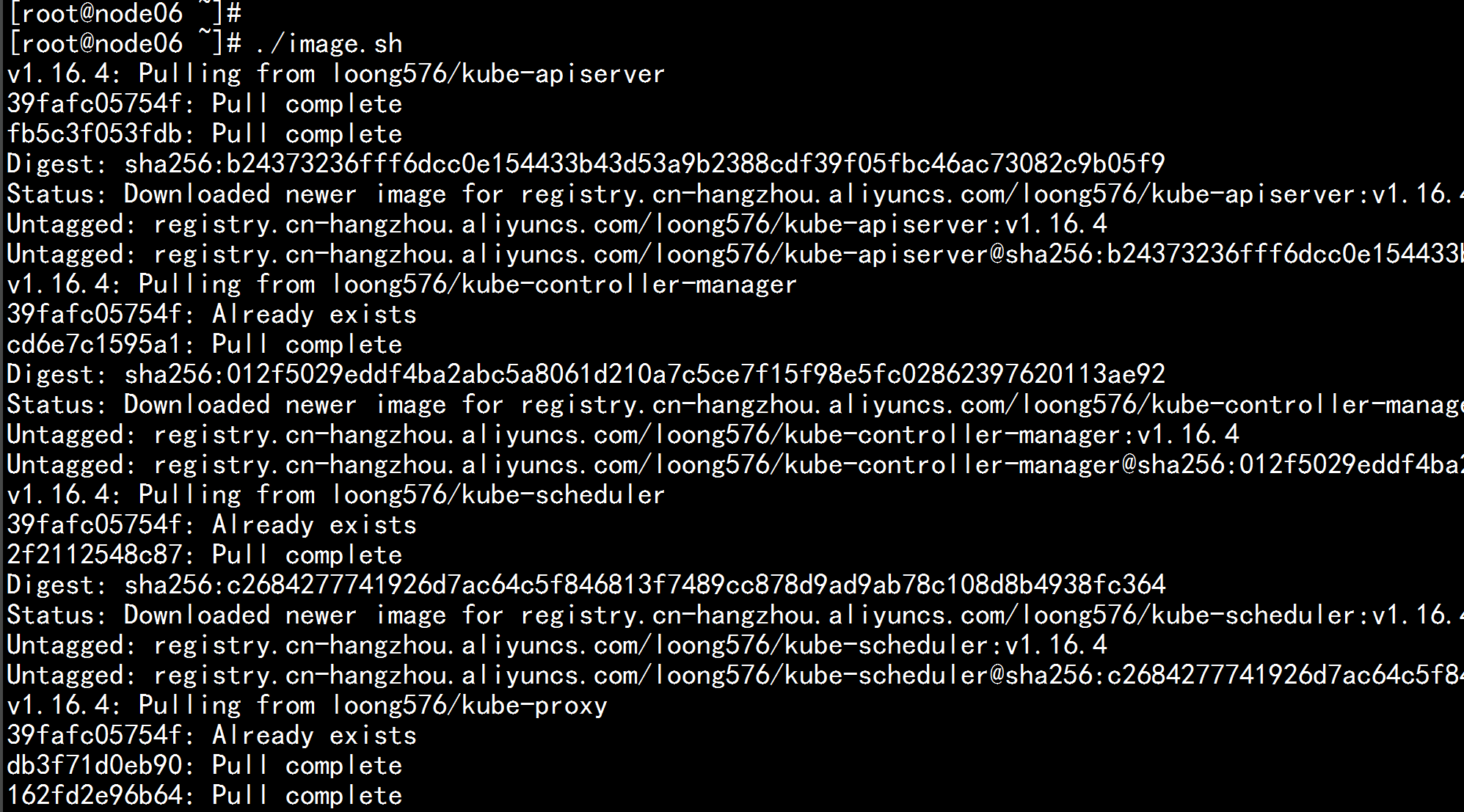

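5.2 Pull the images

Image download script:

Nearly all Kubernetes components and Docker images are hosted on Google's own registry, which may be unreachable from some networks. The workaround used here is to pull the images from an Aliyun registry and then retag them locally with the default image names. This article does that with the image.sh script.

Create the script:

vim image.sh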

---

#!/bin/bash

url=registry.cn-hangzhou.aliyuncs.com/loong576

version=v1.16.4

images=(`kubeadm config images list --kubernetes-version=$version|awk -F '/' '{print $2}'`)

for imagename in ${images[@]} ; do

docker pull $url/$imagename

docker tag $url/$imagename k8s.gcr.io/$imagename

docker rmi -f $url/$imagename

done

---

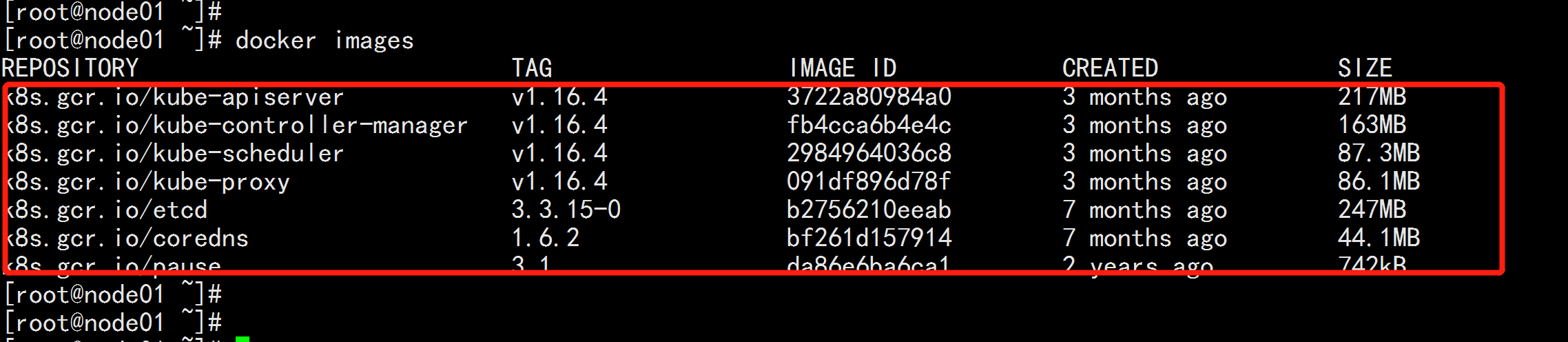

chmod +x image.sh && ./image.sh

docker images

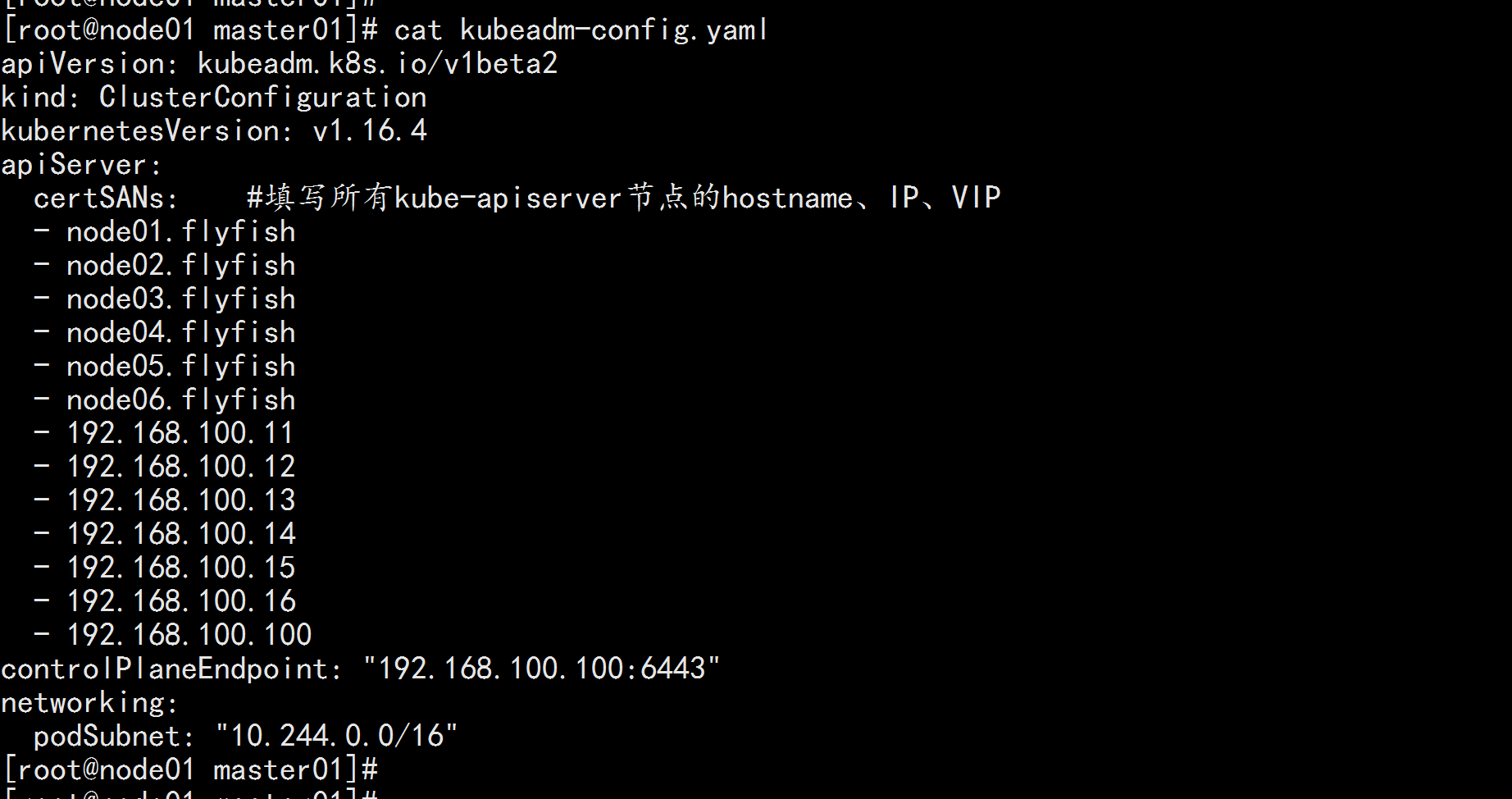
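image.sh pulls each image from the mirror, retags it with its default k8s.gcr.io name, and removes the mirror tag. To see what it would do before pulling anything, the mapping can be printed as a dry run. In this sketch `mirror_name` and `plan_pulls` are hypothetical helpers; the mirror repository is the one used in image.sh.

```shell
#!/usr/bin/env bash
# mirror_name: map a default image reference (REGISTRY/NAME:TAG)
# to the same NAME:TAG under a mirror repository.
mirror_name() {
  local image="$1" mirror="$2"
  printf '%s/%s\n' "$mirror" "${image#*/}"
}

# plan_pulls: read image references on stdin and print the docker commands
# that image.sh would run, without executing them.
plan_pulls() {
  local mirror="$1" image src
  while read -r image; do
    [ -n "$image" ] || continue
    src=$(mirror_name "$image" "$mirror")
    echo "docker pull $src"
    echo "docker tag $src $image"
    echo "docker rmi -f $src"
  done
}

# Usage on a node:
#   kubeadm config images list --kubernetes-version=v1.16.4 |
#     plan_pulls registry.cn-hangzhou.aliyuncs.com/loong576
```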

Initialize the first control plane node, node01.flyfish

cat kubeadm-config.yaml

---

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.16.4

apiServer:

  certSANs: # hostnames, IP addresses, and the VIP of every kube-apiserver node

  - node01.flyfish

  - node02.flyfish

  - node03.flyfish

  - node04.flyfish

  - node05.flyfish

  - node06.flyfish

  - 192.168.100.11

  - 192.168.100.12

  - 192.168.100.13

  - 192.168.100.14

  - 192.168.100.15

  - 192.168.100.16

  - 192.168.100.100

controlPlaneEndpoint: "192.168.100.100:6443"

networking:

  podSubnet: "10.244.0.0/16"

---

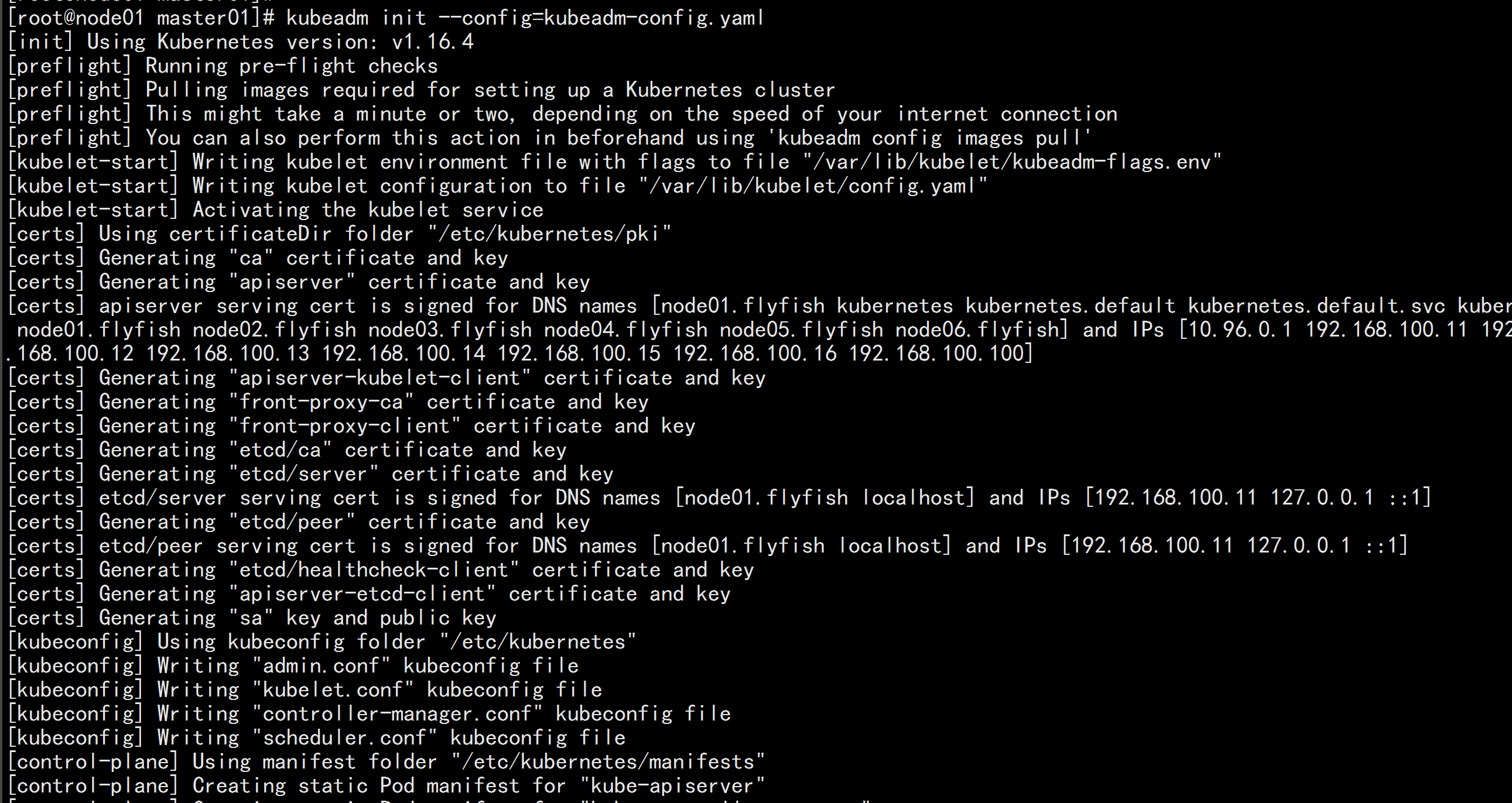

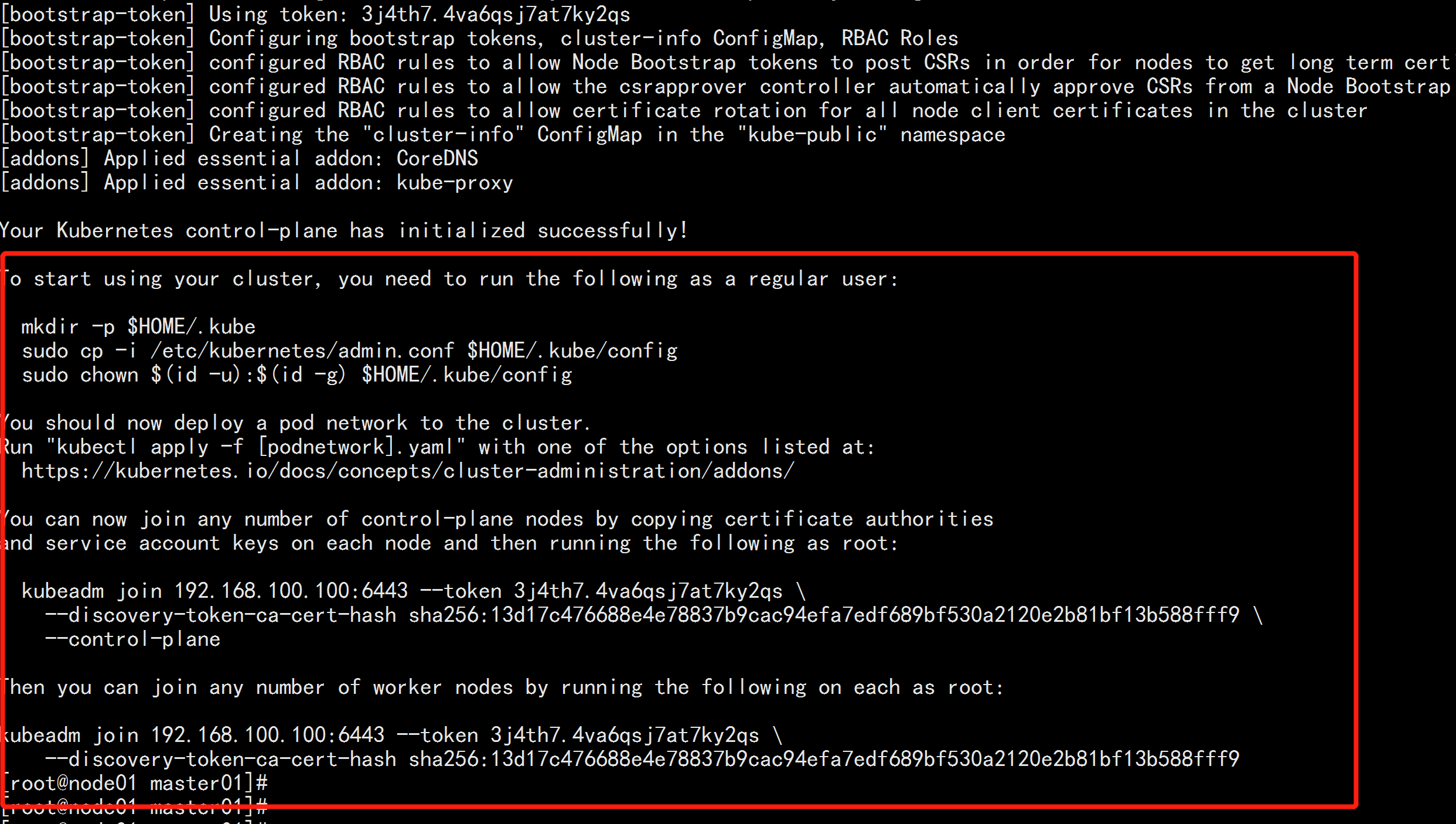

Initialize the control plane:

kubeadm init --config=kubeadm-config.yaml

---

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities

and service account keys on each node and then running the following as root:

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9 \

--control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9

---
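The bootstrap token in the join commands above expires (kubeadm's default lifetime is 24 hours), so nodes added later need a fresh one. The sketch below shows how a new join command and the CA certificate hash can be obtained on node01.flyfish. `ca_cert_hash` is a hypothetical helper around the openssl pipeline, and it assumes an RSA CA key, which is what kubeadm generates by default.

```shell
#!/usr/bin/env bash
# ca_cert_hash: print the sha256 hash of the public key in a CA certificate,
# the value that follows "sha256:" in --discovery-token-ca-cert-hash.
ca_cert_hash() {
  openssl x509 -pubkey -noout -in "${1:-/etc/kubernetes/pki/ca.crt}" |
    openssl rsa -pubin -outform der 2>/dev/null |
    openssl dgst -sha256 -hex |
    sed 's/^.* //'
}

# Usage on node01.flyfish:
#   kubeadm token create --print-join-command   # new token plus the worker join command
#   echo "sha256:$(ca_cert_hash)"
```

For a control plane node, `--control-plane` is appended to the printed command after the certificates have been copied over.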

If initialization fails, run kubeadm reset and then initialize again:

kubeadm reset

rm -rf $HOME/.kube/config

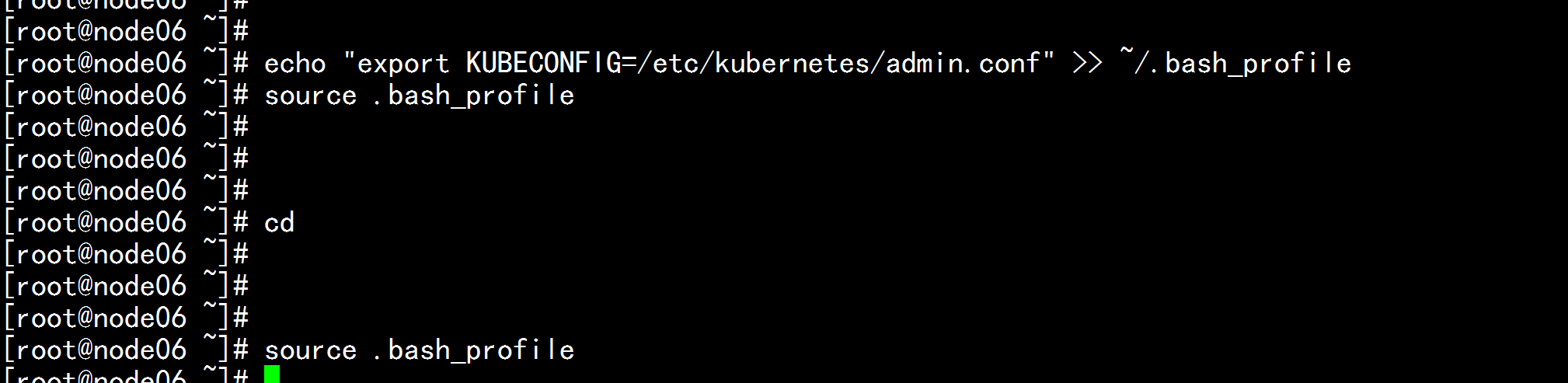

加载环境变量

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source .bash_profile

![image_1e5f80l981ecc1e512ti1d0hshahd.png-77.2kB]()

本文所有操作都在root用户下执行,若为非root用户,则执行如下操作:

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

安装flannel网络

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/2140ac876ef134e0ed5af15c65e414cf26827915/Documentation/kube-flannel.yml

kubectl apply -f kube-flannel.yml

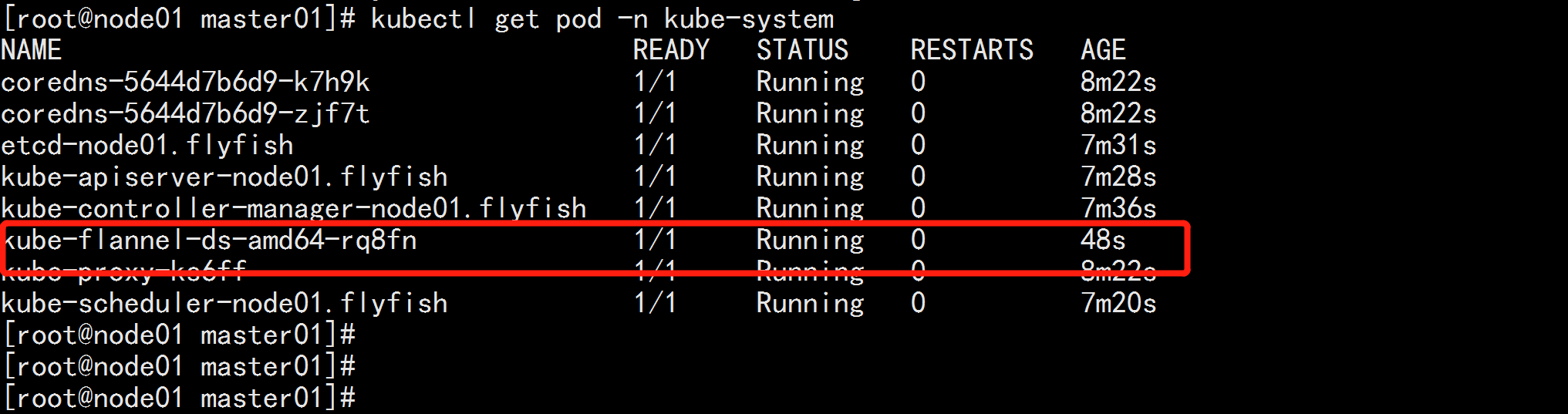

kubectl get pod -n kube-system

![image_1e5f86k9f1mssv9e1o4s14v51bkvhq.png-97.2kB]()

5.3 control plane节点加入集群

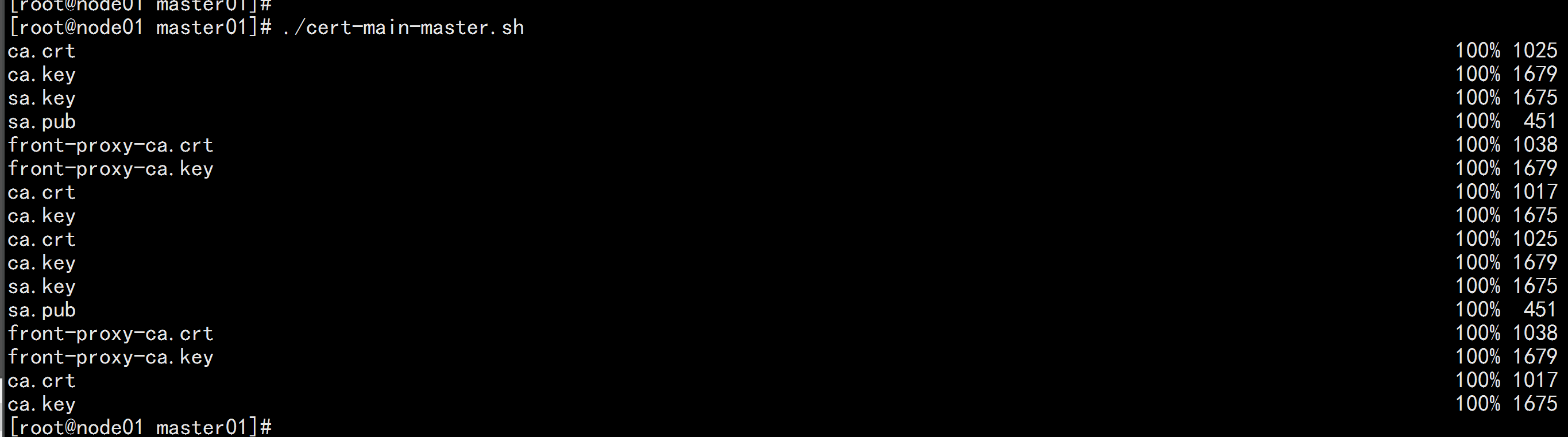

证书分发

在node01.flyfish 上面执行 脚本:cert-main-master.sh

vim cert-main-master.sh

---

#!/bin/bash

USER=root # customizable

CONTROL_PLANE_IPS="192.168.100.12 192.168.100.13"

for host in ${CONTROL_PLANE_IPS}; do

scp /etc/kubernetes/pki/ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.key "${USER}"@$host:

scp /etc/kubernetes/pki/sa.pub "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.crt "${USER}"@$host:

scp /etc/kubernetes/pki/front-proxy-ca.key "${USER}"@$host:

scp /etc/kubernetes/pki/etcd/ca.crt "${USER}"@$host:etcd-ca.crt

# Comment out the next line if you are using external etcd

scp /etc/kubernetes/pki/etcd/ca.key "${USER}"@$host:etcd-ca.key

done

---

chmod +x cert-main-master.sh && ./cert-main-master.sh

Log in to node02.flyfish and move the certificates into place:

cd /root

mkdir -p /etc/kubernetes/pki

mv *.crt *.key *.pub /etc/kubernetes/pki/

cd /etc/kubernetes/pki

mkdir etcd

mv etcd-* etcd

cd etcd

mv etcd-ca.key ca.key

mv etcd-ca.crt ca.crt

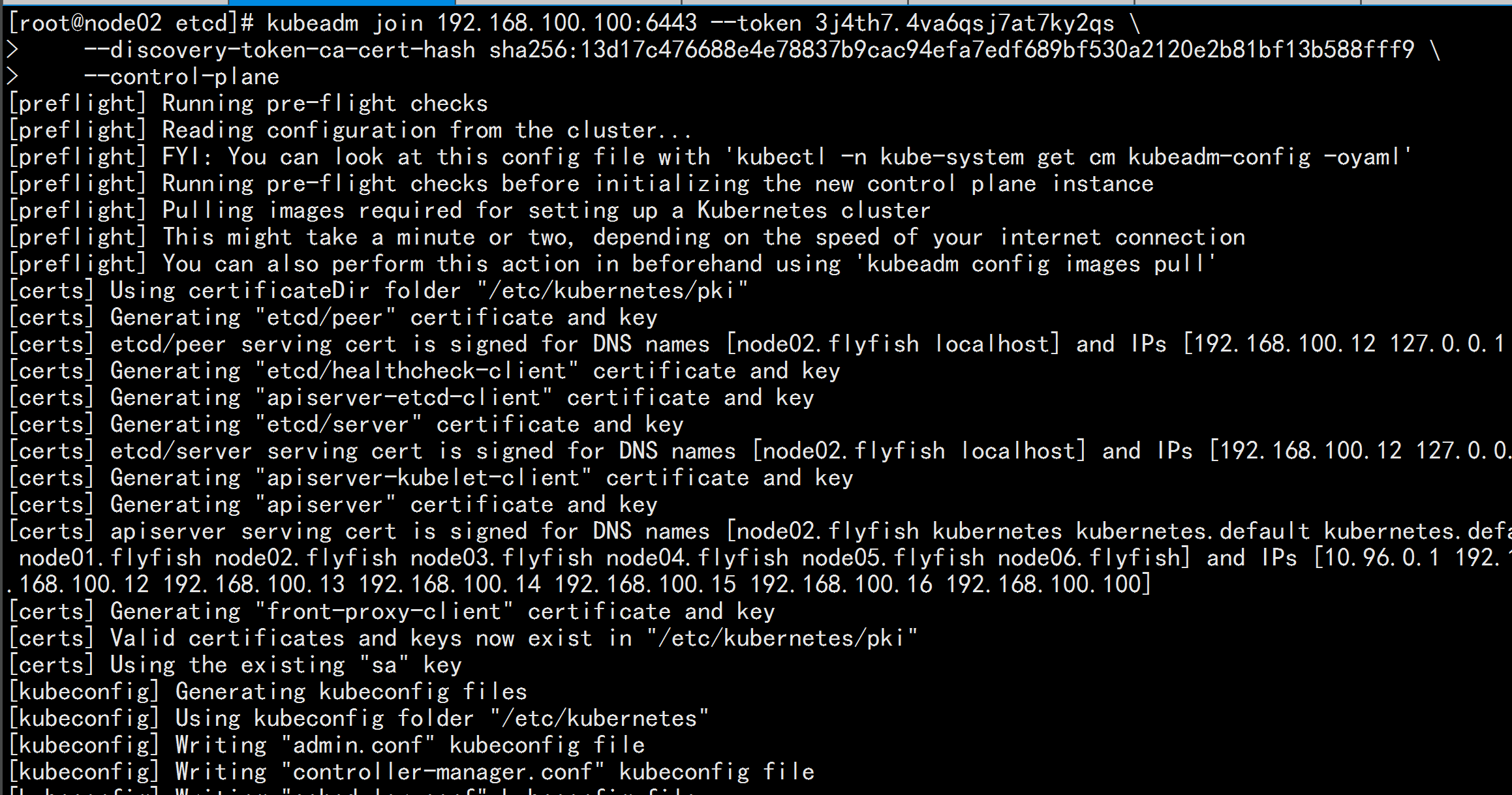
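The move-and-rename steps above are repeated by hand on each joining control plane node, and the wildcards also pick up any unrelated .crt, .key, or .pub file in /root. A sketch that names each file explicitly is shown below; `place_certs` is a hypothetical helper, and the file names are the ones copied by cert-main-master.sh.

```shell
#!/usr/bin/env bash
# place_certs: move the files copied by cert-main-master.sh from a source
# directory into the kubeadm PKI layout under a target directory.
# Arguments: source directory, target pki directory.
place_certs() {
  local src="$1" pki="$2"
  mkdir -p "$pki/etcd"
  # The etcd CA pair is renamed back to ca.crt / ca.key inside etcd/.
  mv "$src/etcd-ca.crt" "$pki/etcd/ca.crt"
  mv "$src/etcd-ca.key" "$pki/etcd/ca.key"
  mv "$src"/ca.crt "$src"/ca.key "$src"/sa.key "$src"/sa.pub \
     "$src"/front-proxy-ca.crt "$src"/front-proxy-ca.key "$pki/"
}

# Usage on node02.flyfish and node03.flyfish:
#   place_certs /root /etc/kubernetes/pki
```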

Join node02.flyfish to the cluster as a control plane node:

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9 \

--control-plane

![image_1e5fc8nh61m0a1rd8140d1d7n1ehgls.png-206.2kB]()

![image_1e5fc971o10lufhalg47mi1gf5m9.png-255.6kB]()

![image_1e5fc9jav1jc6r5o11g9au7nj2mm.png-171kB]()

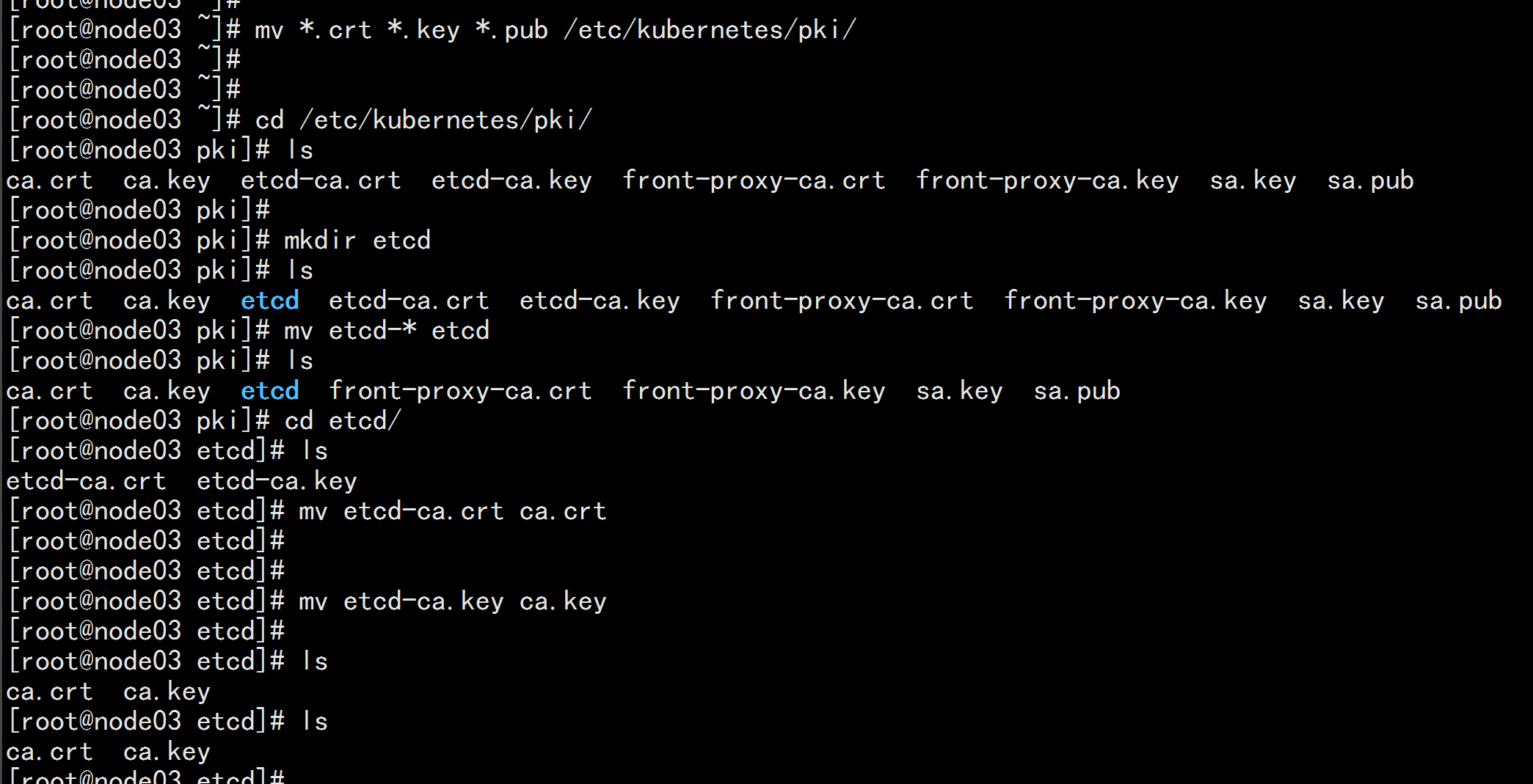

登录 node03.flyfish

cd /root

mkdir -p /etc/kubernetes/pki

mv *.crt *.key *.pub /etc/kubernetes/pki/

cd /etc/kubernetes/pki

mkdir etcd

mv etcd-* etcd

cd etcd

mv etcd-ca.key ca.key

mv etcd-ca.crt ca.crt

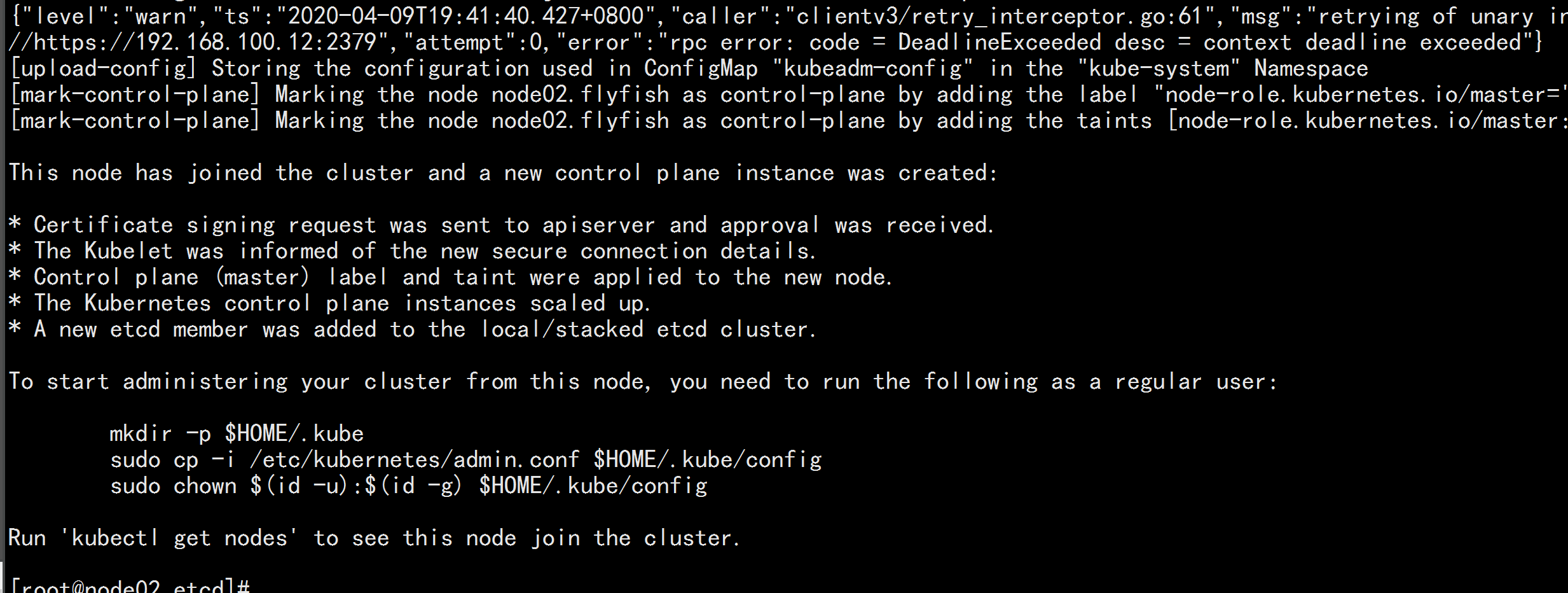

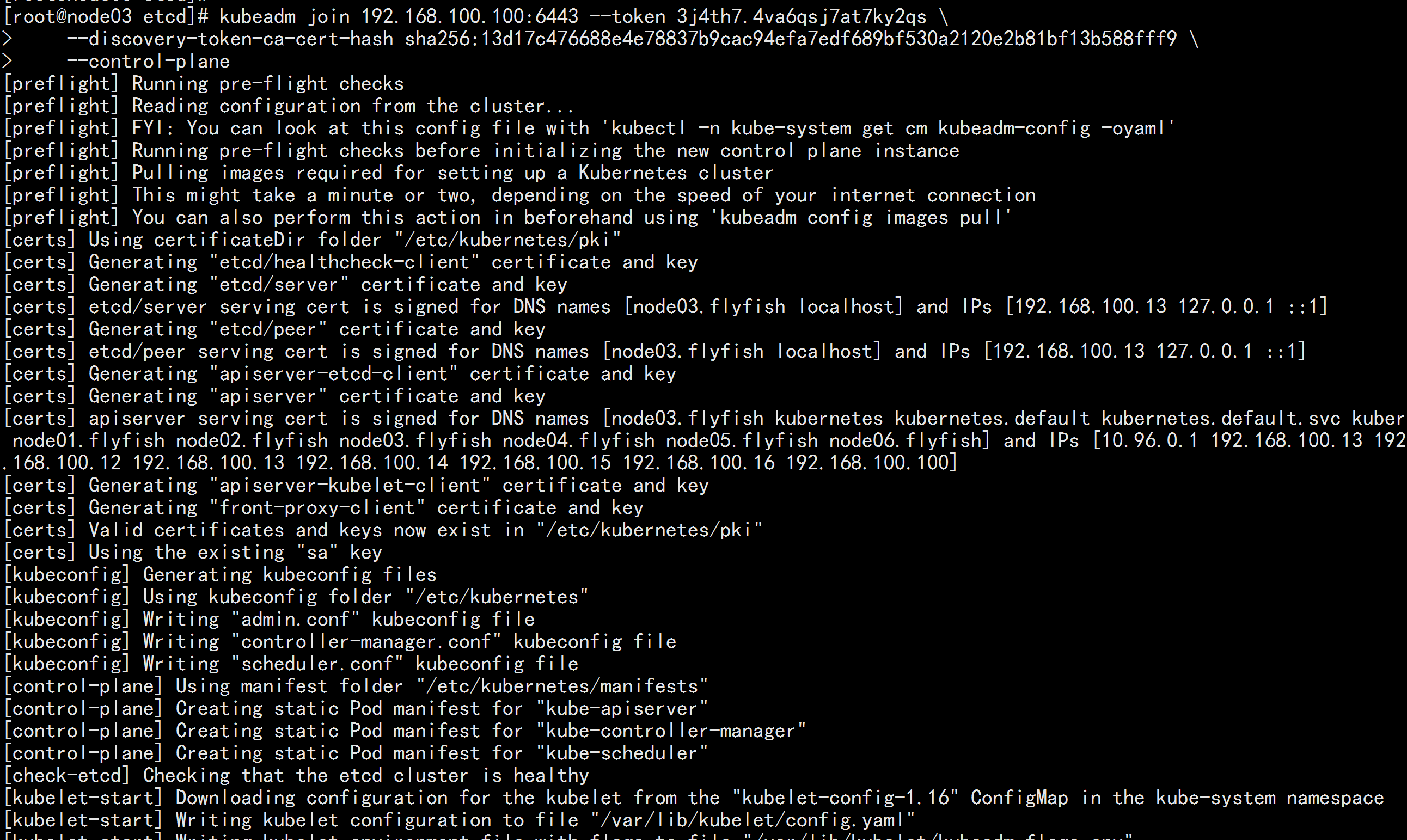

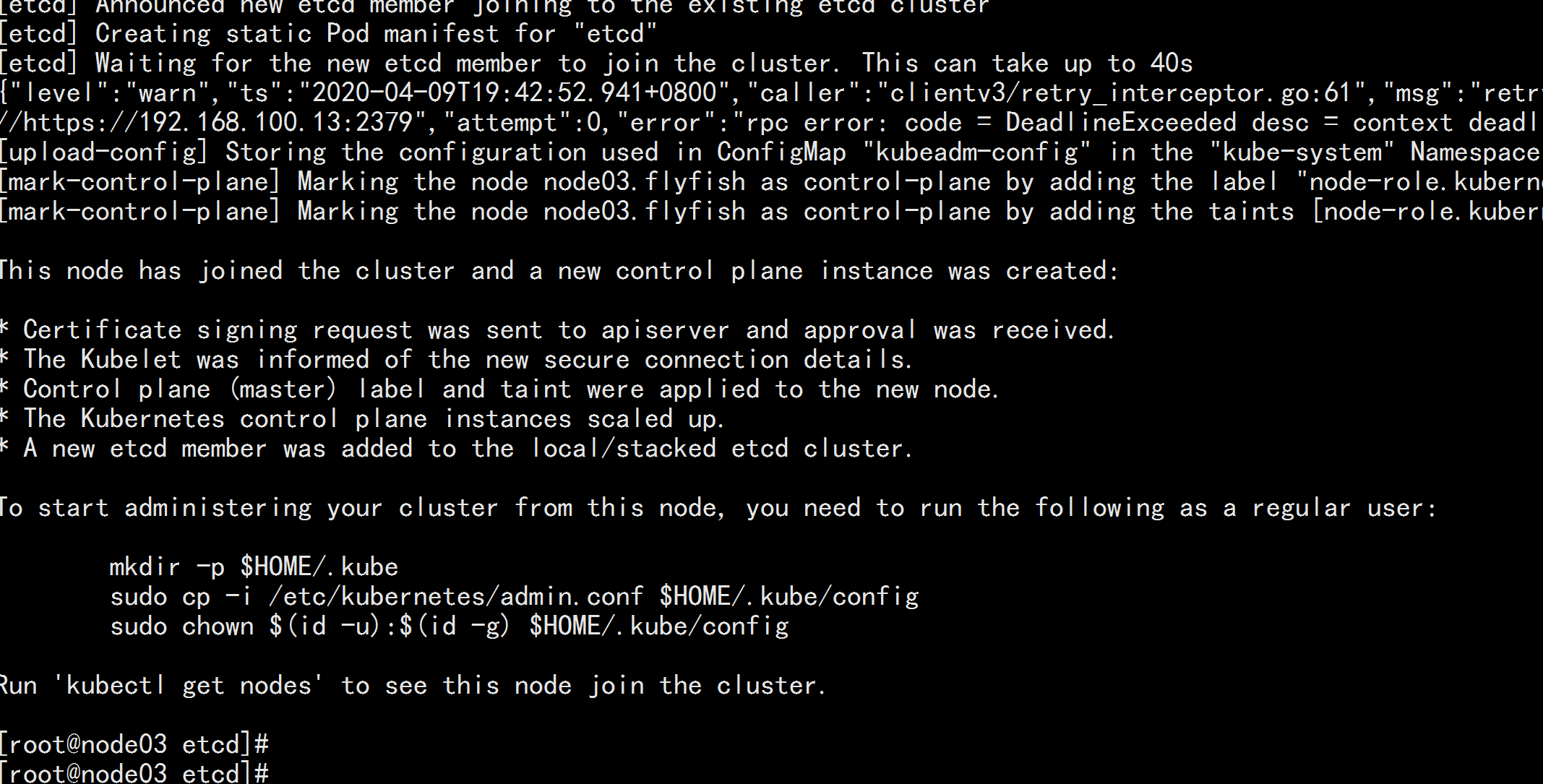

Join node03.flyfish to the cluster as a control plane node:

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9 \

--control-plane

![image_1e5fcarkl96k1spn1n1ghfn1llln3.png-166.6kB]()

![image_1e5fcbd8s17di1ahbkia1hksn0png.png-338kB]()

![image_1e5fcbsfk8fk13ho1euk6l52o1nt.png-190.1kB]()

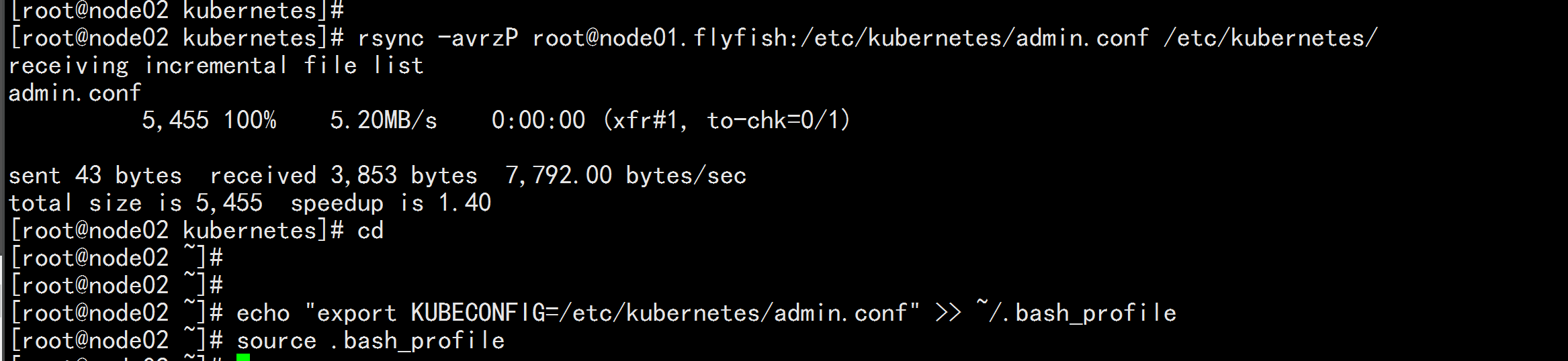

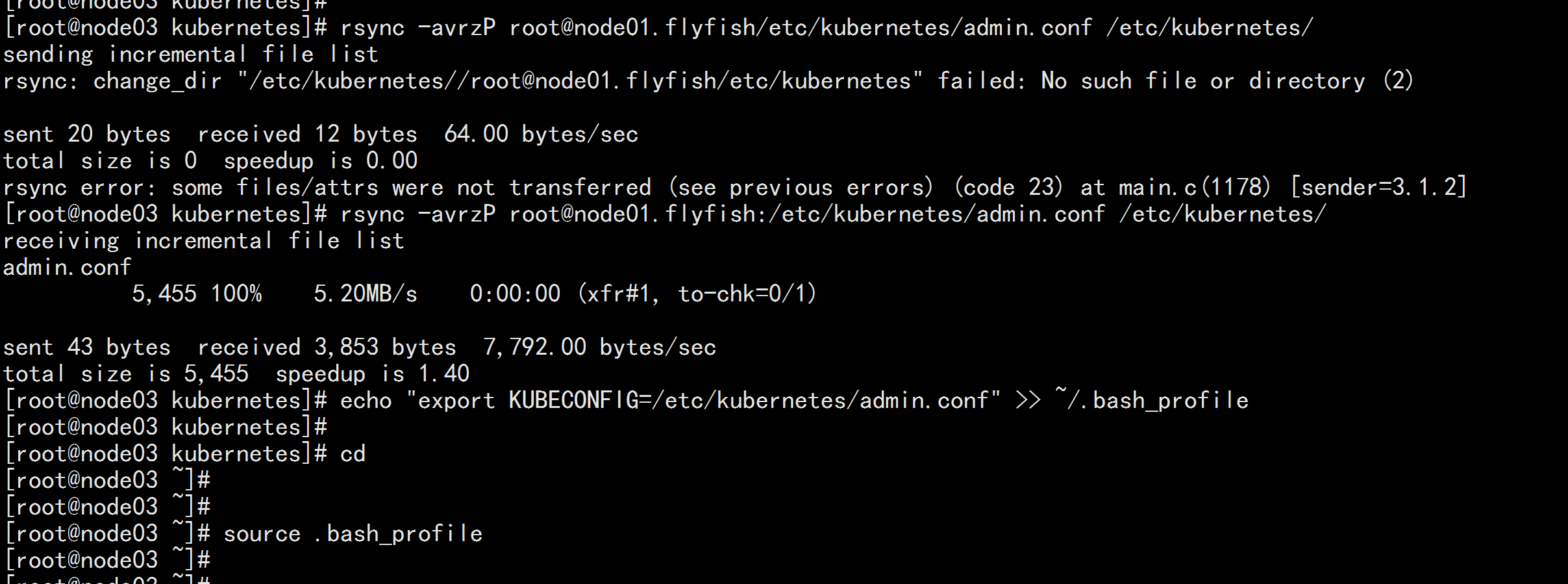

node02.flyfish 与node03.flyfis 加载 环境变量

rsync -avrzP root@node01.flyfish:/etc/kubernetes/admin.conf /etc/kubernetes/

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source .bash_profile

![image_1e5fatlbr198bqcko0pob13vql2.png-95.7kB]()

![image_1e5fat8t01bu91dknddcih41ghrkl.png-166.4kB]()

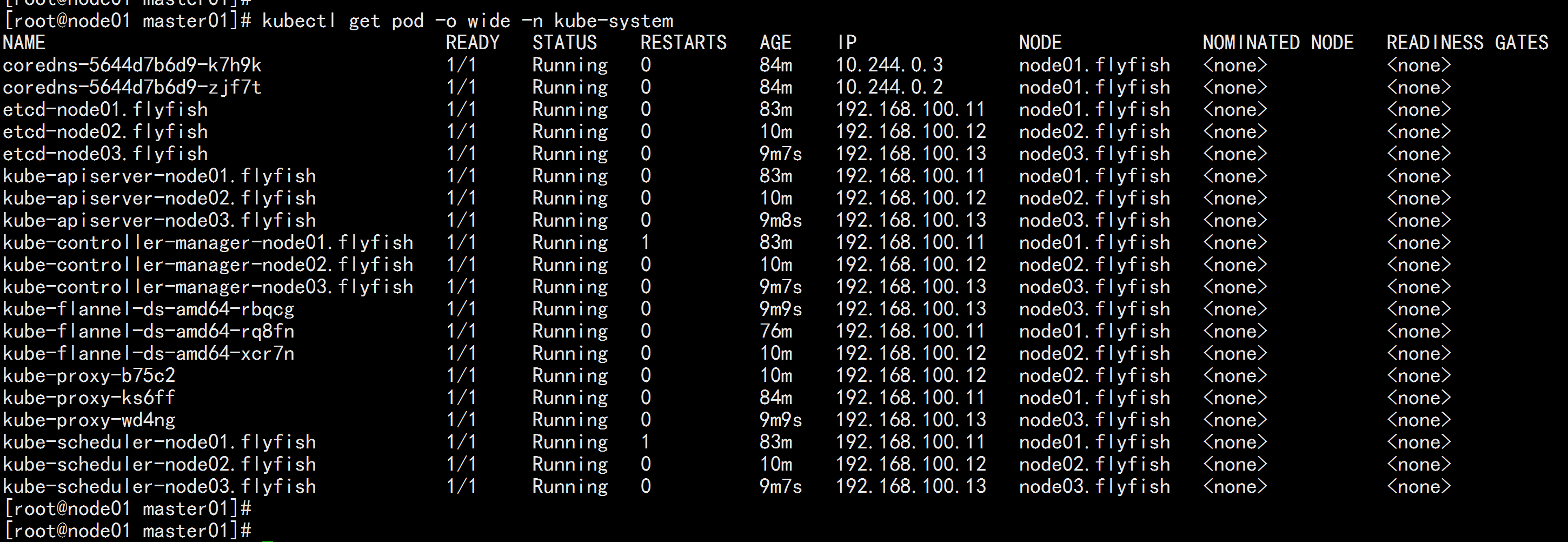

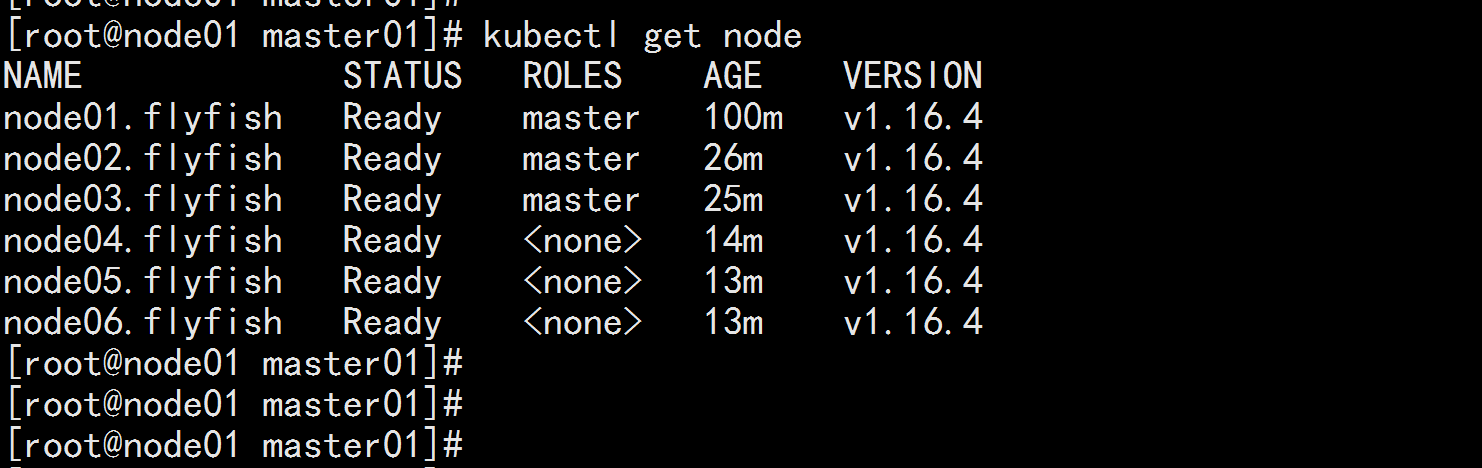

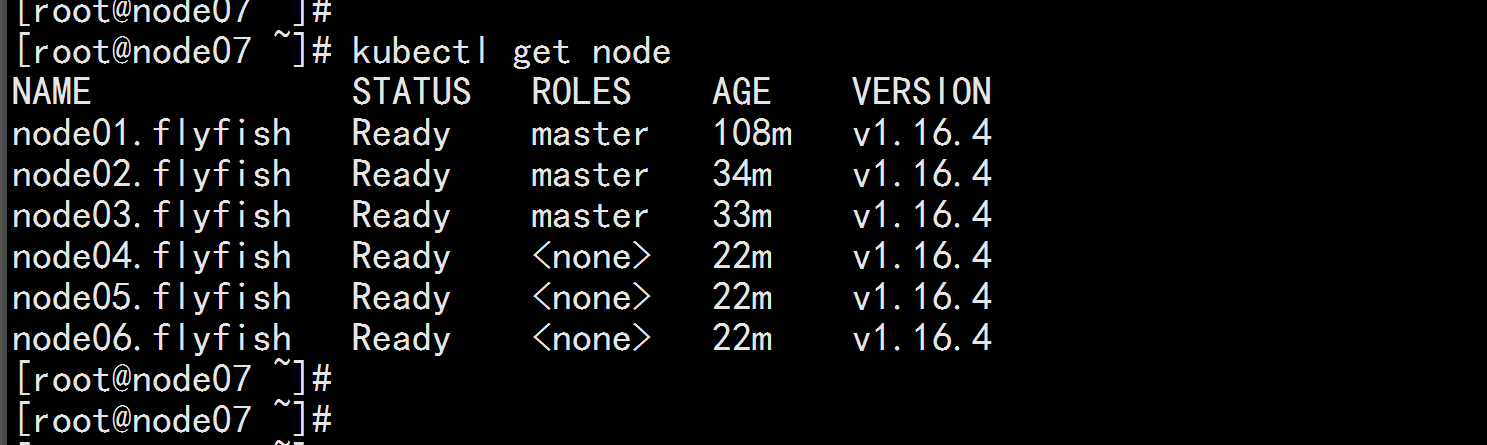

Check the nodes:

kubectl get node

kubectl get pod -o wide -n kube-system

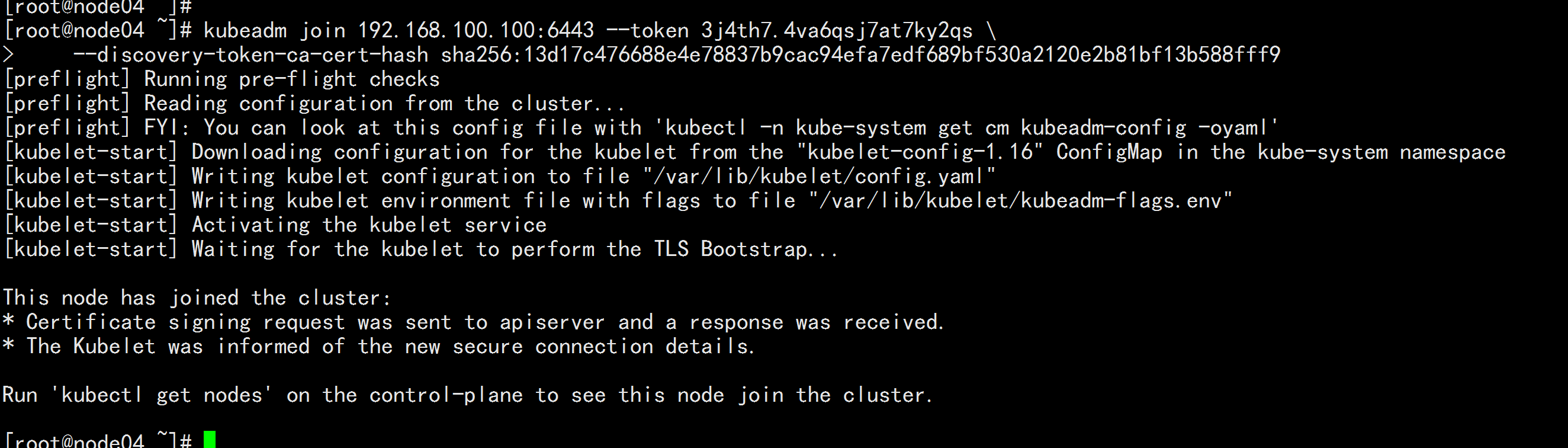

5.4 Join the worker nodes

Join node04.flyfish to the cluster:

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9

Join node05.flyfish to the cluster:

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9

![image_1e5fcnb0u15k61e3r42310ca1c1jph.png-150.2kB]()

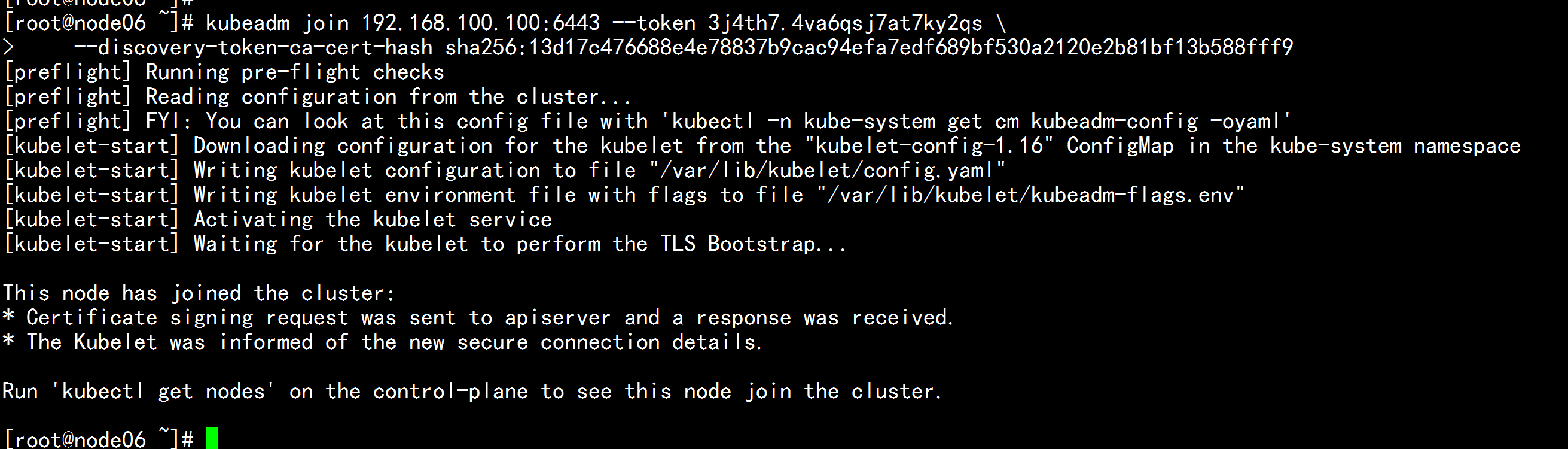

node06.flyfish 加入集群

kubeadm join 192.168.100.100:6443 --token 3j4th7.4va6qsj7at7ky2qs \

--discovery-token-ca-cert-hash sha256:13d17c476688e4e78837b9cac94efa7edf689bf530a2120e2b81bf13b588fff9


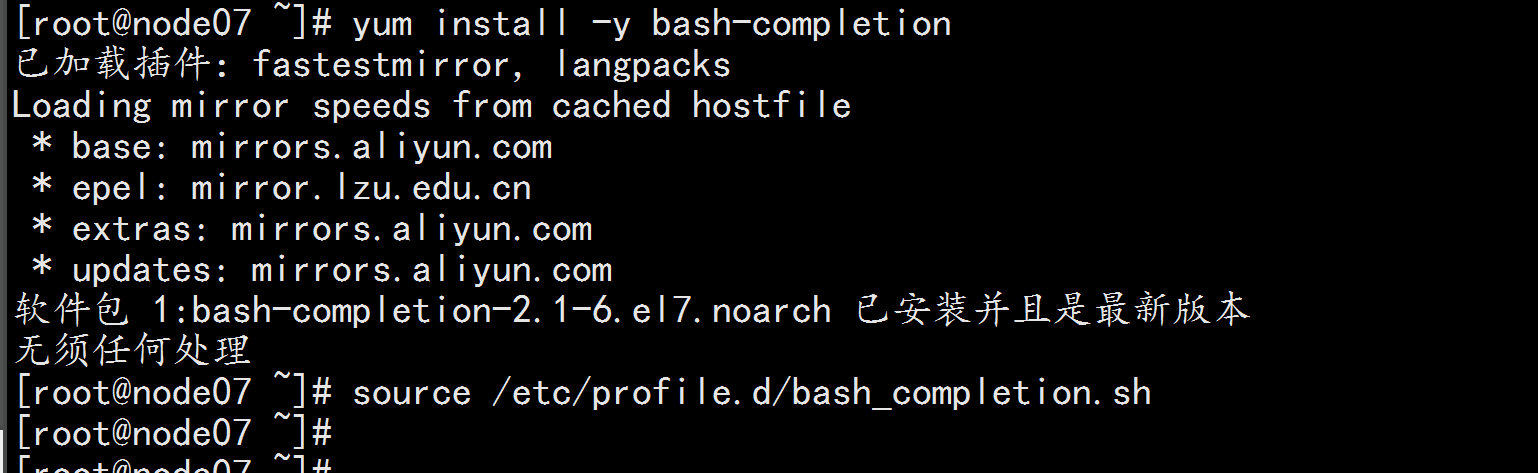

kubectl get node

kubectl get pods -o wide -n kube-system


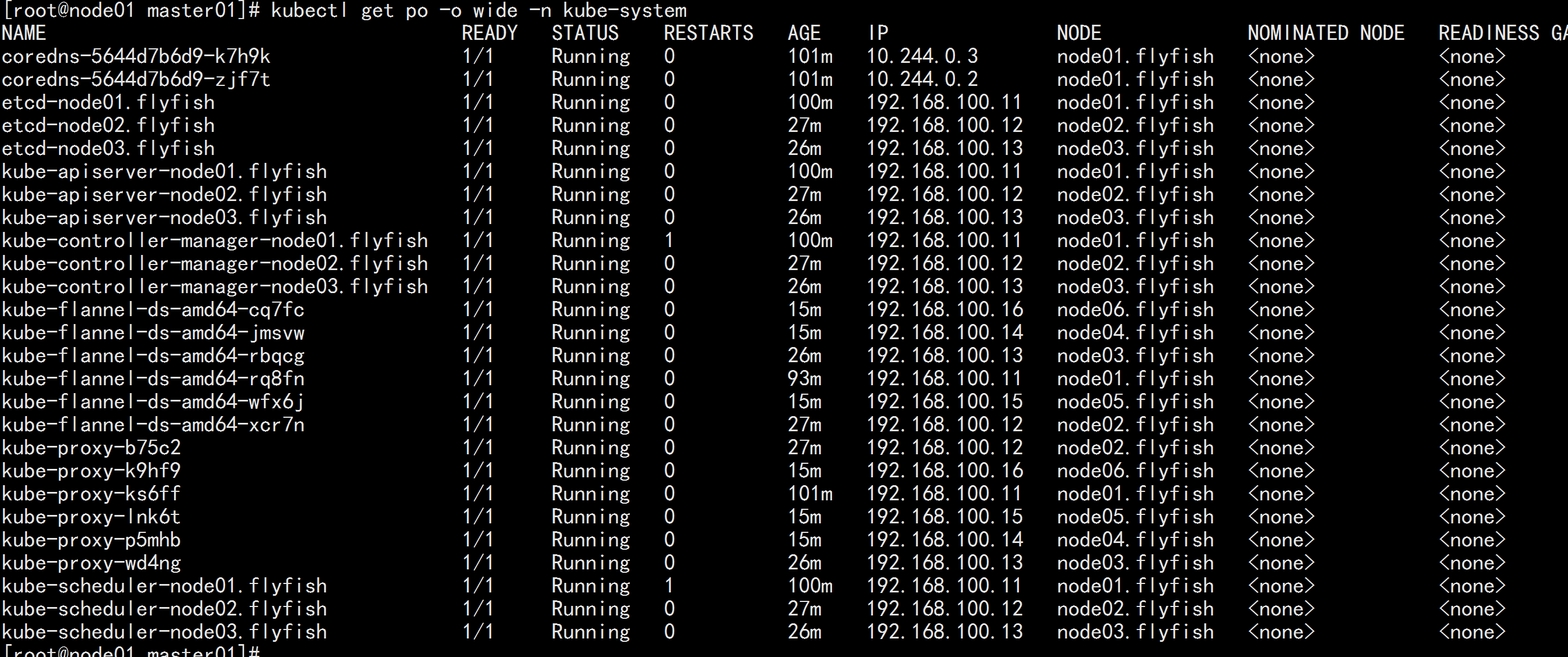
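With three control plane nodes and three workers joined, `kubectl get node` should list six nodes. A node typically stays NotReady until its flannel Pod is running, so a quick filter for nodes that are not yet Ready can be handy. `not_ready_nodes` below is a hypothetical helper over the `--no-headers` output.

```shell
#!/usr/bin/env bash
# not_ready_nodes: read `kubectl get nodes --no-headers` output on stdin
# and print the name of every node whose STATUS is not exactly "Ready".
# A cordoned node ("Ready,SchedulingDisabled") is reported as well.
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Usage (no output means all nodes are Ready):
#   kubectl get nodes --no-headers | not_ready_nodes
```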

5.5 Test from node07.flyfish

Log in to node07.flyfish.

Set up the Kubernetes yum repository:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

yum install -y kubectl-1.16.4

![image_1e5fdmu7bit38h416271of57lor8.png-241.5kB]()

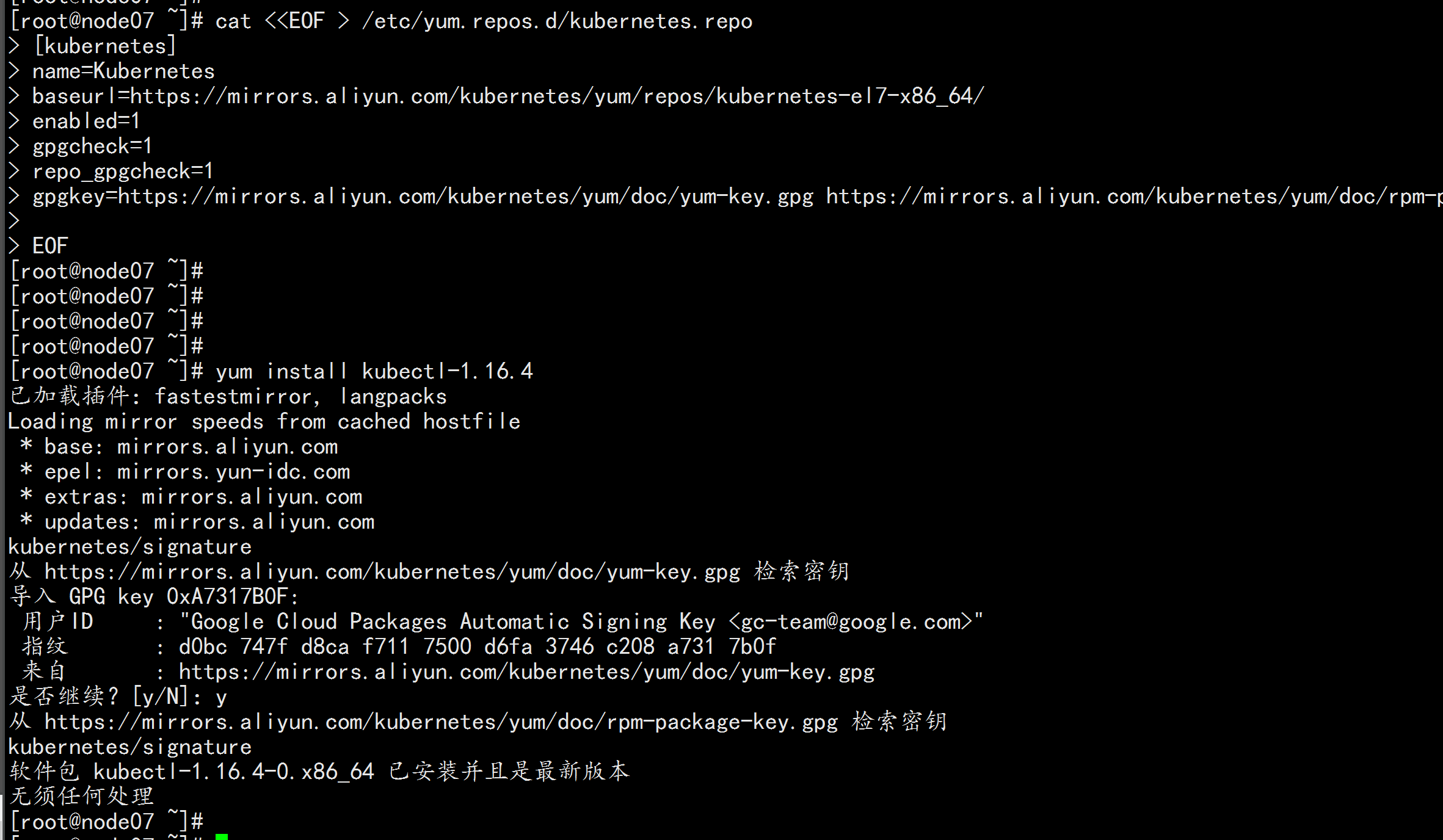

命令补全:

yum install -y bash-completion

source /etc/profile.d/bash_completion.sh

![image_1e5fdplnfhfsa4ucj1qimuugrl.png-76.3kB]()

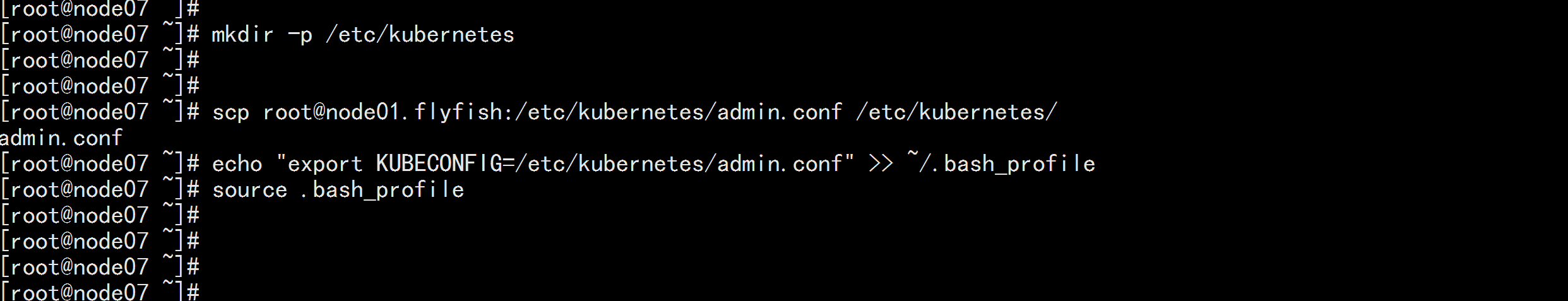

Copy admin.conf:

mkdir -p /etc/kubernetes

scp root@node01.flyfish:/etc/kubernetes/admin.conf /etc/kubernetes/

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

Verify:

kubectl get nodes

kubectl get pod -n kube-system

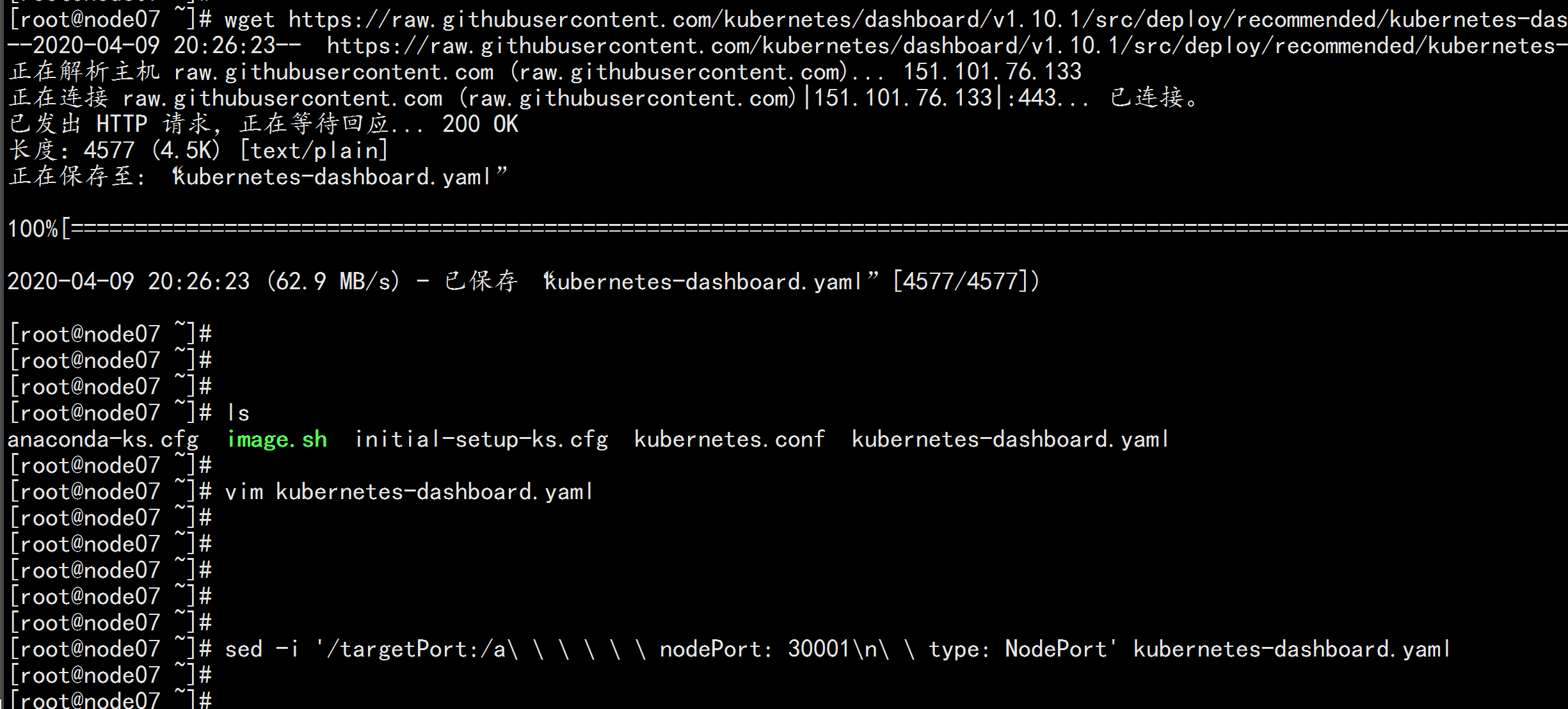

5.6 Deploy the dashboard

Note: run the following on node07.flyfish.

1. Create the Dashboard YAML file

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-beta8/aio/deploy/recommended.yaml

sed -i 's/kubernetesui/registry.cn-hangzhou.aliyuncs.com\/loong576/g' recommended.yaml

sed -i '/targetPort: 8443/a\ \ \ \ \ \ nodePort: 30001\n\ \ type: NodePort' recommended.yaml

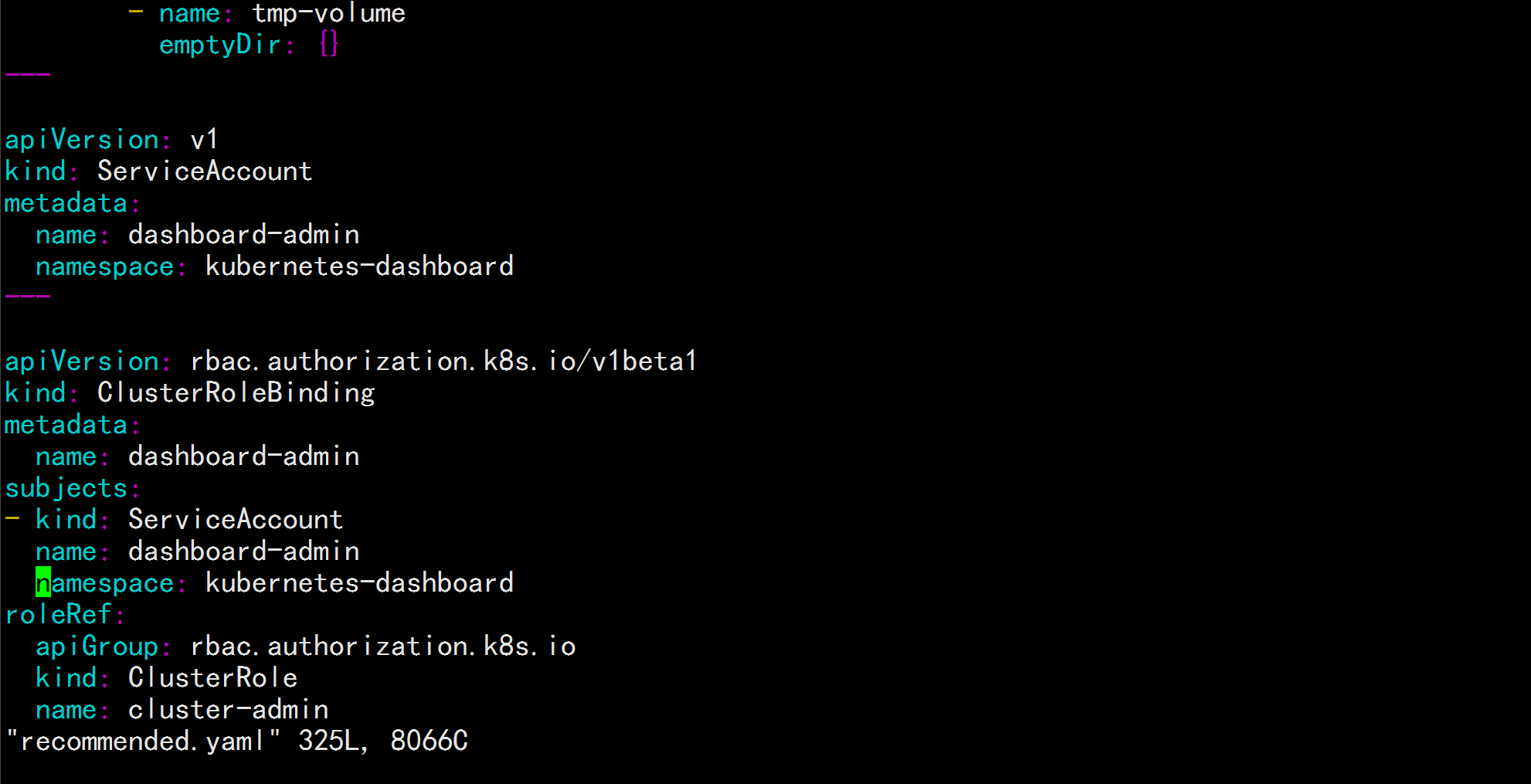

Add an administrator account

vim recommended.yaml

Append the following at the end of the file:

---

---

apiVersion: v1

kind: ServiceAccount

metadata:

  name: dashboard-admin

  namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

  name: dashboard-admin

subjects:

- kind: ServiceAccount

  name: dashboard-admin

  namespace: kubernetes-dashboard

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: cluster-admin

---

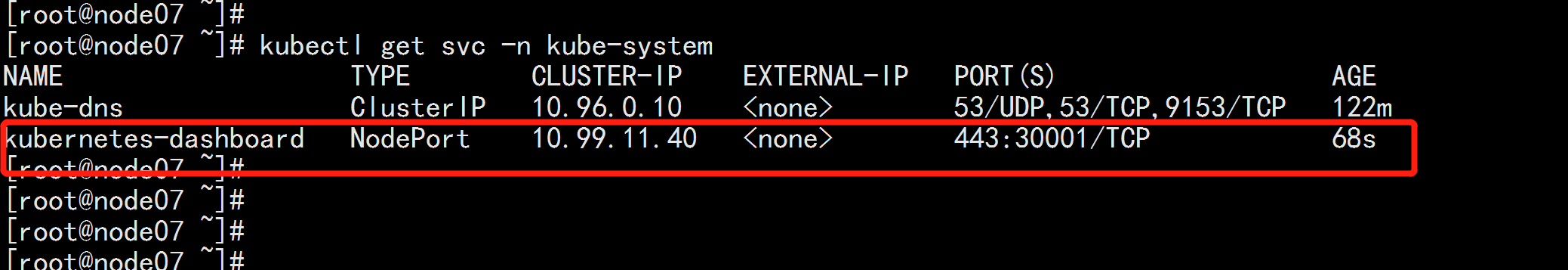

部署Dashboard

kubectl apply -f recommended.yaml

创建完成后,检查相关服务运行状态

kubectl get all -n kubernetes-dashboard

kubectl get svc -n kubernetes-dashboard

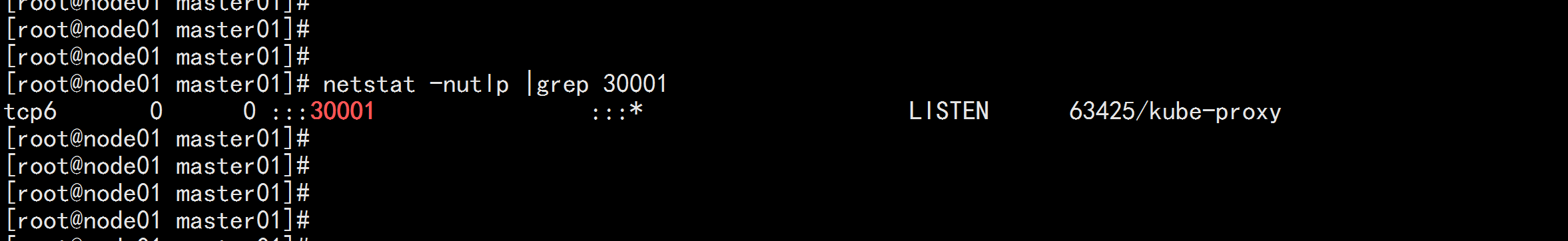

netstat -ntlp|grep 30001

![image_1e5ffrkqm11m17e11d0019kt1dnq2n.png-127.7kB]()

![image_1e5femmt318lv1ck2r51alo1i7i1g.png-62.1kB]()

![image_1e5ffu6lo1ib61g5gqbl1idm1kt434.png-59.6kB]()

在浏览器输入Dashboard访问地址:

https://192.168.100.11:30001

![image_1e5ffvlan17v819um530brdd3g3u.png-267.2kB]()

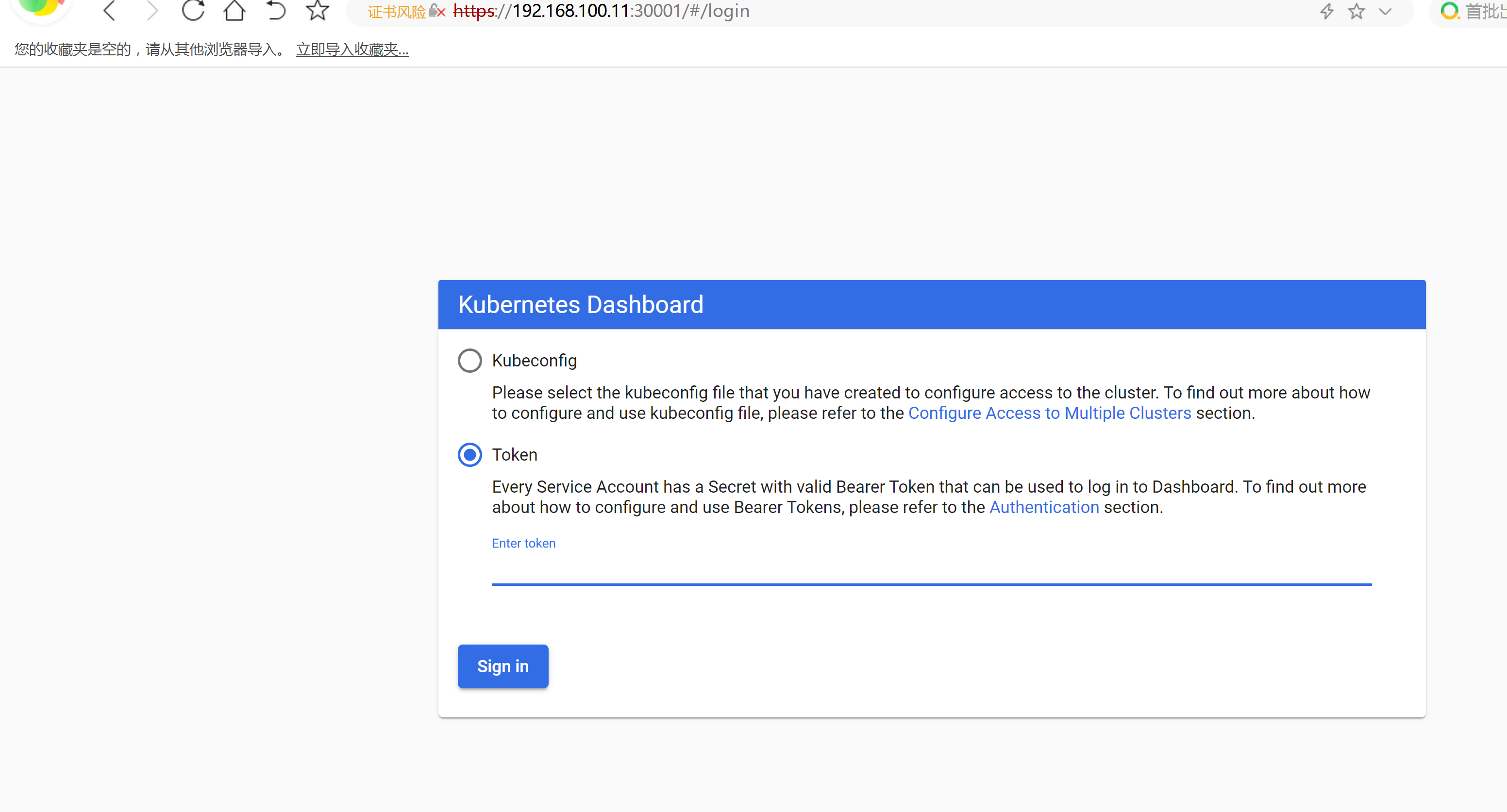

授权令牌

kubectl describe secrets -n kubernetes-dashboard dashboard-admin

----

![image_1e5fg2dns1h6r11vgpq1k5fd5m.png-388.7kB]()

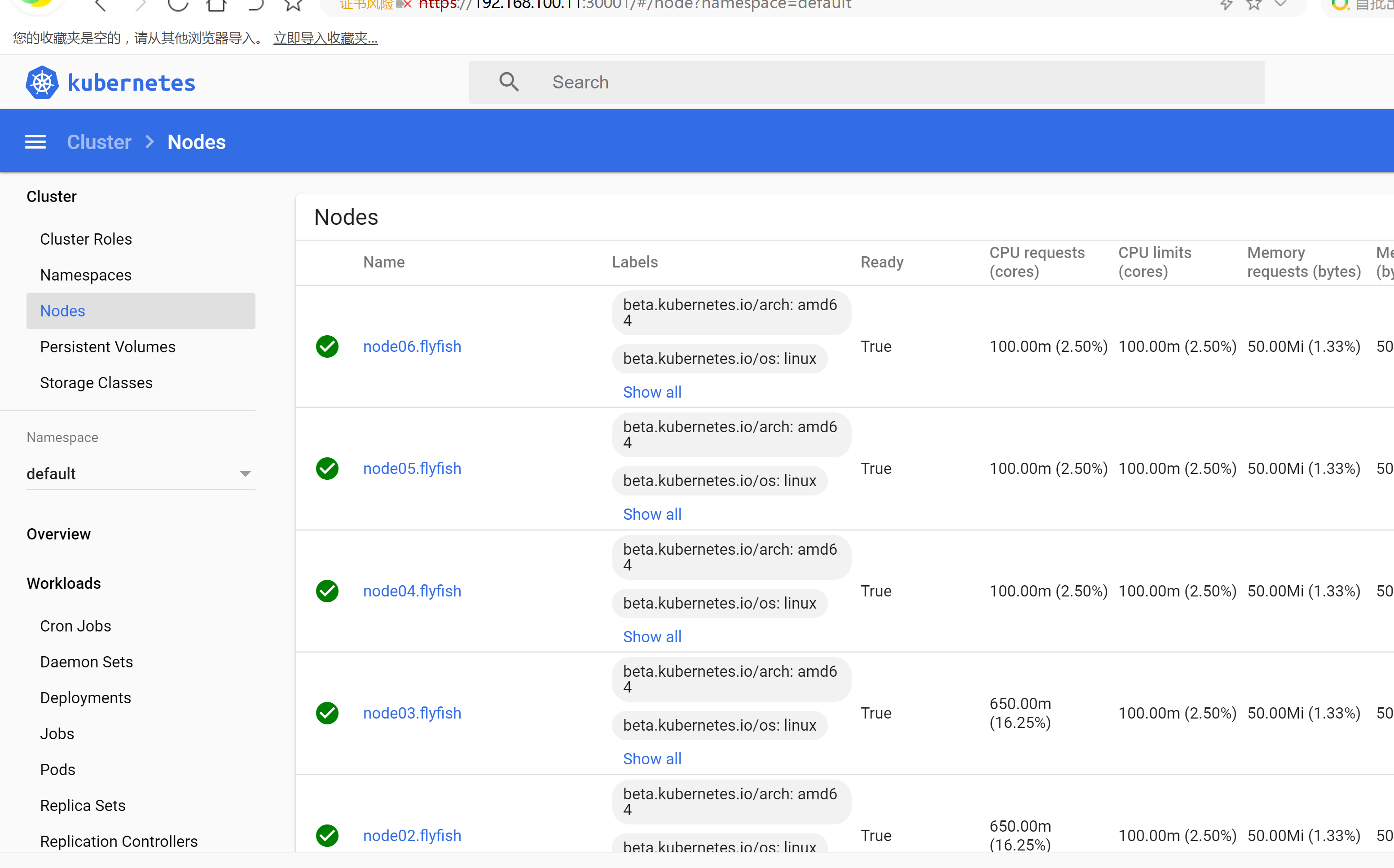

![image_1e5fg47nbruhn2k1gmq1dbvckr13.png-495.3kB]()

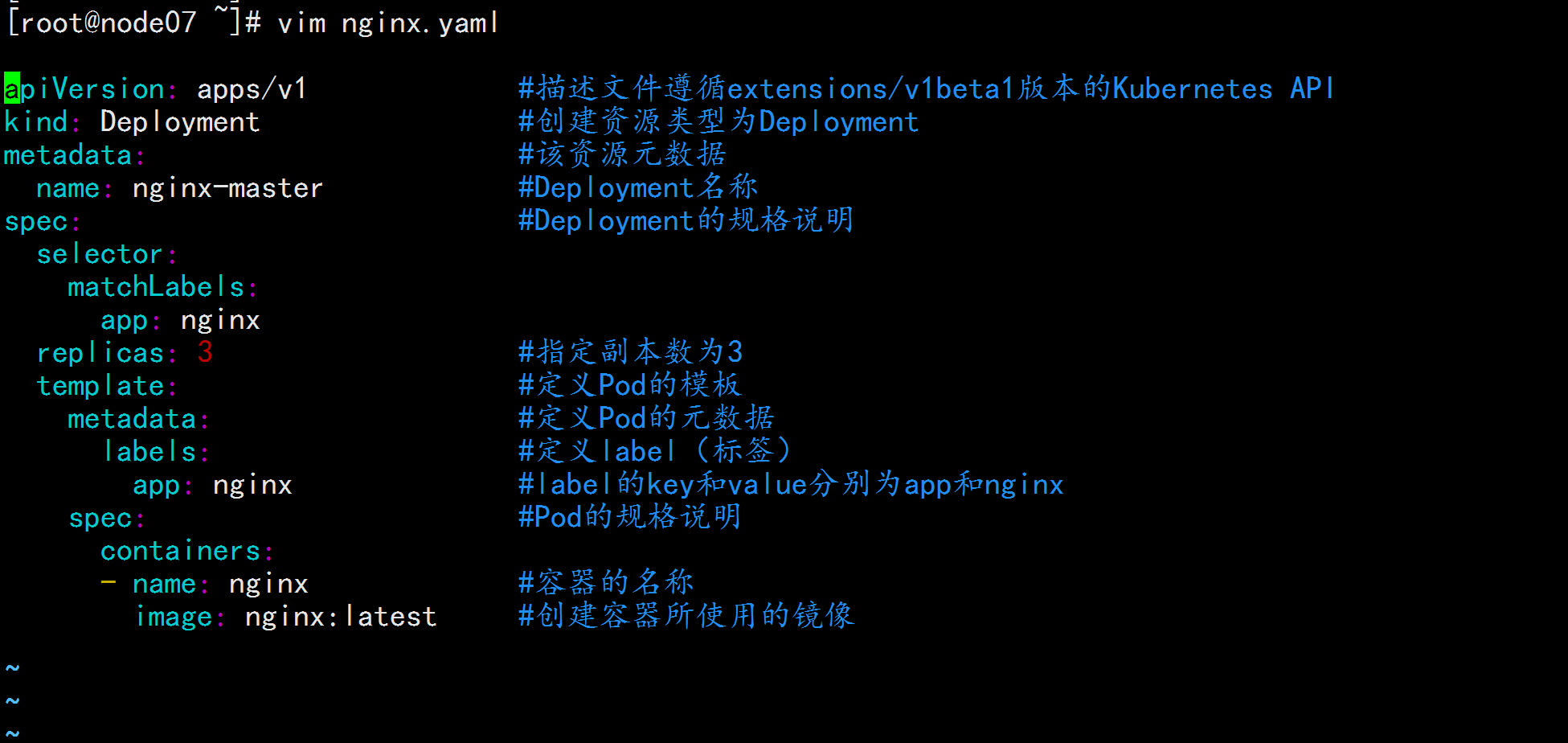
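The `kubectl describe secrets` output above contains the token among several other fields, and the Dashboard login form wants only the token string. The sketch below pulls out just that value; `extract_token` is a hypothetical helper that relies on the `token:` label in the describe output.

```shell
#!/usr/bin/env bash
# extract_token: read `kubectl describe secret` output on stdin
# and print only the value of the "token:" field.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}

# Usage on node07.flyfish:
#   kubectl describe secrets -n kubernetes-dashboard dashboard-admin | extract_token
```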

Create a test workload (an nginx Deployment)

----

vim nginx.yaml

apiVersion: apps/v1 # this manifest uses the apps/v1 version of the Kubernetes API

kind: Deployment # the resource type is Deployment

metadata: # metadata of the resource

  name: nginx-master # name of the Deployment

spec: # specification of the Deployment

  selector:

    matchLabels:

      app: nginx

  replicas: 3 # run 3 replicas

  template: # Pod template

    metadata: # Pod metadata

      labels: # labels

        app: nginx # the label key is app and the value is nginx

    spec: # Pod specification

      containers:

      - name: nginx # container name

        image: nginx:latest # image used to create the container

----

kubectl apply -f nginx.yaml

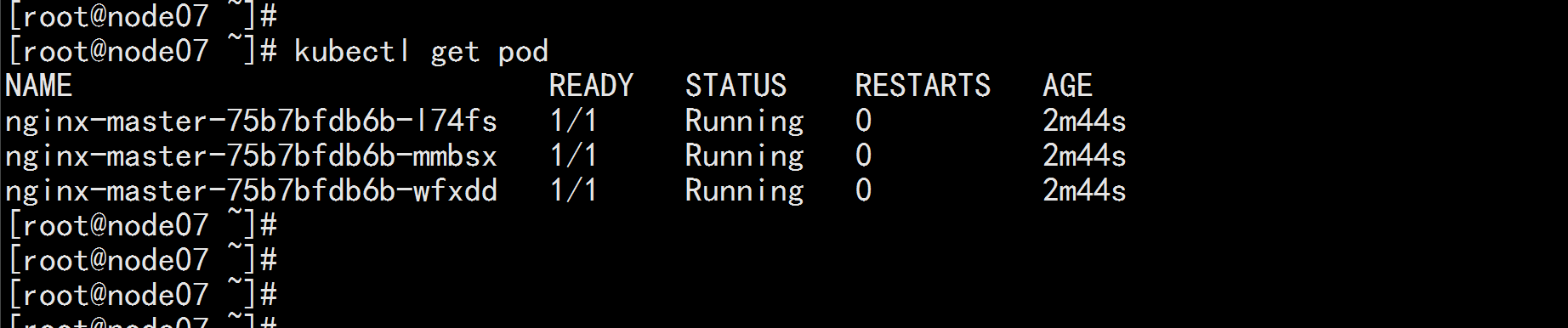

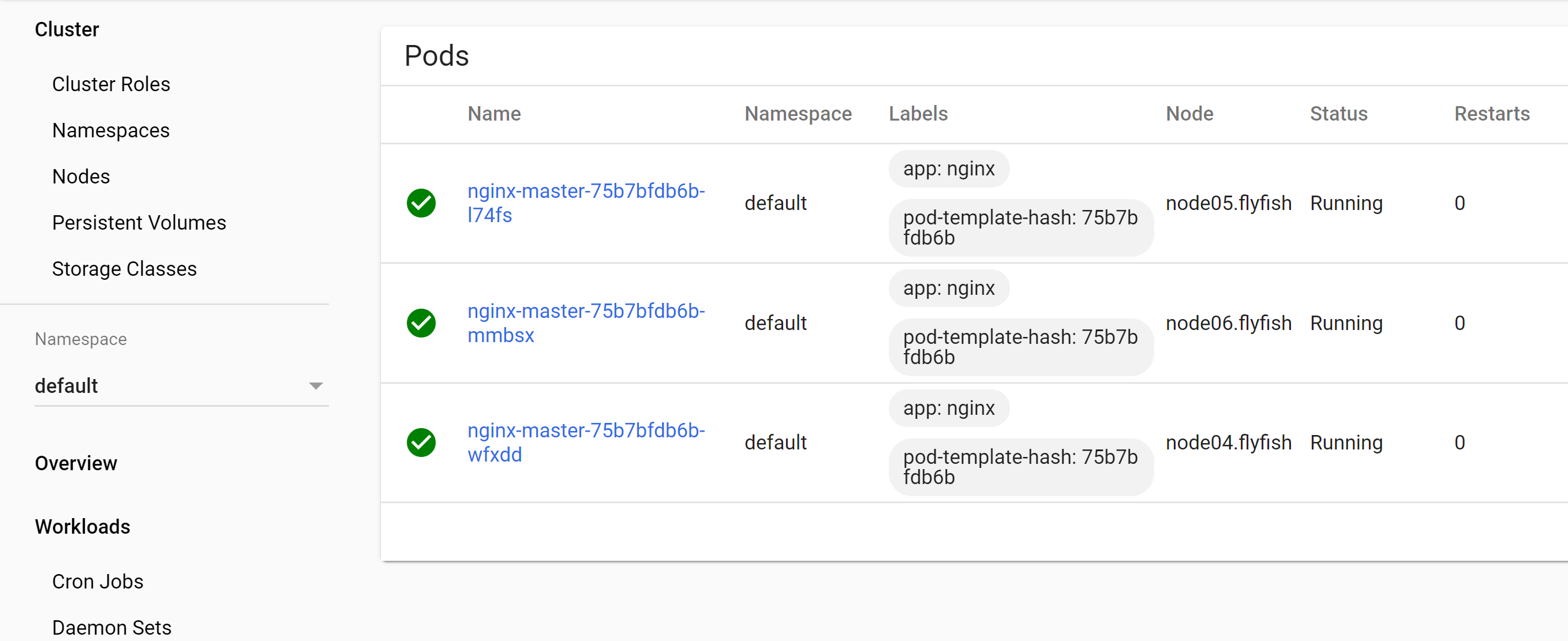

kubectl get pod
