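1. Overview

Apache ZooKeeper is an open-source distributed coordination service for distributed applications. It provides naming, configuration management, cluster management, distributed locks, queue management, and related features.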

Ⅰ).角色功能

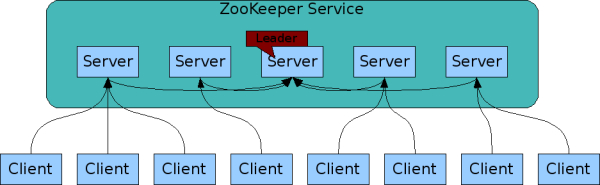

ZooKeeper主要包括leader、learner和client三大类角色,其中learner又分为follower和observer

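Role descriptions

a).leader

Initiates and resolves votes, and updates the system state.

b).learner

1).follower

Accepts client requests and returns results to the client; takes part in voting during leader election.

2).observer

Accepts client connections and forwards write requests to the leader. An observer does not take part in voting; it only synchronizes the leader's state. Observers exist to scale the system out and improve read throughput.

c).client

The party that initiates requests.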

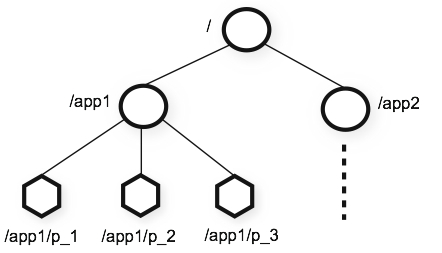
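Because only the leader and followers vote, the size of the voting group determines how many server failures the ensemble can survive. The sketch below assumes the usual majority-quorum rule; the function names are illustrative and not part of any ZooKeeper API.

```python
def quorum_size(voting_members: int) -> int:
    """Smallest strict majority of the voting members (leader + followers)."""
    if voting_members < 1:
        raise ValueError("need at least one voting member")
    return voting_members // 2 + 1


def tolerated_failures(voting_members: int) -> int:
    """How many voting members can fail while a majority remains."""
    return voting_members - quorum_size(voting_members)


if __name__ == "__main__":
    # Observers are not counted: they do not vote.
    for n in (3, 4, 5):
        print(n, quorum_size(n), tolerated_failures(n))
```

Under this rule a 4-node voting group tolerates no more failures than a 3-node one, which is why odd ensemble sizes are the common choice and why read capacity is usually added with observers.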

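Ⅱ).Data model and hierarchical namespace

The namespace ZooKeeper provides is very similar to a standard file system. A name is a sequence of path elements separated by slashes (/), and every node in the ZooKeeper namespace is identified by its path.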

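- Hierarchical directory structure; names follow the conventions of an ordinary file system

- Each ZooKeeper node is a znode, identified by a unique path

- A znode can hold both data and child nodes, except that EPHEMERAL znodes cannot have children

- Znode data is versioned: every update increments the version number, and set/delete can pass an expected version so that the operation succeeds only if it matches

- Client applications can set watches on znodes

- Znode data is read and written in full in a single operation; partial reads and writes are not supported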

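Ⅲ).Znode types

A znode's type is fixed at creation and cannot be changed afterwards.

A persistent znode does not depend on the client session; it is removed only when a client explicitly deletes it.

An ephemeral znode is deleted by ZooKeeper when the session of the client that created it ends; ephemeral znodes cannot have children.

- PERSISTENT: persistent node

- EPHEMERAL: ephemeral node

- PERSISTENT_SEQUENTIAL: persistent node with an automatically appended sequence number

- EPHEMERAL_SEQUENTIAL: ephemeral node with an automatically appended sequence number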
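The type rules above can be illustrated with a small in-memory toy. It is not a ZooKeeper client: `ToyZk` and its methods are hypothetical names, parent existence is not checked, and the only behaviour modelled is the 10-digit zero-padded sequence suffix, the "no children under an ephemeral node" rule, and cleanup when a session ends.

```python
class ToyZk:
    """In-memory toy model of znode types (illustration only)."""

    def __init__(self):
        self.nodes = {}     # path -> owning session id (None means persistent)
        self.counters = {}  # parent path -> next sequence number

    def create(self, path, session=None, ephemeral=False, sequential=False):
        if ephemeral and session is None:
            raise ValueError("an ephemeral znode needs an owning session")
        parent = path.rsplit("/", 1)[0] or "/"
        if self.nodes.get(parent) is not None:
            raise ValueError("ephemeral znodes cannot have children")
        if sequential:
            n = self.counters.get(parent, 0)
            self.counters[parent] = n + 1
            path = f"{path}{n:010d}"  # zero-padded 10-digit suffix
        self.nodes[path] = session if ephemeral else None
        return path

    def close_session(self, session):
        """Drop every ephemeral znode owned by the closed session."""
        for p in [p for p, s in self.nodes.items() if s == session]:
            del self.nodes[p]


if __name__ == "__main__":
    zk = ToyZk()
    zk.create("/locks")
    print(zk.create("/locks/lock-", session="s1", ephemeral=True, sequential=True))
    zk.close_session("s1")
    print(sorted(zk.nodes))
```

Ephemeral sequential nodes under a shared persistent parent are the usual building block for locks and leader election, since the node disappears automatically when its owner's session ends.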

2. Installation and deployment

zookeeper-3.4.14

zookeeper-3.5.5

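Ⅱ).Configuration

a).tickTime

The basic heartbeat interval between client and server, in milliseconds; the sample configuration sets it to 2000 ms.

Heartbeats are exchanged between clients and servers and between servers, one every tickTime. They are used to monitor whether a machine is alive and also bound the timing of follower-leader communication. By default the minimum session timeout is twice the tickTime and the maximum is 20 times the tickTime.

b).initLimit

The maximum number of heartbeats (multiples of tickTime) that a follower (F) may take for its initial connection to and synchronization with the leader (L).

c).syncLimit

The maximum number of heartbeats that may pass between a request and its acknowledgement between a follower (F) and the leader (L).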
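Since initLimit and syncLimit are expressed in ticks, the effective timeouts follow from simple multiplication. A minimal sketch, using the sample values tickTime=2000, initLimit=10 and syncLimit=5 and assuming the default session bounds of 2 and 20 ticks; `derived_timeouts_ms` is an illustrative helper, not a ZooKeeper function.

```python
def derived_timeouts_ms(tick_time: int, init_limit: int, sync_limit: int) -> dict:
    """Translate tick-based settings into milliseconds."""
    return {
        "init_timeout": tick_time * init_limit,   # initial follower sync window
        "sync_timeout": tick_time * sync_limit,   # request/ack window
        "min_session_timeout": tick_time * 2,     # default lower session bound
        "max_session_timeout": tick_time * 20,    # default upper session bound
    }


if __name__ == "__main__":
    print(derived_timeouts_ms(2000, 10, 5))
```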

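d).dataDir

Directory that holds the myid file (the server's unique ID) and the snapshots; unless dataLogDir is set, the transaction logs are written here as well.

e).clientPort

Port on which clients connect to the ZooKeeper server, conventionally 2181.

## Check which ports are in use

netstat -tan

f).Cluster configuration

Cluster members are declared in a fixed format: server.N=YYY:A:B

- N: the server number (the value stored in that server's myid file)

- YYY: the server address

- A: the port followers use to communicate with the leader, i.e. the internal server-to-server port (conventionally 2888)

- B: the leader election port (conventionally 3888)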

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

dataDir=/home/bigdata/zookeeper-3.4.14/data

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

#maxClientCnxns=60

# The number of snapshots to retain in dataDir

#autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

#autopurge.purgeInterval=1

# Cluster configuration

server.1=hostname1:2888:3888

server.2=hostname2:2888:3888

server.3=hostname3:2888:3888
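To check that the server.N entries are well formed before distributing the file, the configuration can be parsed with a few lines of Python. This is a sketch under the assumption that each entry has the host:port:port shape shown above; `parse_servers` and `SAMPLE` are names invented for this example.

```python
import re

SAMPLE = """\
tickTime=2000
# comments and blank lines are skipped
clientPort=2181
server.1=hostname1:2888:3888
server.2=hostname2:2888:3888
server.3=hostname3:2888:3888
"""


def parse_servers(cfg_text: str) -> dict:
    """Return {server id: (host, quorum port, election port)}."""
    servers = {}
    for line in cfg_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        match = re.fullmatch(r"server\.(\d+)", key.strip())
        if match:
            # Only the first three fields are used; extra fields are ignored.
            host, quorum_port, election_port = value.strip().split(":")[:3]
            servers[int(match.group(1))] = (host, int(quorum_port), int(election_port))
    return servers


if __name__ == "__main__":
    for sid, entry in sorted(parse_servers(SAMPLE).items()):
        print(sid, entry)
```

Each server id found here should match the number written to the myid file on the corresponding host.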

Ⅲ).Installation

a).Unpack

tar -zxvf zookeeper-3.4.14.tar.gz

b).Edit the configuration

Cluster-mode configuration

1).Set the storage path

dataDir=/home/bigdata/zookeeper-3.4.14/data

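2).Set myid

## On each node, create a myid file in the directory configured as dataDir

vi myid

## Content: this server's number

1

3).Add the cluster configuration

## Cluster configuration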

server.1=hostname1:2888:3888

server.2=hostname2:2888:3888

server.3=hostname3:2888:3888

c).Start and stop the service

## Start the service

./bin/zkServer.sh start ./conf/zoo.cfg

## Stop the service

./bin/zkServer.sh stop ./conf/zoo.cfg

3. Common commands

Ⅰ).Check status

## Command

./bin/zkServer.sh status

## Output

## Shows the ZooKeeper working mode, the configuration file in use, and so on

ZooKeeper JMX enabled by default

Using config: /home/bigdata/zookeeper-3.4.14/bin/../conf/zoo.cfg

Mode: standalone

Ⅱ).Connect to ZooKeeper with the client

./bin/zkCli.sh

Ⅲ).ls: list child nodes

WatchedEvent state:SyncConnected type:None path:null

[zk: localhost:2181(CONNECTED) 0] ls /

[zookeeper]

[zk: localhost:2181(CONNECTED) 1] ls /zookeeper

[quota]

[zk: localhost:2181(CONNECTED) 2]

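Ⅳ).get: fetch a node's data and metadata

- cZxid: zxid of the transaction that created the node

- ctime: time the node was created

- mZxid: zxid of the transaction that last modified the node

- mtime: time the node was last modified

- pZxid: zxid of the transaction that last changed the node's children

- cversion: number of changes to the node's children

- dataVersion: number of changes to the node's data

- aclVersion: number of changes to the node's ACL

- ephemeralOwner: session ID of the owner if the node is ephemeral, otherwise 0x0

- dataLength: length of the node's data

- numChildren: number of child nodes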

[zk: localhost:2181(CONNECTED) 2] get /zookeeper

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0x0

cversion = -1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 1
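When this output is captured by a script, the `key = value` lines can be turned into a dictionary. The helper below is a sketch written for the text format shown above; `parse_stat` is not part of zkCli, and lines without ` = ` (such as the node's data) are simply skipped.

```python
def parse_stat(output: str) -> dict:
    """Parse zkCli 'key = value' stat lines into a dict."""
    stat = {}
    for line in output.splitlines():
        key, sep, value = line.partition(" = ")
        if not sep:
            continue  # data line, prompt, or blank line
        key, value = key.strip(), value.strip()
        try:
            stat[key] = int(value, 0)  # base 0 accepts both 0x... and decimal
        except ValueError:
            stat[key] = value          # timestamps stay as text
    return stat


if __name__ == "__main__":
    sample = "cZxid = 0x2\nctime = Thu Aug 22 23:15:59 CST 2019\nephemeralOwner = 0x0\ndataLength = 11"
    stat = parse_stat(sample)
    print(stat, "ephemeral" if stat["ephemeralOwner"] else "persistent")
```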

Ⅴ).stat: fetch a node's metadata

[zk: localhost:2181(CONNECTED) 4] stat /zookeeper

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0x0

cversion = -1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 1

Ⅵ).ls2: list children with metadata

ls2 combines the ls and stat commands

[zk: localhost:2181(CONNECTED) 5] ls2 /zookeeper

[quota]

cZxid = 0x0

ctime = Thu Jan 01 08:00:00 CST 1970

mZxid = 0x0

mtime = Thu Jan 01 08:00:00 CST 1970

pZxid = 0x0

cversion = -1

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 0

numChildren = 1

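Ⅶ).create: create a node

Command: create [-s] [-e] path data acl

- [-s]: create a sequential node; the sequence number is incremented automatically

- [-e]: create an ephemeral node

## Create the dremio node

[zk: localhost:2181(CONNECTED) 6] create /dremio dremio_data

Created /dremio

## Read the dremio node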

[zk: localhost:2181(CONNECTED) 7] get /dremio

dremio_data

cZxid = 0x2

ctime = Thu Aug 22 23:15:59 CST 2019

mZxid = 0x2

mtime = Thu Aug 22 23:15:59 CST 2019

pZxid = 0x2

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 11

numChildren = 0

Ⅷ).set: modify a node

Command: set path data [version]

1).Modify data

## Modify the data of the dremio node

[zk: localhost:2181(CONNECTED) 8] set /dremio dremio_data_update

cZxid = 0x2

ctime = Thu Aug 22 23:15:59 CST 2019

mZxid = 0x3

mtime = Thu Aug 22 23:19:58 CST 2019

pZxid = 0x2

cversion = 0

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 18

numChildren = 0

## Read the dremio node

[zk: localhost:2181(CONNECTED) 9] get /dremio

dremio_data_update

cZxid = 0x2

ctime = Thu Aug 22 23:15:59 CST 2019

mZxid = 0x3

mtime = Thu Aug 22 23:19:58 CST 2019

pZxid = 0x2

cversion = 0

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 18

numChildren = 0

2).Modify by version number

## Read the dremio node; dataVersion is now 2

[zk: localhost:2181(CONNECTED) 11] get /dremio

dremio_data_update

cZxid = 0x2

ctime = Thu Aug 22 23:15:59 CST 2019

mZxid = 0x4

mtime = Thu Aug 22 23:22:08 CST 2019

pZxid = 0x2

cversion = 0

dataVersion = 2

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 18

numChildren = 0

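## Update by version number: dataVersion acts as an optimistic lock

### The data version is already 2, so a set that passes version 1 is rejected

[zk: localhost:2181(CONNECTED) 12] set /dremio dremio_data_update 1

version No is not valid : /dremio

### A set that passes version 2 succeeds, and the version becomes 3

[zk: localhost:2181(CONNECTED) 13] set /dremio dremio_data_update 2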

cZxid = 0x2

ctime = Thu Aug 22 23:15:59 CST 2019

mZxid = 0x6

mtime = Thu Aug 22 23:25:23 CST 2019

pZxid = 0x2

cversion = 0

dataVersion = 3

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 18

numChildren = 0
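The version check in the transcript above is a compare-and-set. The toy class below reproduces the same sequence in memory; it is an illustration only (`VersionedNode` and `BadVersion` are invented names), with -1 standing for "any version", the convention the ZooKeeper API uses for unconditional updates.

```python
class BadVersion(Exception):
    """Raised when the expected version does not match the current one."""


class VersionedNode:
    def __init__(self, data: str):
        self.data = data
        self.version = 0  # like dataVersion right after create

    def set(self, data: str, expected_version: int = -1) -> int:
        # -1 means unconditional; otherwise the versions must match.
        if expected_version != -1 and expected_version != self.version:
            raise BadVersion(f"expected {expected_version}, current {self.version}")
        self.data = data
        self.version += 1
        return self.version


if __name__ == "__main__":
    node = VersionedNode("dremio_data")
    node.set("dremio_data_update")      # version 1
    node.set("dremio_data_update")      # version 2
    try:
        node.set("dremio_data_update", expected_version=1)
    except BadVersion as exc:
        print("rejected:", exc)
    print(node.set("dremio_data_update", expected_version=2))
```

A client that reads the data together with its version and writes back with that version can detect concurrent modification without holding a lock.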

Ⅸ).delete: delete a node

Command: delete path [version]

## Delete by version number

[zk: localhost:2181(CONNECTED) 14] delete /dremio 3

## Read the dremio node

[zk: localhost:2181(CONNECTED) 15] get /dremio

Node does not exist: /dremio

Ⅹ).Watcher notification mechanism

a).Set a watch with stat

Command: stat path [watch]

## Set the watch

[zk: localhost:2181(CONNECTED) 17] stat /hbase watch

Node does not exist: /hbase

## Trigger the watcher event

[zk: localhost:2181(CONNECTED) 18] create /hbase hbase

WATCHER::

WatchedEvent state:SyncConnected type:NodeCreated path:/hbase

Created /hbase

b).Set a watch with get

Command: get path [watch]

## Set the watch

[zk: localhost:2181(CONNECTED) 19] get /solr watch

Node does not exist: /solr

## get cannot register a watch on a node that does not exist, so creating the node triggers no event

[zk: localhost:2181(CONNECTED) 20] create /solr solr

Created /solr

## Set the watch

[zk: localhost:2181(CONNECTED) 22] get /solr watch

solr_data

cZxid = 0xa

ctime = Thu Aug 22 23:34:54 CST 2019

mZxid = 0xb

mtime = Thu Aug 22 23:35:09 CST 2019

pZxid = 0xa

cversion = 0

dataVersion = 1

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 9

numChildren = 0

## Trigger the watcher event

[zk: localhost:2181(CONNECTED) 23] set /solr solr_data_update

WATCHER::

cZxid = 0xa

WatchedEvent state:SyncConnected type:NodeDataChanged path:/solr

ctime = Thu Aug 22 23:34:54 CST 2019

mZxid = 0xc

mtime = Thu Aug 22 23:37:02 CST 2019

pZxid = 0xa

cversion = 0

dataVersion = 2

aclVersion = 0

ephemeralOwner = 0x0

dataLength = 16

numChildren = 0
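In the 3.4 line used here, a watch is a one-time trigger: after it fires, it has to be set again to receive the next event. The following toy registry sketches that behaviour; `WatchRegistry` is a hypothetical class and does not talk to a server.

```python
class WatchRegistry:
    """Toy model of one-shot watches (illustration only)."""

    def __init__(self):
        self._watches = {}  # path -> list of callbacks

    def watch(self, path, callback):
        self._watches.setdefault(path, []).append(callback)

    def fire(self, path, event_type):
        """Deliver the event once and forget the watches on that path."""
        callbacks = self._watches.pop(path, [])
        for callback in callbacks:
            callback(event_type, path)
        return len(callbacks)


if __name__ == "__main__":
    registry = WatchRegistry()
    registry.watch("/solr", lambda event, path: print("WATCHER::", event, path))
    registry.fire("/solr", "NodeDataChanged")  # delivered
    registry.fire("/solr", "NodeDataChanged")  # nothing registered any more
```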

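Ⅺ).ACL permission control

ZooKeeper has five permissions: CREATE, READ, WRITE, DELETE, and ADMIN, i.e. create child nodes, read, write, delete child nodes, and administer (set ACLs).

ACL format: [scheme:id:permissions]

The four authentication schemes

- world: the default; anyone can access the node

- auth: any user already authenticated in the current session (in the CLI, addauth digest user:pwd adds an authenticated user to the current context)

- digest: username:password authentication, the scheme most commonly used by business systems

- ip: authentication by client IP address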
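The scheme:id:permissions string can be split mechanically, keeping in mind that a digest id itself contains a colon. A small sketch follows; `parse_acl` and `PERMS` are illustrative names, and the letter-to-permission mapping follows the cdrwa abbreviations printed by getAcl.

```python
PERMS = {"c": "CREATE", "d": "DELETE", "r": "READ", "w": "WRITE", "a": "ADMIN"}


def parse_acl(acl: str):
    """Split 'scheme:id:permissions' into (scheme, id, [permission names])."""
    scheme, _, rest = acl.partition(":")   # scheme ends at the first colon
    ident, _, perms = rest.rpartition(":")  # permissions start at the last colon
    if not scheme or not ident or not perms:
        raise ValueError(f"not a scheme:id:permissions string: {acl!r}")
    unknown = set(perms) - set(PERMS)
    if unknown:
        raise ValueError(f"unknown permission letters: {sorted(unknown)}")
    return scheme, ident, [PERMS[p] for p in perms]


if __name__ == "__main__":
    print(parse_acl("world:anyone:rwa"))
    print(parse_acl("ip:192.168.133.132:cdrwa"))
```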

a).Get ACL information

Command: getAcl /znode_path

Default: world:anyone:cdrwa, so anyone can access the node

[zk: localhost:2181(CONNECTED) 24] getAcl /solr

'world,'anyone

: cdrwa

b).Set permissions

Command: setAcl /znode_path [scheme:id:permissions]

## Default permissions

[zk: localhost:2181(CONNECTED) 24] getAcl /solr

'world,'anyone

: cdrwa

## Set permissions

[zk: localhost:2181(CONNECTED) 26] setAcl /solr world:anyone:rwa

cZxid = 0xa

ctime = Thu Aug 22 23:34:54 CST 2019

mZxid = 0xc

mtime = Thu Aug 22 23:37:02 CST 2019

pZxid = 0xa

cversion = 0

dataVersion = 2

aclVersion = 1

ephemeralOwner = 0x0

dataLength = 16

numChildren = 0

## Check permissions

[zk: localhost:2181(CONNECTED) 27] getAcl /solr

'world,'anyone

: rwa

c).Setting an ACL with a plaintext password

Command: setAcl /znode_path [scheme:username:password:permissions]

## Create the /hbase/data node

[zk: localhost:2181(CONNECTED) 28] create /hbase/data hbase_test_data

Created /hbase/data

## Check the default permissions

[zk: localhost:2181(CONNECTED) 29] getAcl /hbase/data

'world,'anyone

: cdrwa

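## Set the ACL with the auth scheme and a plaintext password (rejected because the account has not been registered yet)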

[zk: localhost:2181(CONNECTED) 30] setAcl /hbase/data auth:hbase:hbase:cdrwa

Acl is not valid : /hbase/data

## Register the hbase:hbase account

[zk: localhost:2181(CONNECTED) 31] addauth digest hbase:hbase

## Set the ACL with the auth scheme and a plaintext password

[zk: localhost:2181(CONNECTED) 32] setAcl /hbase/data auth:hbase:hbase:cdrwa

cZxid = 0xe

ctime = Thu Aug 22 23:55:44 CST 2019

mZxid = 0xe

mtime = Thu Aug 22 23:55:44 CST 2019

pZxid = 0xe

cversion = 0

dataVersion = 0

aclVersion = 1

ephemeralOwner = 0x0

dataLength = 15

numChildren = 0

## Check permissions; the password is stored as a digest

[zk: localhost:2181(CONNECTED) 33] getAcl /hbase/data

'digest,'hbase:zJ5u59GFXPgRIx4zWNFCOCbyBOs=

: cdrwa

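d).Setting an ACL with a password digest

Command: setAcl /znode_path [scheme:username:password:permissions], where password is the digest form as displayed by getAcl

## Create the /kafka node

[zk: localhost:2181(CONNECTED) 39] create /kafka kafka

Created /kafka

## Set the ACL with the password digest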

[zk: localhost:2181(CONNECTED) 40] setAcl /kafka digest:hbase:zJ5u59GFXPgRIx4zWNFCOCbyBOs=:cdrwa

cZxid = 0x13

ctime = Fri Aug 23 00:10:59 CST 2019

mZxid = 0x13

mtime = Fri Aug 23 00:10:59 CST 2019

pZxid = 0x13

cversion = 0

dataVersion = 0

aclVersion = 1

ephemeralOwner = 0x0

dataLength = 5

numChildren = 0

## Check permissions; the password is stored as a digest

[zk: localhost:2181(CONNECTED) 41] getAcl /kafka

'digest,'hbase:zJ5u59GFXPgRIx4zWNFCOCbyBOs=

: cdrwa
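The digest that the digest scheme stores is, to the best of my knowledge, the Base64 encoding of the SHA-1 hash of the string username:password, prefixed with the username. The sketch below computes it with the Python standard library so that the result can be compared against the getAcl output above; `zk_digest` is an illustrative helper, not a ZooKeeper API.

```python
import base64
import hashlib


def zk_digest(username: str, password: str) -> str:
    """Return 'username:base64(sha1(username:password))'."""
    raw = f"{username}:{password}".encode("utf-8")
    sha1 = hashlib.sha1(raw).digest()
    return f"{username}:{base64.b64encode(sha1).decode('ascii')}"


if __name__ == "__main__":
    # Compare with the id that getAcl prints for the same account.
    print(zk_digest("hbase", "hbase"))
```

The returned string can be used as the id part of a digest ACL, followed by a colon and the permission letters.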

e).ip: restrict access by client address

Command: setAcl /znode_path [ip:address:permissions]

## Set an IP-based ACL

[zk: localhost:2181(CONNECTED) 44] setAcl /kafka/ip ip:192.168.133.132:cdrwa

cZxid = 0x15

ctime = Fri Aug 23 00:12:58 CST 2019

mZxid = 0x15

mtime = Fri Aug 23 00:12:58 CST 2019

pZxid = 0x15

cversion = 0

dataVersion = 0

aclVersion = 1

ephemeralOwner = 0x0

dataLength = 2

numChildren = 0

[zk: localhost:2181(CONNECTED) 45] getAcl /kafka/ip

Authentication is not valid : /kafka/ip

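f).super: super administrator

Add the following JVM option:

"-Dzookeeper.DigestAuthenticationProvider.superDigest=username:password"

nohup "$JAVA" "-Dzookeeper.log.dir=${ZOO_LOG_DIR}" "-Dzookeeper.root.logger=${ZOO_LOG4J_PROP}" "-Dzookeeper.DigestAuthenticationProvider.superDigest=username:password" \

-cp "$CLASSPATH" $JVMFLAGS $ZOOMAIN "$ZOOCFG" > "$_ZOO_DAEMON_OUT" 2>&1 < /dev/null &

Note: to use super privileges, edit zkServer.sh to add the super administrator option, then restart ZooKeeper with zkServer.sh. The password part of superDigest must be given in digest form (as displayed by getAcl), not as plaintext.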

g).Register an account

Command: addauth digest username:password

## Register the hbase:hbase account

[zk: localhost:2181(CONNECTED) 31] addauth digest hbase:hbase

Ⅻ).Inspect ZooKeeper with nc

nc must be installed: yum install nc

a).Show the server configuration

Command: echo conf|nc hostname 2181

[bigdata@carbondata data]$ echo conf|nc 192.168.133.132 2181

clientPort=2181

dataDir=/home/bigdata/zookeeper-3.4.14/data/version-2

dataLogDir=/home/bigdata/zookeeper-3.4.14/data/version-2

tickTime=2000

maxClientCnxns=60

minSessionTimeout=4000

maxSessionTimeout=40000

serverId=0

b).Show status information

Command: echo stat|nc hostname 2181

[bigdata@carbondata data]$ echo stat|nc 192.168.133.132 2181

Zookeeper version: 3.4.14-4c25d480e66aadd371de8bd2fd8da255ac140bcf, built on 03/06/2019 16:18 GMT

Clients:

/127.0.0.1:59358[1](queued=0,recved=523,sent=525)

/192.168.133.132:60578[0](queued=0,recved=1,sent=0)

Latency min/avg/max: 0/0/17

Received: 526

Sent: 527

Connections: 2

Outstanding: 0

Zxid: 0x16

Mode: standalone

Node count: 10

c).Check whether ZooKeeper is running

Command: echo ruok|nc hostname 2181

[bigdata@carbondata data]$ echo ruok|nc 192.168.133.132 2181

imok

[bigdata@carbondata data]$

d).List ephemeral nodes

Command: echo dump|nc hostname 2181

## Before any ephemeral node has been created

[bigdata@carbondata data]$ echo dump|nc 192.168.133.132 2181

SessionTracker dump:

Session Sets (3):

0 expire at Thu Jan 01 10:47:54 CST 1970:

0 expire at Thu Jan 01 10:48:04 CST 1970:

1 expire at Thu Jan 01 10:48:14 CST 1970:

0x100002177b70000

ephemeral nodes dump:

Sessions with Ephemerals (0):

## Create an ephemeral node

[zk: localhost:2181(CONNECTED) 0] create -e /hbase/tmp test

Created /hbase/tmp

## List ephemeral nodes

[bigdata@carbondata data]$ echo dump|nc 192.168.133.132 2181

SessionTracker dump:

Session Sets (4):

0 expire at Thu Jan 01 10:55:34 CST 1970:

0 expire at Thu Jan 01 10:55:44 CST 1970:

0 expire at Thu Jan 01 10:55:54 CST 1970:

1 expire at Thu Jan 01 10:55:56 CST 1970:

0x100002177b70003

ephemeral nodes dump:

Sessions with Ephemerals (1):

0x100002177b70003:

/hbase/temp

/hbase/tmp

e).Show the connections to the server

Command: echo cons|nc hostname 2181

[bigdata@carbondata data]$ echo cons|nc 192.168.133.132 2181

/192.168.133.132:60598[0](queued=0,recved=1,sent=0)

/127.0.0.1:59376[1](queued=0,recved=21,sent=21,sid=0x100002177b70003,lop=PING,est=1566491558846,to=30000,lcxid=0x2,lzxid=0x21,lresp=10575010,llat=0,minlat=0,avglat=0,maxlat=3)

f).Show environment information

Command: echo envi|nc hostname 2181

[bigdata@carbondata data]$ echo envi|nc 192.168.133.132 2181

Environment:

zookeeper.version=3.4.14-4c25d480e66aadd371de8bd2fd8da255ac140bcf, built on 03/06/2019 16:18 GMT

host.name=carbondata

java.version=1.8.0_211

java.vendor=Oracle Corporation

java.home=/usr/java/jdk1.8.0_211-amd64/jre

java.class.path=/home/bigdata/zookeeper-3.4.14/bin/../zookeeper-server/target/classes:/home/bigdata/zookeeper-3.4.14/bin/../build/classes:/home/bigdata/zookeeper-3.4.14/bin/../zookeeper-server/target/lib/*.jar:/home/bigdata/zookeeper-3.4.14/bin/../build/lib/*.jar:/home/bigdata/zookeeper-3.4.14/bin/../lib/slf4j-log4j12-1.7.25.jar:/home/bigdata/zookeeper-3.4.14/bin/../lib/slf4j-api-1.7.25.jar:/home/bigdata/zookeeper-3.4.14/bin/../lib/netty-3.10.6.Final.jar:/home/bigdata/zookeeper-3.4.14/bin/../lib/log4j-1.2.17.jar:/home/bigdata/zookeeper-3.4.14/bin/../lib/jline-0.9.94.jar:/home/bigdata/zookeeper-3.4.14/bin/../lib/audience-annotations-0.5.0.jar:/home/bigdata/zookeeper-3.4.14/bin/../zookeeper-3.4.14.jar:/home/bigdata/zookeeper-3.4.14/bin/../zookeeper-server/src/main/resources/lib/*.jar:/home/bigdata/zookeeper-3.4.14/bin/../conf:.:/usr/java/jdk1.8.0_211-amd64/lib/dt.jar:/usr/java/jdk1.8.0_211-amd64/lib/tools.jar

java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib

java.io.tmpdir=/tmp

java.compiler=<NA>

os.name=Linux

os.arch=amd64

os.version=3.10.0-957.el7.x86_64

user.name=bigdata

user.home=/home/bigdata

user.dir=/home/bigdata/zookeeper-3.4.14

g).Show ZooKeeper health metrics

Command: echo mntr|nc hostname 2181

[bigdata@carbondata data]$ echo mntr|nc 192.168.133.132 2181

zk_version 3.4.14-4c25d480e66aadd371de8bd2fd8da255ac140bcf, built on 03/06/2019 16:18 GMT

zk_avg_latency 0

zk_max_latency 17

zk_min_latency 0

zk_packets_received 621

zk_packets_sent 622

zk_num_alive_connections 2

zk_outstanding_requests 0

zk_server_state standalone

zk_znode_count 12

zk_watch_count 0

zk_ephemerals_count 2

zk_approximate_data_size 154

zk_open_file_descriptor_count 30

zk_max_file_descriptor_count 4096

zk_fsync_threshold_exceed_count 0
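For monitoring scripts, the same four-letter commands can be sent over a plain TCP socket instead of nc, and the mntr reply parsed into a dictionary. A sketch, assuming the key/value-per-line format shown above; `four_letter` and `parse_mntr` are names chosen for this example, and the host and port in the usage comment are the ones from this walkthrough.

```python
import socket


def four_letter(host: str, port: int, cmd: str, timeout: float = 5.0) -> str:
    """Send a four-letter command such as 'mntr' and return the raw reply."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(cmd.encode("ascii"))
        chunks = []
        while True:
            data = sock.recv(4096)
            if not data:  # the server closes the connection after replying
                break
            chunks.append(data)
    return b"".join(chunks).decode("utf-8", "replace")


def parse_mntr(text: str) -> dict:
    """Parse 'key value' lines; numeric values become int."""
    metrics = {}
    for line in text.splitlines():
        parts = line.split(None, 1)  # split on the first run of whitespace
        if len(parts) != 2:
            continue
        key, value = parts[0], parts[1].strip()
        metrics[key] = int(value) if value.lstrip("-").isdigit() else value
    return metrics


# Usage against a running server (not executed here):
#   metrics = parse_mntr(four_letter("192.168.133.132", 2181, "mntr"))
#   print(metrics["zk_server_state"], metrics["zk_znode_count"])
```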

h).Show a summary of watches

Command: echo wchs|nc hostname 2181

## Before any watch is added

[bigdata@carbondata data]$ echo wchs|nc 192.168.133.132 2181

0 connections watching 0 paths

Total watches:0

## Add watches

[zk: localhost:2181(CONNECTED) 3] stat /hbase/tmp watch

cZxid = 0x20

ctime = Fri Aug 23 00:33:42 CST 2019

mZxid = 0x20

mtime = Fri Aug 23 00:33:42 CST 2019

pZxid = 0x20

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x100002177b70003

dataLength = 4

numChildren = 0

[zk: localhost:2181(CONNECTED) 4] get /hbase/temp watch

test

cZxid = 0x21

ctime = Fri Aug 23 00:34:54 CST 2019

mZxid = 0x21

mtime = Fri Aug 23 00:34:54 CST 2019

pZxid = 0x21

cversion = 0

dataVersion = 0

aclVersion = 0

ephemeralOwner = 0x100002177b70003

dataLength = 4

numChildren = 0

## Show the watch summary

[bigdata@carbondata data]$ echo wchs|nc 192.168.133.132 2181

1 connections watching 2 paths

Total watches:2

i).Show watch information by session

Command: echo wchc|nc hostname 2181

[bigdata@carbondata data]$ echo wchc|nc 192.168.133.132 2181

wchc is not executed because it is not in the whitelist.

[bigdata@carbondata zookeeper-3.4.14]$ echo wchc|nc 192.168.133.132 2181

0x100002177b70003

/hbase/temp

/hbase/tmp

j).Show watch information by path

Command: echo wchp|nc hostname 2181

[bigdata@carbondata zookeeper-3.4.14]$ echo wchp|nc 192.168.133.132 2181

wchp is not executed because it is not in the whitelist.

[bigdata@carbondata zookeeper-3.4.14]$ echo wchp|nc 192.168.133.132 2181

/hbase/temp

0x100002177b70003

/hbase/tmp

0x100002177b70003

4. Troubleshooting

Problem

Querying watch information by session or by path returns

wchc is not executed because it is not in the whitelist.

wchp is not executed because it is not in the whitelist.

Solution

Edit the configuration file, restart ZooKeeper, then query again

## Add to the zoo.cfg configuration file

4lw.commands.whitelist=*

## Restart ZooKeeper

./bin/zkServer.sh restart ./conf/zoo.cfg

## Show watch information by session

[bigdata@carbondata zookeeper-3.4.14]$ echo wchc|nc 192.168.133.132 2181

0x100002177b70003

/hbase/temp

/hbase/tmp

## Show watch information by path

[bigdata@carbondata zookeeper-3.4.14]$ echo wchp|nc 192.168.133.132 2181

/hbase/temp

0x100002177b70003

/hbase/tmp

0x100002177b70003