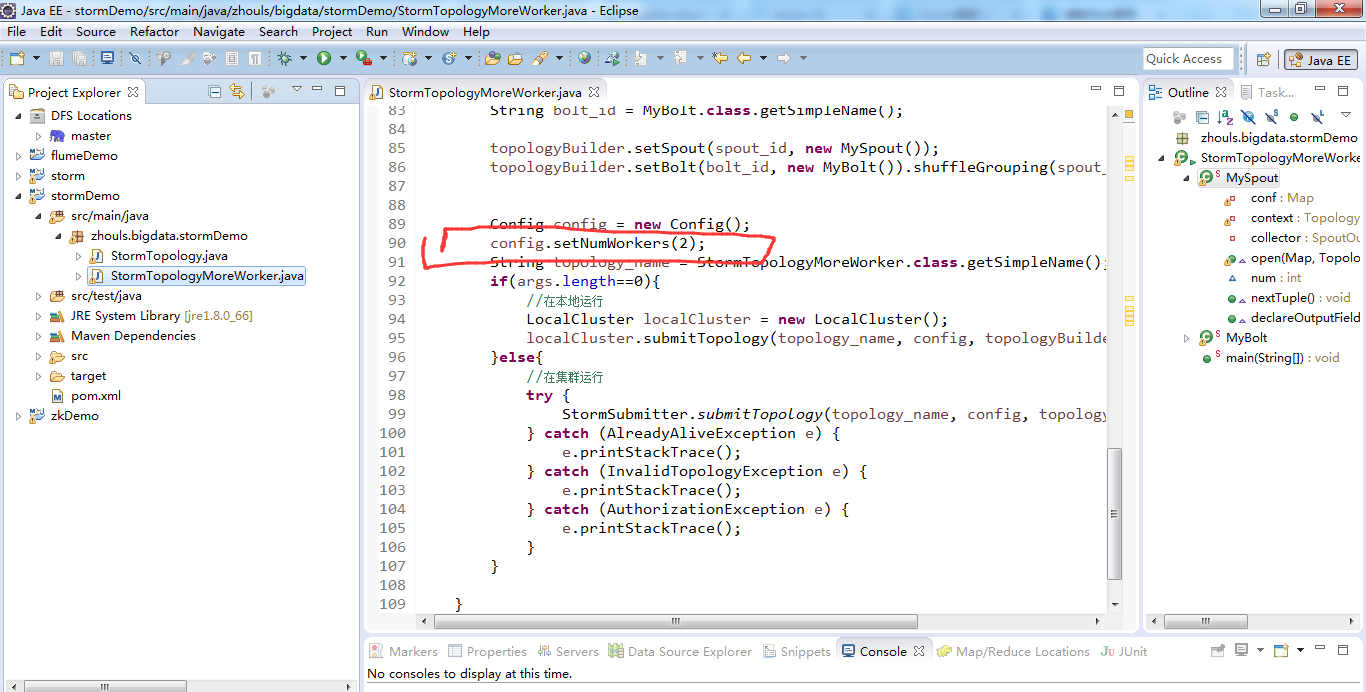

Continuing from the previous post.

StormTopologyMoreWorker.java


```java
package zhouls.bigdata.stormDemo;

import java.util.Map;

import org.apache.storm.Config;
import org.apache.storm.LocalCluster;
import org.apache.storm.StormSubmitter;
import org.apache.storm.generated.AlreadyAliveException;
import org.apache.storm.generated.AuthorizationException;
import org.apache.storm.generated.InvalidTopologyException;
import org.apache.storm.spout.SpoutOutputCollector;
import org.apache.storm.task.OutputCollector;
import org.apache.storm.task.TopologyContext;
import org.apache.storm.topology.OutputFieldsDeclarer;
import org.apache.storm.topology.TopologyBuilder;
import org.apache.storm.topology.base.BaseRichBolt;
import org.apache.storm.topology.base.BaseRichSpout;
import org.apache.storm.tuple.Fields;
import org.apache.storm.tuple.Tuple;
import org.apache.storm.tuple.Values;
import org.apache.storm.utils.Utils;

public class StormTopologyMoreWorker {

    /** Spout that emits an increasing integer (1, 2, 3, ...) once per second. */
    public static class MySpout extends BaseRichSpout {

        private Map conf;
        private TopologyContext context;
        private SpoutOutputCollector collector;

        // Counter kept in this spout instance
        private int num = 0;

        @Override
        public void open(Map conf, TopologyContext context, SpoutOutputCollector collector) {
            this.conf = conf;
            this.collector = collector;
            this.context = context;
        }

        @Override
        public void nextTuple() {
            num++;
            System.out.println("spout:" + num);
            // Emit the current value as a one-field tuple
            this.collector.emit(new Values(num));
            Utils.sleep(1000);
        }

        @Override
        public void declareOutputFields(OutputFieldsDeclarer declarer) {
            // The single output field is named "num"; MyBolt reads it by this name
            declarer.declare(new Fields("num"));
        }
    }

    /** Bolt that keeps a running sum of every "num" it receives. */
    public static class MyBolt extends BaseRichBolt {

        private Map stormConf;
        private TopologyContext context;
        private OutputCollector collector;

        // Running total, local to this bolt instance
        private int sum = 0;

        @Override
        public void prepare(Map stormConf, TopologyContext context, OutputCollector collector) {
            this.stormConf = stormConf;
            this.context = context;
            this.collector = collector;
        }

        @Override
        public void execute(Tuple input) {
            Integer num = input.getIntegerByField("num");
            sum += num;
            System.out.println("sum=" + sum);
        }

        @Override
        public void declareOutputFields(OutputFieldsDeclarer declarer) {
            // Terminal bolt: it emits nothing, so no output fields are declared
        }
    }

    public static void main(String[] args) {
        TopologyBuilder topologyBuilder = new TopologyBuilder();
        String spout_id = MySpout.class.getSimpleName();
        String bolt_id = MyBolt.class.getSimpleName();
        topologyBuilder.setSpout(spout_id, new MySpout());
        // shuffleGrouping: the spout's tuples are distributed randomly across the bolt's tasks
        topologyBuilder.setBolt(bolt_id, new MyBolt()).shuffleGrouping(spout_id);

        Config config = new Config();
        // Request 2 worker processes (JVMs) for this topology instead of the default 1
        config.setNumWorkers(2);

        String topology_name = StormTopologyMoreWorker.class.getSimpleName();
        if (args.length == 0) {
            // No arguments: run locally in an in-process LocalCluster
            LocalCluster localCluster = new LocalCluster();
            localCluster.submitTopology(topology_name, config, topologyBuilder.createTopology());
        } else {
            // Any argument: submit to the cluster
            try {
                StormSubmitter.submitTopology(topology_name, config, topologyBuilder.createTopology());
            } catch (AlreadyAliveException | InvalidTopologyException | AuthorizationException e) {
                e.printStackTrace();
            }
        }
    }
}
```


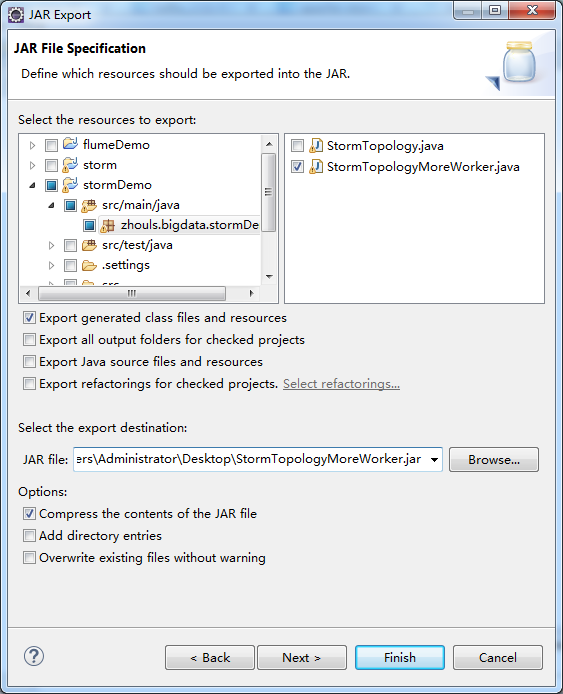
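The sketch below makes the topology's console output concrete. It is a plain-Java simulation of MySpout feeding MyBolt and does not use Storm at all.

It assumes:

- one task each for the spout and the bolt, which is the default when `setSpout`/`setBolt` get no parallelism hint;
- each tuple is fully processed before the next one is emitted.

`SpoutBoltSimulation`, `SummingBolt` and `simulate` are hypothetical names for illustration, not Storm classes. In the real topology the spout and bolt run on separate executor threads. With `numWorkers` set to 2 they may also sit in different worker JVMs. As a result, the printed lines can interleave differently. On a cluster, the lines end up in the worker log files on the supervisor machines rather than in your terminal.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical, Storm-free model of MySpout -> MyBolt with one task each.
class SpoutBoltSimulation {

    // Mirrors MyBolt: a single instance keeps its running total in a field.
    static class SummingBolt {
        private int sum = 0;

        String execute(int num) {
            sum += num;
            return "sum=" + sum;
        }
    }

    // Returns the console lines for the first n tuples, assuming each tuple
    // is fully processed by the bolt before the spout emits the next one.
    static List<String> simulate(int n) {
        List<String> lines = new ArrayList<>();
        SummingBolt bolt = new SummingBolt();
        for (int num = 1; num <= n; num++) {
            lines.add("spout:" + num);      // MySpout.nextTuple() prints, then emits num
            lines.add(bolt.execute(num));   // MyBolt.execute() adds num to its running sum
        }
        return lines;
    }

    public static void main(String[] args) {
        for (String line : simulate(5)) {
            System.out.println(line);
        }
    }
}
```

Running `main` prints `spout:1`, `sum=1`, `spout:2`, `sum=3` and so on, up to `sum=15`. After n tuples the bolt's total is n(n+1)/2, because it adds 1 + 2 + ... + n.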

Package the project as a jar and upload it to the master node:


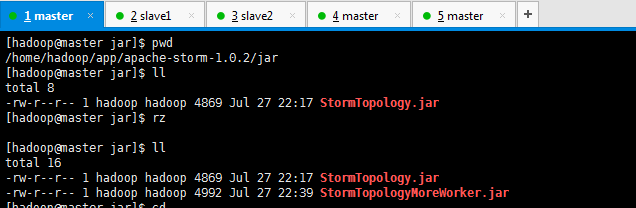

```shell
[hadoop@master jar]$ pwd
/home/hadoop/app/apache-storm-1.0.2/jar
[hadoop@master jar]$ ll
total 8
-rw-r--r-- 1 hadoop hadoop 4869 Jul 27 22:17 StormTopology.jar
[hadoop@master jar]$ rz
[hadoop@master jar]$ ll
total 16
-rw-r--r-- 1 hadoop hadoop 4869 Jul 27 22:17 StormTopology.jar
-rw-r--r-- 1 hadoop hadoop 4992 Jul 27 22:39 StormTopologyMoreWorker.jar
```

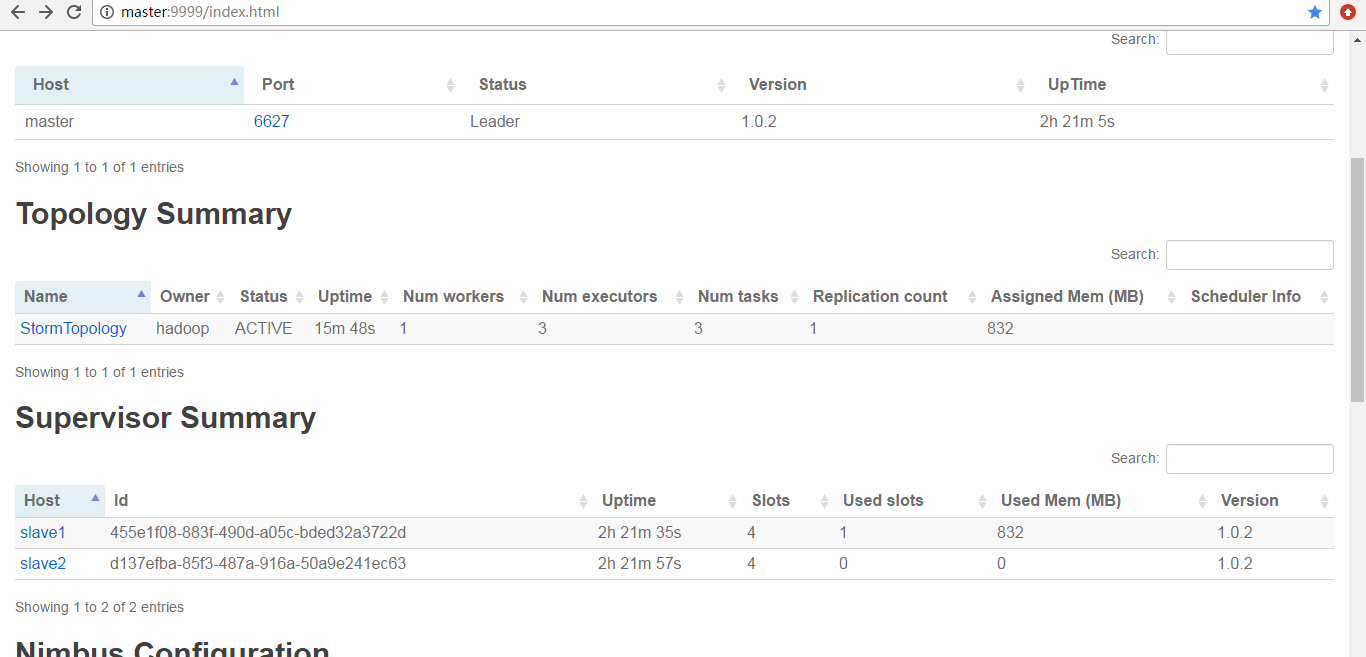

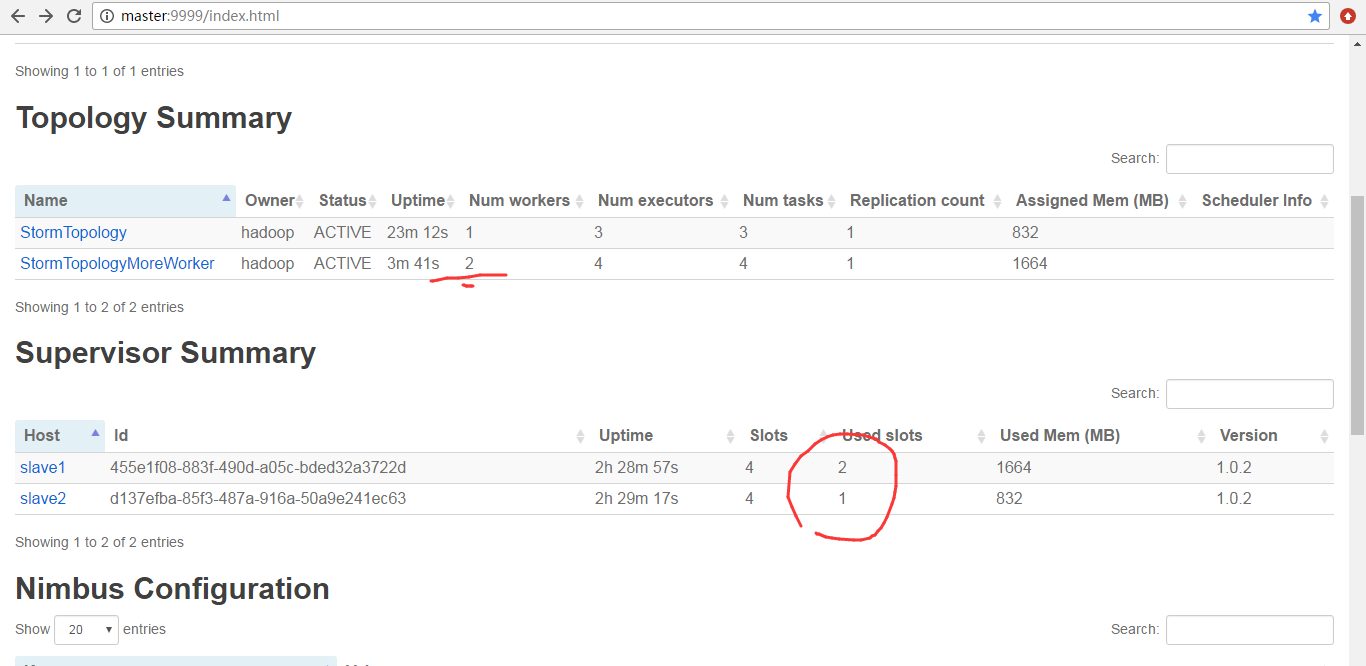

Before submitting the job:


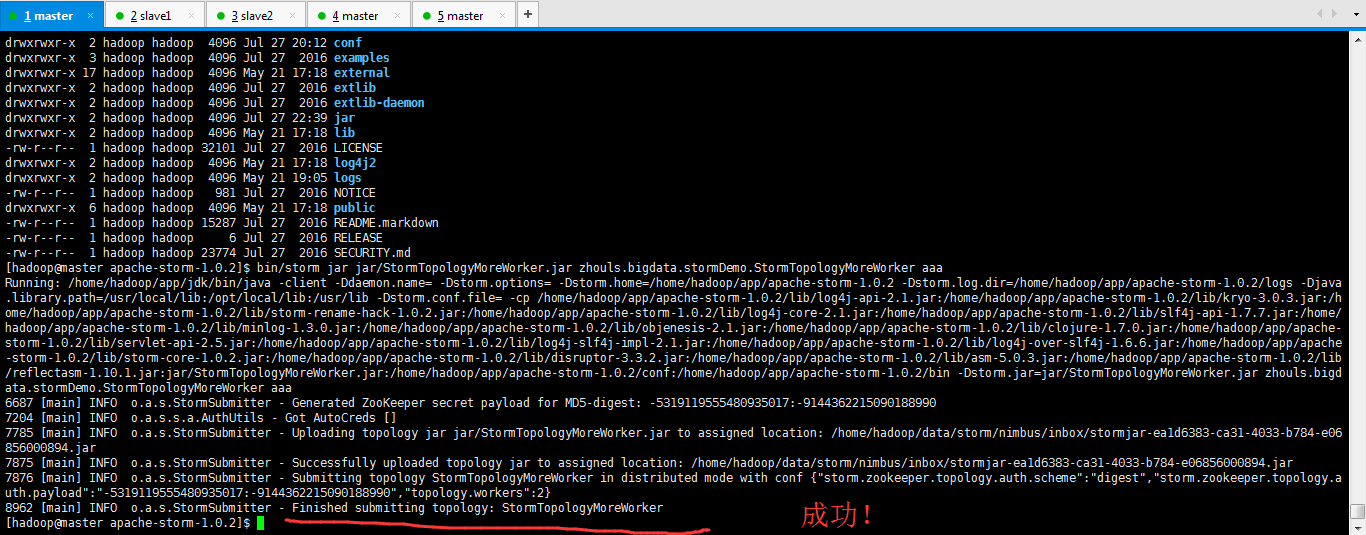

```shell
[hadoop@master apache-storm-1.0.2]$ pwd
/home/hadoop/app/apache-storm-1.0.2
[hadoop@master apache-storm-1.0.2]$ ll
total 208
drwxrwxr-x 2 hadoop hadoop 4096 May 21 17:18 bin
-rw-r--r-- 1 hadoop hadoop 82317 Jul 27 2016 CHANGELOG.md
drwxrwxr-x 2 hadoop hadoop 4096 Jul 27 20:12 conf
drwxrwxr-x 3 hadoop hadoop 4096 Jul 27 2016 examples
drwxrwxr-x 17 hadoop hadoop 4096 May 21 17:18 external
drwxrwxr-x 2 hadoop hadoop 4096 Jul 27 2016 extlib
drwxrwxr-x 2 hadoop hadoop 4096 Jul 27 2016 extlib-daemon
drwxrwxr-x 2 hadoop hadoop 4096 Jul 27 22:39 jar
drwxrwxr-x 2 hadoop hadoop 4096 May 21 17:18 lib
-rw-r--r-- 1 hadoop hadoop 32101 Jul 27 2016 LICENSE
drwxrwxr-x 2 hadoop hadoop 4096 May 21 17:18 log4j2
drwxrwxr-x 2 hadoop hadoop 4096 May 21 19:05 logs
-rw-r--r-- 1 hadoop hadoop 981 Jul 27 2016 NOTICE
drwxrwxr-x 6 hadoop hadoop 4096 May 21 17:18 public
-rw-r--r-- 1 hadoop hadoop 15287 Jul 27 2016 README.markdown
-rw-r--r-- 1 hadoop hadoop 6 Jul 27 2016 RELEASE
-rw-r--r-- 1 hadoop hadoop 23774 Jul 27 2016 SECURITY.md
[hadoop@master apache-storm-1.0.2]$ bin/storm jar jar/StormTopologyMoreWorker.jar zhouls.bigdata.stormDemo.StormTopologyMoreWorker aaa
Running: /home/hadoop/app/jdk/bin/java -client -Ddaemon.name= -Dstorm.options= -Dstorm.home=/home/hadoop/app/apache-storm-1.0.2 -Dstorm.log.dir=/home/hadoop/app/apache-storm-1.0.2/logs -Djava.library.path=/usr/local/lib:/opt/local/lib:/usr/lib -Dstorm.conf.file= -cp /home/hadoop/app/apache-storm-1.0.2/lib/log4j-api-2.1.jar:/home/hadoop/app/apache-storm-1.0.2/lib/kryo-3.0.3.jar:/home/hadoop/app/apache-storm-1.0.2/lib/storm-rename-hack-1.0.2.jar:/home/hadoop/app/apache-storm-1.0.2/lib/log4j-core-2.1.jar:/home/hadoop/app/apache-storm-1.0.2/lib/slf4j-api-1.7.7.jar:/home/hadoop/app/apache-storm-1.0.2/lib/minlog-1.3.0.jar:/home/hadoop/app/apache-storm-1.0.2/lib/objenesis-2.1.jar:/home/hadoop/app/apache-storm-1.0.2/lib/clojure-1.7.0.jar:/home/hadoop/app/apache-storm-1.0.2/lib/servlet-api-2.5.jar:/home/hadoop/app/apache-storm-1.0.2/lib/log4j-slf4j-impl-2.1.jar:/home/hadoop/app/apache-storm-1.0.2/lib/log4j-over-slf4j-1.6.6.jar:/home/hadoop/app/apache-storm-1.0.2/lib/storm-core-1.0.2.jar:/home/hadoop/app/apache-storm-1.0.2/lib/disruptor-3.3.2.jar:/home/hadoop/app/apache-storm-1.0.2/lib/asm-5.0.3.jar:/home/hadoop/app/apache-storm-1.0.2/lib/reflectasm-1.10.1.jar:jar/StormTopologyMoreWorker.jar:/home/hadoop/app/apache-storm-1.0.2/conf:/home/hadoop/app/apache-storm-1.0.2/bin -Dstorm.jar=jar/StormTopologyMoreWorker.jar zhouls.bigdata.stormDemo.StormTopologyMoreWorker aaa
6687 [main] INFO o.a.s.StormSubmitter - Generated ZooKeeper secret payload for MD5-digest: -5319119555480935017:-9144362215090188990
7204 [main] INFO o.a.s.s.a.AuthUtils - Got AutoCreds []
7785 [main] INFO o.a.s.StormSubmitter - Uploading topology jar jar/StormTopologyMoreWorker.jar to assigned location: /home/hadoop/data/storm/nimbus/inbox/stormjar-ea1d6383-ca31-4033-b784-e06856000894.jar
7875 [main] INFO o.a.s.StormSubmitter - Successfully uploaded topology jar to assigned location: /home/hadoop/data/storm/nimbus/inbox/stormjar-ea1d6383-ca31-4033-b784-e06856000894.jar
7876 [main] INFO o.a.s.StormSubmitter - Submitting topology StormTopologyMoreWorker in distributed mode with conf {"storm.zookeeper.topology.auth.scheme":"digest","storm.zookeeper.topology.auth.payload":"-5319119555480935017:-9144362215090188990","topology.workers":2}
8962 [main] INFO o.a.s.StormSubmitter - Finished submitting topology: StormTopologyMoreWorker
[hadoop@master apache-storm-1.0.2]$
```

The trailing `aaa` is just a placeholder argument. Any non-empty `args` makes `main()` take the `StormSubmitter` branch instead of starting a `LocalCluster`. The submission log line containing `"topology.workers":2` confirms that `config.setNumWorkers(2)` was passed to the cluster.


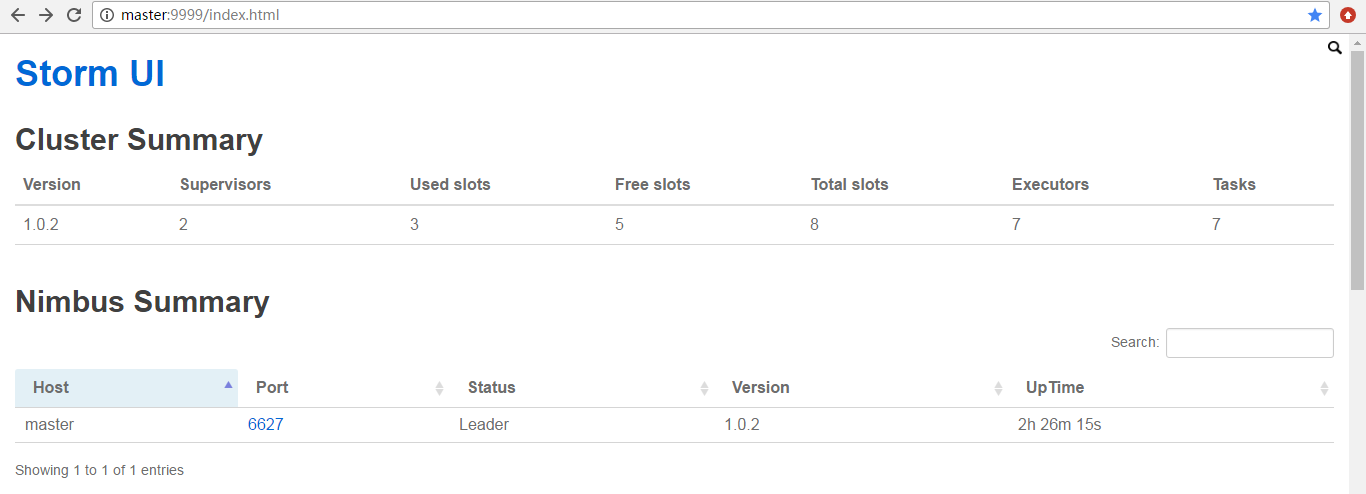

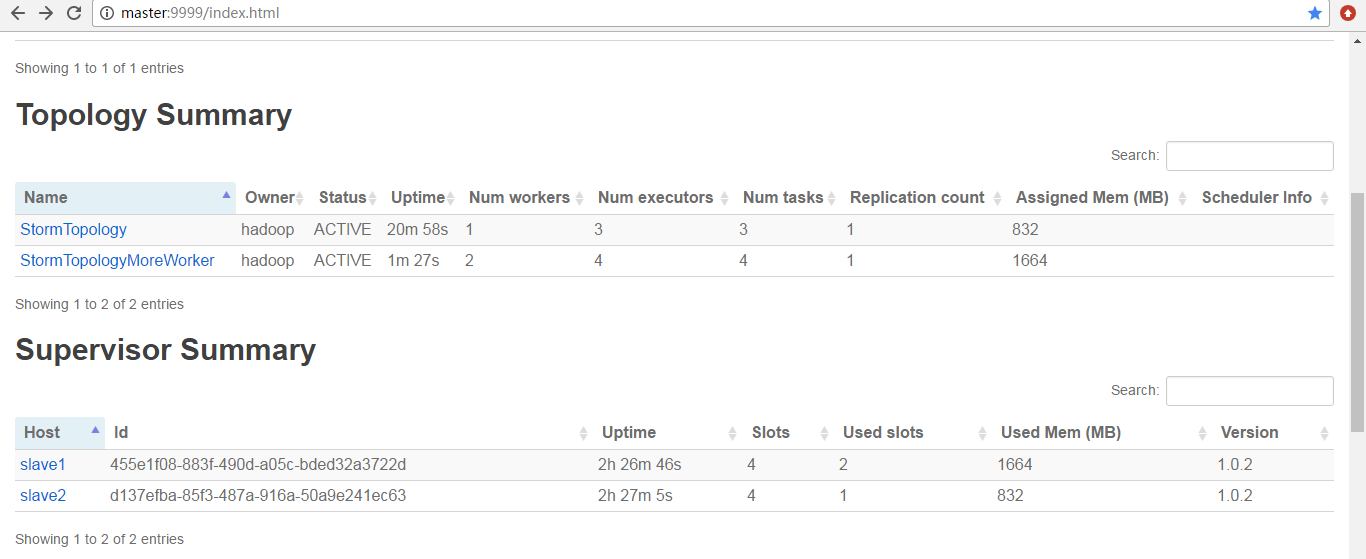

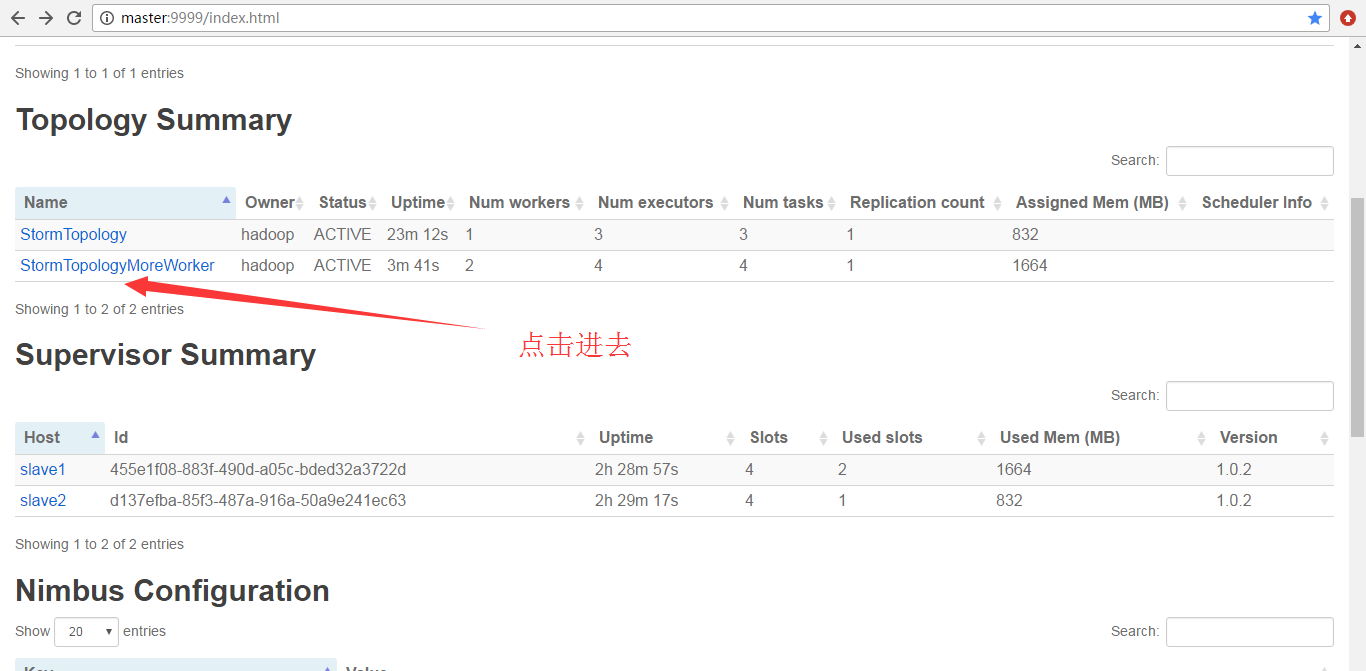

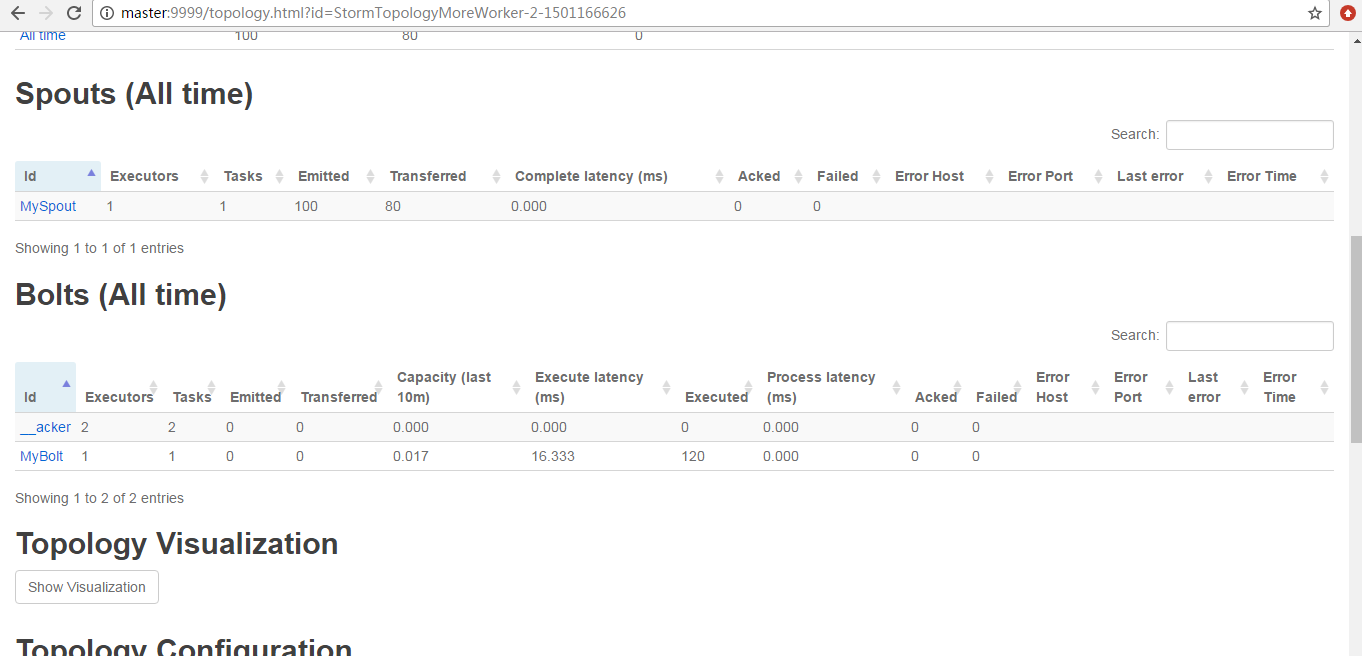

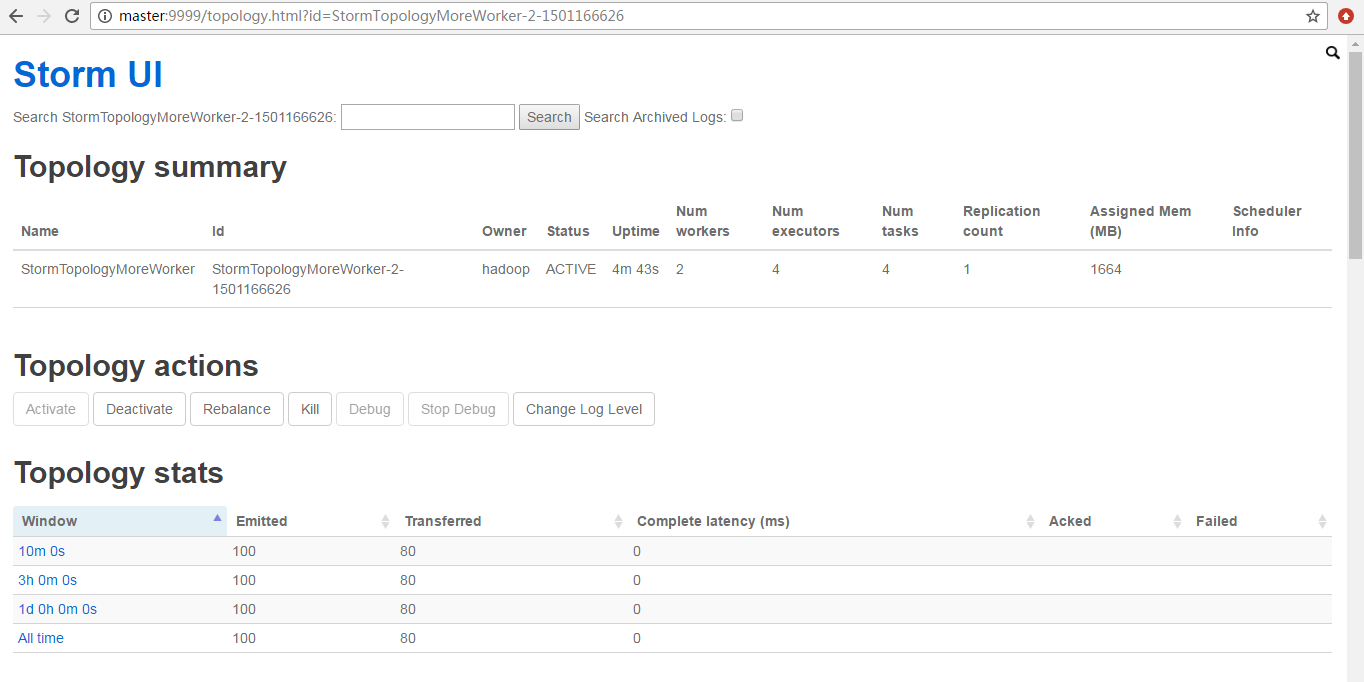

After submitting:


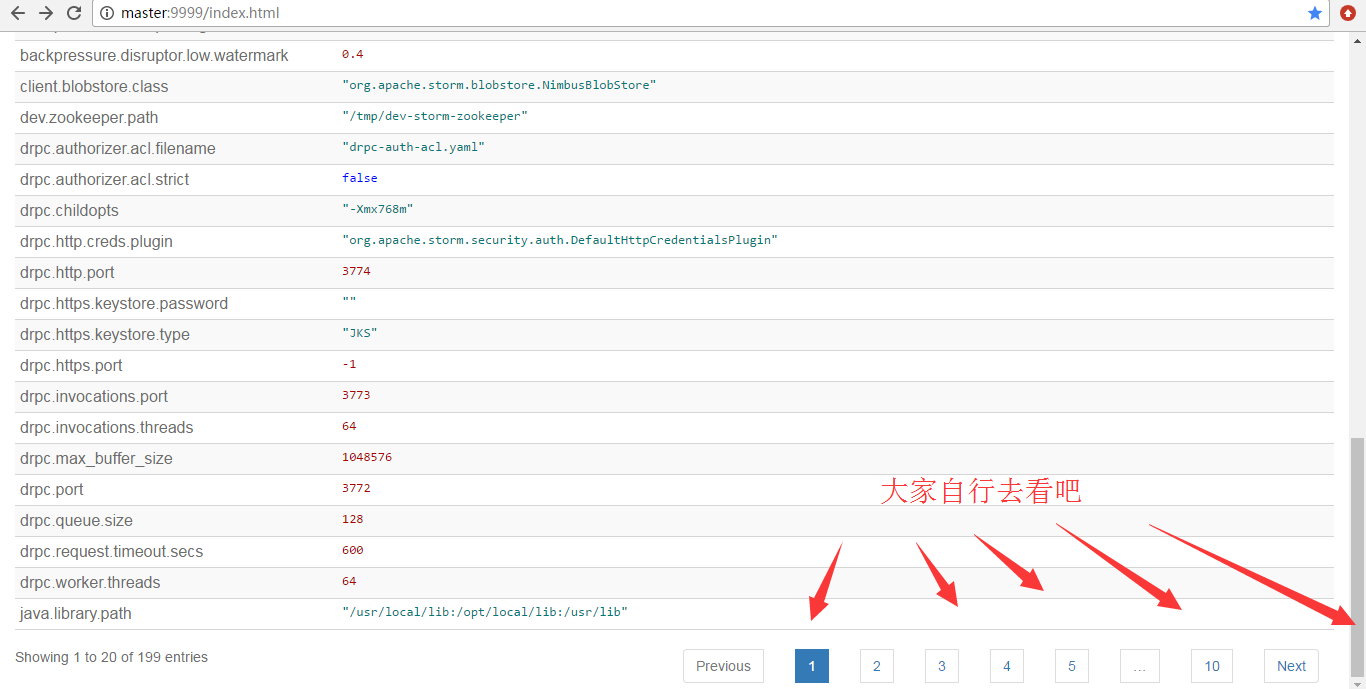

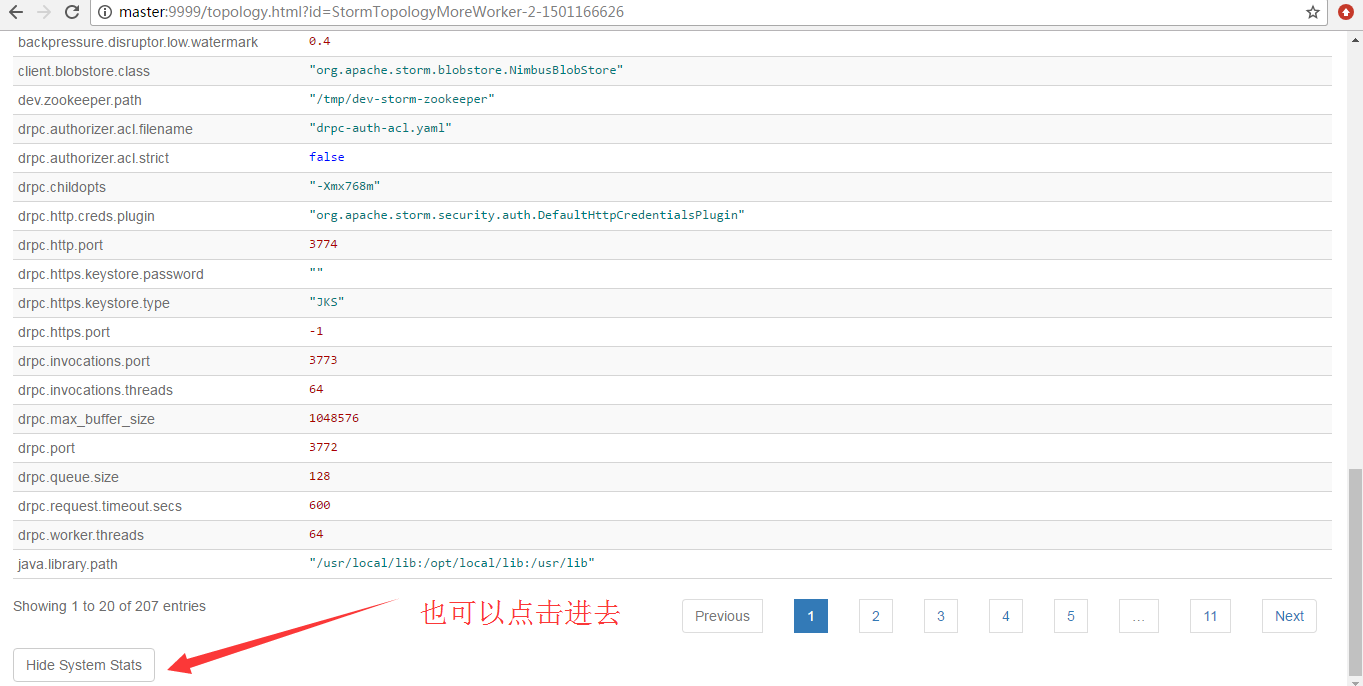

Why are the numbers above what they are? If you want to learn Storm properly, you have to dig in and understand this.


It is because the StormTopology I submitted earlier was still running; I had not stopped it.
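The earlier topology matters because of simple bookkeeping. A topology keeps its workers until it is killed, and each worker occupies one supervisor slot (one port listed in `supervisor.slots.ports`). So cluster-wide worker and slot figures include the old StormTopology as well.

The plain-Java sketch below models only that arithmetic. Its assumptions:

- a cluster of 2 supervisors with 4 slots each;
- the earlier StormTopology using a single worker, which is Storm's default;
- enough free slots for every requested worker.

`SlotAccounting` is a hypothetical class, not part of Storm.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical model: each worker of a running topology occupies one supervisor slot.
class SlotAccounting {

    // Topology name -> number of workers it currently holds
    private final Map<String, Integer> running = new LinkedHashMap<>();
    private final int totalSlots;

    SlotAccounting(int totalSlots) {
        this.totalSlots = totalSlots;
    }

    // Assumes enough free slots exist for every requested worker
    void submit(String topology, int numWorkers) {
        running.put(topology, numWorkers);
    }

    // Killing a topology releases all of its slots
    void kill(String topology) {
        running.remove(topology);
    }

    int usedSlots() {
        int used = 0;
        for (int workers : running.values()) {
            used += workers;
        }
        return used;
    }

    int freeSlots() {
        return totalSlots - usedSlots();
    }

    public static void main(String[] args) {
        SlotAccounting cluster = new SlotAccounting(8);   // assumed: 2 supervisors x 4 slots
        cluster.submit("StormTopology", 1);               // assumed: earlier topology kept the default 1 worker
        cluster.submit("StormTopologyMoreWorker", 2);     // config.setNumWorkers(2)
        System.out.println("used=" + cluster.usedSlots() + " free=" + cluster.freeSlots());
        cluster.kill("StormTopology");
        System.out.println("used=" + cluster.usedSlots() + " free=" + cluster.freeSlots());
    }
}
```

With these assumptions, `main` prints `used=3 free=5` while both topologies run and `used=2 free=6` after StormTopology is killed.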


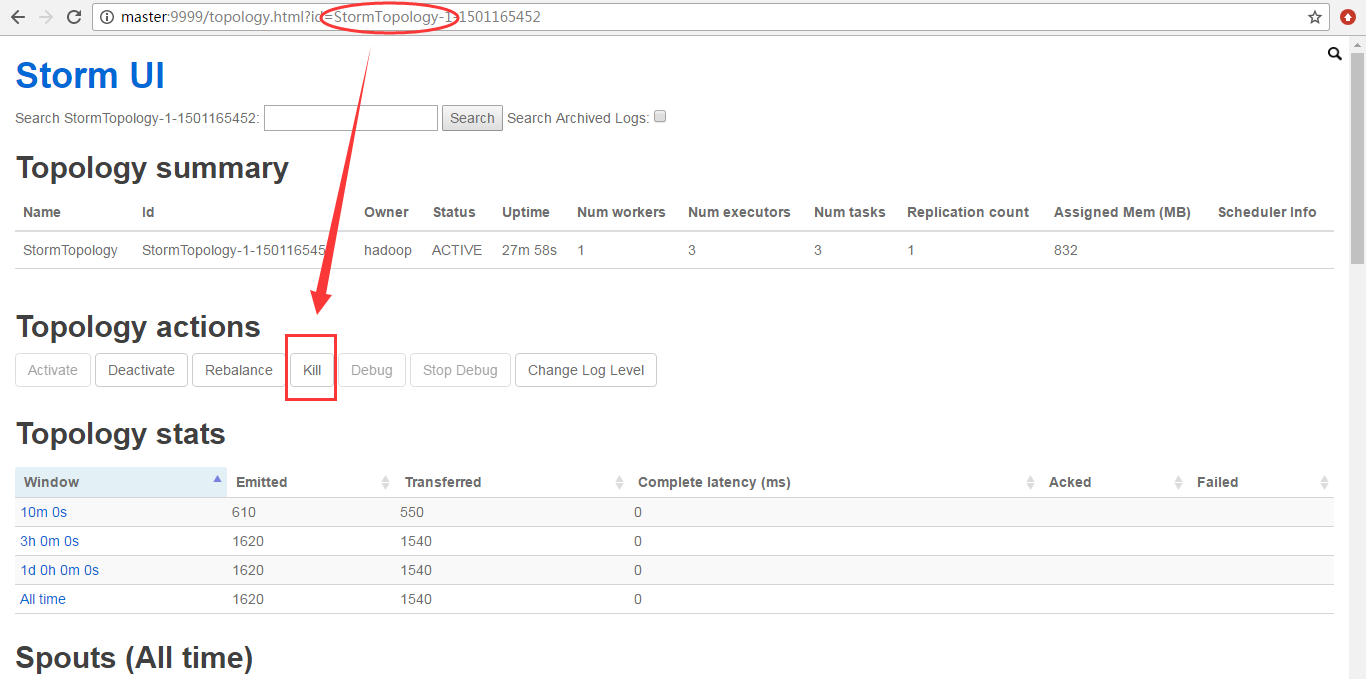

Now I will kill the StormTopology that was still running and look again. This step is very important.


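The prompt tells us the topology will be killed after 30 seconds. During that wait Storm first deactivates the topology's spouts so that tuples already in flight can finish. If you don't want to wait that long, enter a shorter wait time. From the command line, `bin/storm kill StormTopology -w 5` does the same, where `-w` sets the wait in seconds.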


Again, why are the numbers above what they are now? Dig in and work it out; that is how you really learn this.


This article is reposted from the cnblogs blog 大数据躺过的坑. Original link: http://www.cnblogs.com/zlslch/p/7247860.html. For reprint permission, please contact the original author.