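Background:

This article draws on my company's monitoring setup to introduce four components of the Elastic Stack: Elasticsearch, Kibana, Filebeat, and Metricbeat. It covers installation, configuration, and deployment, then walks through concrete cases. Definitions of basic concepts are quoted directly from the official website, since the primary source is the most authoritative and my English is too limited to risk a misleading paraphrase.

In my view, the core component is ES.

Workflow:

Filebeat and Metricbeat collect data and store it in Elasticsearch (abbreviated as ES below); Kibana then displays and visualizes it as charts.

Note:

This article does not cover Logstash because my company does not use it. I may look into it later when time allows.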

Introduction to ES:

Elasticsearch is a highly scalable open-source full-text search and analytics engine. It allows you to store, search, and analyze big volumes of data quickly and in near real time. It is generally used as the underlying engine/technology that powers applications that have complex search features and requirements.

Elasticsearch--Index--Type--Document

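Cluster: the default cluster name is elasticsearch.

Node: usually one machine runs one node.

Index: a collection of documents that share somewhat similar characteristics, comparable to a database in a relational system. Index names must be lowercase, and a single cluster can define as many indices as you want.

Type: a logical category or partition within an index; deprecated as of version 6.0.0.

Document: the basic unit of information that can be indexed.

Shards: when an index holds a large amount of data, queries slow down. You can split the index into multiple shards, which lets you distribute and parallelize operations across shards, increasing performance/throughput.

Replica shards (replicas): provide failover in case a shard or node fails. A replica shard is never allocated on the same node as its primary shard.

You can specify the number of shards and replicas when creating an index. After the index is created, the number of replicas can be changed, but the number of primary shards cannot.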

By default, each index in Elasticsearch is allocated 5 primary shards and 1 replica which means that if you have at least two nodes in your cluster, your index will have 5 primary shards and another 5 replica shards (1 complete replica) for a total of 10 shards per index.
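The shard arithmetic in the paragraph above can be checked with a few lines of Python. This is only an illustrative sketch; the function name `total_shards` is made up here and is not part of any Elasticsearch client.

```python
def total_shards(primaries: int, replicas: int) -> int:
    """Return the total number of shard copies for one index.

    Each primary shard exists once, plus `replicas` additional copies of it.
    """
    if primaries < 1 or replicas < 0:
        raise ValueError("primaries must be >= 1 and replicas must be >= 0")
    return primaries * (1 + replicas)


# The 5.x defaults described above: 5 primaries, 1 replica.
print(total_shards(5, 1))  # 10
```

With `replicas=0` the same index would occupy only its 5 primary shards, which is what you get on a single-node cluster where replicas cannot be allocated.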

Example: shard numbers 0-4 show that the customer index is split into 5 shards; p marks a primary shard and r marks a replica shard.

GET _cat/shards/customer*?v

index shard prirep state docs store ip node

customer 4 p STARTED 0 162b 172.16.101.55 sht-sgmhadoopnn-01

customer 4 r STARTED 0 162b 172.16.101.56 sht-sgmhadoopnn-02

customer 1 r STARTED 0 162b 172.16.101.56 sht-sgmhadoopnn-02

customer 1 p STARTED 0 162b 172.16.101.54 sht-sgmhadoopcm-01

customer 3 r STARTED 1 3.4kb 172.16.101.55 sht-sgmhadoopnn-01

customer 3 p STARTED 1 3.4kb 172.16.101.54 sht-sgmhadoopcm-01

customer 2 p STARTED 0 162b 172.16.101.56 sht-sgmhadoopnn-02

customer 2 r STARTED 0 162b 172.16.101.54 sht-sgmhadoopcm-01

customer 0 r STARTED 0 162b 172.16.101.55 sht-sgmhadoopnn-01

customer 0 p STARTED 0 162b 172.16.101.54 sht-sgmhadoopcm-01

1. Installing ES (three-node cluster) and Kibana

| Hostname/IP | Role |
| --- | --- |
| sht-sgmhadoopcm-01 / 172.16.101.54 | ES/Kibana/Filebeat/Metricbeat/Cassandra/MySQL |
| sht-sgmhadoopnn-01 / 172.16.101.55 | ES |
| sht-sgmhadoopnn-02 / 172.16.101.56 | ES |

(1) Installing ES

Elasticsearch requires at least Java 8:

[root@sht-sgmhadoopcm-01 local]# java -version

java version "1.8.0_111"

Java(TM) SE Runtime Environment (build 1.8.0_111-b14)

Java HotSpot(TM) 64-Bit Server VM (build 25.111-b14, mixed mode)

[root@sht-sgmhadoopcm-01 ~]# useradd elasticsearch

[root@sht-sgmhadoopcm-01 ~]# wget https://artifacts.elastic.co/downloads/elasticsearch/elasticsearch-5.5.2.tar.gz

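[root@sht-sgmhadoopcm-01 ~]# tar xf elasticsearch-5.5.2.tar.gz -C /usr/local

[root@sht-sgmhadoopcm-01 ~]# mv /usr/local/elasticsearch-5.5.2 /usr/local/elasticsearch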

[root@sht-sgmhadoopcm-01 ~]# mkdir /usr/local/elasticsearch/{data,logs}

[root@sht-sgmhadoopcm-01 ~]# vim /usr/local/elasticsearch/config/elasticsearch.yml

cluster.name: myelasticsearch

node.name: ${HOSTNAME}

path.data: /usr/local/elasticsearch/data

path.logs: /usr/local/elasticsearch/logs

network.host: 172.16.101.54

# Port for the external HTTP service

http.port: 9200

# Port for communication between cluster nodes

#transport.tcp.port: 9300

http.cors.enabled: true

http.cors.allow-origin: "*"

node.master: true

node.data: true

node.ingest: true

# Default number of index replicas and shards:

#index.number_of_replicas: 3

#index.number_of_shards: 5

discovery.zen.ping.unicast.hosts: ["172.16.101.54:9300","172.16.101.55:9300","172.16.101.56:9300"]

discovery.zen.minimum_master_nodes: 2 # Prevents split brain: (master_eligible_nodes / 2) + 1
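The value of discovery.zen.minimum_master_nodes follows from the quorum formula (master_eligible_nodes / 2) + 1, using integer division. A small Python sketch of that calculation is given here; the helper name `minimum_master_nodes` is chosen only for illustration.

```python
def minimum_master_nodes(master_eligible_nodes: int) -> int:
    """Quorum of master-eligible nodes: (n / 2) + 1 with integer division."""
    if master_eligible_nodes < 1:
        raise ValueError("a cluster needs at least one master-eligible node")
    return master_eligible_nodes // 2 + 1


# Three master-eligible nodes, as in this cluster.
print(minimum_master_nodes(3))  # 2
```

With a quorum of 2 out of 3, a single isolated node cannot elect itself master, which is what prevents two halves of a partitioned cluster from each forming their own cluster.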

[root@sht-sgmhadoopcm-01 ~]# chown -R elasticsearch:elasticsearch /usr/local/elasticsearch

[root@sht-sgmhadoopcm-01 ~]# vi /etc/security/limits.conf

* soft nproc 65536

* hard nproc 65536

* soft nofile 65536

* hard nofile 65536

[root@sht-sgmhadoopcm-01 ~]# vi /etc/sysctl.conf

vm.max_map_count= 262144

[root@sht-sgmhadoopcm-01 ~]# sysctl -p

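Note:

The same installation and configuration is required on the other two nodes, 172.16.101.55 and 172.16.101.56. You can also rsync the directory to those nodes, adjust each node's configuration file (for example network.host), and then start Elasticsearch. The first node to start becomes the master.

The other two nodes automatically discover the master of the cluster named myelasticsearch and join the cluster as data nodes. Node discovery is handled by Zen Discovery.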

[root@sht-sgmhadoopcm-01 local]# rsync -avz --progress /usr/local/elasticsearch sht-sgmhadoopnn-01:/usr/local/

[root@sht-sgmhadoopcm-01 local]# rsync -avz --progress /usr/local/elasticsearch sht-sgmhadoopnn-02:/usr/local/

Start the nodes one after another:

[root@sht-sgmhadoopcm-01 ~]# su - elasticsearch -c "/usr/local/elasticsearch/bin/elasticsearch &"

[root@sht-sgmhadoopnn-01 ~]# su - elasticsearch -c "/usr/local/elasticsearch/bin/elasticsearch &"

[root@sht-sgmhadoopnn-02 ~]# su - elasticsearch -c "/usr/local/elasticsearch/bin/elasticsearch &"

[root@sht-sgmhadoopcm-01 elasticsearch]# ss -nltup|egrep "9200|9300"

tcp LISTEN 0 128 ::ffff:172.16.101.54:9200 :::* users:(("java",pid=2601,fd=158))

tcp LISTEN 0 128 ::ffff:172.16.101.54:9300 :::* users:(("java",pid=2601,fd=118))

[root@sht-sgmhadoopcm-01 elasticsearch]# curl http://172.16.101.54:9200/

{

"name" : "sht-sgmhadoopcm-01",

"cluster_name" : "myelasticsearch",

"cluster_uuid" : "GOyhthoIQmebXKddPpZ4eQ",

"version" : {

"number" : "5.5.2",

"build_hash" : "b2f0c09",

"build_date" : "2017-08-14T12:33:14.154Z",

"build_snapshot" : false,

"lucene_version" : "6.6.0"

},

"tagline" : "You Know, for Search"

}

(2) Installing Kibana

[root@sht-sgmhadoopcm-01 local]# tar xf kibana-5.5.2-linux-x86_64.tar.gz

[root@sht-sgmhadoopcm-01 local]# mv kibana-5.5.2-linux-x86_64 kibana

[root@sht-sgmhadoopcm-01 kibana]# vim config/kibana.yml

server.port: 5601

server.host: "172.16.101.54"

elasticsearch.url: "http://172.16.101.54:9200"

kibana.index: ".kibana"

[root@sht-sgmhadoopcm-01 kibana]# bin/kibana &

[root@sht-sgmhadoopcm-01 kibana]# ss -nltup|grep 5601

tcp LISTEN 0 128 172.16.101.54:5601 *:* users:(("node",pid=6503,fd=14))

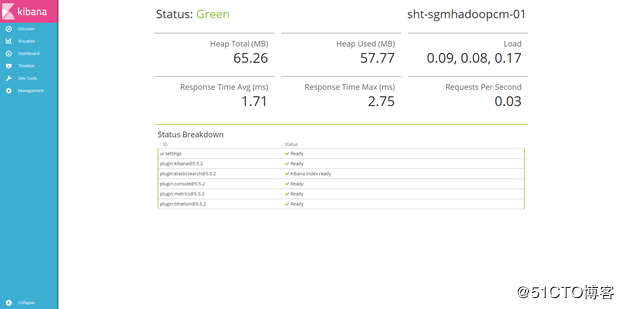

Access Kibana in a browser: http://172.16.101.54:5601/

Check the Kibana status: http://172.16.101.54:5601/status

![1528211721161774.png blob.png]()

(3)Loading Sample Data

• A set of fictitious accounts with randomly generated data. Download this data set by clicking here: accounts.zip

[root@sht-sgmhadoopcm-01 tmp]# unzip accounts.zip

[root@sht-sgmhadoopcm-01 tmp]# curl -H 'Content-Type: application/x-ndjson' -XPOST '172.16.101.54:9200/bank/account/_bulk?pretty' --data-binary @accounts.json

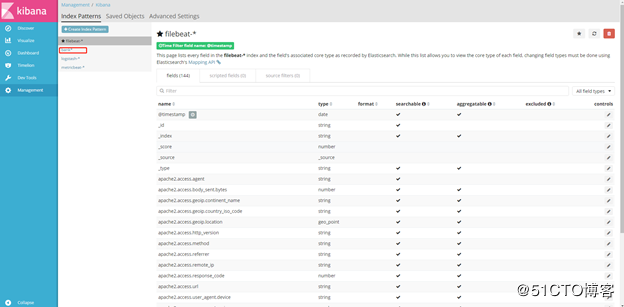

Create index pattern: bank*

![1528211826395606.png blob.png]()

![1528211837303977.png blob.png]()

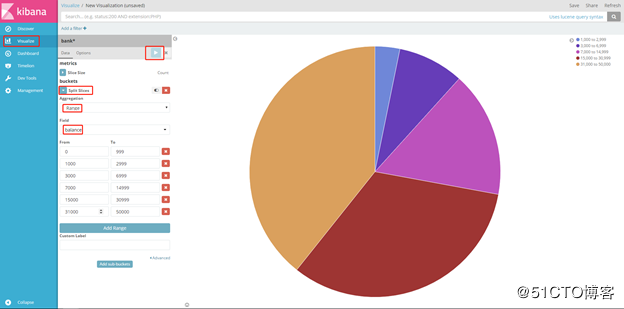

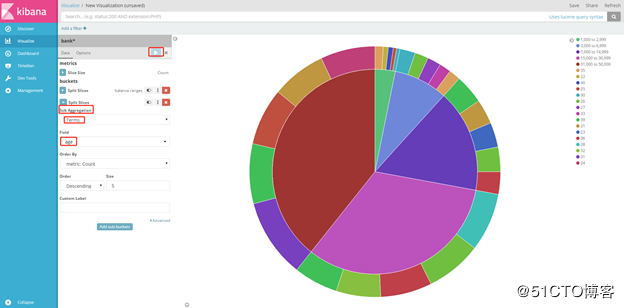

(4)Create a visualization

Now you can see what proportion of the 1000 accounts fall into each balance range.

![1528211896327886.png blob.png]()

Now you can see the break down of the account holders' ages displayed in a ring around the balance ranges.

![1528211905941077.png blob.png]()

2. Exploring the Cluster

There are two ways to access Elasticsearch: calling the REST API with curl from a terminal, or using Kibana's console (Dev Tools).

(1) REST API with curl

list health

[root@sht-sgmhadoopcm-01 local]# curl -X GET "172.16.101.54:9200/_cat/health?v"

epoch timestamp cluster status node.total node.data shards pri relo init unassign pending_tasks max_task_wait_time active_shards_percent

1527909806 11:23:26 myelasticsearch green 3 3 12 6 0 0 0 0 - 100.0%

list all nodes

[root@sht-sgmhadoopcm-01 local]# curl -X GET "172.16.101.54:9200/_cat/nodes?v"

ip heap.percent ram.percent cpu load_1m load_5m load_15m node.role master name

172.16.101.56 5 78 0 0.00 0.01 0.05 mdi - sht-sgmhadoopnn-02

172.16.101.54 10 88 1 0.00 0.05 0.05 mdi * sht-sgmhadoopcm-01

172.16.101.55 9 48 0 0.00 0.01 0.05 mdi - sht-sgmhadoopnn-01

list all indices

[root@sht-sgmhadoopcm-01 config]# curl -X GET "172.16.101.54:9200/_cat/indices?v"

create an index

[root@sht-sgmhadoopcm-01 config]# curl -X PUT "172.16.101.54:9200/customer?pretty"

(2) Kibana Dev Tools:

GET /_cat/health?v

GET /_cat/nodes?v

GET /_cat/indices?v

GET _cat/shards?v

GET _tasks

create index:

PUT /customer?pretty

list all index:

GET /_cat/indices?v

health status index uuid pri rep docs.count docs.deleted store.size pri.store.size

green open .kibana RMboQeUhQ2-7sL9Ri1YvOg 1 1 1 0 6.4kb 3.2kb

green open customer ad1prAx1RM-NpYpt_8AJrA 5 1 1 0 8.1kb 4kb

Put something into customer index

<REST Verb> /<Index>/<Type>/<ID>

PUT /customer/external/1?pretty

{

"name": "John Doe"

}

GET /customer/external/1?pretty

{

"_index": "customer",

"_type": "external",

"_id": "1",

"_version": 1,

"found": true,

"_source": {

"name": "John Doe"

}

}

delete an index:

DELETE /customer?pretty

Modify data

PUT /customer/external/1?pretty

{

"name": "Jane Doe"

}

index a document without an explicit ID

POST /customer/external?pretty

{

"name": "Jane Doe"

}

update document ID=1

POST /customer/external/1/_update?pretty

{

"doc": { "name": "dehu", "age": 20 }

}
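The _update request above sends only a partial document under "doc", which Elasticsearch merges into the stored document. The following Python sketch imitates that merge on plain dictionaries; `merge_partial` is a hypothetical helper written for illustration, not an Elasticsearch API, and it ignores versioning and scripting.

```python
def merge_partial(source: dict, partial: dict) -> dict:
    """Merge a partial document into an existing one.

    Nested objects are merged recursively; scalar values and arrays
    from the partial document replace the existing values.
    """
    merged = dict(source)
    for key, value in partial.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_partial(merged[key], value)
        else:
            merged[key] = value
    return merged


stored = {"name": "Jane Doe"}
updated = merge_partial(stored, {"name": "dehu", "age": 20})
print(updated)  # {'name': 'dehu', 'age': 20}
```

Fields that are not mentioned in the partial document are left untouched, which is the main difference from re-indexing the whole document with PUT.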

delete document id=AWO_DBrV5YtRPiTwnyJh

DELETE /customer/external/AWO_DBrV5YtRPiTwnyJh?pretty

batch processing

POST /customer/external/_bulk?pretty

{"index":{"_id":"1"}}

{"name": "John Doe" }

{"index":{"_id":"2"}}

{"name": "Jane Doe" }

POST /customer/external/_bulk?pretty

{"update":{"_id":"1"}}

{"doc": { "name": "Jane Doe" } }

{"delete":{"_id":"2"}}
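The _bulk body is newline-delimited JSON: one action line, optionally followed by one source line, and the body must end with a newline. As a rough sketch, such a body could be assembled in Python as follows; `bulk_body` is an illustrative name, and sending the result to the cluster is left out.

```python
import json


def bulk_body(actions):
    """Build an NDJSON body for the _bulk API.

    `actions` is an iterable of (action_meta, source) pairs; `source`
    is None for actions such as delete that take no source line.
    """
    lines = []
    for meta, source in actions:
        lines.append(json.dumps(meta))
        if source is not None:
            lines.append(json.dumps(source))
    # The bulk API requires a trailing newline after the last line.
    return "\n".join(lines) + "\n"


body = bulk_body([
    ({"update": {"_id": "1"}}, {"doc": {"name": "Jane Doe"}}),
    ({"delete": {"_id": "2"}}, None),
])
print(body, end="")
```

Because every action is a separate line, one failing action does not abort the others; the response reports the status of each action individually.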

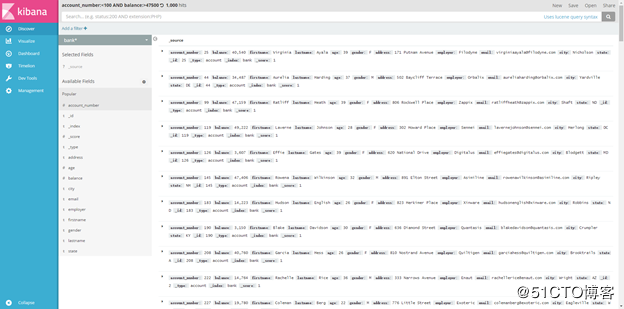

3. Exploring Data

# Load the contents of accounts.json into the bank index

Download the data: https://raw.githubusercontent.com/elastic/elasticsearch/master/docs/src/test/resources/accounts.json#

and load it into our cluster as follows:

[root@alish1-monitor-01 tmp]# curl -H "Content-Type: application/json" -XPOST '172.16.101.54:9200/bank/account/_bulk?pretty&refresh' --data-binary "@accounts.json"

GET /_cat/indices/bank?v

health status index uuid pri rep docs.count docs.deleted store.size pri.store.size

green open bank czWxsadvTJmUA1VDZxUa7w 5 1 1000 0 1.3mb 680.2kb

The search API

GET /bank/_search

GET /bank/_search?q=*&sort=account_number:asc&pretty

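ES query DSL syntax

match_all matches every document,

from is the zero-based offset of the first hit to return,

size is the number of documents to return,

sort defines the sort order,

_source selects which fields (key-value pairs) are returned.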
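To make the roles of these parameters concrete, here is a small Python sketch that builds a search body and imitates how from and size select a window of the sorted hits. Both function names are invented for this example, and no request is actually sent to Elasticsearch.

```python
def search_body(from_=0, size=10, sort_field=None, order="asc", source=None):
    """Build a match_all query body using from, size, sort and _source."""
    body = {"query": {"match_all": {}}, "from": from_, "size": size}
    if sort_field is not None:
        body["sort"] = {sort_field: {"order": order}}
    if source is not None:
        body["_source"] = list(source)
    return body


def page(sorted_hits, from_, size):
    """Imitate from/size: skip `from_` hits, then return at most `size`."""
    return sorted_hits[from_:from_ + size]


print(search_body(from_=1, size=5, sort_field="account_number", order="desc"))

# Account numbers 999..0 in descending order; from=1 skips the first hit.
print(page(list(range(999, -1, -1)), 1, 5))  # [998, 997, 996, 995, 994]
```

Note that from is an offset, not a page number: to get the very first hits, it has to be 0 (the default).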

GET /customer/_search

{

"query": { "match_all": {} },

"size": 10000

}

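# Run in Kibana Dev Tools: returns 5 documents from the bank index sorted by account_number in descending order; from is zero-based, so from: 1 skips the first hit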

GET /bank/_search

{

"query": { "match_all": {} },

"from":1,

"size": 5,

"sort": { "account_number": { "order": "desc" } }

}

# Return only the account_number and balance fields with their values

GET /bank/_search

{

"query": { "match_all": {} },

"_source": ["account_number", "balance"]

}

# Return all documents whose account_number is 20

GET /bank/_search

{

"query": { "match": { "account_number": 20 } }

}

# Return all accounts whose address contains the term "mill" or "lane"

GET /bank/_search

{

"query": { "match": { "address": "mill lane" } }

}

# Return all accounts whose address contains the phrase "mill lane"

GET /bank/_search

{

"query": { "match_phrase": { "address": "mill lane" } }

}
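The difference between match and match_phrase can be illustrated with a heavily simplified Python model. It assumes lowercase whitespace tokenization and ignores scoring, analyzers, and slop, so it only approximates what Elasticsearch does; the function names are made up for this sketch.

```python
def tokens(text: str) -> list:
    """Very rough stand-in for an analyzer: lowercase and split on whitespace."""
    return text.lower().split()


def match(field_text: str, query: str) -> bool:
    """True if the field contains at least one of the query terms."""
    query_terms = set(tokens(query))
    return any(term in query_terms for term in tokens(field_text))


def match_phrase(field_text: str, query: str) -> bool:
    """True if the query terms appear consecutively and in order."""
    doc, phrase = tokens(field_text), tokens(query)
    if not phrase:
        return False
    return any(doc[i:i + len(phrase)] == phrase
               for i in range(len(doc) - len(phrase) + 1))


print(match("990 Mill Road", "mill lane"))         # True
print(match_phrase("990 Mill Road", "mill lane"))  # False
print(match_phrase("198 Mill Lane", "mill lane"))  # True
```

In this model "990 Mill Road" satisfies the match query because it shares the term "mill", but not the phrase query, which needs "mill" directly followed by "lane".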

4. Case 1: Display errors and warnings from Cassandra's system.log on a Dashboard, using data collected by Filebeat

(1) Installing Filebeat

Before installing Filebeat, install and configure the following:

Elasticsearch

Kibana for the UI

Logstash (optional)

[root@sht-sgmhadoopcm-01 local]# wget https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-5.5.2-linux-x86_64.tar.gz

[root@sht-sgmhadoopcm-01 local]# tar xf filebeat-5.5.2-linux-x86_64.tar.gz

[root@sht-sgmhadoopcm-01 local]# mv filebeat-5.5.2-linux-x86_64 filebeat

(2) Configuring Filebeat

[root@sht-sgmhadoopcm-01 filebeat]# vim filebeat.yml

# Define two prospectors: one for system logs, one for Cassandra logs.
filebeat.prospectors:
- input_type: log
  paths:
    - /var/log/*.log
  multiline.pattern: '^[[:space:]]'
  multiline.negate: false
  multiline.match: after
  tags: ["system"]
- input_type: log
  paths:
    - /usr/local/cassandra/logs/*.log
  include_lines: ["^ERR", "^WARN"]
  multiline.pattern: '^[[:space:]]'
  multiline.negate: false
  multiline.match: after
  tags: ["cassandra"]

# Send output to ES
output.elasticsearch:
  hosts: ["172.16.101.54:9200"]

# Logging
logging.level: warning

Test the configuration file:

[root@sht-sgmhadoopcm-01 filebeat]# ./filebeat -configtest -e

Config OK
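How the multiline and include_lines options of the Cassandra prospector interact can be sketched in Python. The sketch assumes what the Filebeat documentation describes: multiline events are assembled first, and include_lines is then applied to each assembled event. The function names and the sample log lines are invented for illustration, and Python's `^\s` is used as a stand-in for the POSIX pattern `^[[:space:]]`.

```python
import re


def join_multiline(lines, pattern=r"^\s"):
    """Append lines matching `pattern` to the previous event
    (the behaviour of negate: false, match: after)."""
    continuation = re.compile(pattern)
    events = []
    for line in lines:
        if events and continuation.search(line):
            events[-1] += "\n" + line
        else:
            events.append(line)
    return events


def include_lines(events, patterns=(r"^ERR", r"^WARN")):
    """Keep only events whose first line matches one of the patterns."""
    compiled = [re.compile(p) for p in patterns]
    return [e for e in events if any(r.search(e) for r in compiled)]


# Hypothetical log lines, only for demonstration.
log = [
    "ERROR [main] something failed",
    "\tat com.example.Foo.bar(Foo.java:1)",
    "INFO [main] all good",
    "WARN [gc] long pause",
]
for event in include_lines(join_multiline(log)):
    print(repr(event))
```

The indented stack-trace line stays attached to its ERROR event, while the INFO line is dropped because it matches neither pattern.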

(3)Loading the index template in ES

By default, Filebeat automatically loads the recommended template file (filebeat.template.json) once it has started and connected to ES.

By default, if a template already exists in the index, it is not overwritten. To overwrite an existing template, set template.overwrite: true in the configuration file.

output.elasticsearch:
  hosts: ["172.16.101.54:9200"]
  template.name: "filebeat"
  template.path: "filebeat.template.json"
  template.overwrite: false

(4)Starting Filebeat

[root@sht-sgmhadoopcm-01 filebeat]# ./filebeat -c filebeat.yml &

[root@sht-sgmhadoopcm-01 filebeat]# curl -X GET "172.16.101.54:9200/_cat/indices?v"

health status index uuid pri rep docs.count docs.deleted store.size pri.store.size

green open filebeat-2018.06.03 Z6NazqtBTT2CHJUM9vmBiA 5 1 3055 0 2.7mb 1.3mb

green open .kibana 41OJk7deTPivsetzt5ZDnw 1 1 2 0 40kb 20kb

(5)Loading the kibana index pattern

[root@sht-sgmhadoopcm-01 filebeat]# ./scripts/import_dashboards -only-index -es http://172.16.101.54:9200

Then create the index pattern filebeat-* in Kibana under Management > Index Patterns.

After you’ve created the index pattern, you can select the filebeat-* index pattern in Kibana to explore Filebeat data.

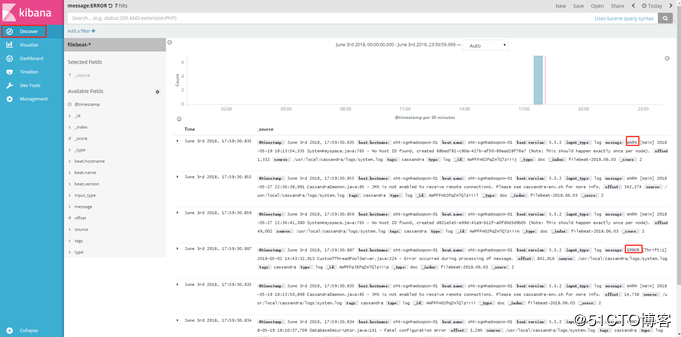

Display all entries in system.log that start with ERR or WARN:


(6) You can also filter for ERROR logs on a Dashboard and project it onto a display, so that problems are noticed and handled promptly:


![1528212075408696.png blob.png]()

(7)Display only ERROR log in the Dashboard.

![1528212087407046.png blob.png]()

5. Case 2: Display system and MySQL module data collected by Metricbeat

Metricbeat is a lightweight shipper that you can install on your servers to periodically collect metrics from the operating system and from services running on the server.

To get started with your own Metricbeat setup, install and configure these related products:

Elasticsearch for storage and indexing the data.

Kibana for the UI.

(1)Installing Metricbeat

[root@sht-sgmhadoopcm-01 local]# tar xf metricbeat-5.5.2-linux-x86_64.tar.gz

[root@sht-sgmhadoopcm-01 local]# mv metricbeat-5.5.2-linux-x86_64 metricbeat

(2) Configuring Metricbeat

[root@sht-sgmhadoopcm-01 metricbeat]# vim metricbeat.yml

metricbeat.modules:
- module: system
  metricsets:
    - cpu
    - filesystem
    - memory
    - network
    - process
  enabled: true
  period: 10s
  processes: ['cassandra','mysql','postgres','java','redis','kafka','beat','mongo','logstash','elastic','grafana']
- module: mysql
  metricsets: ["status"]
  enabled: true
  period: 30s
  hosts: ["root:agm43gadsg@tcp(172.16.101.54:3306)/"]

output.elasticsearch:
  hosts: ["172.16.101.54:9200"]

(3)Loading the index template in ES

By default, Metricbeat automatically loads the recommended template file, metricbeat.template.json, if Elasticsearch output is enabled. You can configure metricbeat to load a different template by adjusting the template.name and template.path options in metricbeat.yml file:

output.elasticsearch:
  hosts: ["172.16.101.54:9200"]
  template.name: "metricbeat"
  template.path: "metricbeat.template.json"
  template.overwrite: false

(4)Starting Metricbeat

[root@sht-sgmhadoopcm-01 metricbeat]# ./metricbeat -c metricbeat.yml &

(5)Loading Sample Kibana Dashboards

[root@sht-sgmhadoopcm-01 metricbeat]# ./scripts/import_dashboards -es http://172.16.101.54:9200

Then create index pattern: metricbeat-*

After importing the dashboards, launch the Kibana web interface by pointing your browser to port 5601.


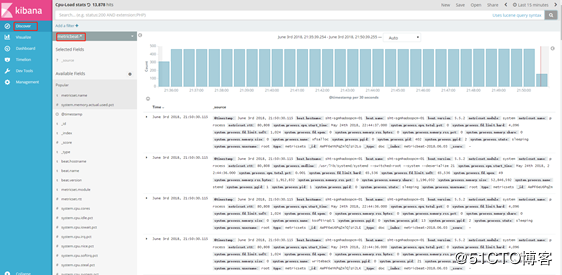

(6)Display System Module Data

![1528212265537678.png blob.png]()

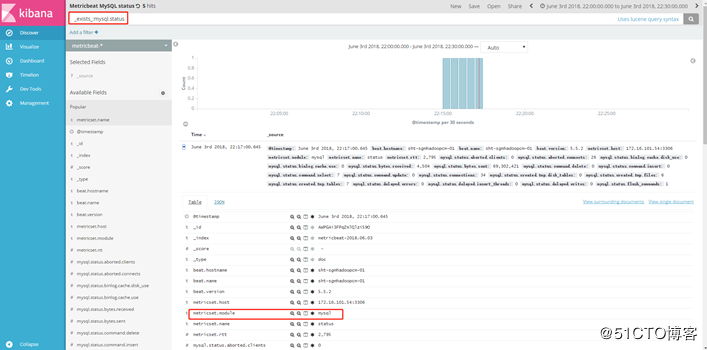

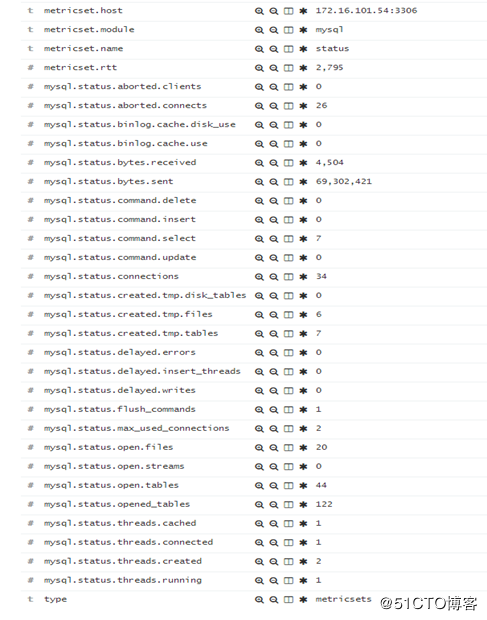

(7)Display MySQL Module Data.

_exists_:mysql.status


![1528212292444920.png blob.png]()

![1528212301237549.png blob.png]()

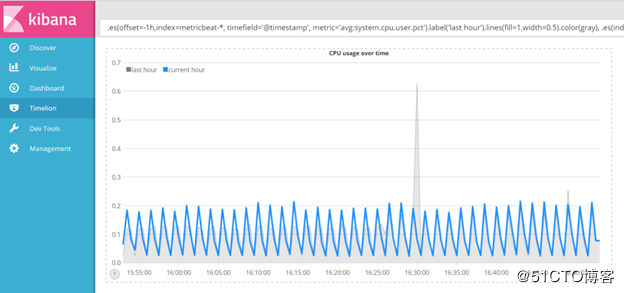

6. Case 3: Creating time series visualizations

(1) system.cpu.user.pct

The first visualization compares the real-time percentage of CPU time spent in user space with the same metric offset by one hour. To create it, you need two Timelion expressions: one with the real-time average of system.cpu.user.pct and another with the average offset by one hour.

Use the following expression to update your visualization:

.es(offset=-1h,index=metricbeat-*, timefield='@timestamp', metric='avg:system.cpu.user.pct').label('last hour').lines(fill=1,width=0.5).color(gray), .es(index=metricbeat-*, timefield='@timestamp', metric='avg:system.cpu.user.pct').label('current hour').title('CPU usage over time').color(#1E90FF).legend(columns=2, position=nw)

![1528212403396804.png blob.png]()

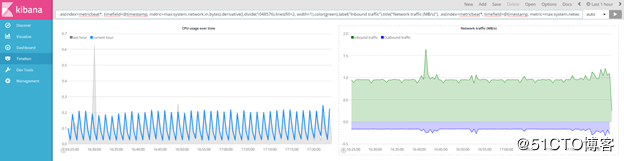

(2)system.network.in.bytes and system.network.out.bytes

Create a new Timelion visualization for inbound and outbound network traffic.

Use the following expression to update your visualization:

.es(index=metricbeat*, timefield=@timestamp, metric=max:system.network.in.bytes).derivative().divide(1048576).lines(fill=2, width=1).color(green).label("Inbound traffic").title("Network traffic (MB/s)"), .es(index=metricbeat*, timefield=@timestamp, metric=max:system.network.out.bytes).derivative().multiply(-1).divide(1048576).lines(fill=2, width=1).color(blue).label("Outbound traffic").legend(columns=2, position=nw)
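The expression above works on cumulative byte counters: derivative() turns them into the change between consecutive buckets, and divide(1048576) converts bytes to MB. A rough Python equivalent of these two steps is sketched below. The function names mirror the Timelion functions but are written from scratch here, and treating the first point as None is an assumption of this sketch.

```python
def derivative(series):
    """Change between consecutive points; the first point has no predecessor."""
    if not series:
        return []
    return [None] + [b - a for a, b in zip(series, series[1:])]


def divide(series, divisor):
    """Divide every non-empty point by `divisor`."""
    return [None if v is None else v / divisor for v in series]


# Hypothetical cumulative counter values of system.network.in.bytes per bucket.
counters = [0, 1048576, 3145728]
print(divide(derivative(counters), 1048576))  # [None, 1.0, 2.0]
```

The result is MB per bucket interval, so it only equals MB/s when each bucket spans one second; with wider buckets the values are correspondingly larger.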

![1528212434385746.png blob.png]()

(3)system.memory.actual.used.bytes

Monitor memory consumption.

Use the following expression to update your visualization:

.es(index=metricbeat-*, timefield='@timestamp', metric='max:system.memory.actual.used.bytes').label('max memory').title('Memory consumption over time'), .es(index=metricbeat-*, timefield='@timestamp', metric='max:system.memory.actual.used.bytes').if(gt,6742000000,.es(index=metricbeat-*, timefield='@timestamp', metric='max:system.memory.actual.used.bytes'),null).label('warning').color('#FFCC11').lines(width=5), .es(index=metricbeat-*, timefield='@timestamp', metric='max:system.memory.actual.used.bytes').if(gt,6744000000,.es(index=metricbeat-*, timefield='@timestamp', metric='max:system.memory.actual.used.bytes'),null).label('severe').color('red').lines(width=5), .es(index=metricbeat-*, timefield='@timestamp', metric='max:system.memory.actual.used.bytes').mvavg(10).label('mvavg').lines(width=2).color(#5E5E5E).legend(columns=4, position=nw)
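The memory expression combines two ideas: if(gt, threshold, series, null) keeps only the points above a threshold so they can be drawn as a highlighted line, and mvavg(10) smooths the series. The Python sketch below imitates both on a plain list. It is a simplified model with invented function names, and Timelion may treat the first points of the moving average differently from the trailing window used here.

```python
def if_gt(series, threshold):
    """Keep points greater than `threshold`; replace the rest with None."""
    return [v if v is not None and v > threshold else None for v in series]


def mvavg(series, window):
    """Trailing moving average over up to `window` points."""
    averaged = []
    for i in range(len(series)):
        chunk = series[max(0, i - window + 1):i + 1]
        averaged.append(sum(chunk) / len(chunk))
    return averaged


# Hypothetical memory samples in bytes.
samples = [6_740_000_000, 6_743_000_000, 6_745_000_000]
print(if_gt(samples, 6_742_000_000))  # warning line
print(if_gt(samples, 6_744_000_000))  # severe line
print(mvavg([2, 4, 6], 2))            # [2.0, 3.0, 5.0]
```

Because points below the threshold become None, the warning and severe series only draw where memory use is actually above their limits.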

![1528212462817319.png blob.png]()

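That is all for today. All of this content comes from the official Elastic Stack documentation: https://www.elastic.co/guide/index.html

This article is only an introduction; there is much more to learn and discuss.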