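In Apache DolphinScheduler, a JavaTask can pass OUT parameters to downstream tasks by printing them in a specific format to the task execution log. The log is captured, and the values found in it are handed to downstream tasks as parameters. This mechanism lets data flow between tasks and makes workflows more flexible and dynamic.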
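To make the idea concrete, here is a minimal sketch of pulling parameters out of log text. It assumes the `${setValue(key=value)}` line format that the demo class later in this article prints. The class name `SetValueLineParser` and the regular expression are illustrative assumptions, not DolphinScheduler's actual parsing code.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper for illustration only; not DolphinScheduler's own parser.
public class SetValueLineParser {

    // Matches ${setValue(key=value)}; the key is everything before the first '='.
    private static final Pattern SET_VALUE =
            Pattern.compile("\\$\\{setValue\\(([^=)]+)=([^)]*)\\)\\}");

    // Collects every key/value pair found in the given log text, in order of appearance.
    public static Map<String, String> parse(String logText) {
        Map<String, String> params = new LinkedHashMap<>();
        Matcher m = SET_VALUE.matcher(logText);
        while (m.find()) {
            params.put(m.group(1).trim(), m.group(2).trim());
        }
        return params;
    }

    public static void main(String[] args) {
        String log = "some ordinary log line\n${setValue(output=123)}\n";
        System.out.println(parse(log)); // prints {output=123}
    }
}
```

Lines that do not match the pattern are simply ignored, so ordinary log output and parameter lines can be mixed freely in this sketch.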

So how exactly is this done? This article walks through it step by step.

0. Modify one line of source code

org.apache.dolphinscheduler.plugin.task.java.JavaTask


1. For a Java class

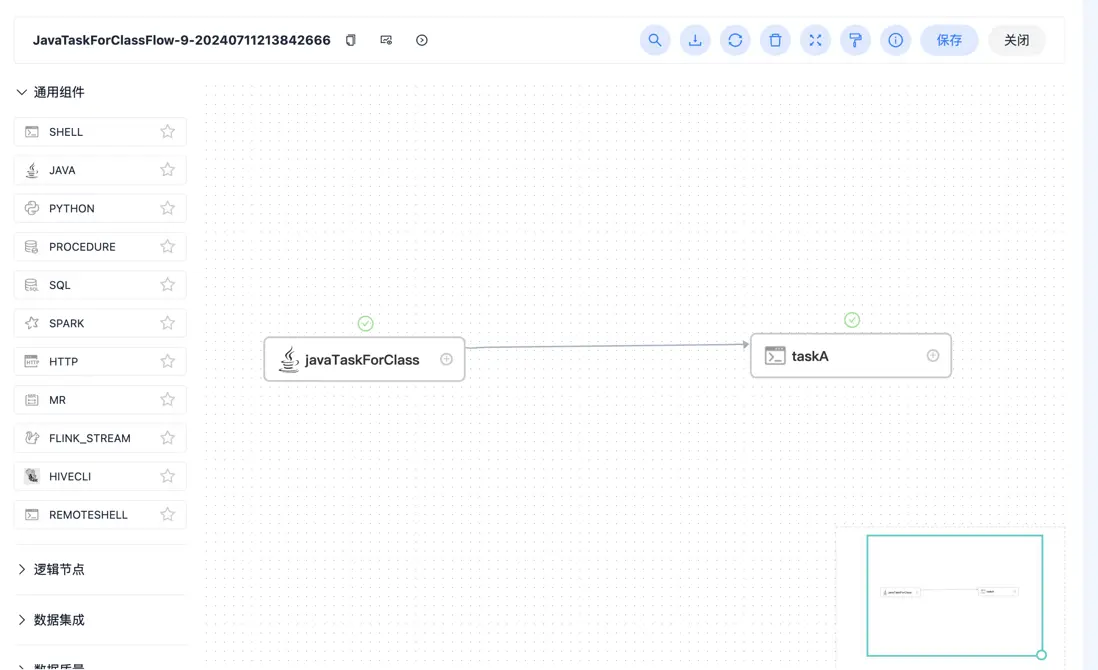

Workflow definition diagram

1.1 javaTaskForClass settings

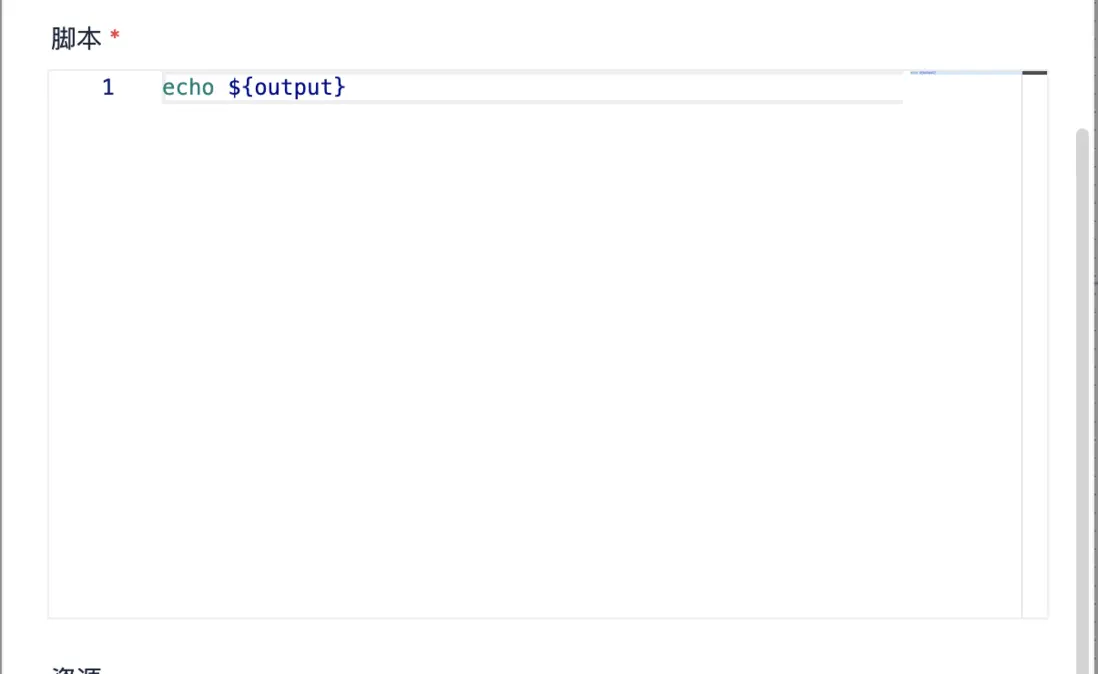

1.2 taskA settings

1.3 taskA output

    [INFO] 2024-07-11 21:38:46.121 +0800 - Set taskVarPool: [{"prop":"output","direct":"IN","type":"VARCHAR","value":"123"}] successfully
    [INFO] 2024-07-11 21:38:46.121 +0800 - ***********************************************************************************************
    [INFO] 2024-07-11 21:38:46.121 +0800 - ********************************* Execute task instance *************************************
    [INFO] 2024-07-11 21:38:46.122 +0800 - ***********************************************************************************************
    [INFO] 2024-07-11 21:38:46.122 +0800 - Final Shell file is:
    [INFO] 2024-07-11 21:38:46.122 +0800 - ****************************** Script Content *****************************************************************
    [INFO] 2024-07-11 21:38:46.122 +0800 - #!/bin/bash
    BASEDIR=$(cd `dirname $0`; pwd)
    cd $BASEDIR
    source /etc/profile
    export HADOOP_HOME=${HADOOP_HOME:-/home/hadoop-3.3.1}
    export HADOOP_CONF_DIR=${HADOOP_CONF_DIR:-/opt/soft/hadoop/etc/hadoop}
    export SPARK_HOME=${SPARK_HOME:-/home/spark-3.2.1-bin-hadoop3.2}
    export PYTHON_HOME=${PYTHON_HOME:-/opt/soft/python}
    export HIVE_HOME=${HIVE_HOME:-/home/hive-3.1.2}
    export FLINK_HOME=/home/flink-1.18.1
    export DATAX_HOME=${DATAX_HOME:-/opt/soft/datax}
    export SEATUNNEL_HOME=/opt/software/seatunnel
    export CHUNJUN_HOME=${CHUNJUN_HOME:-/opt/soft/chunjun}
    export PATH=$HADOOP_HOME/bin:$SPARK_HOME/bin:$PYTHON_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$FLINK_HOME/bin:$DATAX_HOME/bin:$SEATUNNEL_HOME/bin:$CHUNJUN_HOME/bin:$PATH
    echo 123
    [INFO] 2024-07-11 21:38:46.123 +0800 - ****************************** Script Content *****************************************************************
    [INFO] 2024-07-11 21:38:46.123 +0800 - Executing shell command : sudo -u root -i /tmp/dolphinscheduler/exec/process/root/13850571680800/14237629094560_9/2095/1689/2095_1689.sh
    [INFO] 2024-07-11 21:38:46.127 +0800 - process start, process id is: 884510
    [INFO] 2024-07-11 21:38:48.127 +0800 - ->
    123
    [INFO] 2024-07-11 21:38:48.128 +0800 - process has exited. execute path:/tmp/dolphinscheduler/exec/process/root/13850571680800/14237629094560_9/2095/1689, processId:884510 ,exitStatusCode:0 ,processWaitForStatus:true ,processExitValue:0
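The rendered script in the log contains `echo 123`, which suggests that the upstream value of `output` was substituted into taskA's script before it ran. The sketch below illustrates that kind of substitution. It assumes taskA's script was written as `echo ${output}` (the settings screenshot is not reproduced here, so this is a guess), and the class `PlaceholderSketch` and its `render` method are hypothetical names, not DolphinScheduler code.

```java
import java.util.Collections;
import java.util.Map;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical illustration of ${name} substitution; not DolphinScheduler's implementation.
public class PlaceholderSketch {

    // Matches only simple placeholders such as ${output}.
    private static final Pattern PLACEHOLDER = Pattern.compile("\\$\\{(\\w+)\\}");

    // Replaces each ${name} with its value; names missing from the map are left untouched.
    public static String render(String script, Map<String, String> params) {
        Matcher m = PLACEHOLDER.matcher(script);
        StringBuffer out = new StringBuffer();
        while (m.find()) {
            String value = params.get(m.group(1));
            String replacement = value != null ? value : m.group(0);
            m.appendReplacement(out, Matcher.quoteReplacement(replacement));
        }
        m.appendTail(out);
        return out.toString();
    }

    public static void main(String[] args) {
        Map<String, String> varPool = Collections.singletonMap("output", "123");
        System.out.println(render("echo ${output}", varPool)); // prints echo 123
    }
}
```

Because the pattern only matches a bare name between the braces, shell defaults such as `${HADOOP_HOME:-/home/hadoop-3.3.1}` pass through this sketch unchanged.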

2. For a JAR

2.1 Example of packaging the JAR

2.1.1 pom.xml

    <project xmlns="http://maven.apache.org/POM/4.0.0"
             xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
      <modelVersion>4.0.0</modelVersion>

      <groupId>demo</groupId>
      <artifactId>java-demo</artifactId>
      <version>1.0-SNAPSHOT</version>
      <packaging>jar</packaging>

      <name>java-demo</name>
      <url>http://maven.apache.org</url>

      <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
      </properties>

      <dependencies>
        <dependency>
          <groupId>junit</groupId>
          <artifactId>junit</artifactId>
          <version>3.8.1</version>
          <scope>test</scope>
        </dependency>
      </dependencies>

      <build>
        <plugins>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-shade-plugin</artifactId>
            <version>3.2.4</version>
            <executions>
              <execution>
                <phase>package</phase>
                <goals>
                  <goal>shade</goal>
                </goals>
                <configuration>
                  <artifactSet>
                    <excludes>
                      <exclude>com.google.code.findbugs:jsr305</exclude>
                      <exclude>org.slf4j:*</exclude>
                      <exclude>log4j:*</exclude>
                      <exclude>org.apache.hadoop:*</exclude>
                    </excludes>
                  </artifactSet>
                  <filters>
                    <filter>
                      <!-- Do not copy the signatures in the META-INF folder.
                           Otherwise, this might cause SecurityExceptions when using the JAR. -->
                      <artifact>*:*</artifact>
                      <excludes>
                        <exclude>META-INF/*.SF</exclude>
                        <exclude>META-INF/*.DSA</exclude>
                        <exclude>META-INF/*.RSA</exclude>
                      </excludes>
                    </filter>
                  </filters>
                  <transformers combine.children="append">
                    <transformer
                        implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">
                      <mainClass>demo.Demo</mainClass>
                    </transformer>
                  </transformers>
                </configuration>
              </execution>
            </executions>
          </plugin>
          <plugin>
            <groupId>org.apache.maven.plugins</groupId>
            <artifactId>maven-compiler-plugin</artifactId>
            <configuration>
              <source>1.8</source>
              <target>1.8</target>
            </configuration>
          </plugin>
        </plugins>
      </build>
    </project>

2.1.2 Contents of the demo.Demo class

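    package demo;

    public class Demo {

        public static void main(String[] args) {
            // Print the OUT parameter in the special format so that it appears in the
            // task execution log: the parameter "output" is set to the value 123.
            System.out.println("${setValue(output=123)}");
        }
    }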
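The demo above prints a hard-coded value. In practice the value usually comes from the job itself, so a small helper that builds the line can be handy. The following is a sketch under that assumption; `OutParamDemo` and `formatOutParam` are hypothetical names, and the only thing taken from the article is the `${setValue(key=value)}` line format.

```java
// Hypothetical variant of demo.Demo that emits a computed value.
public class OutParamDemo {

    // Builds a line in the same ${setValue(key=value)} format that demo.Demo prints.
    public static String formatOutParam(String key, String value) {
        return "${setValue(" + key + "=" + value + ")}";
    }

    public static void main(String[] args) {
        int result = 120 + 3; // stand-in for a value computed by the job
        System.out.println(formatOutParam("output", String.valueOf(result)));
        // prints ${setValue(output=123)}
    }
}
```

Keeping the formatting in one method avoids typos in the marker, which matter because a line that does not match the expected format would just be treated as ordinary log output.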

2.1.3 Upload the JAR to the Resource Center

Run mvn clean package, then upload the resulting java-demo-1.0-SNAPSHOT.jar to the Resource Center.

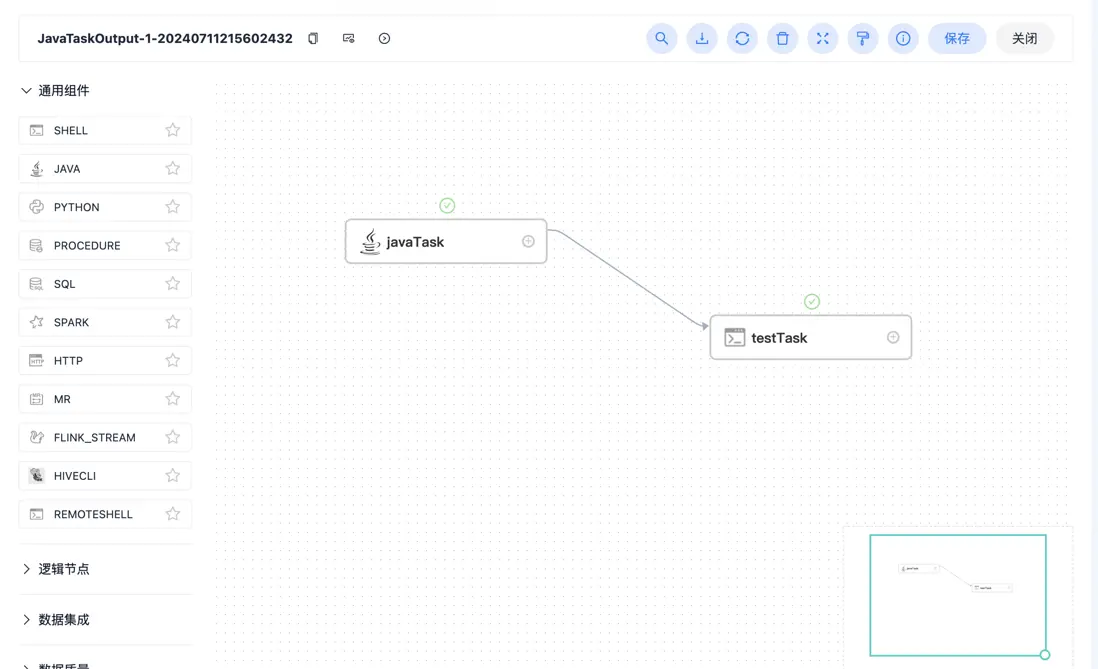

2.2 Workflow definition diagram

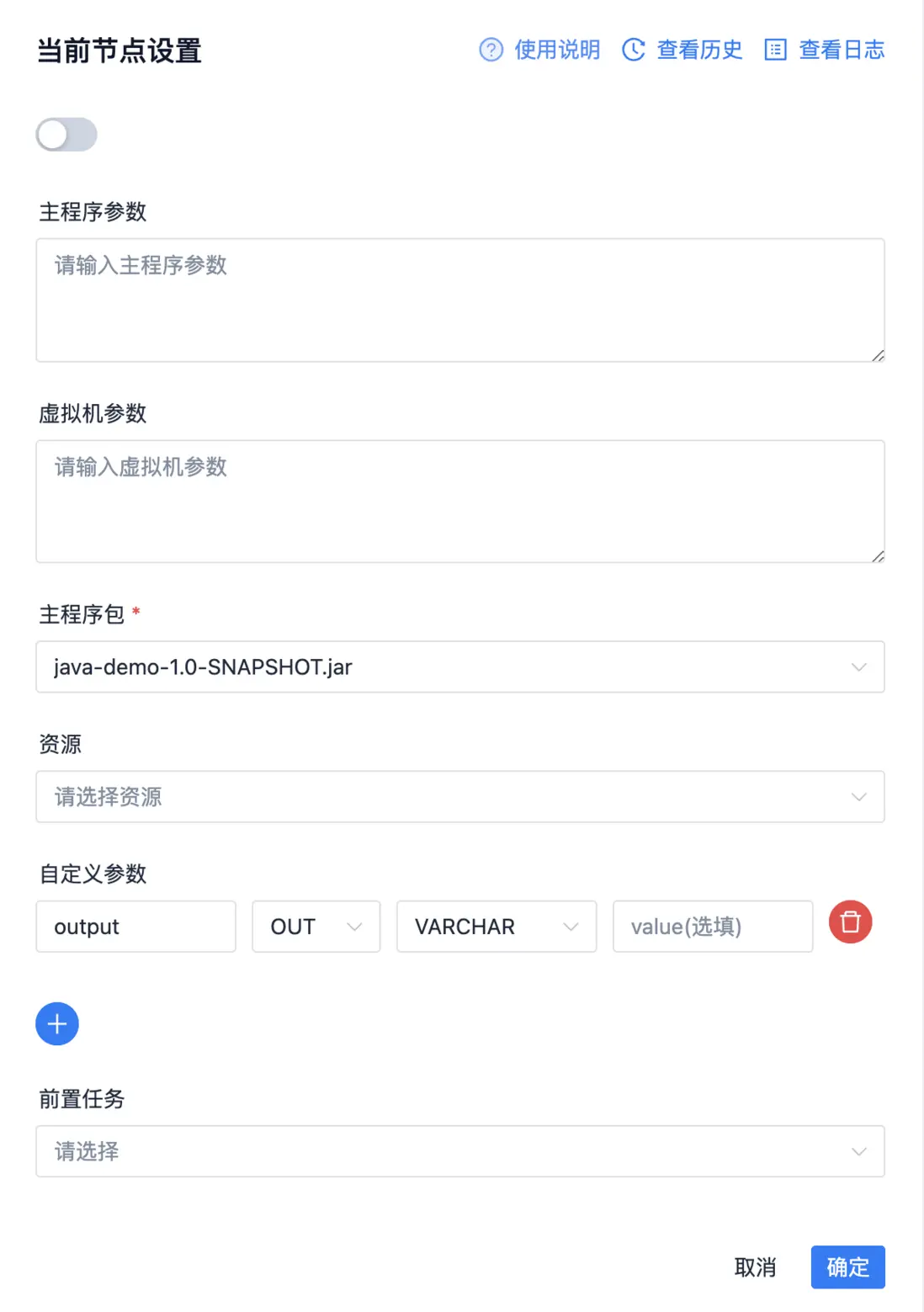

2.3 javaTask

2.4 testTask

2.5 testTask output

    [INFO] 2024-07-11 21:56:05.324 +0800 - Set taskVarPool: [{"prop":"output","direct":"IN","type":"VARCHAR","value":"123"}] successfully
    [INFO] 2024-07-11 21:56:05.324 +0800 - ***********************************************************************************************
    [INFO] 2024-07-11 21:56:05.324 +0800 - ********************************* Execute task instance *************************************
    [INFO] 2024-07-11 21:56:05.324 +0800 - ***********************************************************************************************
    [INFO] 2024-07-11 21:56:05.325 +0800 - Final Shell file is:
    [INFO] 2024-07-11 21:56:05.325 +0800 - ****************************** Script Content *****************************************************************
    [INFO] 2024-07-11 21:56:05.325 +0800 - #!/bin/bash
    BASEDIR=$(cd `dirname $0`; pwd)
    cd $BASEDIR
    source /etc/profile
    export HADOOP_HOME=${HADOOP_HOME:-/home/hadoop-3.3.1}
    export HADOOP_CONF_DIR=${HADOOP_CONF_DIR:-/opt/soft/hadoop/etc/hadoop}
    export SPARK_HOME=${SPARK_HOME:-/home/spark-3.2.1-bin-hadoop3.2}
    export PYTHON_HOME=${PYTHON_HOME:-/opt/soft/python}
    export HIVE_HOME=${HIVE_HOME:-/home/hive-3.1.2}
    export FLINK_HOME=/home/flink-1.18.1
    export DATAX_HOME=${DATAX_HOME:-/opt/soft/datax}
    export SEATUNNEL_HOME=/opt/software/seatunnel
    export CHUNJUN_HOME=${CHUNJUN_HOME:-/opt/soft/chunjun}
    export PATH=$HADOOP_HOME/bin:$SPARK_HOME/bin:$PYTHON_HOME/bin:$JAVA_HOME/bin:$HIVE_HOME/bin:$FLINK_HOME/bin:$DATAX_HOME/bin:$SEATUNNEL_HOME/bin:$CHUNJUN_HOME/bin:$PATH
    echo 123
    [INFO] 2024-07-11 21:56:05.325 +0800 - ****************************** Script Content *****************************************************************
    [INFO] 2024-07-11 21:56:05.325 +0800 - Executing shell command : sudo -u root -i /tmp/dolphinscheduler/exec/process/root/13850571680800/14243296570784_1/2096/1691/2096_1691.sh
    [INFO] 2024-07-11 21:56:05.329 +0800 - process start, process id is: 885572
    [INFO] 2024-07-11 21:56:07.329 +0800 -

Reposted from Journey. Original article: https://segmentfault.com/a/1190000045054384

Publication of this article is supported by 白鲸开源科技.