
Published by: Docker Inc. (ID: docker-cn)

Translated by: Xiaodong


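Anyone who has used Kubernetes knows how large the Kubernetes API is. The latest release has more than 50 first-class objects, from Pods and Deployments to ValidatingWebhookConfiguration and ResourceQuota. For a developer, it is easy to get lost in all of this when configuring a cluster. What is needed is a simpler way, similar to the Swarm CLI/API, to deploy and manage applications running on a Kubernetes cluster. The previous article, "Kubernetes in Practice: Using Compose on the K8s Platform Step by Step (Part 1)", briefly introduced Compose, a tool that simplifies deploying and managing applications on Kubernetes. This article walks through a hands-on demo of Compose on Kubernetes.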

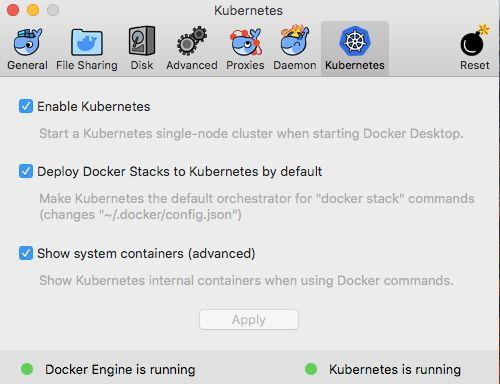

Test infrastructure

- Docker Desktop: Community v2.0.1.0
- OS: macOS High Sierra v10.13.6
- Docker Engine: v18.09.1
- Docker Compose: v1.23.2
- Kubernetes: v1.13.0

Prerequisites

Verify the Docker Desktop version

```
$ docker version
Client: Docker Engine - Community
 Version:           18.09.1
 API version:       1.39
 Go version:        go1.10.6
 Git commit:        4c52b90
 Built:             Wed Jan 9 19:33:12 2019
 OS/Arch:           darwin/amd64
 Experimental:      false

Server: Docker Engine - Community
 Engine:
  Version:          18.09.1
  API version:      1.39 (minimum version 1.12)
  Go version:       go1.10.6
  Git commit:       4c52b90
  Built:            Wed Jan 9 19:41:49 2019
  OS/Arch:          linux/amd64
  Experimental:     true
 Kubernetes:
  Version:          v1.12.4
  StackAPI:         v1beta2
```

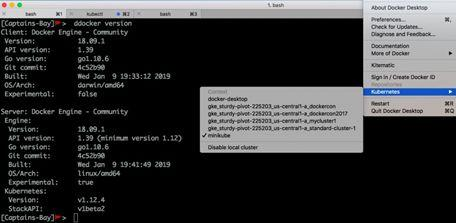

Install Minikube

```
$ curl -Lo minikube https://storage.googleapis.com/minikube/releases/latest/minikube-darwin-amd64 \
  && chmod +x minikube
$ sudo mv minikube /usr/local/bin/
```

Verify the Minikube version

```
$ minikube version
minikube version: v0.32.0
```

Check the Minikube status

```
$ minikube status
host: Stopped
kubelet:
apiserver:
```

Start Minikube


```
$ minikube start
Starting local Kubernetes v1.12.4 cluster...
Starting VM...
Getting VM IP address...
Moving files into cluster...
Setting up certs...
Connecting to cluster...
Setting up kubeconfig...
Stopping extra container runtimes...
Machine exists, restarting cluster components...
Verifying kubelet health ...
Verifying apiserver health ....
Kubectl is now configured to use the cluster.
Loading cached images from config file.

Everything looks great. Please enjoy minikube!
```

At this point, the Kubernetes section of the Docker Desktop menu should show that the active context has switched to minikube.

Check the Minikube status again

```
$ minikube status
host: Running
kubelet: Running
apiserver: Running
kubectl: Correctly Configured: pointing to minikube-vm at 192.168.99.100
```
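If you script these steps, you can use the same status output as a readiness check. Here is a minimal sketch. It assumes the `minikube status` format shown above, with one `component: State` line each for host, kubelet and apiserver; later Minikube releases may format this differently. `minikube_ready` is a hypothetical helper name:

```shell
# Hypothetical helper: succeed only when `minikube status` reports host,
# kubelet and apiserver as Running (assumes one "name: State" line each).
minikube_ready() {
  local status component
  # minikube status may exit non-zero when the VM is stopped; keep its output.
  status="$(minikube status 2>/dev/null)" || true
  for component in host kubelet apiserver; do
    if ! grep -q "^${component}: Running" <<<"$status"; then
      echo "minikube component '${component}' is not Running" >&2
      return 1
    fi
  done
  return 0
}
```

For example, `until minikube_ready; do sleep 5; done` keeps waiting until all three components report Running.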

List the Minikube cluster nodes

```
$ kubectl get nodes
NAME       STATUS   ROLES    AGE   VERSION
minikube   Ready    master   12h   v1.12.4
```

Create the "compose" namespace

```
$ kubectl create namespace compose
namespace "compose" created
```

Create the "tiller" service account

```
$ kubectl -n kube-system create serviceaccount tiller
serviceaccount "tiller" created
```

Grant Tiller cluster-admin access to the cluster

```
$ kubectl -n kube-system create clusterrolebinding tiller \
    --clusterrole cluster-admin --serviceaccount kube-system:tiller
clusterrolebinding "tiller" created
```
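You can also express the three `kubectl create` commands above declaratively, so the setup can be repeated with a single `kubectl apply`. Here is a sketch that writes such a manifest. It assumes the RBAC `rbac.authorization.k8s.io/v1` API, which is available in the v1.12 cluster used here. The file name `compose-bootstrap.yaml` is hypothetical:

```shell
# Write a declarative equivalent of the namespace, service account and
# cluster role binding created above. The file name is hypothetical.
cat > compose-bootstrap.yaml <<'EOF'
apiVersion: v1
kind: Namespace
metadata:
  name: compose
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: tiller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: tiller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: tiller
    namespace: kube-system
EOF
```

Apply it with `kubectl apply -f compose-bootstrap.yaml`. Unlike `kubectl create`, re-running `apply` against objects that already exist does not fail with an AlreadyExists error.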

Initialize the Helm components

```
$ helm init --service-account tiller
$HELM_HOME has been configured at /Users/ajeetraina/.helm.

Tiller (the Helm server-side component) has been installed into your Kubernetes Cluster.

Please note: by default, Tiller is deployed with an insecure 'allow unauthenticated users' policy.
For more information on securing your installation see: https://docs.helm.sh/using_helm/#securing-your-helm-installation
Happy Helming!
```
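`helm version` reports a Server line only once the Tiller pod is up, and `helm install` fails before that point. Here is a minimal sketch that waits for the rollout first. It assumes `helm init` created a Deployment named `tiller-deploy` in `kube-system`, matching the `tiller-deploy` service listed by `minikube service list`. `wait_for_tiller` is a hypothetical name:

```shell
# Hypothetical helper: wait for the Tiller Deployment (assumed name
# tiller-deploy in kube-system) to roll out, then print client/server versions.
wait_for_tiller() {
  # rollout status blocks until the deployment is available or fails;
  # helm version only runs if the rollout succeeded.
  kubectl -n kube-system rollout status deployment/tiller-deploy &&
    helm version
}
```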

Verify the Helm version

```
$ helm version
Client: &version.Version{SemVer:"v2.12.1", GitCommit:"20adb27c7c5868466912eebdf6664e7390ebe710", GitTreeState:"clean"}
Server: &version.Version{SemVer:"v2.12.1", GitCommit:"20adb27c7c5868466912eebdf6664e7390ebe710", GitTreeState:"clean"}
```

Deploy the etcd operator

```
$ helm install --name etcd-operator stable/etcd-operator --namespace compose
NAME:   etcd-operator
LAST DEPLOYED: Fri Jan 11 10:08:06 2019
NAMESPACE: compose
STATUS: DEPLOYED

RESOURCES:
==> v1/ServiceAccount
NAME                                               SECRETS  AGE
etcd-operator-etcd-operator-etcd-backup-operator   1        1s
etcd-operator-etcd-operator-etcd-operator          1        1s
etcd-operator-etcd-operator-etcd-restore-operator  1        1s

==> v1beta1/ClusterRole
NAME                                       AGE
etcd-operator-etcd-operator-etcd-operator  1s

==> v1beta1/ClusterRoleBinding
NAME                                               AGE
etcd-operator-etcd-operator-etcd-backup-operator   1s
etcd-operator-etcd-operator-etcd-operator          1s
etcd-operator-etcd-operator-etcd-restore-operator  1s

==> v1/Service
NAME                   TYPE       CLUSTER-IP      EXTERNAL-IP  PORT(S)    AGE
etcd-restore-operator  ClusterIP  10.104.102.245  <none>       19999/TCP  1s

==> v1beta1/Deployment
NAME                                               DESIRED  CURRENT  UP-TO-DATE  AVAILABLE  AGE
etcd-operator-etcd-operator-etcd-backup-operator   1        1        1           0          1s
etcd-operator-etcd-operator-etcd-operator          1        1        1           0          1s
etcd-operator-etcd-operator-etcd-restore-operator  1        1        1           0          1s

==> v1/Pod(related)
NAME                                                             READY  STATUS             RESTARTS  AGE
etcd-operator-etcd-operator-etcd-backup-operator-7978f8bc4r97s7  0/1    ContainerCreating  0         1s
etcd-operator-etcd-operator-etcd-operator-6c57fff9d5-kdd7d       0/1    ContainerCreating  0         1s
etcd-operator-etcd-operator-etcd-restore-operator-6d787599vg4rb  0/1    ContainerCreating  0         1s

NOTES:
1. etcd-operator deployed.
  If you would like to deploy an etcd-cluster set cluster.enabled to true in values.yaml
  Check the etcd-operator logs
    export POD=$(kubectl get pods -l app=etcd-operator-etcd-operator-etcd-operator --namespace compose --output name)
    kubectl logs $POD --namespace=compose
```
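The etcd operator registers the EtcdCluster custom resource type when it starts. The operator pods above are still in ContainerCreating, so an EtcdCluster manifest applied immediately may be rejected. Here is a sketch of a polling helper. It assumes the CRD name `etcdclusters.etcd.database.coreos.com`, derived from the apiVersion and kind used in compose-etcd.yaml. `wait_for_crd` and its default retry count and interval are hypothetical:

```shell
# Hypothetical helper: poll until the named CRD exists.
# Arguments: CRD name, retry count (default 30), seconds between tries (default 2).
wait_for_crd() {
  local crd="$1" retries="${2:-30}" interval="${3:-2}" i
  for ((i = 0; i < retries; i++)); do
    if kubectl get crd "$crd" >/dev/null 2>&1; then
      return 0
    fi
    sleep "$interval"
  done
  echo "CRD ${crd} not registered after ${retries} attempts" >&2
  return 1
}
```

Usage: `wait_for_crd etcdclusters.etcd.database.coreos.com && kubectl apply -f compose-etcd.yaml`.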

Create the etcd cluster

```
$ cat compose-etcd.yaml
apiVersion: "etcd.database.coreos.com/v1beta2"
kind: "EtcdCluster"
metadata:
  name: "compose-etcd"
  namespace: "compose"
spec:
  size: 3
  version: "3.2.13"
$ kubectl apply -f compose-etcd.yaml
etcdcluster "compose-etcd" created
```

This creates a three-member etcd cluster in the compose namespace.
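The operator brings the three members up one at a time. Here is a sketch for counting the running members. It assumes the operator labels member pods with `etcd_cluster=<cluster name>`. `etcd_members_running` is a hypothetical helper:

```shell
# Hypothetical helper: print how many pods of an etcd cluster are Running.
# Assumes the etcd operator labels member pods with etcd_cluster=<name>.
etcd_members_running() {
  local cluster="${1:-compose-etcd}" namespace="${2:-compose}"
  kubectl -n "$namespace" get pods \
    -l "etcd_cluster=${cluster}" \
    --field-selector=status.phase=Running \
    -o name | wc -l | tr -d ' '
}
```

For example, run `until [ "$(etcd_members_running)" -eq 3 ]; do sleep 5; done` before the Compose installer, which connects to the compose-etcd-client service in front of these members.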

Download the Compose installer

```
$ wget https://github.com/docker/compose-on-kubernetes/releases/download/v0.4.18/installer-darwin
$ chmod +x installer-darwin
```

Deploy Compose on Kubernetes

```
$ ./installer-darwin -namespace=compose -etcd-servers=http://compose-etcd-client:2379 -tag=v0.4.18
INFO[0000] Checking installation state
INFO[0000] Install image with tag "v0.4.18" in namespace "compose"
INFO[0000] Api server: image: "docker/kube-compose-api-server:v0.4.18", pullPolicy: "Always"
INFO[0000] Controller: image: "docker/kube-compose-controller:v0.4.18", pullPolicy: "Always"
```

Make sure the Compose Stack controller is enabled

```
$ kubectl api-versions | grep compose
compose.docker.com/v1beta1
compose.docker.com/v1beta2
```
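In a script, the same check can gate the next step. Here is a sketch. It assumes the one-group-per-line output of `kubectl api-versions` shown above. `compose_api_served` is a hypothetical helper that defaults to v1beta2, the StackAPI version reported by `docker version`:

```shell
# Hypothetical helper: succeed if the API server advertises the given
# compose.docker.com version (default v1beta2) as an exact line.
compose_api_served() {
  local version="${1:-v1beta2}"
  kubectl api-versions 2>/dev/null | grep -qx "compose.docker.com/${version}"
}
```

Usage: `until compose_api_served; do sleep 2; done`.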

List the Minikube services

```
$ minikube service list
|-------------|-------------------------------------|-----------------------------|
|  NAMESPACE  |                NAME                 |             URL             |
|-------------|-------------------------------------|-----------------------------|
| compose     | compose-api                         | No node port                |
| compose     | compose-etcd-client                 | No node port                |
| compose     | etcd-restore-operator               | No node port                |
| default     | db1                                 | No node port                |
| default     | example-etcd-cluster-client-service | http://192.168.99.100:32379 |
| default     | kubernetes                          | No node port                |
| default     | web1                                | No node port                |
| default     | web1-published                      | http://192.168.99.100:32511 |
| kube-system | kube-dns                            | No node port                |
| kube-system | kubernetes-dashboard                | No node port                |
| kube-system | tiller-deploy                       | No node port                |
|-------------|-------------------------------------|-----------------------------|
```

Verify the StackAPI

Run docker version again. The output is identical to the one in the prerequisites step. The relevant part here is the Kubernetes section of the server output, which confirms that StackAPI v1beta2 is available:

```
$ docker version
 Kubernetes:
  Version:          v1.12.4
  StackAPI:         v1beta2
```

Deploy a web application stack directly from a Docker Compose file
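The article does not show the contents of docker-compose2.yml. The following is a hypothetical stand-in, not the author's file. The service names web1 and db1 and the two replicas each match the deploy output. The images (nginx:alpine, redis:alpine), the published port 8080 and the file format version are assumptions. A published port is presumably what produces the separate web1-published service in the service list above:

```shell
# Write a hypothetical docker-compose2.yml: service names and replica counts
# follow the article's output; images, port and version are assumptions.
cat > docker-compose2.yml <<'EOF'
version: "3.3"
services:
  web1:
    image: nginx:alpine
    ports:
      - "8080:80"
    deploy:
      replicas: 2
  db1:
    image: redis:alpine
    deploy:
      replicas: 2
EOF
```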

```
$ docker stack deploy -c docker-compose2.yml myapp4
Waiting for the stack to be stable and running...
db1: Ready [pod status: 1/2 ready, 1/2 pending, 0/2 failed]
web1: Ready [pod status: 2/2 ready, 0/2 pending, 0/2 failed]

Stack myapp4 is stable and running
```
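In this setup, `docker stack ls` reports Kubernetes as the orchestrator. If your CLI defaults to Swarm instead, you can select the orchestrator per command. Here is a sketch of a wrapper. It assumes the `--orchestrator` and `--namespace` flags of `docker stack` in Docker CLI 18.09. Setting `DOCKER_STACK_ORCHESTRATOR=kubernetes` in the environment is an alternative. `deploy_stack` is hypothetical:

```shell
# Hypothetical wrapper: deploy a Compose file as a stack on Kubernetes,
# selecting the orchestrator explicitly rather than relying on CLI defaults.
deploy_stack() {
  local compose_file="$1" stack="$2" namespace="${3:-default}"
  docker stack deploy \
    --orchestrator=kubernetes \
    --namespace "$namespace" \
    -c "$compose_file" \
    "$stack"
}
```

Usage: `deploy_stack docker-compose2.yml myapp4`.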

```
$ docker stack ls
NAME     SERVICES   ORCHESTRATOR   NAMESPACE
myapp4   2          Kubernetes     default
$ kubectl get po
NAME                    READY   STATUS    RESTARTS   AGE
db1-55959c855d-jwh69    1/1     Running   0          57s
db1-55959c855d-kbcm4    1/1     Running   0          57s
web1-58cc9c58c7-sgsld   1/1     Running   0          57s
web1-58cc9c58c7-tvlhc   1/1     Running   0          57s
```

We have now used a Docker Compose file to deploy a web application stack to the single-node Kubernetes cluster running in Minikube.
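When you are done, you can inspect the stack through the Compose API and then remove it. Here is a sketch. It assumes that the Compose API server exposes a `stacks` resource that kubectl can list, and that `docker stack rm` accepts the same `--orchestrator` and `--namespace` flags as `docker stack deploy`. `cleanup_stack` is hypothetical:

```shell
# Hypothetical teardown helper. Assumes the Compose API server exposes a
# "stacks" resource to kubectl and that docker stack rm accepts the same
# --orchestrator/--namespace flags as docker stack deploy.
cleanup_stack() {
  local stack="$1" namespace="${2:-default}"
  # Show the Stack object created for this stack (informational only).
  kubectl -n "$namespace" get stacks "$stack"
  # Remove the stack; its deployments and services are expected to go with it.
  docker stack rm --orchestrator=kubernetes --namespace "$namespace" "$stack"
}
```

Usage: `cleanup_stack myapp4`.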