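I. Environment Requirements

This guide uses RHEL 7.5.

master and etcd: 192.168.10.101, hostname: master

node1: 192.168.10.103, hostname: node1

node2: 192.168.10.104, hostname: node2

All machines must be able to reach each other by hostname, so add the following to /etc/hosts on every machine: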

192.168.10.101 master

192.168.10.103 node1

192.168.10.104 node2
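The hosts entries can be added idempotently with a small helper. This is a minimal sketch: `add_host_entry` is a hypothetical function name, and the hosts-file path is a parameter so the helper can be tried on a scratch file before touching /etc/hosts.

```shell
#!/bin/sh
# Append "IP NAME" to a hosts file unless NAME is already mapped there.
# add_host_entry is a hypothetical helper, not part of any standard tool.
add_host_entry() {
    ip="$1"; name="$2"; file="${3:-/etc/hosts}"
    # Look for NAME as a whole word on a non-comment line
    if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Usage, as root on every machine:
#   add_host_entry 192.168.10.101 master
#   add_host_entry 192.168.10.103 node1
#   add_host_entry 192.168.10.104 node2
```

Running it twice leaves a single line per hostname, so it is safe to repeat on machines that were already configured.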

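Clocks on all machines must be synchronized.

The firewall and SELinux must be disabled on all machines.

The master must be able to log in to every machine over SSH without a password.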
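The firewall and SELinux requirement could be scripted roughly as follows. This is a sketch under assumptions: firewalld is the active firewall (the RHEL 7 default), and `set_selinux_mode` and `prepare_host` are hypothetical helper names.

```shell
#!/bin/sh
# Rewrite the SELINUX= line of an SELinux config file.
# The default path is the standard RHEL location; SELINUXTYPE= is left untouched.
set_selinux_mode() {
    mode="$1"; conf="${2:-/etc/selinux/config}"
    sed -i "s/^SELINUX=.*/SELINUX=${mode}/" "$conf"
}

# Hypothetical wrapper; run as root on each machine.
prepare_host() {
    systemctl stop firewalld
    systemctl disable firewalld
    setenforce 0                 # permissive right away, until the next reboot
    set_selinux_mode disabled    # persistent setting, applied at the next boot
}
```

`setenforce 0` only switches to permissive mode for the running system, which is why the config file is edited as well.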

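[Important]

Both cluster initialization and node joins pull images from Google's registry (k8s.gcr.io). That registry cannot be reached from this network, so the required images cannot be downloaded directly. I have uploaded them to a personal Alibaba Cloud (Aliyun) repository instead.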

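II. Installation Steps

1. etcd cluster: master node only;

2. flannel: all nodes in the cluster;

3. Configure the Kubernetes master: master node only;

kubernetes-master

Services started: kube-apiserver, kube-scheduler, kube-controller-manager

4. Configure each Kubernetes node;

kubernetes-node

Set up and start the docker service first;

Kubernetes services started: kube-proxy, kubelet

With kubeadm:

1. master and nodes: install kubelet, kubeadm, and docker

2. master: kubeadm init

3. nodes: kubeadm join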

https://github.com/kubernetes/kubeadm/blob/master/docs/design/design_v1.10.md

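III. Cluster Installation

1. Installing and configuring the master node

(1) Configure the yum repositories

This guide uses version 1.12.0. Download page: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.12.md#downloads-for-v1120

The packages are installed with yum. Configure the repositories first, starting with the docker one; downloading Aliyun's repo file is enough: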

[root@master ~]# curl -o /etc/yum.repos.d/docker-ce.repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Create the yum repo file for Kubernetes:

[root@master ~]# vim /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes Repo

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

enabled=1

Copy both repo files to the /etc/yum.repos.d directory on the other nodes:

[root@master ~]# for i in 103 104; do scp /etc/yum.repos.d/{docker-ce.repo,kubernetes.repo} root@192.168.10.$i:/etc/yum.repos.d/; done

Import the package signing key for the repository:

[root@master ~]# ansible all -m shell -a "curl -O https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg && rpm --import rpm-package-key.gpg"

(2) Install docker, kubelet, kubeadm, and kubectl

[root@master ~]# yum install docker-ce kubelet kubeadm kubectl -y

(3) Adjust the firewall-related settings

[root@master ~]# echo 1 > /proc/sys/net/bridge/bridge-nf-call-ip6tables

[root@master ~]# echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

[root@master ~]# ansible all -m shell -a "iptables -P FORWARD ACCEPT"

Note: these changes are temporary and are lost when the machine reboots.

To make them permanent, edit /usr/lib/sysctl.d/00-system.conf.
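As an alternative to editing the system file, the two bridge settings could be kept in a drop-in file. This is a sketch, not the method used in this guide: the file name /etc/sysctl.d/k8s.conf and the helper `write_bridge_sysctl` are assumptions chosen for illustration.

```shell
#!/bin/sh
# Write the two bridge netfilter settings to a sysctl drop-in file.
# The default path is an assumed name; any *.conf under /etc/sysctl.d is read at boot.
write_bridge_sysctl() {
    conf="${1:-/etc/sysctl.d/k8s.conf}"
    cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Usage, as root on every machine:
#   write_bridge_sysctl && sysctl --system
```

`sysctl --system` reloads all sysctl configuration files, so the values apply without a reboot.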

(4) Edit the docker service file and start docker

[root@master ~]# vim /usr/lib/systemd/system/docker.service

[Service]

Type=notify

# the default is not to use systemd for cgroups because the delegate issues still

# exists and systemd currently does not support the cgroup feature set required

# for containers run by docker

#Environment="HTTPS_PROXY=http://www.ik8s.io:10080"

Environment="NO_PROXY=127.0.0.1/8,127.0.0.1/16"

Add the following line to the [Service] section:

Environment="NO_PROXY=127.0.0.1/8,127.0.0.1/16"

Start docker:

[root@master ~]# systemctl daemon-reload

[root@master ~]# systemctl start docker

[root@master ~]# systemctl enable docker

(5) Enable kubelet at boot

[root@master ~]# systemctl enable kubelet

(6) Initialization

Edit the kubelet config file so that kubelet does not fail when swap is enabled:

[root@master ~]# vim /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--fail-swap-on=false"

Run the initialization:

[root@master ~]# kubeadm init --kubernetes-version=v1.12.0 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

[init] using Kubernetes version: v1.12.0

[preflight] running pre-flight checks

[WARNING Swap]: running with swap on is not supported. Please disable swap

[preflight/images] Pulling images required for setting up a Kubernetes cluster

[preflight/images] This might take a minute or two, depending on the speed of your internet connection

[preflight/images] You can also perform this action in beforehand using 'kubeadm config images pull'

[preflight] Some fatal errors occurred:

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-apiserver:v1.12.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-controller-manager:v1.12.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-scheduler:v1.12.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/kube-proxy:v1.12.0: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/pause:3.1: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/etcd:3.2.24: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[ERROR ImagePull]: failed to pull image k8s.gcr.io/coredns:1.2.2: output: Error response from daemon: Get https://k8s.gcr.io/v2/: proxyconnect tcp: dial tcp 172.96.236.117:10080: connect: connection refused

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

[root@master ~]#

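The images cannot be pulled because Google's image registry is unreachable. Download them to the local machine some other way, then run the initialization again.

Image download script: https://github.com/yanyuzm/k8s_images_script

I have uploaded the required images to Aliyun; running the following script is enough: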

[root@master ~]# vim pull-images.sh

#!/bin/bash

# Images that kubeadm v1.12.0 needs, as name:tag

images=(kube-apiserver:v1.12.0 kube-controller-manager:v1.12.0 kube-scheduler:v1.12.0 kube-proxy:v1.12.0 pause:3.1 etcd:3.2.24 coredns:1.2.2)

for ima in "${images[@]}"

do

# Pull from the Aliyun mirror, retag as k8s.gcr.io, then remove the mirror tag

docker pull "registry.cn-shenzhen.aliyuncs.com/lurenjia/$ima"

docker tag "registry.cn-shenzhen.aliyuncs.com/lurenjia/$ima" "k8s.gcr.io/$ima"

docker rmi -f "registry.cn-shenzhen.aliyuncs.com/lurenjia/$ima"

done

[root@master ~]# sh pull-images.sh
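Instead of hard-coding the image list, the names could be taken from kubeadm itself. The sketch below assumes that the mirror repository holds every image under the same name and tag, which is only confirmed for the images listed in the script above; `to_mirror` and `pull_via_mirror` are hypothetical helper names.

```shell
#!/bin/sh
# Mirror repository used in this guide
MIRROR="registry.cn-shenzhen.aliyuncs.com/lurenjia"

# Map k8s.gcr.io/NAME:TAG to MIRROR/NAME:TAG
to_mirror() {
    printf '%s/%s\n' "$MIRROR" "${1#k8s.gcr.io/}"
}

# Pull each image through the mirror, retag it, and drop the mirror tag.
# Stops at the first failure.
pull_via_mirror() {
    for image in "$@"; do
        src=$(to_mirror "$image")
        docker pull "$src" && docker tag "$src" "$image" && docker rmi "$src" || return 1
    done
}

# Usage on the master:
#   pull_via_mirror $(kubeadm config images list --kubernetes-version v1.12.0)
```

`kubeadm config images list` prints the images kubeadm expects for the given version, so the list stays correct when the version changes.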

The images used are:

[root@master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-controller-manager v1.12.0 07e068033cf2 2 weeks ago 164MB

k8s.gcr.io/kube-apiserver v1.12.0 ab60b017e34f 2 weeks ago 194MB

k8s.gcr.io/kube-scheduler v1.12.0 5a1527e735da 2 weeks ago 58.3MB

k8s.gcr.io/kube-proxy v1.12.0 9c3a9d3f09a0 2 weeks ago 96.6MB

k8s.gcr.io/etcd 3.2.24 3cab8e1b9802 3 weeks ago 220MB

k8s.gcr.io/coredns 1.2.2 367cdc8433a4 6 weeks ago 39.2MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 9 months ago 742kB

[root@master ~]#

Run the initialization again:

[root@master ~]# kubeadm init --kubernetes-version=v1.12.0 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

[init] using Kubernetes version: v1.12.0

[preflight] running pre-flight checks

[preflight/images] Pulling images required for setting up a Kubernetes cluster

[preflight/images] This might take a minute or two, depending on the speed of your internet connection

[preflight/images] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[preflight] Activating the kubelet service

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] Generated etcd/ca certificate and key.

[certificates] Generated etcd/peer certificate and key.

[certificates] etcd/peer serving cert is signed for DNS names [master localhost] and IPs [192.168.10.101 127.0.0.1 ::1]

[certificates] Generated apiserver-etcd-client certificate and key.

[certificates] Generated etcd/server certificate and key.

[certificates] etcd/server serving cert is signed for DNS names [master localhost] and IPs [127.0.0.1 ::1]

[certificates] Generated etcd/healthcheck-client certificate and key.

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.10.101]

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] valid certificates and keys now exist in "/etc/kubernetes/pki"

[certificates] Generated sa key and public key.

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/scheduler.conf"

[controlplane] wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests"

[init] this might take a minute or longer if the control plane images have to be pulled

[apiclient] All control plane components are healthy after 71.135592 seconds

[uploadconfig] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.12" in namespace kube-system with the configuration for the kubelets in the cluster

[markmaster] Marking the node master as master by adding the label "node-role.kubernetes.io/master=''"

[markmaster] Marking the node master as master by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "master" as an annotation

[bootstraptoken] using token: qaqahg.5xbt355fl26wu8tg

[bootstraptoken] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join 192.168.10.101:6443 --token qaqahg.5xbt355fl26wu8tg --discovery-token-ca-cert-hash sha256:654f52a18fa04234c05eb38a001d92b9831982d06272e5a22b7d898bc6280e47

[root@master ~]#

OK, initialization succeeded. The final part of the output above is important: it lists the commands for setting up kubectl access and the kubeadm join command that each node will need later.
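The join command embeds a bootstrap token, which by default expires after 24 hours. If the original output is lost or the token has expired, a new join command can be generated on the master. The sketch below also recomputes the CA certificate hash with openssl; `ca_cert_hash` is a hypothetical helper name, and the default path is where kubeadm wrote the CA certificate in the output above.

```shell
#!/bin/sh
# Compute the value for --discovery-token-ca-cert-hash from a CA certificate:
# the SHA-256 digest of the DER-encoded public key.
ca_cert_hash() {
    cert="${1:-/etc/kubernetes/pki/ca.crt}"
    openssl x509 -pubkey -noout -in "$cert" \
        | openssl rsa -pubin -outform der 2>/dev/null \
        | openssl dgst -sha256 -hex \
        | sed 's/^.* //'
}

# Usage on the master, as root:
#   kubeadm token create --print-join-command   # prints a complete, fresh join command
#   echo "sha256:$(ca_cert_hash)"               # hash only, to pair with an existing token
```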

On the master node, follow those instructions:

[root@master ~]# mkdir -p $HOME/.kube

[root@master ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

cp: overwrite ‘/root/.kube/config’? y

[root@master ~]# chown $(id -u):$(id -g) $HOME/.kube/config

[root@master ~]#

Check the component status:

[root@master ~]# kubectl get componentstatus

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health": "true"}

[root@master ~]# kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-0 Healthy {"health": "true"}

[root@master ~]#

All components are healthy.

List the cluster nodes:

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master NotReady master 110m v1.12.1

[root@master ~]#

Only the master node is listed, and it is NotReady because flannel has not been deployed yet.

(7) Install flannel

Project page: https://github.com/coreos/flannel

Run the following command:

[root@master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created

[root@master ~]#

After the command completes, expect a long wait while the flannel image is downloaded.

[root@master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-controller-manager v1.12.0 07e068033cf2 2 weeks ago 164MB

k8s.gcr.io/kube-apiserver v1.12.0 ab60b017e34f 2 weeks ago 194MB

k8s.gcr.io/kube-scheduler v1.12.0 5a1527e735da 2 weeks ago 58.3MB

k8s.gcr.io/kube-proxy v1.12.0 9c3a9d3f09a0 2 weeks ago 96.6MB

k8s.gcr.io/etcd 3.2.24 3cab8e1b9802 3 weeks ago 220MB

k8s.gcr.io/coredns 1.2.2 367cdc8433a4 6 weeks ago 39.2MB

quay.io/coreos/flannel v0.10.0-amd64 f0fad859c909 8 months ago 44.6MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 9 months ago 742kB

[root@master ~]#

OK, the flannel image has been downloaded. Check the nodes:

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready master 155m v1.12.1

[root@master ~]#

OK, the master is now Ready.

If the flannel image fails to download, pull it from Aliyun instead:

docker pull registry.cn-shenzhen.aliyuncs.com/lurenjia/flannel:v0.10.0-amd64

After the pull succeeds, retag the image:

docker tag registry.cn-shenzhen.aliyuncs.com/lurenjia/flannel:v0.10.0-amd64 quay.io/coreos/flannel:v0.10.0-amd64

Check the namespaces:

[root@master ~]# kubectl get ns

NAME STATUS AGE

default Active 158m

kube-public Active 158m

kube-system Active 158m

[root@master ~]#

List the pods in kube-system:

[root@master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-576cbf47c7-hfvcq 1/1 Running 0 158m

coredns-576cbf47c7-xcpgd 1/1 Running 0 158m

etcd-master 1/1 Running 6 132m

kube-apiserver-master 1/1 Running 9 132m

kube-controller-manager-master 1/1 Running 33 132m

kube-flannel-ds-amd64-vqc9h 1/1 Running 3 41m

kube-proxy-z9xrw 1/1 Running 4 158m

kube-scheduler-master 1/1 Running 33 132m

[root@master ~]#

2. Installing and configuring the nodes

1. Install docker-ce, kubelet, and kubeadm

[root@node1 ~]# yum install docker-ce kubelet kubeadm -y

[root@node2 ~]# yum install docker-ce kubelet kubeadm -y

2. Copy the kubelet config file from the master to the nodes

[root@master ~]# scp /etc/sysconfig/kubelet 192.168.10.103:/etc/sysconfig/

kubelet 100% 42 45.4KB/s 00:00

[root@master ~]# scp /etc/sysconfig/kubelet 192.168.10.104:/etc/sysconfig/

kubelet 100% 42 4.0KB/s 00:00

[root@master ~]#

3. Join the nodes to the cluster

Start docker and kubelet:

[root@node1 ~]# systemctl start docker kubelet

[root@node1 ~]# systemctl enable docker kubelet

[root@node2 ~]# systemctl start docker kubelet

[root@node2 ~]# systemctl enable docker kubelet

Join the node to the cluster:

[root@node1 ~]# kubeadm join 192.168.10.101:6443 --token qaqahg.5xbt355fl26wu8tg --discovery-token-ca-cert-hash sha256:654f52a18fa04234c05eb38a001d92b9831982d06272e5a22b7d898bc6280e47 --ignore-preflight-errors=Swap

[preflight] running pre-flight checks

[WARNING RequiredIPVSKernelModulesAvailable]: the IPVS proxier will not be used, because the following required kernel modules are not loaded: [ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh] or no builtin kernel ipvs support: map[ip_vs_wrr:{} ip_vs_sh:{} nf_conntrack_ipv4:{} ip_vs:{} ip_vs_rr:{}]

you can solve this problem with following methods:

1. Run 'modprobe -- ' to load missing kernel modules;

2. Provide the missing builtin kernel ipvs support

[WARNING Swap]: running with swap on is not supported. Please disable swap

[preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

[root@node1 ~]# echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

[root@node1 ~]#

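The join fails with an error. Set the kernel parameter named in the message, as done above, then run the join again: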

[root@node1 ~]# kubeadm join 192.168.10.101:6443 --token qaqahg.5xbt355fl26wu8tg --discovery-token-ca-cert-hash sha256:654f52a18fa04234c05eb38a001d92b9831982d06272e5a22b7d898bc6280e47 --ignore-preflight-errors=Swap

[preflight] running pre-flight checks

[WARNING RequiredIPVSKernelModulesAvailable]: the IPVS proxier will not be used, because the following required kernel modules are not loaded: [ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh] or no builtin kernel ipvs support: map[ip_vs_rr:{} ip_vs_wrr:{} ip_vs_sh:{} nf_conntrack_ipv4:{} ip_vs:{}]

you can solve this problem with following methods:

1. Run 'modprobe -- ' to load missing kernel modules;

2. Provide the missing builtin kernel ipvs support

[WARNING Swap]: running with swap on is not supported. Please disable swap

[discovery] Trying to connect to API Server "192.168.10.101:6443"

[discovery] Created cluster-info discovery client, requesting info from "https://192.168.10.101:6443"

[discovery] Requesting info from "https://192.168.10.101:6443" again to validate TLS against the pinned public key

[discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.10.101:6443"

[discovery] Successfully established connection with API Server "192.168.10.101:6443"

[kubelet] Downloading configuration for the kubelet from the "kubelet-config-1.12" ConfigMap in the kube-system namespace

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[preflight] Activating the kubelet service

[tlsbootstrap] Waiting for the kubelet to perform the TLS Bootstrap...

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "node1" as an annotation

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the master to see this node join the cluster.

[root@node1 ~]#

OK, node1 joined successfully.

[root@node2 ~]# echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

[root@node2 ~]# kubeadm join 192.168.10.101:6443 --token qaqahg.5xbt355fl26wu8tg --discovery-token-ca-cert-hash sha256:654f52a18fa04234c05eb38a001d92b9831982d06272e5a22b7d898bc6280e47 --ignore-preflight-errors=Swap

[preflight] running pre-flight checks

[WARNING RequiredIPVSKernelModulesAvailable]: the IPVS proxier will not be used, because the following required kernel modules are not loaded: [ip_vs_rr ip_vs_wrr ip_vs_sh ip_vs] or no builtin kernel ipvs support: map[ip_vs:{} ip_vs_rr:{} ip_vs_wrr:{} ip_vs_sh:{} nf_conntrack_ipv4:{}]

you can solve this problem with following methods:

1. Run 'modprobe -- ' to load missing kernel modules;

2. Provide the missing builtin kernel ipvs support

[WARNING Swap]: running with swap on is not supported. Please disable swap

[discovery] Trying to connect to API Server "192.168.10.101:6443"

[discovery] Created cluster-info discovery client, requesting info from "https://192.168.10.101:6443"

[discovery] Requesting info from "https://192.168.10.101:6443" again to validate TLS against the pinned public key

[discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.10.101:6443"

[discovery] Successfully established connection with API Server "192.168.10.101:6443"

[kubelet] Downloading configuration for the kubelet from the "kubelet-config-1.12" ConfigMap in the kube-system namespace

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[preflight] Activating the kubelet service

[tlsbootstrap] Waiting for the kubelet to perform the TLS Bootstrap...

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "node2" as an annotation

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the master to see this node join the cluster.

[root@node2 ~]#

OK, node2 joined successfully.

4. Manually pull the kube-proxy and pause images on the nodes

Run the following command on every node:

for ima in kube-proxy:v1.12.0 pause:3.1;do docker pull registry.cn-shenzhen.aliyuncs.com/lurenjia/$ima && docker tag registry.cn-shenzhen.aliyuncs.com/lurenjia/$ima k8s.gcr.io/$ima && docker rmi -f registry.cn-shenzhen.aliyuncs.com/lurenjia/$ima ;done

5. Check the nodes from the master:

[root@master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

master Ready master 3h10m v1.12.1

node1 Ready <none> 18m v1.12.1

node2 Ready <none> 17m v1.12.1

[root@master ~]#

OK, all nodes are Ready. If a node is still not healthy, restart the docker and kubelet services on that node.

Check the pods in kube-system:

[root@master ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

coredns-576cbf47c7-hfvcq 1/1 Running 0 3h11m 10.244.0.3 master <none>

coredns-576cbf47c7-xcpgd 1/1 Running 0 3h11m 10.244.0.2 master <none>

etcd-master 1/1 Running 6 165m 192.168.10.101 master <none>

kube-apiserver-master 1/1 Running 9 165m 192.168.10.101 master <none>

kube-controller-manager-master 1/1 Running 33 165m 192.168.10.101 master <none>

kube-flannel-ds-amd64-bd4d8 1/1 Running 0 21m 192.168.10.103 node1 <none>

kube-flannel-ds-amd64-srhb9 1/1 Running 0 20m 192.168.10.104 node2 <none>

kube-flannel-ds-amd64-vqc9h 1/1 Running 3 74m 192.168.10.101 master <none>

kube-proxy-8bfvt 1/1 Running 1 21m 192.168.10.103 node1 <none>

kube-proxy-gz55d 1/1 Running 1 20m 192.168.10.104 node2 <none>

kube-proxy-z9xrw 1/1 Running 4 3h11m 192.168.10.101 master <none>

kube-scheduler-master 1/1 Running 33 165m 192.168.10.101 master <none>

[root@master ~]#

The cluster is now up. Here are the images that were used to build it:

Master node:

[root@master ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-controller-manager v1.12.0 07e068033cf2 2 weeks ago 164MB

k8s.gcr.io/kube-apiserver v1.12.0 ab60b017e34f 2 weeks ago 194MB

k8s.gcr.io/kube-scheduler v1.12.0 5a1527e735da 2 weeks ago 58.3MB

k8s.gcr.io/kube-proxy v1.12.0 9c3a9d3f09a0 2 weeks ago 96.6MB

k8s.gcr.io/etcd 3.2.24 3cab8e1b9802 3 weeks ago 220MB

k8s.gcr.io/coredns 1.2.2 367cdc8433a4 6 weeks ago 39.2MB

quay.io/coreos/flannel v0.10.0-amd64 f0fad859c909 8 months ago 44.6MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 9 months ago 742kB

[root@master ~]#

Nodes:

[root@node1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-proxy v1.12.0 9c3a9d3f09a0 2 weeks ago 96.6MB

quay.io/coreos/flannel v0.10.0-amd64 f0fad859c909 8 months ago 44.6MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 9 months ago 742kB

[root@node1 ~]#

[root@node2 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-proxy v1.12.0 9c3a9d3f09a0 2 weeks ago 96.6MB

quay.io/coreos/flannel v0.10.0-amd64 f0fad859c909 8 months ago 44.6MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 9 months ago 742kB

[root@node2 ~]#

IV. Using the Cluster

Run an nginx:

[root@master ~]# kubectl run nginx-deploy --image=nginx --port=80 --replicas=1

deployment.apps/nginx-deploy created

[root@master ~]# kubectl get deploy

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

nginx-deploy 1 1 1 1 10s

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

nginx-deploy-8c5fc574c-d8jxj 1/1 Running 0 18s 10.244.2.4 node2 <none>

[root@master ~]#

From a node, check whether this nginx can be reached:

[root@node1 ~]# curl -I 10.244.2.4

HTTP/1.1 200 OK

Server: nginx/1.15.5

Date: Tue, 16 Oct 2018 12:02:34 GMT

Content-Type: text/html

Content-Length: 612

Last-Modified: Tue, 02 Oct 2018 14:49:27 GMT

Connection: keep-alive

ETag: "5bb38577-264"

Accept-Ranges: bytes

[root@node1 ~]#

It returns 200, so access works. Next, expose the deployment as a Service:

[root@master ~]# kubectl expose deployment nginx-deploy --name=nginx --port=80 --target-port=80 --protocol=TCP

service/nginx exposed

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 21h

nginx ClusterIP 10.104.88.59 <none> 80/TCP 51s

[root@master ~]#

Start a busybox client and fetch the page through the Service name:

[root@master ~]# kubectl run client --image=busybox --replicas=1 -it --restart=Never

If you don't see a command prompt, try pressing enter.

/ #

/ # wget -O - -q http://nginx:80

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

/ #

Delete the Service and create it again:

[root@master ~]# kubectl delete svc nginx

service "nginx" deleted

[root@master ~]# kubectl expose deployment nginx-deploy --name=nginx

service/nginx exposed

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 22h

nginx ClusterIP 10.110.52.68 <none> 80/TCP 8s

[root@master ~]#

Create a deployment with multiple replicas:

[root@master ~]# kubectl run myapp --image=ikubernetes/myapp:v1 --replicas=2

deployment.apps/myapp created

[root@master ~]#

[root@master ~]# kubectl get deployment

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

myapp 2 2 2 2 49s

nginx-deploy 1 1 1 1 36m

[root@master ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

client 1/1 Running 0 3m49s 10.244.2.6 node2 <none>

myapp-6946649ccd-knd8r 1/1 Running 0 78s 10.244.2.7 node2 <none>

myapp-6946649ccd-pfl2r 1/1 Running 0 78s 10.244.1.6 node1 <none>

nginx-deploy-8c5fc574c-5bjjm 1/1 Running 0 12m 10.244.1.5 node1 <none>

[root@master ~]#

Create a Service for myapp:

[root@master ~]# kubectl expose deployment myapp --name=myapp --port=80

service/myapp exposed

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 22h

myapp ClusterIP 10.110.238.138 <none> 80/TCP 11s

nginx ClusterIP 10.110.52.68 <none> 80/TCP 9m37s

[root@master ~]#

Scale myapp to 5 replicas:

[root@master ~]# kubectl scale --replicas=5 deployment myapp

deployment.extensions/myapp scaled

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

client 1/1 Running 0 5m24s

myapp-6946649ccd-6kqxt 1/1 Running 0 8s

myapp-6946649ccd-7xj45 1/1 Running 0 8s

myapp-6946649ccd-8nh9q 1/1 Running 0 8s

myapp-6946649ccd-knd8r 1/1 Running 0 11m

myapp-6946649ccd-pfl2r 1/1 Running 0 11m

nginx-deploy-8c5fc574c-5bjjm 1/1 Running 0 23m

[root@master ~]#

Edit the myapp Service:

[root@master ~]# kubectl edit svc myapp

type: NodePort

Change type to NodePort.
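The same change can be made without opening an editor by using `kubectl patch`. This is a minimal sketch; `set_service_type` is a hypothetical wrapper name.

```shell
#!/bin/sh
# Set spec.type of a Service with a strategic merge patch.
set_service_type() {
    kubectl patch svc "$1" -p "{\"spec\":{\"type\":\"$2\"}}"
}

# Usage on the master:
#   set_service_type myapp NodePort
```

A patch is easier to repeat in scripts than `kubectl edit`, which needs an interactive editor.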

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 23h

myapp NodePort 10.110.238.138 <none> 80:30937/TCP 35m

nginx ClusterIP 10.110.52.68 <none> 80/TCP 44m

[root@master ~]#

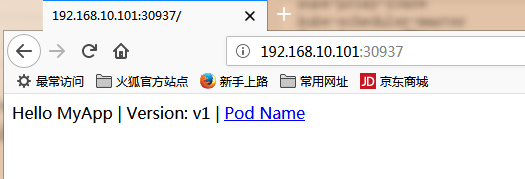

The port is 30937. From the physical host, open 192.168.10.101:30937 in a browser.

OK, it is reachable.
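A NodePort Service listens on the same port on every node, so the check can be repeated against each node address. A minimal sketch, assuming curl is installed on the machine running it; `check_nodeport` is a hypothetical helper name.

```shell
#!/bin/sh
# Probe http://HOST:PORT/ for each host and report whether it answers.
check_nodeport() {
    port="$1"; shift
    for host in "$@"; do
        if curl -s -o /dev/null --max-time 5 "http://$host:$port/"; then
            echo "$host:$port reachable"
        else
            echo "$host:$port NOT reachable"
        fi
    done
}

# Usage:
#   check_nodeport 30937 192.168.10.101 192.168.10.103 192.168.10.104
```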

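V. Cluster Resources

1. Resource types

A resource becomes an object once it is instantiated. The main kinds are:

Workload: Pod, ReplicaSet, Deployment, StatefulSet, DaemonSet, Job, CronJob, ...

Service discovery and load balancing: Service, Ingress, ...

Configuration and storage: Volume, CSI; special types include ConfigMap, Secret, and DownwardAPI

Cluster-level resources: Namespace, Node, Role, ClusterRole, RoleBinding, ClusterRoleBinding

Metadata resources: HPA, PodTemplate, LimitRange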

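2. Ways to create resources:

The apiserver accepts resource definitions only in JSON;

manifests can be written in YAML, which is converted to JSON automatically before being submitted;

Most resource manifests contain:

apiVersion: group/version; run kubectl api-versions to list the available ones

kind: the resource kind

metadata: metadata (name, namespace, labels, annotations)

Each resource has a reference path: /apis/GROUP/VERSION/namespaces/NAMESPACE/TYPE/NAME (the core group uses /api/VERSION/namespaces/NAMESPACE/TYPE/NAME), for example:

/api/v1/namespaces/default/pods/myapp-6946649ccd-c6m9b

spec: the desired state

status: the current state; this field is maintained by the Kubernetes cluster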
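The reference path of a namespaced object follows directly from its apiVersion. As an illustration, the hypothetical helper `resource_path` below builds it; the only rule it encodes is that the core group (plain `v1`) lives under /api while named groups such as `apps/v1` live under /apis.

```shell
#!/bin/sh
# Build the REST path of a namespaced object from its apiVersion.
resource_path() {
    api_version="$1"; namespace="$2"; type="$3"; name="$4"
    case "$api_version" in
        */*) prefix="/apis" ;;   # named group, e.g. apps/v1
        *)   prefix="/api" ;;    # core group, e.g. v1
    esac
    printf '%s/%s/namespaces/%s/%s/%s\n' "$prefix" "$api_version" "$namespace" "$type" "$name"
}

resource_path v1 default pods myapp-6946649ccd-c6m9b
# prints /api/v1/namespaces/default/pods/myapp-6946649ccd-c6m9b
resource_path apps/v1 default deployments myapp
# prints /apis/apps/v1/namespaces/default/deployments/myapp
```

On the master, `kubectl get --raw "$(resource_path v1 default pods POD_NAME)"` returns the JSON the apiserver stores at that path.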

To view the definition of a resource type, for example pod:

[root@master ~]# kubectl explain pod

KIND: Pod

VERSION: v1

DESCRIPTION:

...

Example pod definition:

[root@master ~]# mkdir maniteste

[root@master ~]# vim maniteste/pod-demo.yaml

apiVersion: v1

kind: Pod

metadata:

  name: pod-demo

  namespace: default

  labels:

    app: myapp

    tier: frontend

spec:

  containers:

  - name: myapp

    image: ikubernetes/myapp:v1

  - name: busybox

    image: busybox:latest

    command:

    - "/bin/sh"

    - "-c"

    - "sleep 5"

Create the resource:

[root@master ~]# kubectl create -f maniteste/pod-demo.yaml

[root@master ~]# kubectl describe pods pod-demo

Name: pod-demo

Namespace: default

Priority: 0

PriorityClassName: <none>

Node: node2/192.168.10.104

Start Time: Wed, 17 Oct 2018 19:54:03 +0800

Labels: app=myapp

tier=frontend

Annotations: <none>

Status: Running

IP: 10.244.2.26

Access the pod, then view the logs:

[root@master ~]# curl 10.244.2.26

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@master ~]# kubectl logs pod-demo myapp

10.244.0.0 - - [17/Oct/2018:11:56:49 +0000] "GET / HTTP/1.1" 200 65 "-" "curl/7.29.0" "-"

[root@master ~]#

This is one pod running two containers.

Delete the pod: kubectl delete -f maniteste/pod-demo.yaml

VI. Pod Controllers

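1. View the field definitions for a pod's containers: kubectl explain pods.spec.containers

Resource manifests:

Standalone (self-managed) Pod resources

Manifest format:

Top-level fields: apiVersion (group/version), kind, metadata (name, namespace, labels, annotations, ...), spec, status (read-only)

Pod resource:

spec.containers <[]object>

- name <string>

image <string>

imagePullPolicy Always | Never | IfNotPresent

2. Labels:

key=value; the key may consist of letters, digits, _, -, and .

value: may be empty; it must begin and end with a letter or digit, and may contain letters, digits, _, -, and . in between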
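The value rule can be checked before calling kubectl. A minimal sketch: `valid_label_value` is a hypothetical helper, and the 63-character limit comes from the Kubernetes label documentation rather than from this guide.

```shell
#!/bin/sh
# Return 0 if the argument is an acceptable label value:
# empty, or at most 63 characters, starting and ending with a letter or digit,
# with letters, digits, '-', '_' and '.' allowed in between.
valid_label_value() {
    v="$1"
    [ "${#v}" -le 63 ] || return 1
    printf '%s\n' "$v" | grep -Eq '^(([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9])?$'
}

for candidate in "haha" "v1.0_rc-2" "-bad" "bad-"; do
    if valid_label_value "$candidate"; then
        echo "ok:      $candidate"
    else
        echo "invalid: $candidate"
    fi
done
```

The first two candidates pass; the last two fail because they start or end with a hyphen.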

Add a label:

[root@master ~]# kubectl get pods -l app --show-labels

NAME READY STATUS RESTARTS AGE LABELS

pod-demo 0/2 ContainerCreating 0 4m46s app=myapp,tier=frontend

[root@master ~]# kubectl label pods pod-demo release=haha

pod/pod-demo labeled

[root@master ~]# kubectl get pods -l app --show-labels

NAME READY STATUS RESTARTS AGE LABELS

pod-demo 0/2 ContainerCreating 0 5m27s app=myapp,release=haha,tier=frontend

[root@master ~]#

List the pods that carry given label keys:

[root@master ~]# kubectl get pods -l app,release

NAME READY STATUS RESTARTS AGE

pod-demo 0/2 ContainerCreating 0 7m43s

[root@master ~]#

Label selectors:

Equality-based: =, ==, !=

For example: kubectl get pods -l release=stable

Set-based: KEY in (VALUE1,VALUE2,...), KEY notin (VALUE1,VALUE2,...), KEY, !KEY

[root@master ~]# kubectl get pods -l "release notin (stable,haha)"

NAME READY STATUS RESTARTS AGE

client 0/1 Error 0 46h

myapp-6946649ccd-2lncx 1/1 Running 2 46h

nginx-deploy-8c5fc574c-5bjjm 1/1 Running 2 46h

[root@master ~]#

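Many resources support embedded fields that define the label selector they use:

matchLabels: key/value pairs given directly

matchExpressions: defines the selector through expressions of the form {key: "KEY", operator: "OPERATOR", values: [VAL1, VAL2, ...]}

Operators: In and NotIn require values to be a non-empty list; Exists and DoesNotExist require values to be an empty list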
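To show both selector forms side by side, the sketch below writes a small Deployment manifest to a file. Everything except the image `ikubernetes/myapp:v1` is an assumption made for illustration: the helper `write_selector_demo`, the file name, the Deployment name, and the `release` and `debug` label keys.

```shell
#!/bin/sh
# Write a Deployment whose selector combines matchLabels and matchExpressions.
# The pod template labels must satisfy every selector term.
write_selector_demo() {
    out="${1:-selector-demo.yaml}"
    cat > "$out" <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: selector-demo
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: myapp
    matchExpressions:
    - {key: release, operator: In, values: [stable, canary]}
    - {key: debug, operator: DoesNotExist}
  template:
    metadata:
      labels:
        app: myapp
        release: stable
    spec:
      containers:
      - name: myapp
        image: ikubernetes/myapp:v1
EOF
}

# Usage on the master:
#   write_selector_demo && kubectl apply -f selector-demo.yaml
```

All terms are ANDed: a pod matches only if it has app=myapp, a release of stable or canary, and no debug label at all.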

3. nodeSelector: node label selector

nodeName: schedules the pod directly onto the named node

Label a node, for example:

[root@master ~]# kubectl label nodes node1 disktype=ssd

node/node1 labeled

[root@master ~]#

Edit the YAML file:

[root@master ~]# vim maniteste/pod-demo.yaml

apiVersion: v1

kind: Pod

metadata:

  name: pod-demo

  namespace: default

  labels:

    app: myapp

    tier: frontend

spec:

  containers:

  - name: myapp

    image: ikubernetes/myapp:v1

    ports:

    - name: http

      containerPort: 80

  - name: busybox

    image: busybox:latest

    imagePullPolicy: IfNotPresent

    command:

    - "/bin/sh"

    - "-c"

    - "sleep 5"

  nodeSelector:

    disktype: ssd

Recreate the pod:

[root@master ~]# kubectl delete pods pod-demo

pod "pod-demo" deleted

[root@master ~]# kubectl create -f maniteste/pod-demo.yaml

pod/pod-demo created

[root@master ~]#
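The effect of nodeSelector can be pictured as a filter over node labels: a pod is only eligible for nodes that carry every key/value pair in its nodeSelector. The sketch below is a simplified illustration of that filter, not the scheduler's code; the function name `eligible_nodes` and the sample label sets are assumptions.

```python
def eligible_nodes(nodes, node_selector):
    """Return the names of nodes whose labels contain every pair in node_selector."""
    return [
        name
        for name, labels in nodes.items()
        if all(labels.get(key) == value for key, value in node_selector.items())
    ]


# Hypothetical label sets; only node1 was labeled disktype=ssd above.
nodes = {
    "node1": {"kubernetes.io/hostname": "node1", "disktype": "ssd"},
    "node2": {"kubernetes.io/hostname": "node2"},
}
print(eligible_nodes(nodes, {"disktype": "ssd"}))
```

With the label applied above, pod-demo can only be placed on node1. If no node matched, the pod would stay Pending.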

4. annotations

Unlike labels, annotations cannot be used to select resource objects; they only attach "metadata" to an object.

Example:

apiVersion: v1
kind: Pod
metadata:
  name: pod-demo
  namespace: default
  labels:
    app: myapp
    tier: frontend
  annotations:
    haha.com/create_by: "hello world"
spec:
  containers:
  - name: myapp
    image: ikubernetes/myapp:v1
    ports:
    - name: http
      containerPort: 80
  - name: busybox
    image: busybox:latest
    imagePullPolicy: IfNotPresent
    command:
    - "/bin/sh"
    - "-c"
    - "sleep 3600"
  nodeSelector:
    disktype: ssd

5. Pod lifecycle

Phases: Pending, Running, Failed, Succeeded, Unknown

Important behaviors in the pod lifecycle: init containers and container probes (liveness, readiness)

restartPolicy: Always, OnFailure, Never. Defaults to Always.

Probe types: ExecAction, TCPSocketAction, HTTPGetAction.

ExecAction example:

[root@master ~]# vim liveness-exec.yaml

apiVersion: v1
kind: Pod
metadata:
  name: liveness-exec-pod
  namespace: default
spec:
  containers:
  - name: liveness-exec-container
    image: busybox:latest
    imagePullPolicy: IfNotPresent
    command: ["/bin/sh","-c","touch /tmp/healthy; sleep 30; rm -f /tmp/healthy; sleep 3600"]
    livenessProbe:
      exec:
        command: ["test","-e","/tmp/healthy"]
      initialDelaySeconds: 2
      periodSeconds: 3

Create it:

[root@master ~]# kubectl create -f liveness-exec.yaml

pod/liveness-exec-pod created

[root@master ~]# kubectl get pods -w

NAME READY STATUS RESTARTS AGE

client 0/1 Error 0 3d

liveness-exec-pod 1/1 Running 3 3m

myapp-6946649ccd-2lncx 1/1 Running 4 3d

nginx-deploy-8c5fc574c-5bjjm 1/1 Running 4 3d

liveness-exec-pod 1/1 Running 4 4m
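The RESTARTS column keeps growing because the probe starts failing once /tmp/healthy is removed after 30 seconds. The sketch below is an idealized timeline of that behavior, not kubelet code: it assumes probes fire exactly every periodSeconds after initialDelaySeconds, and it assumes the default failureThreshold of 3, which the manifest does not set explicitly. The function name `first_restart_time` is hypothetical.

```python
def first_restart_time(healthy_until, initial_delay, period, failure_threshold=3):
    """Return the second at which the consecutive-failure threshold is reached.

    The probe is assumed to succeed while t < healthy_until and fail afterwards.
    """
    failures = 0
    t = initial_delay
    while True:
        if t < healthy_until:
            failures = 0  # a success resets the consecutive-failure counter
        else:
            failures += 1
            if failures == failure_threshold:
                return t
        t += period


# Values taken from liveness-exec.yaml: file removed at 30s, delay 2s, period 3s.
print(first_restart_time(healthy_until=30, initial_delay=2, period=3))
```

Under these assumptions the container is restarted a little under 40 seconds after it starts, and the cycle then repeats, which is consistent with several restarts within a few minutes.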

HTTPGetAction example:

[root@master ~]# vim liveness-httpGet.yaml

apiVersion: v1
kind: Pod
metadata:
  name: liveness-httpget-pod
  namespace: default
spec:
  containers:
  - name: liveness-httpget-container
    image: ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent
    ports:
    - name: http
      containerPort: 80
    livenessProbe:
      httpGet:
        port: http
        path: /index.html
      initialDelaySeconds: 1
      periodSeconds: 3

[root@master ~]# kubectl create -f liveness-httpGet.yaml

pod/liveness-httpget-pod created

[root@master ~]#

readiness:

[root@master ~]# vim readiness-httget.yaml

apiVersion: v1
kind: Pod
metadata:
  name: readiness-httpget-pod
  namespace: default
spec:
  containers:
  - name: readiness-httpget-container
    image: ikubernetes/myapp:v1
    imagePullPolicy: IfNotPresent
    ports:
    - name: http
      containerPort: 80
    readinessProbe:
      httpGet:
        port: http
        path: /index.html
      initialDelaySeconds: 1
      periodSeconds: 3

Container lifecycle, postStart example:

[root@master ~]# vim poststart-pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: poststart-pod
  namespace: default
spec:
  containers:
  - name: busybox-httpd
    image: busybox:latest
    imagePullPolicy: IfNotPresent
    lifecycle:
      postStart:
        exec:
          command: ["/bin/sh","-c","echo Home_Page >> /tmp/index.html"]
    #command: ['/bin/sh','-c','sleep 3600']
    command: ["/bin/httpd"]
    args: ["-f","-h","/tmp"]

[root@master ~]# kubectl create -f poststart-pod.yaml

pod/poststart-pod created

[root@master ~]#

However, using /tmp as the web root is certainly not acceptable in practice.

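6. Pod controllers

There are several types of pod controller:

ReplicaSet: creates the specified number of pod replicas on the user's behalf, keeps the replica count in line with the desired state, and supports scaling the count up and down.

A ReplicaSet consists of three main parts:

(1) the number of pod replicas the user wants

(2) a label selector, which determines which pods it manages

(3) a pod template, used to create new pods when too few exist

It helps users manage stateless pods and accurately reflects the user-defined target count. However, a ReplicaSet is usually not used directly; a Deployment is used instead.

Deployment: works on top of ReplicaSet and manages stateless applications; currently the best controller for that purpose. It supports rolling updates and rollbacks, and provides declarative configuration.

DaemonSet: ensures that each node in the cluster runs exactly one replica of a specific pod; typically used for system-level background tasks, for example ELK services.

Characteristics: the service is stateless, and the service must be a daemon.

Job: exits as soon as its task completes; it does not need to be restarted or recreated.

CronJob: controls periodic tasks that do not need to run continuously in the background.

StatefulSet: manages stateful applications.

ReplicaSet (rs) example: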

[root@master ~]# kubectl get deployments

NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

myapp 1 1 1 1 4d

nginx-deploy 1 1 1 1 4d1h

[root@master ~]# kubectl delete deploy myapp

deployment.extensions "myapp" deleted

[root@master ~]# kubectl delete deploy nginx-deploy

deployment.extensions "nginx-deploy" deleted

[root@master ~]#

[root@master ~]# vim rs-demo.yaml

apiVersion: apps/v1
kind: ReplicaSet
metadata:
  name: myapp
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: myapp
      release: canary
  template:
    metadata:
      name: myapp-pod
      labels:
        app: myapp
        release: canary
        environment: qa
    spec:
      containers:
      - name: myapp-conatainer
        image: ikubernetes/myapp:v1
        ports:
        - name: http
          containerPort: 80

[root@master ~]# kubectl create -f rs-demo.yaml

replicaset.apps/myapp created

Show the labels:

[root@master ~]# kubectl get pods --show-labels

NAME READY STATUS RESTARTS AGE LABELS

client 0/1 Error 0 4d run=client

liveness-httpget-pod 1/1 Running 1 107m <none>

myapp-fspr7 1/1 Running 0 75s app=myapp,environment=qa,release=canary

myapp-ppxrw 1/1 Running 0 75s app=myapp,environment=qa,release=canary

pod-demo 2/2 Running 0 3s app=myapp,tier=frontend

readiness-httpget-pod 1/1 Running 0 86m <none>

[root@master ~]#

Add the label release=canary to pod-demo:

[root@master ~]# kubectl label pods pod-demo release=canary

pod/pod-demo labeled
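After this label is added, pod-demo matches the ReplicaSet's selector (app=myapp, release=canary), so the controller counts three matching pods against a desired count of two and removes one. The following is a minimal sketch of that counting logic, not the real controller: the function name `reconcile` is hypothetical, and which pod gets deleted is chosen arbitrarily here.

```python
def reconcile(pods, selector, replicas):
    """Return (number_to_create, names_to_delete) for one reconciliation pass.

    pods: dict mapping pod name -> labels for the pods that currently exist.
    """
    owned = [
        name
        for name, labels in pods.items()
        if all(labels.get(key) == value for key, value in selector.items())
    ]
    surplus = len(owned) - replicas
    if surplus < 0:
        return -surplus, []        # too few pods: create from the template
    return 0, owned[:surplus]      # too many pods: delete the surplus (arbitrary pick)


selector = {"app": "myapp", "release": "canary"}
pods = {
    "myapp-fspr7": {"app": "myapp", "environment": "qa", "release": "canary"},
    "myapp-ppxrw": {"app": "myapp", "environment": "qa", "release": "canary"},
    "pod-demo": {"app": "myapp", "tier": "frontend", "release": "canary"},
}
to_create, to_delete = reconcile(pods, selector, replicas=2)
print(to_create, len(to_delete))
```

This is why labels on standalone pods need care: a pod that happens to match a controller's selector is treated as one of its replicas.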

Deployment example:

[root@master ~]# kubectl delete rs myapp

replicaset.extensions "myapp" deleted

[root@master ~]#

[root@master ~]# vim deploy-demo.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp-deploy
  namespace: default
spec:
  replicas: 2
  selector:
    matchLabels:
      app: myapp
      release: canary
  template:
    metadata:
      labels:
        app: myapp
        release: canary
    spec:
      containers:
      - name: myapp
        image: ikubernetes/myapp:v1
        ports:
        - name: http
          containerPort: 80

[root@master ~]# kubectl create -f deploy-demo.yaml

deployment.apps/myapp-deploy created

[root@master ~]#

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

client 0/1 Error 0 4d20h

liveness-httpget-pod 1/1 Running 2 22h

myapp-deploy-574965d786-5x42g 1/1 Running 0 70s

myapp-deploy-574965d786-dqzpd 1/1 Running 0 70s

pod-demo 2/2 Running 3 20h

readiness-httpget-pod 1/1 Running 1 21h

[root@master ~]# kubectl get rs

NAME DESIRED CURRENT READY AGE

myapp-deploy-574965d786 2 2 2 93s

[root@master ~]#

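To change the number of replicas, edit the replica count in deploy-demo.yaml and run kubectl apply -f deploy-demo.yaml

Alternatively: kubectl patch deployment myapp-deploy -p '{"spec":{"replicas":5}}', which sets the replica count to 5.

Other properties can be changed the same way, for example: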

[root@master ~]# kubectl patch deployment myapp-deploy -p '{"spec":{"strategy":{"rollingUpdate":{"maxSurge":1,"maxUnavailable":0}}}}'

deployment.extensions/myapp-deploy patched

[root@master ~]#
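The two rollingUpdate fields bound how many pods may exist and how many must stay available during an update. The sketch below works through that arithmetic; the function names are hypothetical. Percentages are not used in this document, and the rounding shown for them (maxSurge rounds up, maxUnavailable rounds down) follows the Kubernetes documentation.

```python
def resolve(value, replicas, round_up):
    """Turn an absolute count or a percentage string such as "25%" into a pod count."""
    if isinstance(value, str) and value.endswith("%"):
        scaled = replicas * int(value[:-1])
        # Integer ceiling or floor of scaled / 100.
        return -(-scaled // 100) if round_up else scaled // 100
    return int(value)


def rollout_bounds(replicas, max_surge, max_unavailable):
    """Return (max_total_pods, min_available_pods) during a rolling update."""
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    return replicas + surge, replicas - unavailable


# The patch above: 2 replicas, maxSurge=1, maxUnavailable=0.
print(rollout_bounds(2, 1, 0))
```

With maxSurge=1 and maxUnavailable=0, the Deployment adds one new pod first and only removes an old pod once the new one is available, so capacity never drops below the desired count.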

Update the image version:

[root@master ~]# kubectl set image deployment myapp-deploy myapp=ikubernetes/myapp:v3 && kubectl rollout pause deployment myapp-deploy

deployment.extensions/myapp-deploy image updated

deployment.extensions/myapp-deploy paused

[root@master ~]#

[root@master ~]# kubectl rollout status deployment myapp-deploy

Waiting for deployment "myapp-deploy" rollout to finish: 1 out of 2 new replicas have been updated...

[root@master ~]# kubectl rollout resume deployment myapp-deploy

deployment.extensions/myapp-deploy resumed

[root@master ~]#

Roll back to an earlier revision:

[root@master ~]# kubectl rollout undo deployment myapp-deploy --to-revision=1

deployment.extensions/myapp-deploy

[root@master ~]#

DaemonSet example:

On node1 and node2, run: docker pull ikubernetes/filebeat:5.6.5-alpine

Edit the YAML file:

[root@master ~]# vim ds-demo.yaml

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: myapp-ds
  namespace: default
spec:
  selector:
    matchLabels:
      app: filebeat
      release: stable
  template:
    metadata:
      labels:
        app: filebeat
        release: stable
    spec:
      containers:
      - name: filebeat
        image: ikubernetes/filebeat:5.6.5-alpine
        env:
        - name: REDIS_HOST
          value: redis.default.svc.cluster.local
        - name: REDIS_LOG_LEVEL
          value: info

[root@master ~]# kubectl apply -f ds-demo.yaml

daemonset.apps/myapp-ds created

[root@master ~]#

Edit the YAML file:

[root@master ~]# vim ds-demo.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: redis
  namespace: default
spec:
  replicas: 1
  selector:
    matchLabels:
      app: redis
      role: logstor
  template:
    metadata:
      labels:
        app: redis
        role: logstor
    spec:
      containers:
      - name: redis
        image: redis:4.0-alpine
        ports:
        - name: redis
          containerPort: 6379
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: filebeat-ds
  namespace: default
spec:
  selector:
    matchLabels:
      app: filebeat
      release: stable
  template:
    metadata:
      labels:
        app: filebeat
        release: stable
    spec:
      containers:
      - name: filebeat
        image: ikubernetes/filebeat:5.6.5-alpine
        env:
        - name: REDIS_HOST
          value: redis.default.svc.cluster.local
        - name: REDIS_LOG_LEVEL
          value: info

[root@master ~]# kubectl delete -f ds-demo.yaml

[root@master ~]# kubectl apply -f ds-demo.yaml

deployment.apps/redis created

daemonset.apps/filebeat-ds created

[root@master ~]#

Expose the redis port:

[root@master ~]# kubectl expose deployment redis --port=6379

service/redis exposed

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 5d20h

myapp NodePort 10.110.238.138 <none> 80:30937/TCP 4d21h

nginx ClusterIP 10.110.52.68 <none> 80/TCP 4d21h

redis ClusterIP 10.97.196.222 <none> 6379/TCP 11s

[root@master ~]#

Open a shell in the redis pod:

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

redis-664bbc646b-sg6wk 1/1 Running 0 2m55s

[root@master ~]# kubectl exec -it redis-664bbc646b-sg6wk -- /bin/sh

/data # netstat -tnl

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 0.0.0.0:6379 0.0.0.0:* LISTEN

tcp 0 0 :::6379 :::* LISTEN

/data # nslookup redis.default.svc.cluster.local

nslookup: can't resolve '(null)': Name does not resolve

Name: redis.default.svc.cluster.local

Address 1: 10.97.196.222 redis.default.svc.cluster.local

/data #

/data # redis-cli -h redis.default.svc.cluster.local

redis.default.svc.cluster.local:6379> keys *

(empty list or set)

redis.default.svc.cluster.local:6379>

Open a shell in a filebeat pod:

[root@master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

client 0/1 Error 0 4d21h

filebeat-ds-bszfz 1/1 Running 0 6m2s

filebeat-ds-w5nzb 1/1 Running 0 6m2s

redis-664bbc646b-sg6wk 1/1 Running 0 6m2s

[root@master ~]# kubectl exec -it filebeat-ds-bszfz -- /bin/sh

/ # printenv

/ # nslookup redis.default.svc.cluster.local

/ # kill -1 1

Update the image:

[root@master ~]# kubectl set image daemonsets filebeat-ds filebeat=ikubernetes/filebeat:5.6.6-alpine

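VII. Service Resources

A Service is one of the most central resource objects in Kubernetes; it can be thought of as one "microservice" in a microservice architecture.

Put simply, a Service is essentially a group of pods forming a cluster. A Service and its pods are tied together by labels: the pods of one Service carry the same labels. kube-proxy load-balances across all the pods behind a Service, and each Service is assigned a cluster-wide unique virtual IP, the cluster IP. The cluster IP stays unchanged for the whole lifetime of the Service, and Kubernetes also runs a DNS service that maps the Service name to its cluster IP.

Proxy modes: userspace, iptables, ipvs

Types: ExternalName, ClusterIP, NodePort, LoadBalancer

DNS resource record: SVC_NAME.NS_NAME.DOMAIN.TLD.

The default suffix is svc.cluster.local., for example: redis.default.svc.cluster.local.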
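The record format above can be written as a small helper. This is only an illustration of the naming scheme; the function name `service_fqdn` is hypothetical, and `cluster.local` is the cluster domain used throughout this document.

```python
def service_fqdn(name, namespace="default", cluster_domain="cluster.local"):
    """Build the fully qualified DNS name of a Service, with the trailing dot."""
    return f"{name}.{namespace}.svc.{cluster_domain}."


print(service_fqdn("redis"))
print(service_fqdn("kube-dns", namespace="kube-system"))
```

Pods in the same namespace can usually reach the Service by its short name as well, because the pod's DNS search path supplies the remaining parts.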

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 5d20h

myapp NodePort 10.110.238.138 <none> 80:30937/TCP 4d22h

nginx ClusterIP 10.110.52.68 <none> 80/TCP 4d22h

redis ClusterIP 10.97.196.222 <none> 6379/TCP 29m

[root@master ~]# kubectl delete svc redis

[root@master ~]# kubectl delete svc nginx

[root@master ~]# kubectl delete svc myapp

[root@master ~]# vim redis-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: redis
  namespace: default
spec:
  selector:
    app: redis
    role: logstor
  clusterIP: 10.97.97.97
  type: ClusterIP
  ports:
  - port: 6379
    targetPort: 6379

[root@master ~]# kubectl apply -f redis-svc.yaml

service/redis created

[root@master ~]#

NodePort:

[root@master ~]# vim myapp-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: myapp
  namespace: default
spec:
  selector:
    app: myapp
    release: canary
  clusterIP: 10.99.99.99
  type: NodePort
  ports:
  - port: 80
    targetPort: 80
    nodePort: 30080

[root@master ~]# kubectl apply -f myapp-svc.yaml

service/myapp created

[root@master ~]#

[root@master ~]# kubectl patch svc myapp -p '{"spec":{"sessionAffinity":"ClientIP"}}'

service/myapp patched

[root@master ~]#

Without a ClusterIP (headless Service):

[root@master ~]# vim myapp-svc-headless.yaml

apiVersion: v1
kind: Service
metadata:
  name: myapp-svc
  namespace: default
spec:
  selector:
    app: myapp
    release: canary
  clusterIP: "None"
  ports:
  - port: 80
    targetPort: 80

[root@master ~]# kubectl apply -f myapp-svc-headless.yaml

service/myapp-svc created

[root@master ~]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP 5d21h

[root@master ~]# dig -t A myapp-svc.default.svc.cluster.local. @10.96.0.10

; <<>> DiG 9.9.4-RedHat-9.9.4-61.el7_5.1 <<>> -t A myapp-svc.default.svc.cluster.local. @10.96.0.10

;; global options: +cmd

;; Got answer:

;; ->>HEADER<<- opcode: QUERY, status: NOERROR, id: 32215

;; flags: qr aa rd ra; QUERY: 1, ANSWER: 5, AUTHORITY: 0, ADDITIONAL: 1

;; OPT PSEUDOSECTION:

; EDNS: version: 0, flags:; udp: 4096

;; QUESTION SECTION:

;myapp-svc.default.svc.cluster.local. IN A

;; ANSWER SECTION:

myapp-svc.default.svc.cluster.local. 5 IN A 10.244.1.59

myapp-svc.default.svc.cluster.local. 5 IN A 10.244.2.51

myapp-svc.default.svc.cluster.local. 5 IN A 10.244.1.60

myapp-svc.default.svc.cluster.local. 5 IN A 10.244.1.58

myapp-svc.default.svc.cluster.local. 5 IN A 10.244.2.52

;; Query time: 2 msec

;; SERVER: 10.96.0.10#53(10.96.0.10)

;; WHEN: Sun Oct 21 19:41:16 CST 2018

;; MSG SIZE rcvd: 319

[root@master ~]#

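VIII. Ingress and Ingress Controller

Ingress can loosely be thought of as an nginx running inside k8s, used as a load balancer.

Ingress consists of two parts: the Ingress Controller and the Ingress service.

ingress-nginx: https://github.com/kubernetes/ingress-nginx and https://kubernetes.github.io/ingress-nginx/deploy/

1. Download the required files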

[root@master ~]# mkdir ingress-nginx

[root@master ~]# cd ingress-nginx

[root@master ingress-nginx]# for file in namespace.yaml configmap.yaml rbac.yaml with-rbac.yaml ; do curl -O https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/${file};done

2. Create the resources

[root@master ingress-nginx]# kubectl apply -f ./

3. Write the YAML files

[root@master ~]# mkdir maniteste/ingress

[root@master ~]# cd maniteste/ingress

[root@master ingress]# vim deploy-demo.yaml

apiVersion: v1
kind: Service
metadata:
  name: myapp
  namespace: default
spec:
  selector:
    app: myapp
    release: canary
  ports:
  - name: http
    port: 80
    targetPort: 80
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: myapp-deploy
  namespace: default
spec:
  replicas: 3
  selector:
    matchLabels:
      app: myapp
      release: canary
  template:
    metadata:
      labels:
        app: myapp
        release: canary
    spec:
      containers:
      - name: myapp
        image: ikubernetes/myapp:v2
        ports:
        - name: http
          containerPort: 80

[root@master ingress]# kubectl delete svc myapp

[root@master ingress]# kubectl delete deployment myapp-deploy

[root@master ingress]# kubectl apply -f deploy-demo.yaml

service/myapp created

deployment.apps/myapp-deploy created

[root@master ingress]#

3. Create the Service

If nodePort is not defined, a random port is mapped.

4. Ingress for the app

[root@master ingress-nginx]# vim ingress-myapp.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: ingress-myapp
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  rules:
  - host: myapp.haha.com
    http:
      paths:
      - path:
        backend:
          serviceName: myapp
          servicePort: 80

[root@master ingress-nginx]# kubectl apply -f ingress-myapp.yaml

[root@master ~]# kubectl get ingresses

NAME HOSTS ADDRESS PORTS AGE

ingress-myapp myapp.haha.com 80 58s

[root@master ~]#
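The rule above is name-based virtual hosting: the ingress controller looks at the Host header of each request and forwards it to the backend Service listed for that host. A minimal sketch of that lookup, as an illustration only (the names `RULES` and `route` are hypothetical, and a real controller also matches on paths):

```python
# Host -> (serviceName, servicePort), mirroring the rule in ingress-myapp.yaml.
RULES = {
    "myapp.haha.com": ("myapp", 80),
}


def route(host_header, rules=RULES):
    """Return the (service, port) backend for a request's Host header, or None."""
    host = host_header.split(":", 1)[0].lower()  # drop an optional :port suffix
    return rules.get(host)


print(route("myapp.haha.com"))
print(route("unknown.example.com"))
```

Requests whose Host header matches no rule fall through to the controller's default backend, which is why the client must use the hostname rather than a bare IP address.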

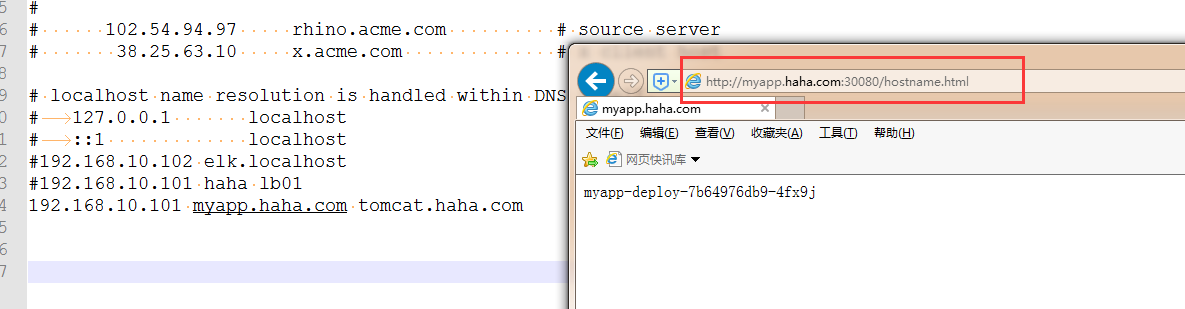

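Edit the hosts file on the physical machine, then open the site in a browser.

The ingress controller's Service can be checked with: kubectl get svc -n ingress-nginx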

5. Deploy Tomcat

[root@master ingress-nginx]# vim tomcat-deploy.yaml

apiVersion: v1
kind: Service
metadata:
  name: tomcat
  namespace: default
spec:
  selector:
    app: tomcat
    release: canary
  ports:
  - name: http
    port: 8080
    targetPort: 8080
  - name: ajp
    port: 8009
    targetPort: 8009
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat-deploy
  namespace: default
spec:
  replicas: 3
  selector:
    matchLabels:
      app: tomcat
      release: canary
  template:
    metadata:
      labels:
        app: tomcat
        release: canary
    spec:
      containers:
      - name: tomcat
        image: tomcat:8.5.34-jre8-alpine
        ports:
        - name: http
          containerPort: 8080
        - name: ajp
          containerPort: 8009

[root@master ingress-nginx]# vim ingress-tomcat.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: ingress-tomcat
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  rules:
  - host: tomcat.haha.com
    http:
      paths:
      - path:
        backend:
          serviceName: tomcat
          servicePort: 8080

[root@master ingress-nginx]# kubectl apply -f tomcat-deploy.yaml

[root@master ingress-nginx]# kubectl apply -f ingress-tomcat.yaml

Check Tomcat:

[root@master ~]# kubectl get pod

NAME READY STATUS RESTARTS AGE

myapp-deploy-7b64976db9-5ww72 1/1 Running 0 66m

myapp-deploy-7b64976db9-fm7jl 1/1 Running 0 66m

myapp-deploy-7b64976db9-s6f95 1/1 Running 0 66m

tomcat-deploy-695dbfd5bd-6kx42 1/1 Running 0 5m54s

tomcat-deploy-695dbfd5bd-f5d7n 0/1 ImagePullBackOff 0 5m54s

tomcat-deploy-695dbfd5bd-v5d9d 1/1 Running 0 5m54s

[root@master ~]# kubectl exec tomcat-deploy-695dbfd5bd-6kx42 -- netstat -tnl

Active Internet connections (only servers)

Proto Recv-Q Send-Q Local Address Foreign Address State

tcp 0 0 127.0.0.1:8005 0.0.0.0:* LISTEN

tcp 0 0 0.0.0.0:8009 0.0.0.0:* LISTEN

tcp 0 0 0.0.0.0:8080 0.0.0.0:* LISTEN

[root@master ~]#

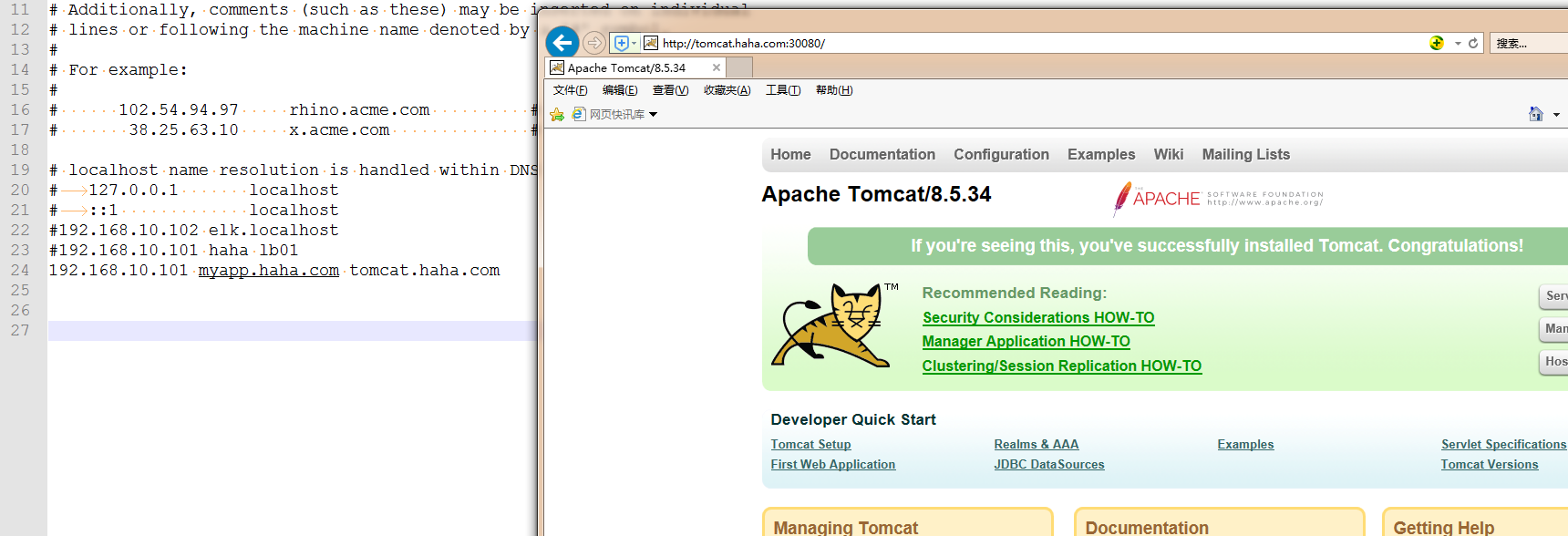

You can pull the image in advance with: docker pull tomcat:8.5.34-jre8-alpine

Create an SSL certificate:

Create the private key:

[root@master ingress]# openssl genrsa -out tls.key 2048

Create a self-signed certificate:

[root@master ingress]# openssl req -new -x509 -key tls.key -out tls.crt -subj /C=CN/ST=Guangdong/L=Guangdong/O=DevOps/CN=tomcat.haha.com

To inject the certificate into a pod, it must first be converted into a Secret:

[root@master ingress]# kubectl create secret tls tomcat-ingress-secret --cert=tls.crt --key=tls.key

secret/tomcat-ingress-secret created

[root@master ingress]# kubectl get secret

NAME TYPE DATA AGE

default-token-kcvkv kubernetes.io/service-account-token 3 8d

tomcat-ingress-secret kubernetes.io/tls 2 29s

[root@master ingress]#

The Secret type is kubernetes.io/tls.
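A Secret of this type holds exactly two entries, tls.crt and tls.key, each stored base64-encoded, which matches the DATA count of 2 shown above. The sketch below builds the equivalent manifest as a plain dictionary to show that structure. It is an illustration, not a replacement for kubectl: the function name `tls_secret` is hypothetical and the PEM contents are placeholders.

```python
import base64


def tls_secret(name, cert_pem: bytes, key_pem: bytes, namespace="default"):
    """Build a Secret manifest of type kubernetes.io/tls as a plain dict."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "type": "kubernetes.io/tls",
        "metadata": {"name": name, "namespace": namespace},
        "data": {
            # Secret data values are base64-encoded strings.
            "tls.crt": base64.b64encode(cert_pem).decode("ascii"),
            "tls.key": base64.b64encode(key_pem).decode("ascii"),
        },
    }


# Placeholder bytes; in practice these would be the contents of tls.crt and tls.key.
secret = tls_secret("tomcat-ingress-secret", b"CERT-PEM", b"KEY-PEM")
print(secret["type"], sorted(secret["data"]))
```

An Ingress then refers to this Secret by name through its tls section.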

Configure the TLS Ingress for Tomcat:

[root@master ingress]# vim ingress-tomcat-tls.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: ingress-tomcat-tls
  namespace: default
  annotations:
    kubernetes.io/ingress.class: "nginx"
spec:
  tls:
  - hosts:
    - tomcat.haha.com
    secretName: tomcat-ingress-secret
  rules:
  - host: tomcat.haha.com
    http:
      paths:
      - path:
        backend:
          serviceName: tomcat
          servicePort: 8080

[root@master ingress]# kubectl apply -f ingress-tomcat-tls.yaml

ingress.extensions/ingress-tomcat-tls created

[root@master ingress]#

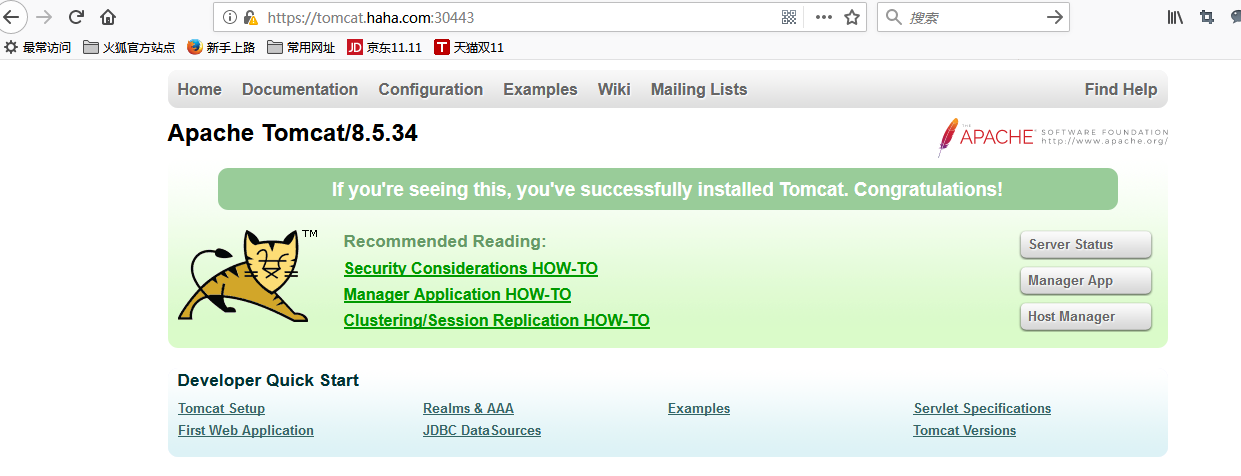

Open in a browser: https://tomcat.haha.com:30443/