Using vk Virtual Nodes to Increase Kubernetes Cluster Capacity and Elasticity

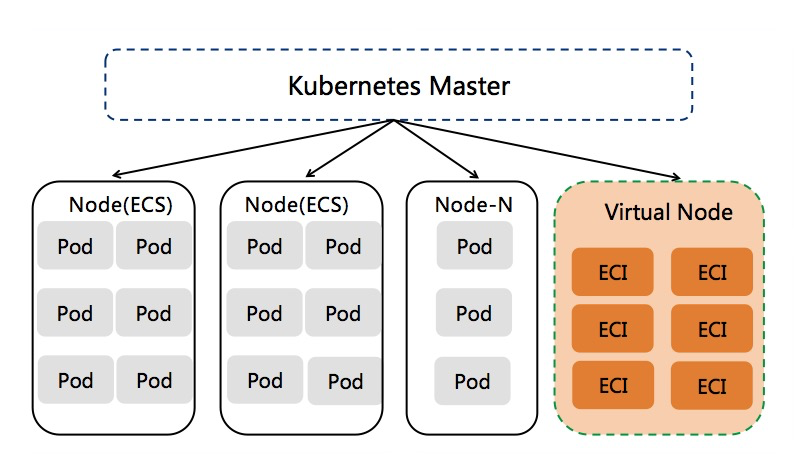

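Adding vk (Virtual Kubelet) virtual nodes to a Kubernetes cluster is now common practice among a large number of customers. Virtual nodes greatly increase a cluster's pod capacity and elasticity: ECI pods are created dynamically and on demand, which removes the burden of cluster capacity planning. vk virtual nodes are currently widely used in the following scenarios.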

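- Peak-and-trough elasticity for online services: industries such as online education and e-commerce have pronounced peaks and troughs in compute demand. Using vk significantly reduces the fixed resource pool that has to be maintained and lowers compute cost.

- Increasing cluster pod capacity: when a cluster using the traditional flannel network mode cannot add more nodes because of limits on VPC route table entries or vswitch network planning, virtual nodes sidestep those limits and raise the cluster's pod capacity simply and quickly.

- Data computing: running Spark, Presto, and similar workloads on vk effectively lowers compute cost.

- CI/CD and other Job-type tasks.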

Creating multiple vk virtual nodes

To deploy virtual nodes, see the ACK product documentation: https://help.aliyun.com/document_detail/118970.html

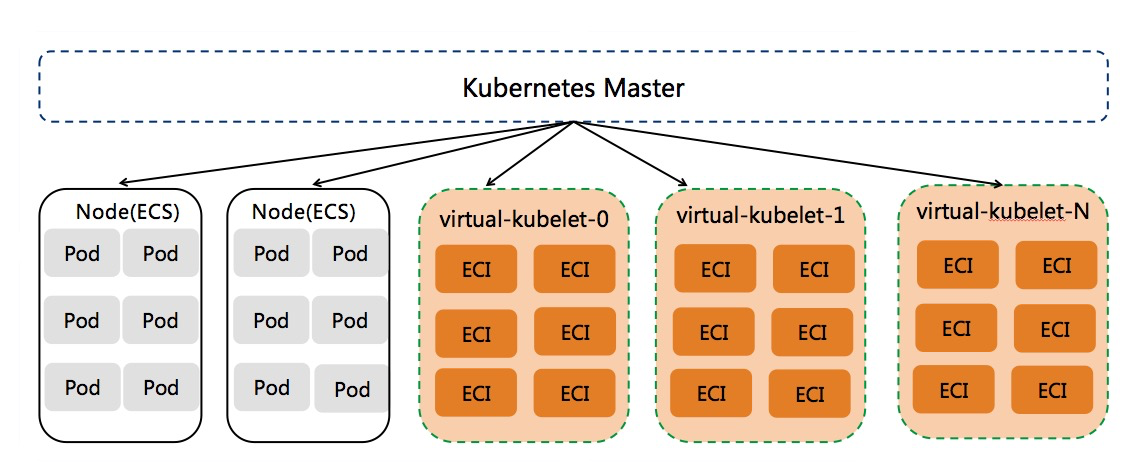

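Generally, if a single Kubernetes cluster runs fewer than 3000 ECI pods, we recommend deploying a single vk node. To have vk carry more pods, we recommend deploying multiple vk nodes in the cluster to scale vk horizontally. Multiple vk nodes relieve the pressure on any single one and support a larger ECI pod capacity. For example, 3 vk nodes can support 9000 ECI pods, and 10 vk nodes can support 30000 ECI pods.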
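
The sizing guideline above is a ceiling division. As a rough sketch, assuming about 3000 ECI pods per vk node as stated in this article, the hypothetical helper `vk_nodes_needed` (a name introduced here for illustration) estimates how many vk nodes a target pod count calls for.

```shell
#!/bin/sh
# Hypothetical helper: estimate the number of vk nodes for a target ECI pod count.
# Assumes roughly 3000 ECI pods per vk node, the guideline quoted in the article.
PODS_PER_VK_NODE=3000

vk_nodes_needed() {
    target_pods=$1
    # Ceiling division; always keep at least one vk node.
    nodes=$(( (target_pods + PODS_PER_VK_NODE - 1) / PODS_PER_VK_NODE ))
    if [ "$nodes" -lt 1 ]; then
        nodes=1
    fi
    echo "$nodes"
}

vk_nodes_needed 2500    # prints 1
vk_nodes_needed 9000    # prints 3
vk_nodes_needed 30000   # prints 10
```

The result is only a starting point for the replica count; the actual per-node limit depends on how the vk controller is configured.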

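To make horizontal scaling simpler, we deploy the vk controller as a StatefulSet, with each vk controller managing one vk node. When more vk virtual nodes are needed, only the StatefulSet's replicas value has to change. Fill in the AK and the VPC, vswitch, and security group settings in the StatefulSet YAML below, then deploy it. The StatefulSet defaults to 1 pod replica.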

    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      labels:
        app: virtual-kubelet
      name: virtual-kubelet
      namespace: kube-system
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: virtual-kubelet
      serviceName: ""
      template:
        metadata:
          labels:
            app: virtual-kubelet
        spec:
          containers:
          - args:
            - --provider
            - alibabacloud
            - --nodename
            - $(VK_INSTANCE)
            env:
            - name: VK_INSTANCE
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: metadata.name
            - name: KUBELET_PORT
              value: "10250"
            - name: VKUBELET_POD_IP
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: status.podIP
            - name: VKUBELET_TAINT_KEY
              value: "virtual-kubelet.io/provider"
            - name: VKUBELET_TAINT_VALUE
              value: "alibabacloud"
            - name: VKUBELET_TAINT_EFFECT
              value: "NoSchedule"
            - name: ECI_REGION
              value: xxx
            - name: ECI_VPC
              value: vpc-xxx
            - name: ECI_VSWITCH
              value: vsw-xxx
            - name: ECI_SECURITY_GROUP
              value: sg-xxx
            - name: ECI_QUOTA_CPU
              value: "1000000"
            - name: ECI_QUOTA_MEMORY
              value: 6400Ti
            - name: ECI_QUOTA_POD
              value: "3000"
            - name: ECI_ACCESS_KEY
              value: xxx
            - name: ECI_SECRET_KEY
              value: xxx
            - name: ALIYUN_CLUSTERID
              value: xxx
            image: registry.cn-hangzhou.aliyuncs.com/acs/virtual-nodes-eci:v1.0.0.2-aliyun
            imagePullPolicy: Always
            name: ack-virtual-kubelet
          dnsPolicy: ClusterFirst
          restartPolicy: Always
          schedulerName: default-scheduler
          serviceAccount: ack-virtual-node-controller
          serviceAccountName: ack-virtual-node-controller
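
Deploying the manifest could look like the sketch below. The file name `virtual-kubelet-statefulset.yaml` and the `deploy_vk` helper are assumptions made for illustration; the article does not name either. Setting `KUBECTL` to `echo kubectl` prints the commands instead of running them.

```shell
#!/bin/sh
# Sketch: apply the StatefulSet manifest and wait for the vk controller pod.
# KUBECTL can be overridden (for example KUBECTL="echo kubectl") to preview the commands.
# The manifest file name is an assumption, not something the article specifies.
KUBECTL=${KUBECTL:-kubectl}
MANIFEST=${MANIFEST:-virtual-kubelet-statefulset.yaml}

deploy_vk() {
    # Create or update the StatefulSet, then block until its pods are rolled out.
    $KUBECTL apply -f "$MANIFEST" &&
    $KUBECTL -n kube-system rollout status statefulset/virtual-kubelet
}
```

Call `deploy_vk` after saving the YAML with the real AK, VPC, vswitch, and security group values filled in.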

Change the StatefulSet's pod replica count to add more vk nodes.

    # kubectl -n kube-system scale statefulset virtual-kubelet --replicas=3
    statefulset.apps/virtual-kubelet scaled

    # kubectl get no
    NAME                      STATUS   ROLES    AGE   VERSION
    cn-hangzhou.192.168.1.1   Ready    <none>   63d   v1.12.6-aliyun.1
    cn-hangzhou.192.168.1.2   Ready    <none>   63d   v1.12.6-aliyun.1
    virtual-kubelet-0         Ready    agent    1m    v1.11.2-aliyun-1.0.207
    virtual-kubelet-1         Ready    agent    1m    v1.11.2-aliyun-1.0.207
    virtual-kubelet-2         Ready    agent    1m    v1.11.2-aliyun-1.0.207

    # kubectl -n kube-system get statefulset virtual-kubelet
    NAME              READY   AGE
    virtual-kubelet   3/3     3m

    # kubectl -n kube-system get pod|grep virtual-kubelet
    virtual-kubelet-0   1/1   Running   0   15m
    virtual-kubelet-1   1/1   Running   0   11m
    virtual-kubelet-2   1/1   Running   0
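
Because the manifest passes the pod name as `--nodename`, each replica registers a node named after its pod. The sketch below builds on that to wait for the new nodes instead of polling `kubectl get no` by hand. The helper names and the 120-second timeout are assumptions chosen for illustration.

```shell
#!/bin/sh
# Sketch: derive the node names a StatefulSet with N replicas registers, then wait for each.
# KUBECTL can be overridden (for example KUBECTL="echo kubectl") to preview the commands.
KUBECTL=${KUBECTL:-kubectl}

# Print virtual-kubelet-0 .. virtual-kubelet-(N-1), one per line.
vk_node_names() {
    replicas=$1
    i=0
    while [ "$i" -lt "$replicas" ]; do
        echo "virtual-kubelet-$i"
        i=$((i + 1))
    done
}

# Block until every expected vk node reports the Ready condition.
# The 120s timeout is an arbitrary choice for this sketch.
wait_for_vk_nodes() {
    for node in $(vk_node_names "$1"); do
        $KUBECTL wait --for=condition=Ready "node/$node" --timeout=120s || return 1
    done
}
```

After scaling to 3 replicas, `wait_for_vk_nodes 3` returns once all three nodes are Ready, or fails if any of them misses the timeout.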

When we create multiple nginx pods in the vk namespace, we can see that the pods are scheduled across multiple vk nodes.

    # kubectl create ns vk
    # kubectl label namespace vk virtual-node-affinity-injection=enabled
    # kubectl -n vk run nginx --image nginx:alpine --replicas=10
    deployment.extensions/nginx scaled

    # kubectl -n vk get pod -o wide
    NAME                     READY   STATUS    RESTARTS   AGE   IP                NODE                NOMINATED NODE   READINESS GATES
    nginx-544b559c9b-4vgzx   1/1     Running   0          1m    192.168.165.198   virtual-kubelet-2   <none>           <none>
    nginx-544b559c9b-544tm   1/1     Running   0          1m    192.168.125.10    virtual-kubelet-0   <none>           <none>
    nginx-544b559c9b-9q7v5   1/1     Running   0          1m    192.168.165.200   virtual-kubelet-1   <none>           <none>
    nginx-544b559c9b-llqmq   1/1     Running   0          1m    192.168.165.199   virtual-kubelet-2   <none>           <none>
    nginx-544b559c9b-p6c5g   1/1     Running   0          1m    192.168.165.197   virtual-kubelet-0   <none>           <none>
    nginx-544b559c9b-q8mpt   1/1     Running   0          1m    192.168.165.196   virtual-kubelet-0   <none>           <none>
    nginx-544b559c9b-rf5sq   1/1     Running   0          1m    192.168.125.8     virtual-kubelet-0   <none>           <none>
    nginx-544b559c9b-s64kc   1/1     Running   0          1m    192.168.125.11    virtual-kubelet-2   <none>           <none>
    nginx-544b559c9b-vfv56   1/1     Running   0          1m    192.168.165.201   virtual-kubelet-1   <none>           <none>
    nginx-544b559c9b-wfb2z   1/1     Running   0          1m    192.168.125.9     virtual-kubelet-1   <none>           <none>
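
With more pods, reading the distribution off the listing gets tedious. A small sketch that counts pods per node from the same output is shown below. It assumes NODE is the 7th column, as in the listing above; other kubectl versions may lay the columns out differently. The function name `pods_per_node` is introduced here for illustration.

```shell
#!/bin/sh
# Sketch: count pods per node from `kubectl get pod -o wide` output read on stdin.
# Assumes the first line is the header and NODE is the 7th column.
pods_per_node() {
    awk 'NR > 1 { count[$7]++ } END { for (n in count) print n, count[n] }' | sort
}

# Usage against a live cluster:
#   kubectl -n vk get pod -o wide | pods_per_node
```

For the ten nginx pods listed above, this reports 4 pods on virtual-kubelet-0 and 3 each on virtual-kubelet-1 and virtual-kubelet-2.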

Reducing the number of vk virtual nodes

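ECI pods on vk are created on demand, and a vk virtual node consumes no actual resources when it hosts no ECI pods, so there is normally no need to reduce the number of vk nodes. If you do want to reduce it, we recommend the following steps.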

Suppose the cluster currently has 5 vk nodes, virtual-kubelet-0 through virtual-kubelet-4, and we want to shrink to 1 vk node. We then need to delete 4 nodes: virtual-kubelet-1 through virtual-kubelet-4.
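
Which nodes have to go follows from how a StatefulSet scales down: it removes the pods with the highest ordinals first, and here each node is named after its pod. A minimal sketch of that calculation, using a hypothetical helper `vk_nodes_to_remove`:

```shell
#!/bin/sh
# Sketch: list the vk nodes that disappear when the StatefulSet shrinks.
# Scaling from CURRENT to TARGET replicas removes ordinals TARGET .. CURRENT-1.
# Usage: vk_nodes_to_remove CURRENT TARGET
vk_nodes_to_remove() {
    current=$1
    i=$2
    nodes=""
    while [ "$i" -lt "$current" ]; do
        # Separate names with a single space, without a trailing one.
        nodes="$nodes${nodes:+ }virtual-kubelet-$i"
        i=$((i + 1))
    done
    echo "$nodes"
}

vk_nodes_to_remove 5 1
# prints: virtual-kubelet-1 virtual-kubelet-2 virtual-kubelet-3 virtual-kubelet-4
```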

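- First take the vk nodes offline gracefully: evict their pods to other nodes and prevent further pods from being scheduled onto the vk nodes that are about to be deleted.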

      # kubectl drain virtual-kubelet-1 virtual-kubelet-2 virtual-kubelet-3 virtual-kubelet-4

      # kubectl get no
      NAME                      STATUS                     ROLES    AGE    VERSION
      cn-hangzhou.192.168.1.1   Ready                      <none>   66d    v1.12.6-aliyun.1
      cn-hangzhou.192.168.1.2   Ready                      <none>   66d    v1.12.6-aliyun.1
      virtual-kubelet-0         Ready                      agent    3d6h   v1.11.2-aliyun-1.0.207
      virtual-kubelet-1         Ready,SchedulingDisabled   agent    3d6h   v1.11.2-aliyun-1.0.207
      virtual-kubelet-2         Ready,SchedulingDisabled   agent    3d6h   v1.11.2-aliyun-1.0.207
      virtual-kubelet-3         Ready,SchedulingDisabled   agent    66m    v1.11.2-aliyun-1.0.207
      virtual-kubelet-4         Ready,SchedulingDisabled   agent    66m    v1.11.2-aliyun-1.0.207

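The vk nodes must be taken offline gracefully first because the ECI pods on a vk node are managed by its vk controller. If the vk controller is deleted while ECI pods still exist on its vk node, those ECI pods are left behind as orphans and the vk controller can no longer manage them.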

- After the vk nodes are offline, change the replica count of the virtual-kubelet StatefulSet to the number of vk nodes we want.

      # kubectl -n kube-system scale statefulset virtual-kubelet --replicas=1
      statefulset.apps/virtual-kubelet scaled

      # kubectl -n kube-system get pod|grep virtual-kubelet
      virtual-kubelet-0   1/1   Running   0   3d6h

After a while, those vk nodes change to the NotReady state.

    # kubectl get no
    NAME                      STATUS                        ROLES    AGE    VERSION
    cn-hangzhou.192.168.1.1   Ready                         <none>   66d    v1.12.6-aliyun.1
    cn-hangzhou.192.168.1.2   Ready                         <none>   66d    v1.12.6-aliyun.1
    virtual-kubelet-0         Ready                         agent    3d6h   v1.11.2-aliyun-1.0.207
    virtual-kubelet-1         NotReady,SchedulingDisabled   agent    3d6h   v1.11.2-aliyun-1.0.207
    virtual-kubelet-2         NotReady,SchedulingDisabled   agent    3d6h   v1.11.2-aliyun-1.0.207
    virtual-kubelet-3         NotReady,SchedulingDisabled   agent    70m    v1.11.2-aliyun-1.0.207
    virtual-kubelet-4         NotReady,SchedulingDisabled   agent    70m    v1.11.2-aliyun-1.0.207

- Finally, delete the virtual nodes that are in the NotReady state.

      # kubectl delete no virtual-kubelet-1 virtual-kubelet-2 virtual-kubelet-3 virtual-kubelet-4
      node "virtual-kubelet-1" deleted
      node "virtual-kubelet-2" deleted
      node "virtual-kubelet-3" deleted
      node "virtual-kubelet-4" deleted

      # kubectl get no
      NAME                      STATUS   ROLES    AGE    VERSION
      cn-hangzhou.192.168.1.1   Ready    <none>   66d    v1.12.6-aliyun.1
      cn-hangzhou.192.168.1.2   Ready    <none>   66d    v1.12.6-aliyun.1
      virtual-kubelet-0         Ready    agent    3d6h   v1.11.2-aliyun-1.0.207
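
The scale-down procedure can be wrapped in a few small functions, as in the sketch below. The function names are hypothetical, and the optional `pods_on_node` check is an addition for illustration rather than part of the procedure described in this article. Setting `KUBECTL` to `echo kubectl` prints the commands instead of running them.

```shell
#!/bin/sh
# Sketch of the scale-down procedure for a list of vk nodes.
# KUBECTL can be overridden (for example KUBECTL="echo kubectl") to preview the commands.
KUBECTL=${KUBECTL:-kubectl}

# Drain the nodes to be removed, then shrink the StatefulSet.
# Usage: drain_and_scale_vk TARGET_REPLICAS NODE...
drain_and_scale_vk() {
    target=$1
    shift
    $KUBECTL drain "$@" || return 1
    $KUBECTL -n kube-system scale statefulset virtual-kubelet --replicas="$target"
}

# Optional check before scaling: list pods still bound to a node.
pods_on_node() {
    $KUBECTL get pods --all-namespaces --field-selector "spec.nodeName=$1"
}

# Run only after the drained nodes show NotReady.
delete_vk_nodes() {
    $KUBECTL delete node "$@"
}
```

Keeping the node deletion in a separate function mirrors the manual flow: the nodes are deleted only once `kubectl get no` shows them as NotReady.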